Ignoring the Big Thing: Google and Its PR Hunger

December 18, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read “FunSearch: Making New Discoveries in Mathematical Sciences Using Large Language Models.” The main idea is that Google’s smart software is — once again — going where no mortal man has gone before. The write up states:

Today, in a paper published in Nature, we introduce FunSearch, a method to search for new solutions in mathematics and computer science. FunSearch works by pairing a pre-trained LLM, whose goal is to provide creative solutions in the form of computer code, with an automated “evaluator”, which guards against hallucinations and incorrect ideas. By iterating back-and-forth between these two components, initial solutions “evolve” into new knowledge. The system searches for “functions” written in computer code; hence the name FunSearch.

I like the idea of getting the write up in Nature, a respected journal. I like even better the idea of Google-splaining how a large language model can do mathy things. I absolutely love the idea of “new.”

“What’s with the pointed stick? I needed a wheel,” says the disappointed user of an advanced technology in days of yore. Thanks, MSFT Copilot. Good enough, which is a standard of excellence in smart software in my opinion.

Here’s a wonderful observation summing up Google’s latest development in smart software:

FunSearch is like one of those rocket cars that people make once in a while to break land speed records. Extremely expensive, extremely impractical and terminally over-specialized to do one thing, and do that thing only. And, ultimately, a bit of a show. YeGoblynQueenne via YCombinator.

My question is, “Is Google dusting a code brute force method with marketing sprinkles?” I assume that the approach can be enhanced with more tuning of the evaluator. I am not silly enough to ask if Google will explain the settings, threshold knobs, and probability levers operating behind the scenes.

Google’s prose makes the achievement clear:

This work represents the first time a new discovery has been made for challenging open problems in science or mathematics using LLMs. FunSearch discovered new solutions for the cap set problem, a longstanding open problem in mathematics. In addition, to demonstrate the practical usefulness of FunSearch, we used it to discover more effective algorithms for the “bin-packing” problem, which has ubiquitous applications such as making data centers more efficient.

The search for more effective algorithms is a never-ending quest. Who bothers to learn how to get a printer to spit out “Hello, World”? Today I am pleased if my printer outputs a Gmail message. And bin-packing is now solved. Good.

As I read the blog post, I found the focus on large language models interesting. But that evaluator strikes me as something of considerable interest. When smart software discovers something new, who or what allows the evaluator to “know” that something “new” is emerging? That evaluator must somehow prevent hallucination (a fancy term for making stuff up) without blocking the innovation process. I won’t raise any Philosophy 101 questions, but I will say, “Google has the keys to the universe” with sprinkles too.

There’s a picture too. But where’s the evaluator? Simplification is one thing, but skipping over the system and method that prevents smart software hallucinations (falsehoods, mistakes, and craziness) is quite another.
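The blog post does not show its evaluator, and nothing here claims to be Google’s. As a rough illustration of the generate-and-evaluate loop in the quoted passage, here is a minimal Python sketch with loud assumptions. The “LLM” is replaced by a hypothetical random tweak to a one-number heuristic, whereas FunSearch proposes program text. The evaluator runs each candidate bin-packing heuristic on test instances and counts bins. Every function name (`pack`, `evaluate`, `make_priority`, `propose`, `search`) is made up for illustration.

```python
import random
from typing import Callable, List, Optional, Tuple

# A candidate "program": given an item size and a bin's remaining capacity,
# return a priority score. The highest-scoring feasible bin gets the item.
Priority = Callable[[int, int], float]


def pack(items: List[int], capacity: int, priority: Priority) -> List[int]:
    """Online bin packing: place each item in the feasible open bin with the
    highest priority; open a new bin if none fits. Returns remaining capacities."""
    remaining: List[int] = []
    for item in items:
        feasible = [i for i, r in enumerate(remaining) if r >= item]
        if feasible:
            best = max(feasible, key=lambda i: priority(item, remaining[i]))
            remaining[best] -= item
        else:
            remaining.append(capacity - item)
    return remaining


def evaluate(priority: Priority, instances: List[List[int]], capacity: int) -> Optional[float]:
    """The 'evaluator': run the candidate on test instances and score it.
    Fewer bins is better, so the score is the negated average bin count.
    A candidate that crashes is rejected (None) rather than trusted."""
    total = 0
    for items in instances:
        try:
            total += len(pack(items, capacity, priority))
        except Exception:
            return None
    return -total / len(instances)


def make_priority(w: float) -> Priority:
    # Hypothetical one-parameter family of heuristics: w > 0 favours the
    # tightest fit (best fit); w < 0 favours the roomiest bin (worst fit).
    return lambda item, rem: -w * (rem - item)


def propose(parent_w: float, rng: random.Random) -> float:
    # Stand-in for the LLM: randomly perturb the best candidate found so far.
    return parent_w + rng.gauss(0.0, 1.0)


def search(instances: List[List[int]], capacity: int,
           steps: int = 200, seed: int = 0) -> Tuple[float, float]:
    """Generate-and-evaluate loop: keep a proposal only if it scores better."""
    rng = random.Random(seed)
    best_w = -1.0  # start from the worst-fit heuristic
    best_score = evaluate(make_priority(best_w), instances, capacity)
    for _ in range(steps):
        w = propose(best_w, rng)
        score = evaluate(make_priority(w), instances, capacity)
        if score is not None and score > best_score:
            best_w, best_score = w, score
    return best_w, best_score


w, score = search([[5, 6, 4, 5]], capacity=10)
```

On the toy instance `[5, 6, 4, 5]` with capacity 10, worst fit uses three bins and best fit uses two. In a sketch like this, the evaluator is ordinary, deterministic scoring code; it does not “know” that anything is new.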

Google is not a company to shy away from innovation from its human wizards. If one thinks about the thrust of the blog post, will these Googlers be needed? Google’s innovativeness has drifted toward me-too behavior and being clever with advertising.

The blog post concludes:

FunSearch demonstrates that if we safeguard against LLMs’ hallucinations, the power of these models can be harnessed not only to produce new mathematical discoveries, but also to reveal potentially impactful solutions to important real-world problems.

I agree. But the “how” hangs over the marketing. And when a company has quantum supremacy, the grimness of the recent court loss, and assorted legal hassles, what is this magical evaluator?

I find Google’s deal to use facial recognition to assist the UK in enforcing what appears to be “stop porn” regulations more in line with what Google’s smart software can do. The “new” math? Eh, maybe. But analyzing every person trying to access a porn site, backed by the technical infrastructure to perform cross correlation? Now that’s something that will be of interest to governments and commercial customers.

The bin thing and a shortcut for a Python script? Interesting, but it lacks the practical “big bucks now” potential of the facial recognition play. That, as far as I know, was not written up and ponied around to prestigious journals. To me, that was the news, not the FUN in FunSearch as a cute reminder of “function” search.

Stephen E Arnold, December 18, 2023

Weaponizing AI Information for Rubes with Googley Fakes

December 8, 2023

This essay is the work of a dumb dinobaby. No smart software required.

From the “Hey, rube” department: “Google Admits That a Gemini AI Demo Video Was Staged” reports as actual factual:

There was no voice interaction, nor was the demo happening in real time.

Young Star Wars fans learn the truth behind the scenes that thrill them. Thanks, MSFT Copilot. One try and some work with the speech bubble and I was good to go.

And to what magical event does this mysterious statement refer? The Google Gemini announcement. Yep, 16 Hollywood-style videos of “reality.” Engadget asserts:

Google is counting on its very own GPT-4 competitor, Gemini, so much that it staged parts of a recent demo video. In an opinion piece, Bloomberg says Google admits that for its video titled “Hands-on with Gemini: Interacting with multimodal AI,” not only was it edited to speed up the outputs (which was declared in the video description), but the implied voice interaction between the human user and the AI was actually non-existent.

The article makes what I think is a rather gentle statement:

This is far less impressive than the video wants to mislead us into thinking, and worse yet, the lack of disclaimer about the actual input method makes Gemini’s readiness rather questionable.

Hopefully sometime in the near future Googlers can make reality from Hollywood-type fantasies. After all, policeware vendors have been trying to deliver a Minority Report-type of investigative experience for a heck of a lot longer.

What’s the most interesting part of the Google AI achievement? I think it illuminates the thinking of those who live in an ethical galaxy far, far away… if true, of course. Of course. I wonder if the same “fake it till you make it” approach applies to other Google activities?

Stephen E Arnold, December 8, 2023

Google Smart Software Titbits: Post Gemini Edition

December 8, 2023

This essay is the work of a dumb dinobaby. No smart software required.

In the Apple-inspired roll out of Google Gemini, the excitement is palpable. Is your heart palpitating? Ah, no. Neither is mine. Nevertheless, in the aftershock of a blockbuster “me too,” the knowledge shrapnel has peppered my dinobaby lair; to wit: Gemini, according to Wired, is a “new breed” of AI. The source? Google’s Demis Hassabis.

What happens when the marketing does not align with the user experience? Tell the hardware wizards to shift into high gear, of course. Then tell the marketing professionals to evolve the story. Thanks, MSFT Copilot. You know I think you enjoyed generating this image.

Navigate to “Google Confirms That Its Cofounder Sergey Brin Played a Key Role in Creating Its ChatGPT Rival.” That’s a clickable headline. The write up asserts: “Google hinted that its cofounder Sergey Brin played a key role in the tech giant’s AI push.”

Interesting. One person involved in both Google and OpenAI. And Google responding to OpenAI after one year? Management brilliance or another high school science club method? The right information at the right time is nine-tenths of any battle. Was Google not processing information? Was the information it received about OpenAI incorrect or weaponized? Now Gemini is a “new breed” of AI. The Verge reports that McDonald’s burger joints will use Google AI to “make sure your fries are fresh.”

Google has been busy in non-AI areas; for instance:

- The Register asserts that a US senator claims Google and Apple reveal push notification data to non-US nation states

- Google has ramped up its donations to universities, according to TechMeme

- Lost files you thought were in Google Drive? Never fear. Google has a software tool you can use to fix your problem. Well, that’s what Engadget says.

So an AI problem? What problem?

Stephen E Arnold, December 8, 2023

Gemini Twins: Which Is Good? Which Is Evil? Think Hard

December 6, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I received a link to a Google DeepMind marketing demonstration Web page called “Welcome to Gemini.” To me, Gemini means Castor and Pollux. Somewhere along the line, someone (maybe a wonky professor named Chapman) told my class that these two represented Zeus and Hades. Stated another way, one was a sort of “good” deity with a penchant for non-godlike behavior. The other was downright awful most of the time. I assume that Google knows about Gemini, its mythological baggage, and the duality of one twin being a Superman type doing the truth, justice, and American way thing and the other inspiring a range of bad actors. Imagine. Something that is good and bad. That’s smart software, I assume. The good part sells ads; the bad part fails at marketing perhaps?

Two smart Googlers in New York City learn the difference between book learning for a PhD and street learning for a degree from the Institute of Hard Knocks. Thanks, MSFT Copilot. (Are you monitoring Google’s effort to dominate smart software by announcing breakthroughs very few people understand? Are you finding Google’s losses at the AI shell game entertaining?)

Google’s blog post states with rhetorical aplomb:

Gemini is built from the ground up for multimodality — reasoning seamlessly across text, images, video, audio, and code.

Well, any other AI using Google’s previous technology is officially behind the curve. That’s clear to me. I wonder if Sam AI-Man, Microsoft, and the users of ChatGPT are tuned to the Google wavelength? There’s a video or, more accurately, more than a dozen of them, but I don’t like video so I skipped them all. There are graphs with minimal data and some that appear to jiggle in “real” time. I skipped those too. There are tables. I did read some of the data and learned that Gemini can do basic arithmetic and “challenging” math like geometry. That is the 3, 4, 5 triangle stuff. I wonder how many people under the age of 18 know how to use a tape measure to determine if a corner is 90 degrees? (If you don’t, why not ask ChatGPT or MSFT Copilot?) I processed the twins’ sizes; there are three. Do twins come in triples? Sigh. Anyway, one can use Gemini Ultra, Gemini Pro, and Gemini Nano. Okay, but I am hung up on the twins and the three sizes. Sorry. I am a dinobaby. There are more movies. I exited the site and navigated to YCombinator’s Hacker News. Didn’t Sam AI-Man have a brush with that outfit?

You can find the comments about Gemini at this link. I want to highlight several quotations I found suggestive. Then I want to offer a few observations based on my conversation with my research team.

Here are some representative statements from YCombinator’s forum:

- Jansan said: Yes, it [Google] is very successful in replacing useful results with links to shopping sites.

- FrustratedMonkey said: Well, deepmind was doing amazing stuff before OpenAI. AlphaGo, AlphaFold, AlphaStar. They were groundbreaking a long time ago. They just happened to miss the LLM surge.

- Wddkcs said: Googles best work is in the past, their current offerings are underwhelming, even if foundational to the progress of others.

- Foobar said: The whole things reeks of being desperate. Half the video is jerking themselves off that they’ve done AI longer than anyone and they “release” (not actually available in most countries) a model that is only marginally better than the current GPT4 in cherry-picked metrics after nearly a year of lead-time?

- Arson9416 said: Google is playing catchup while pretending that they’ve been at the forefront of this latest AI wave. This translates to a lot of talk and not a lot of action. OpenAI knew that just putting ChatGPT in peoples hands would ignite the internet more than a couple of over-produced marketing videos. Google needs to take a page from OpenAI’s playbook.

Please, work through the more than 600 comments about Gemini and reach your own conclusions. Here are mine:

- The Google is trying to market using rhetorical tricks and big-brain hot buttons. The effort comes across to me as similar to Ford’s marketing of the Edsel.

- Sam AI-Man remains the man in AI. Coups, tension, and chaos — irrelevant. The future for many means ChatGPT.

- The comment about timing is a killer. Google missed the train. The company wants to catch up, but it is not shipping products nor being associated with features grade school kids and harried marketers with degrees in art history can use now.

Sundar Pichai is not Sam AI-Man. The difference has become clear in the last year. If Sundar and Sam are twins, which represents what?

Stephen E Arnold, December 6, 2023

Forget Deep Fakes. Watch for Shallow Fakes

December 6, 2023

This essay is the work of a dumb dinobaby. No smart software required.

“A Tech Conference Listed Fake Speakers for Years: I Accidentally Noticed” revealed a factoid about which I knew absolutely zero. The write up reveals:

For 3 years straight, the DevTernity conference listed non-existent software engineers representing Coinbase and Meta as featured speakers. When were they added and what could have the motivation been?

The article identifies and includes what appear to be “real” pictures of a couple of these made-up speakers. What’s interesting is that only females seem to be made up. Is that perhaps because conference organizers like to take the easiest path, choosing people who are “in the news” or “friends”? In the technology world, I see more entities which appear to be male than appear to be non-males.

Shallow fakes. Deep fakes. What’s the problem? Thanks, MSFT Copilot. Nice art which you achieved exactly how? Oh, don’t answer that question. I don’t want to know.

But since I don’t attend many conferences, I am not in touch with demographics. Furthermore, I am not up to speed on fake people. To be honest, I am not too interested in people, real or fake. After a half century of work, I like my French bulldog.

The write up points out:

We’ve not seen anything of this kind of deceit in tech – a conference inventing speakers, including fake images – and the mainstream media covered this first-of-a-kind unethical approach to organizing a conference,

That’s good news.

I want to offer a handful of thoughts about creating “fake” people for conferences and other business efforts:

- Why not? The practice went unnoticed for years.

- Creating digital “fakes” is getting easier and the tools are becoming more effective at duplicating “reality” (whatever that is). It strikes me that people looking for a short cut for a diverse Board of Directors, speaker line up, or a LinkedIn reference might find the shortest, easiest path to shape reality for a purpose.

- The method used to create a fake speaker is more correctly termed a “shallow” fake. Why? As the author of the cited article points out, disproving the reality of the fakes was easy and took little time.

Let me shift gears. Why would conference organizers find fake speakers appealing? Here are some hypotheses:

- Conferences fall into a “speaker rut”; that is, organizers become familiar with certain speakers and consciously or unconsciously slot them into the next program because they are good speakers (one hopes), friendly, or don’t make unwanted suggestions to the organizers

- Conference staff are overworked and understaffed. Applying some smart workflow magic to organizing and filling in the blank spaces on the program makes the use of fakery appealing, at least at one conference. Will others learn from this method?

- Conferences have become more dependent on exhibitors. Over the years, renting booth space has become a way for a company to be featured on the program. Yep, advertising, just advertising linked to “sponsors” of social gatherings or Platinum and Gold sponsors who get to put marketing collateral in a cheap nylon bag foisted on every registrant.

I applaud this write up. Not only will it give people ideas about how to use “fakes.” It will also inspire innovation in surprising ways. Why not “fake” consultants on a Zoom call? There’s an idea for you.

Stephen E Arnold, December 6, 2023

Google Maps: Trust in Us. Well, Mostly

December 1, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Friday, December 1, 2023. I want to commemorate the beginning of the last month of what has been an exciting 2023. How exciting? How about a Google Maps story?

Navigate to “Google Maps Mistake Leaves Dozens of Families Stranded in the Desert”. Here’s the story: The outstanding and, from my point of view, almost unusable Google Maps directed a number of people to a “dreadful dirt path during a dust storm.”

“Mommy,” says the teenage son, “I told you exactly what the smart map system said to do. Why are we parked in a tree?” Thanks, MSFT Copilot. Good enough art.

Hey, wait up. I thought Google had developed a super duper quantum smart weather prediction system. Is Google unable to cross correlate Google Maps with potential negative weather events?

The answer, “Who are you kidding?” Google appears to be in content marketing hyperbole “we are better at high tech” mode. Let’s not forget the Google breakthrough regarding material science. Imagine. Google’s smart software identified oodles of new materials. Was this “new” news? Nope. Computational chemists have been generating potentially useful chemical substances for — what is it now? — decades. Is the Google materials science breakthrough going to solve the problem of burned food sticking to a cookie sheet? Sure, I am waiting for the news release.

What’s up with the Google Maps?

The write up says:

Google Maps apologized for the rerouting disaster and said that it had removed that route from its platform.

Hey, that’s helpful. I assume it was a quantum answer from a “we’re smart” outfit.

I wish I had kept the folder which had my collection of Google Map news items. I do recall someone who drove off a cliff. I had my own notes about my trying to find Seymour Rubinstein’s house on a bright sunny day. The inventor of WordStar did not live in the Bay. That was the location of Mr. Rubinstein’s house, according to Google Maps. I did find the house, and I had sufficient common sense not to drive into the water. I had other examples of great mappiness, but, alas!, no longer.

Is directing a harried mother into a desert during a dust storm humorous? Maybe to some in Sillycon Valley. I am not amused. I don’t think the mother was amused because in addition to the disturbing situation, her vehicle suffered $5,000 in damage.

The question is, “Why?”

Perhaps Google’s incentive system is not aligned to move consumer products like Google Maps from “good enough” to “excellent.” And the money that could have been spent on improving Google Maps may be needed to output stories about Google’s smart software inventing new materials.

Interesting. Aren’t OpenAI and the much-loved Microsoft leading the smart software mindshare race? I think so. Perhaps Maps’ missteps are a signal of management misalignment and deep issues within the Alphabet Google YouTube inferiority complex?

Stephen E Arnold, December 1, 2023

Maybe the OpenAI Chaos Ended Up as Grand Slam Marketing?

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Yep, Q Star. The next Big Thing. “About That OpenAI Breakthrough” explains:

OpenAI could in fact have a breakthrough that fundamentally changes the world. But “breakthroughs” rarely turn to be general to live up to initial rosy expectations. Often advances work in some contexts, not otherwise.

I agree, but I have a slightly different view of the matter. OpenAI’s chaotic management skills ended up as accidental great marketing. During the dust up and dust settlement, where were the other Big Dogs of the techno-feudal world? If you said, “Who?”, you are on the same page as me. OpenAI burned itself into the minds of those who sort of care about AI and the end of the world Terminator style.

In companies and organizations with “do gooder” tendencies, the marketing messages can be interpreted by some as a scientific fact. Nope. Thanks, MSFT Copilot. Are you infringing and expecting me to take the fall?

First, shotgun marriages can work out here in rural Kentucky. But more often than not, these unions become the seeds of Hatfield and McCoy-type Thanksgivings. “Grandpa, don’t shoot the turkey with birdshot. Granny broke a tooth last year.” Translating from Kentucky argot: Ideological divides produce craziness. The OpenAI mini-series is in its first season and there is more to come from the wacky innovators.

Second, any publicity is good publicity in Sillycon Valley. Who has given a thought to Google’s smart software? How did Microsoft’s stock perform during the five day mini-series? What is the new Board of Directors going to do to manage the bucking broncos of breakthroughs? Talk about dominating the “conversation.” Hats off to the fun crowd at OpenAI. Hey, Google, are you there?

Third, how is that regulation of smart software coming along? I think one unit of the US government is making noises about the biggest large language model ever. The EU folks continue to discuss, a skill essential to representing the interests of the group. Countries like China are chugging along, happily downloading code from open source repositories. So exactly what’s changed?

Net net: The OpenAI has been a click champ. Good, bad, or indifferent, other AI outfits have some marketing to do in the wake of the blockbuster “Sam AI-Man: The Next Bigger Thing.” One way or another, Sam AI-Man dominates headlines, right Zuck, right Sundar?

Stephen E Arnold, November 28, 2023

Predicting the Weather: Another Stuffed Turkey from Google DeepMind?

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

By accident or design, the adolescents at OpenAI have dominated headlines for the pre-turkey, the turkey, and the post-turkey celebrations. In the midst of this surge in poohbah outputs, Xhitter xheets, and podcast posts, non-OpenAI news has been struggling for a toehold.

An important AI announcement from Google DeepMind stuns a small crowd. Were the attendees interested in predicting the weather or getting a free umbrella? Thanks, MSFT Copilot. Another good enough art work whose alleged copyright violations you want me to determine. How exactly am I to accomplish that? Use Google Bard?

What is another AI company to do?

A partial answer appears in “DeepMind AI Can Beat the Best Weather Forecasts. But There Is a Catch”. This is an article in the esteemed and rarely spoofed Nature Magazine. None of that Techmeme dominating blue link stuff. None of the influential technology reporters asserting, “I called it. I called it.” None of the eye wateringly dorky observations that OpenAI’s organizational structure was a problem. None of the “Satya Nadella learned about the ouster at the same time we did.” Nope. Nope. Nope.

What Nature provided is good, old-fashioned content marketing. The write up points out that DeepMind says that it has once again leapfrogged mere AI mortals. Like the quantum supremacy assertion, the Google can predict the weather. (My great grandmother made the same statement about The Farmer’s Almanac. She believed it. May she rest in peace.)

The estimable magazine reported, in the midst of the OpenAI news-making turkeyfest:

To make a forecast, it uses real meteorological readings, taken from more than a million points around the planet at two given moments in time six hours apart, and predicts the weather six hours ahead. Those predictions can then be used as the inputs for another round, forecasting a further six hours into the future…. They [Googley DeepMind experts] say it beat the ECMWF’s “gold-standard” high-resolution forecast (HRES) by giving more accurate predictions on more than 90 per cent of tested data points. At some altitudes, this accuracy rose as high as 99.7 per cent.

No more ruined picnics. No weddings with bridesmaids’ shoes covered in mud. No more visibly weeping mothers because everyone is wet.

But Nature, to the disappointment of some PR professionals, presents an alternative viewpoint. What a bummer after all those meetings and presentations:

“You can have the best forecast model in the world, but if the public don’t trust you, and don’t act, then what’s the point?” [A statement attributed to Ian Renfrew at the University of East Anglia]

Several thoughts are in order:

- Didn’t IBM make a big deal about its super duper weather capabilities? It bought The Weather Company’s digital business too. But when the weather and customers got soaked, I think IBM folded its umbrella. Will Google have to emulate IBM’s behavior? I mean “the weather.” (Note: The owner of the IBM Weather Company is an outfit once alleged to have owned or been involved with the NSO Group.)

- Google appears to have convinced Nature to announce the quantum supremacy type breakthrough only to find that a professor from someplace called East Anglia did not purchase the rubber boots from the Google online store.

- The current edition of The Old Farmer’s Almanac is about US$9.00 on Amazon. That predictive marvel was endorsed by Gussie Arnold, born about 1835. We are not sure because my father’s records of the Arnold family were soaked by a sudden thunderstorm.

Just keep in mind that Google’s system can predict the weather 10 days ahead. Another quantum PR moment from the Google which was drowned out in the OpenAI tsunami.
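The passage quoted above describes an autoregressive loop. Two snapshots six hours apart go in, a six-hour-ahead prediction comes out, and that prediction is fed back in for the next round. A ten-day horizon is therefore 240 / 6 = 40 chained steps, and each step inherits whatever error the previous one made. The Python sketch below shows only that bookkeeping, under stated assumptions: `toy_model` is a hypothetical placeholder that extends a trend, not DeepMind’s network, and a single number stands in for the global grid of readings.

```python
from typing import Callable, List

STEP_HOURS = 6  # per the quoted description: each model step advances six hours

# A single number stands in for the gridded global state (an assumption).
State = float
Model = Callable[[State, State], State]


def rollout(model: Model, prev: State, curr: State, horizon_hours: int) -> List[State]:
    """Autoregressive forecast: each prediction becomes the newest input."""
    if horizon_hours % STEP_HOURS != 0:
        raise ValueError("horizon must be a multiple of the step size")
    forecasts: List[State] = []
    for _ in range(horizon_hours // STEP_HOURS):
        nxt = model(prev, curr)  # uses two states six hours apart
        forecasts.append(nxt)
        prev, curr = curr, nxt   # slide the window forward six hours
    return forecasts


def toy_model(prev: State, curr: State) -> State:
    # Hypothetical placeholder "model": extend the most recent six-hour trend.
    return curr + (curr - prev)


ten_days = rollout(toy_model, prev=10.0, curr=11.0, horizon_hours=10 * 24)
print(len(ten_days))  # 40 chained six-hour steps
```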

Stephen E Arnold, November 27, 2023

Google Pulls Out a Rhetorical Method to Try to Win the AI Spoils

November 20, 2023

This essay is the work of a dumb dinobaby. No smart software required.

In high school in 1958, our debate team coach yapped about “framing.” The idea was new to me, and Kenneth Camp pounded it into our debate team’s collective “head” for the four years of my high school tenure. Not surprisingly, when I read “Google DeepMind Wants to Define What Counts As Artificial General Intelligence” I jumped back in time 65 years (!) to Mr. Camp’s explanation of framing and how one can control the course of a debate with the technique.

Google should not have to use a rhetorical trick to make its case as the quantum wizard of online advertising and universal greatness. With its search and retrieval system, the company can boost, shape, and refine any message it wants. If those methods fall short, the company can slap on a “filter” or “change its rules” and deprecate certain Web sites and their messages.

But Google values academia, even if the university is one that welcomed a certain Jeffrey Epstein into its fold. (Do you remember the remarkable Jeffrey Epstein? Some of those whom he touched do, I believe.) The estimable Google is the subject of the referenced article in the MIT-linked Technology Review.

From my point of view, the big idea in the write up is, and I quote:

To come up with the new definition, the Google DeepMind team started with prominent existing definitions of AGI and drew out what they believe to be their essential common features. The team also outlines five ascending levels of AGI: emerging (which in their view includes cutting-edge chatbots like ChatGPT and Bard), competent, expert, virtuoso, and superhuman (performing a wide range of tasks better than all humans, including tasks humans cannot do at all, such as decoding other people’s thoughts, predicting future events, and talking to animals). They note that no level beyond emerging AGI has been achieved.

Shades of high school debate practice and the chestnuts scattered about the rhetorical camp fire as John Schunk, Jimmy Bond, and a few others (including the young dinobaby me) learned how one can set up a frame, populate the frame with logic and facts supporting the frame, and then point out during rebuttal that our esteemed opponents were not able to dent our well formed argumentative frame.

Is Google the optimal source for a definition of artificial general intelligence, something which does not yet exist? Is Google’s definition more useful than a science fiction writer’s or a scene from a Hollywood film?

Even the trusted online source points out:

One question the researchers don’t address in their discussion of _what_ AGI is, is _why_ we should build it. Some computer scientists, such as Timnit Gebru, founder of the Distributed AI Research Institute, have argued that the whole endeavor is weird. In a talk in April on what she sees as the false (even dangerous) promise of utopia through AGI, Gebru noted that the hypothetical technology “sounds like an unscoped system with the apparent goal of trying to do everything for everyone under any environment.” Most engineering projects have well-scoped goals. The mission to build AGI does not. Even Google DeepMind’s definitions allow for AGI that is indefinitely broad and indefinitely smart. “Don’t attempt to build a god,” Gebru said.

I am certain it is an oversight, but the telling comment comes from an individual who may have spoken out about Google’s systems and methods for smart software.

Mr. Camp, the high school debate coach, explains how a rhetorical trope can gut even those brilliant debaters from other universities. (Yes, Dartmouth, I am still thinking of you.) Google must have had a “coach” skilled in the power of framing. The company is making a bold move to define that which does not yet exist and something whose functionality is unknown. Such is the expertise of the Google. Thanks, Bing. I find your use of people of color interesting. Is this a pre-Sam ouster or a post-Sam ouster function?

What do we learn from the write up? In my view of the AI landscape, we are given some insight into Google’s belief that its rhetorical trope packaged as content marketing within an academic-type publication will lend credence to the company’s push to generate more advertising revenue. You may ask, “But won’t Google make oodles of money from smart software?” I concede that it will. However, the big bucks for the Google come from those willing to pay for eyeballs. And that, dear reader, translates to advertising.

Stephen E Arnold, November 20, 2023

The OpenAI Algorithm: More Data Plus More Money Equals More Intelligence

November 13, 2023

This essay is the work of a dumb humanoid. No smart software required.

The Financial Times (I continue to think of this publication as the weird orange newspaper) published an interview converted to a news story. The title is an interesting one; to wit: “OpenAI Chief Seeks New Microsoft Funds to Build Superintelligence.” Too bad the story is about the bro culture in the Silicon Valley race to become the king of smart software’s revenue streams.

The hook for the write up is Sam Altman (I interpret the wizard’s name as Sam AI-Man), who appears to be fighting a bro battle with the Google, the current champion of online advertising. At stake is a winner-takes-all prize in the next big thing: smart software.

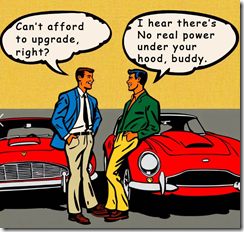

In the clubby world of smart software, I find the posturing of Google and OpenAI an extension of the mentality which pits owners of Ferraris (slick, expensive, and novel machines) in a battle for the opponent’s hallucinating machine. The patter goes like this: “My Ferrari is faster, better looking, and brighter red than yours,” one owner says. The other owner says, “My Ferrari is newer, better designed, and has a storage bin.” This is man cave speak for what counts.

When tech bros talk about their powerful machines, the real subject is what makes a man a man. In this case, the defining qualities are money and potency. Thanks, Microsoft Bing. I have looked at the autos in the Microsoft and Google parking lots. Cool, macho.

The write up introduces what I think is a novel term: “Magic intelligence.” That’s T-shirt-grade sloganeering. The idea is that smart software will become like a person, just smarter.

One passage in the write up struck me as particularly important. The subject is orchestration, which is not the word Sam AI-Man uses. The idea is that the smart software will knit together the processes necessary to complete complex tasks. By definition, some tasks will be designed for the smart software. Others will be intended to make things super duper for the less intelligent humanoids. Sam AI-Man is quoted by the Financial Times as saying:

“The vision is to make AGI, figure out how to make it safe . . . and figure out the benefits,” he said. Pointing to the launch of GPTs, he said OpenAI was working to build more autonomous agents that can perform tasks and actions, such as executing code, making payments, sending emails or filing claims. “We will make these agents more and more powerful . . . and the actions will get more and more complex from here,” he said. “The amount of business value that will come from being able to do that in every category, I think, is pretty good.”

The other interesting passage, in my opinion, is the one which suggests that the Google is not embracing the large language model approach. If the Google has discarded LLMs, the online advertising behemoth is embracing other, unnamed methods. Perhaps these are “small language models,” chosen to reduce costs and minimize the legal vulnerability the LLM method seems to invite. Here’s the passage from the FT’s article:

While OpenAI has focused primarily on LLMs, its competitors have been pursuing alternative research strategies to advance AI. Altman said his team believed that language was a “great way to compress information” and therefore developing intelligence, a factor he thought that the likes of Google DeepMind had missed. “[Other companies] have a lot of smart people. But they did not do it. They did not do it even after I thought we kind of had proved it with GPT-3,” he said.

I find the bro jockeying interesting for three reasons:

- An intellectual jousting tournament is underway. Which digital knight will win? Both the Google and OpenAI appear to believe that the winner comes from a small group of contestants. (I wonder: are non-US jousters part of the equation “more data plus more money equals more intelligence”?)

- OpenAI seems to be driving toward “beyond human” intelligence or possibly a form of artificial general intelligence. Google, on the other hand, is chasing a wimpier outcome.

- Outfits like the Financial Times are hot on the AI story. Why? Perhaps because an automated newsroom without humans promises to reduce costs?

Net net: AI vendors, rev your engines for superintelligence or magic intelligence or whatever jargon connotes more, more, more.

Stephen E Arnold, November 13, 2023
