Xoogler Predicts the Future: China Bad, Xoogler Good

March 26, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Did you know China, when viewed from the vantage point of a former Google executive, is bad? That is a stunning comment. Google tried valiantly to convert China into a money stream. That worked until it didn’t. Now a former Googler, or Xoogler in some circles, has changed his tune.

Thanks, MSFT Copilot. Working on security I presume?

“Eric Schmidt’s China Alarm” includes some interesting observations. None of which address Google’s attempt to build a China-acceptable search engine. Oh, well, anyone can forget minor initiatives like that. Let’s look at a couple of comments from the article:

How about this comment about responding to China:

"We have to do whatever it takes."

I wonder if Mr. Schmidt has been watching Dr. Strangelove on YouTube. Someone might pull that viewing history to clarify “whatever it takes.”

Another comment I found interesting is:

China has already become a peer of the U.S. and has a clear plan for how it wants to dominate critical fields, from semiconductors to AI, and clean energy to biotech.

That’s interesting. My thought is that the “clear plan” seems to embrace education; that is, producing more engineers than some other countries, leveraging open source technology, and erecting interesting barriers to prevent US companies from selling some products in the Middle Kingdom. How long has this “clear plan” been chugging along? I spotted portions of the plan in Wuhan in 2007. But I guess now it’s a more significant issue after decades of being front and center.

I noted this comment about artificial intelligence:

Schmidt also said Europe’s proposals on regulating artificial intelligence "need to be re-done," and in general says he is opposed to regulating AI and other advances to solve problems that have yet to appear.

The idea is an interesting one. The UN and numerous NGOs and governmental entities around the world are trying to regulate, tame, direct, or ameliorate the impact of smart software. How’s that going? My answer is, “Nowhere fast.”

The article makes clear that Mr. Schmidt is not just a Xoogler; he is a global statesperson. But in the back of my mind, once a Googler, always a Googler.

Stephen E Arnold, March 26, 2024

AI Proofing Tools in Higher Education Limbo

March 26, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Where is the line between AI-assisted plagiarism and a mere proofreading tool? That is something universities really should have decided by now. Those that have not risk appearing hypocritical and unjust. For example, the University of North Georgia (UNG) specifically recommends students use Grammarly to help proofread their papers. And yet, as News Nation reports, a “Student Fights AI Cheating Allegations for Using Grammarly” at that school.

The trouble began when Marley Stevens’ professor ran her paper through plagiarism-detection software Turnitin, which flagged it for an AI violation. Apparently that (ironically) AI-powered tool did not know Grammarly was on the university’s “nice” list. But surely the charge of cheating was reversed once human administrators got involved, right? Nope. Writer Damita Menezes tells us:

“‘I’m on probation until February 16 of next year. And this started when he sent me the email. It was October. I didn’t think that now in March of 2024, that this would still be a big thing that was going on,’ Stevens said. Despite Grammarly being recommended on the University of North Georgia’s website, Stevens found herself embroiled in battle to clear her name. The tool, briefly removed from the school’s website, later resurfaced, adding to the confusion surrounding its acceptable usage despite the software’s utilization of generative AI. ‘I have a teacher this semester who told me in an email like “yes use Grammarly. It’s a great tool.” And they advertise it,’ Stevens said. … Despite Stevens’ appeal and subsequent GoFundMe campaign to rectify the situation, her options seem limited. The university’s stance, citing the absence of suspension or expulsion, has left her in a bureaucratic bind.”

Grammarly’s Jenny Maxwell defends the tool and emphasizes her company’s transparency around its generative components. She suggests colleges and universities update their assessment methods to address evolving tech like Grammarly. For good measure, we would add Microsoft Word’s Copilot and Google Chrome’s "help me write" feature. Shouldn’t schools be training students in the responsible use of today’s technology? According to UNG, yes. And also, no.

This means that if you use Word and its smart software, you may be a cheater. No need to wait until you go to work at a blue chip consulting firm. You are working on your basic consulting skills.

Cynthia Murrell, March 26, 2024

Can Ma Bell Boogie?

March 25, 2024

This essay is the work of a dumb dinobaby. No smart software required.

AT&T provides numerous communication and information services to the US government and companies. People see the blue and white trucks with obligatory orange cones and think nothing about their presence. Decades after Judge Greene rained on the AT&T monopoly parade, the company has regained some of its market chutzpah. The old-line Bell heads knew that would happen. One reason was the simple fact that communications services have a tendency to pool; that is, online, for instance, wants to be a monopoly. Like water, online and communication services seek the lowest level. One can grouse about a leaking basement, but one is complaining about a basic fact. Complain away, but the water pools. Similarly, AT&T benefits from and knows how to make the best of this pooling, consolidating, and collecting reality.

I do miss the “old” AT&T. Say what you will about today’s destabilizing communications environment, just don’t forget that the pre-Judge Greene world produced useful innovations, provided hardware that worked, and made it possible for some government functions to work much better than those operations perform today.

Thanks, MSFT, it seems you understand ageing companies which struggle in the midst of the cyber whippersnappers.

But what’s happened?

In February 2024, AT&T experienced an outage. The redundant, fail-safe, state-of-the-art infrastructure failed. “AT&T Cellular Service Restored after Daylong Outage; Cause Still Unknown” reported:

AT&T said late Thursday [February 22, 2024] that based on an initial review, the outage was “caused by the application and execution of an incorrect process used as we were expanding our network, not a cyber attack.” The company will continue to assess the outage.

What do we publicly know about this remarkable event a month ago? Not much. I am not going to speculate how a single misstep can knock out AT&T, but it raises some questions about AT&T’s procedures, its security, and, yes, its technical competence. The AT&T Ashburn data center is an interesting cluster of facilities. Could it be “knocked offline”? My concern is that the answer to this question is, “You bet your bippy it could.”

A second interesting event surfaced as well. AT&T suffered a mysterious breach which appears to have compromised data about millions of “customers.” And “AT&T Won’t Say How Its Customers’ Data Spilled Online.” Here’s a statement from the report of the breach:

When reached for comment, AT&T spokesperson Stephen Stokes told TechCrunch in a statement: “We have no indications of a compromise of our systems. We determined in 2021 that the information offered on this online forum did not appear to have come from our systems. This appears to be the same dataset that has been recycled several times on this forum.”

Leaked data are no big deal and the incident remains unexplained. The AT&T system went down essentially in one fell swoop. Plus there is no explanation which resonates with my understanding of the Bell “way.”

Some questions:

- What has AT&T accomplished by its lack of public transparency?

- Has the company lost its ability to manage a large, dynamic system due to cost cutting?

- Is a lack of training and perhaps capable staff undermining what I think of as “mission critical capabilities” for business and government entities?

- What are US regulatory authorities doing to address what is, in my opinion, a threat to the economy of the US and the country’s national security?

Couple the AT&T events with emerging technology like artificial intelligence, will the company make appropriate decisions or create vulnerabilities typically associated with a dominant software company?

Not a positive set up in my opinion. Ma Bell, are you too old and fat to boogie?

Stephen E Arnold, March 26, 2024

Research into Baloney Uses Four Letter Words

March 25, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I am critical of university studies. However, I spotted one which strikes at the heart of the Silicon Valley approach to life. “Research Shows That People Who BS Are More Likely to Fall for BS” has an interesting subtitle; to wit:

People who frequently mislead others are less able to distinguish fact from fiction, according to University of Waterloo researchers

A very good looking bull spends time reviewing information helpful to him in selling his artificial intelligence system. Unlike the two cows, he does not realize that he is living in a construct of BS. Thanks, MSFT Copilot. How are you doing with those printer woes today? Good enough, I assume.

Consider the headline in the context of promises about technologies which will “change everything.” Examples range from the marvels of artificial intelligence to the crazy assertions about quantum computing. My hunch is that the reason baloney has become one of the most popular mental foods in the datasphere is that people desperately want a silver bullet. Others know that if a silver bullet is described with appropriate language and a bit of sizzle, the thought can be a runway for money.

What’s this mean? We have created a culture in North America that makes “technology” and “glittering generalities” into hyperbole factories. Why believe me? Let’s look at the “research.”

The write up reports:

People who frequently try to impress or persuade others with misleading exaggerations and distortions are themselves more likely to be fooled by impressive-sounding misinformation… The researchers found that people who frequently engage in “persuasive bullshitting” were actually quite poor at identifying it. Specifically, they had trouble distinguishing intentionally profound or scientifically accurate fact from impressive but meaningless fiction. Importantly, these frequent BSers are also much more likely to fall for fake news headlines.

Let’s think about this assertion. The technology story teller is an influential entity. In the world of AI, for example, some firms which have claimed “quantum supremacy” showcase executives who spin glorious word pictures of smart software reshaping the world. The upsides are magnetic; the downsides dismissed.

What about crypto champions? Telegram, founded by two Russian brothers, is spinning fabulous tales of revenue from advertising in an encrypted messaging system and cheerleading for a more innovative crypto currency. The outfit operates from Dubai, and there are true believers. What’s not to like? Maybe these bros have the solution that has long been part of the Harvard winkle confections.

What shocked me about the write up was the use of the word “bullshit.” Here’s an example from the academic article:

“We found that the more frequently someone engages in persuasive bullshitting, the more likely they are to be duped by various types of misleading information regardless of their cognitive ability, engagement in reflective thinking, or metacognitive skills,” Littrell said. “Persuasive BSers seem to mistake superficial profoundness for actual profoundness. So, if something simply sounds profound, truthful, or accurate to them that means it really is. But evasive bullshitters were much better at making this distinction.”

What if the write up is itself BS? What if the journal publishing the article — British Journal of Social Psychology — is BS? On one level, I want to agree that those skilled in the art of baloney manufacturing, distributing, and outputting have a quite specific skill. On the other hand, I admit that I cannot determine at first glance if the information provided is not synthetic, ripped off, shaped, or weaponized. I would assert that most people are not able to identify what is “verifiable”, “an accurate accepted fact”, or “true.”

We live in a post-reality era. When the presidents of outfits like Harvard and Stanford face challenges to their research accuracy, what can I do when confronted with a media release about BS? Upon reflection, I think the generalization that people cannot figure out what’s on point or not is true. When drug store cashiers cannot make change, I think that’s strong anecdotal evidence that other parts of their mental toolkit have broken or missing parts.

But the statement that those who output BS cannot themselves identify BS may be part of a broader educational failure. Lazy people, those who take short cuts, people who know how to do the PT Barnum thing, and sales professionals trying to close a deal reflect a societal issue. In a world of baloney, everything is baloney.

Stephen E Arnold, March 25, 2024

AI Job Lawnmowers: Will Your Blooms Be Chopped Off and Put a Rat King in Your Future?

March 25, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I love “you will lose your job to AI” articles. I spotted an interesting one titled “The Job Sectors That Will Be Most Disrupted By AI, Ranked.” This is not so much an article as a billboard for an outfit named Voronoi, “where data tells the story.” That’s interesting because there is no data, no methodology, and no indication of the confidence level for each “nuked job.” Nevertheless, we have a ranking.

Thanks, MSFT Copilot. Will you be sparking human rat kings? I would wager that you will.

As I understand the analysis of 19,000 tasks, here are the jobs most likely to be chopped down and converted to AI silage:

- IT / programmers: 73 percent of the job will experience a large impact

- Finance / bean counters: 70 percent of the jobs will experience a large impact

- Customer sales: 67 percent of the job will experience a large impact

- Operations (well, that’s a fuzzy category, isn’t it?): 65 percent of the job will experience a large impact

- Personnel / HR: 57 percent of the job will experience a large impact

- Marketing: 56 percent of the job will experience a large impact

- Legal eagles: 46 percent of the job will experience a large impact

- Supply chain (another fuzzy wuzzy bucket): 43 percent of the job will experience a large impact

The kicker in the data is that the numbers date from September 2023. Six months in the faerie land of smart software is a long, long time. Let’s assume that the data meet 2024’s gold standard.

Technology, finance, sales, marketing, and lawyering may shatter the future of employees of less value in terms of compensation, cost to the organization, or whatever management legerdemain the top dogs and their consultants whip up. Imagine: Eliminating the overhead for humans like office space, health care, retirement baloney, and vacations makes smart software into an attractive “play.”

And what about the fuzzy buckets? My thought is that many people will be trimmed because a chatbot can close a sale for a product without the hassle which humans drag into the office; for example, sexual harassment, mental, drug, and alcohol “issues,” and the unfortunate workplace shooting. I think that a person sitting in a field office to troubleshoot issues related to a state or county contract might fall into the “operations” category even though the employee sees the job as something smart software cannot perform. Ho ho ho.

Several observations:

- A trivial cost analysis of human versus software over a five-year period means humans lose

- AI systems, which may suck initially, will be improved over time. These initial failures may lull the employee once alert to replacement into a false sense of security

- Once displaced, former employees will have to scramble to produce cash. With lots of individuals chasing available work and money plays, life is unlikely to revert back to the good old days of the Organization Man. (The world will be Organization AI. No suit and white shirt required.)

Net net: I am glad I am old and not quite as enthralled by efficiency.

Stephen E Arnold, March 25, 2024

Getting Old in the Age of AI? Yeah, Too Bad

March 25, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read an interesting essay called “‘Gen X Has Had to Learn or Die’: Mid-Career Workers Are Facing Ageism in the Job Market.” The title assumes that the reader knows the difference between Gen X, Gen Y, Gen Z, and whatever other demographic slices marketers and “social” scientists cook up. I recognize one time slice: Dinobabies like me and a category I have labeled “Other.”

Two Gen X dinobabies find themselves out of sync with the younger reptiles’ version of Burning Man. Thanks, MSFT Copilot. Close enough.

The write up, I think, is a work product of a person who realizes that the stranger in a photograph is the younger version of today’s self. “How can that be?” the author of the essay asks. “In my Gen X, Y, or Z mind I am the same. I am exactly the way I was when I was younger.” The write up states:

Gen Xers, largely defined as people in the 44-to-59 age group, are struggling to get jobs.

The write up quotes an expert, Christina Matz, associate professor at the Boston College School of Social Work, and director of the Center on Aging and Work. I believe this individual has a job for now. The essay quotes her observation:

older workers are sometimes perceived as “doddering but dear”. Matz says, “They’re labelled as slower and set in their ways, well-meaning on one hand and incompetent on the other. People of a certain age are considered out-of-touch, and not seen as progressive and innovative.”

I like to think of myself as doddering. I am not sure anyone, regardless of age, will label me “dear.”

But back to the BBC’s essay. I read:

We’re all getting older.

Now that’s an insight!

I noted that the acronym “AI” appears once in the essay. One source is quoted as offering:

… we had to learn the internet, then Web 2.0, and now AI. Gen X has had to learn or die,

Hmmm. Learn or die.

Several observations:

- The write up does not tackle the characteristic of work that strikes me as important; namely, if one is not in the Top Tier of people in a particular discipline, jobs will be hard to find. Artificial intelligence will elevate those just below the “must hire” level and allow organizations to replace what once was called “the organization man” with software.

- There is the discovery that being able to use a mobile phone does not give a person intellectual super powers. The kryptonite to those hunting for a “job” is that their “package” does not have “value” to an organization seeking full time equivalents. People slap a price tag on themselves and, like people running a yard sale, realize that no one will pay very much for that stack of old time post cards grandma collected.

- The notion of entitlement does not appear in the write up. In my experience, a number of people believe that a company or other type of entity “owes them a living.” Those accustomed to receiving “Also Participated” trophies and “easy” A’s have found themselves on the wrong side of paradise.

My hunch is that these “ageism” write ups are reactions to the gradual adoption of ever more capable “smart” software. I am not sure if the author agrees with me. I am asserting that the examples and comments in the write up are a reaction to the existential threat of AI, bots, and embedded machine intelligence finding their way into “systems” today. Probably not.

Now let’s think about the “learn” plank of the essay. A person can learn, adapt, and thrive, right? My personal view is that this is a shibboleth. Oh, oh.

Stephen E Arnold, March 25, 2024

Google: Practicing But Not Learning in France

March 22, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I had to comment on these Google synthetic gems. The online advertising company with the Cracker Jack management team is cranking out titbits every day or two. True, none of these rank with the Microsoft deal to hire some techno-management wizards with DeepMind experience, but I have to cope with what flows into rural Kentucky.

Those French snails are talkative — and tasty. Thanks, MSFT Copilot. Are you going to license, hire, or buy DeepMind?

“Google Fined $270 Million by French Regulatory Authority” delivers what strikes me as Lego block information about the estimable company. The write up presents yet another story about Google’s footloose and fancy free approach to French laws, rules, and regulations. The write up reports:

This latest fine is the result of Google’s artificial intelligence training practices. The [French regulatory] watchdog said in a statement that Google’s Bard chatbot — which has since been rebranded as Gemini — “used content from press agencies and publishers to train its foundation model, without notifying either them” or the Authority.

So what did the outstanding online advertising company do? The news story asserts:

The watchdog added that Google failed to provide a technical opt-out solution for publishers, obstructing their ability to “negotiate remuneration.”

The result? Another fine.

Google has had an interesting relationship with France. The country was the scene of the outstanding presentation of the Sundar and Prabhakar demonstration of the quantumly supreme Bard smart software. Google has written checks to France in the past. Now it is associated with flubbing what are, for France, relatively straightforward requirements to work with publishers.

Not surprisingly, the outfit based in far off California allegedly said, according to the cited news story:

Google criticized a “lack of clear regulatory guidance,” calling for greater clarity in the future from France’s regulatory bodies. The fine is linked to a copyright case that began in 2020, when the French Authority found Google to be acting in violation of France’s copyright and related rights law of 2019.

My experience with France, French laws, and the ins and outs of working with French organizations is limited. Nevertheless, my son — who attended university in France — told me an anecdote which illustrates how French laws work. Here’s the tale which I assume is accurate. He is a reliable sort.

A young man was in the immigration office in Paris. He and his wife were trying to clarify a question related to her being a French citizen. The bureaucrat had not accepted her birth certificate from a municipal French government, assorted documents from her schooling from pre-school to university, and the oddments of electric bills, rental receipts, and medical records. The husband, who was an American, told my son, “This office does not think my wife is French. She is. And I think we have it nailed this time. My wife has a photograph of General De Gaulle awarding her father a medal.” My son told me, “Dad, it did not work. The husband and wife had to refile the paperwork to correct an error made on the original form.”

My takeaway from this anecdote is that Google may want to stay within the bright white lines in France. Getting entangled in the legacy of Napoleon’s red tape can be an expensive, frustrating experience. Perhaps the Google will learn? On the other hand, maybe not.

Stephen E Arnold, March 22, 2024

The University of Illinois: Unintentional Irony

March 22, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I admit it. I was in the PhD program at the University of Illinois at Champaign-Urbana (aka Chambana). There was nothing like watching a storm build from the upper floors of now departed FAR. I spotted a university news release titled “Americans Struggle to Distinguish Factual Claims from Opinions Amid Partisan Bias.” From my point of view, the paper presents research that says that half of those in the sample cannot distinguish truth from fiction. That’s a fact easily verified by visiting a local chain store, purchasing a product, and asking the clerk to provide the change in a specific way; for example, “May I have two fives and five dimes, please?” Putting data behind personal experience is a time-honored chore in the groves of academe.

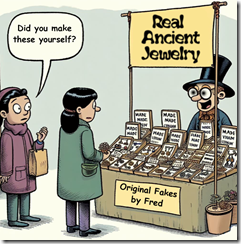

Discerning people can determine “real” from “original fakes.” Well, only half the people can it seems. The problem is defining what’s true and what’s false. Thanks, MSFT Copilot. Keep working on your security. Those breaches are “real.” Half the time is close enough for horseshoes.

Here’s a quote from the write up I noted:

“How can you have productive discourse about issues if you’re not only disagreeing on a basic set of facts, but you’re also disagreeing on the more fundamental nature of what a fact itself is?” — Matthew Mettler, a U. of I. graduate student and co-author with Jeffery J. Mondak, a professor of political science and the James M. Benson Chair in Public Issues and Civic Leadership at Illinois.

The news release about Mettler’s and Mondak’s research contains this statement:

But what we found is that, even before we get to the stage of labeling something misinformation, people often have trouble discerning the difference between statements of fact and opinion…. “What we’re showing here is that people have trouble distinguishing factual claims from opinion, and if we don’t have this shared sense of reality, then standard journalistic fact-checking – which is more curative than preventative – is not going to be a productive way of defanging misinformation,” Mondak said. “How can you have productive discourse about issues if you’re not only disagreeing on a basic set of facts, but you’re also disagreeing on the more fundamental nature of what a fact itself is?”

But the research suggests that highly educated people cannot differentiate made up data from non-weaponized information. What struck me is that Harvard’s Misinformation Review published this U of I research that provides a road map to fooling peers and publishers. Harvard University, like Stanford University, has found that certain big-time scholars violate academic protocols.

I am delighted that the U of I research is getting published. My concern is that Harvard will not find my laughing at its Misinformation Review to its liking. Harvard illustrates that academic transgressions cannot be identified by half of those exposed to the confections of up-market academics.

Should Messrs Mettler and Mondak have published their research in another journal? That’s a good question, but I am no longer convinced that professional publications have more credibility than the outputs of a content farm. Such is the erosion of once-valued norms. Another peril of thumb typing is present.

Stephen E Arnold, March 22, 2024

US Bans Intellexa For Spying On Senator

March 22, 2024

This essay is the work of a dumb dinobaby. No smart software required.

One of the worst ideas in modern society is to spy on the United States. The idea becomes worse when the target is a US politician. Intellexa is a notorious company that designs software to hack smartphones and transform them into surveillance devices. NBC News reports how Intellexa’s software was recently used in an attempt to hack a US senator: “US Bans Maker Of Spyware That Targeted A Senator’s Phone.”

Intellexa designed the software Predator, which, once downloaded onto a phone, turns it into a surveillance device. Predator can turn on a phone’s camera and microphone, track a user’s location, and download files. The US Treasury Department banned Intellexa from conducting business in the US, and US citizens are banned from working with the company. These are the most aggressive sanctions the US has ever taken against a spyware company.

The official ban also targets Intellexa’s founder Tal Dilian, employee Sara Hamou, and four companies that are affiliated with it. Predator is also used by authoritarian governments to spy on journalists, human rights workers, and anyone deemed “suspicious”:

“An Amnesty International investigation found that Predator has been used to target journalists, human rights workers and some high-level political figures, including European Parliament President Roberta Metsola and Taiwan’s outgoing president, Tsai Ing-Wen. The report found that Predator was also deployed against at least two sitting members of Congress, Rep. Michael McCaul, R-Texas, and Sen. John Hoeven, R-N.D.”

John Scott-Railton is a senior spyware researcher at the University of Toronto’s Citizen Lab and he said the US Treasury’s sanctions will rock the spyware world. He added it could also inspire people to change their careers and leave countries.

Intellexa isn’t the only company that makes spyware. Hackers can also design their own, then share it with other bad actors.

Whitney Grace, March 22, 2024

Peak AI? Do You Know What Happened to Catharists? Quiz ChatGPT or Whatever

March 21, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read “Have We Reached Peak AI?” The question is an interesting one because some alleged monopolies are forming alliances with other alleged monopolies. Plus wonderful managers from an alleged monopoly are joining another alleged monopoly to lead a new “unit” of the alleged monopoly. At the same time, outfits like the usually low profile Thomson Reuters suggested that it had an $8 billion war chest for smart software. My team and I cannot keep up with the announcements about AI in fields ranging from pharma to ransomware from mysterious operators under the control of wizards in China and Russia.

Thanks, MSFT Copilot. You did a good job on the dinobabies.

Let’s look at a couple of statements in the essay which addresses the “peak AI” question.

I noticed that OpenAI is identified as an exemplar of a company that sticks to a script, avoids difficult questions, and gets a velvet glove from otherwise pointy fingernailed journalists. The point is well taken; however, attention does not require substance. The essay states:

OpenAI’s messaging and explanations of what its technology can (or will) do have barely changed in the last few years, returning repeatedly to “eventually” and “in the future” and speaking in the vaguest ways about how businesses make money off of — let alone profit from — integrating generative AI.

What if the goal of the interviews and the repeated assertions about OpenAI specifically and smart software in general is publicity and attention? Cut off the buzz for any technology and it loses its shine. Buzz is the oomph in the AI hot house. Who cares about Microsoft buying into games? Now who cares about Microsoft hooking up with OpenAI, Mistral, and Inception? That’s what the meme life delivers. Games, sigh. AI, let’s go and go big.

Another passage in the essay snagged me:

I believe a large part of the artificial intelligence boom is hot air, pumped through a combination of executive bullshitting and a compliant media that will gladly write stories imagining what AI can do rather than focus on what it’s actually doing.

One of my team members accused me of FOMO when I told Howard to get us a Flipper. (Can one steal a car with a Flipper? The answer is, “Not without quite a bit of work.”) The FOMO was spot on. I had a “fear of missing out.” Canada wants to ban the gizmos. Hence, my request, “Get me a Flipper.” Most of the “technology” in the last decade is zipping along on marketing, PR, and YouTube videos. (I just watched a private YouTube video about intelware which incorporates lots of AI. Is the product available? Nope. But… soon. Let the marketing and procurement wheels begin turning.)

Journalists (often real ones) fall prey to FOMO. Just as I wanted a Flipper, the “real” journalists want to write about what’s apparently super duper important. The Internet is flagging. Quantum computing is old hat and won’t run in a mobile phone. The new version of Steve Gibson’s Spinrite is not catching the attention of blue chip investment firms. Even the enterprise search revivifier Glean is not magnetic like AI.

The issue for me is more basic than the “Peak AI” thesis; to wit, What is AI? No one wants to define it because it is a bit like “security.” The truth is that AI is a way to make money in what is a fairly problematic economic setting. A handful of companies are drowning in cash. Others are not sure they can keep the lights on.

The final passage I want to highlight is:

Eventually, one of these companies will lose a copyright lawsuit, causing a brutal reckoning on model use across any industry that’s integrated AI. These models can’t really “forget,” possibly necessitating a costly industry-wide retraining and licensing deals that will centralize power in the larger AI companies that can afford them.

I would suggest that Google has already been ensnared by the French regulators. AI faces an on-going flow of legal hassles. These range from cash-starved publishers to the work-from-home illustrator who does drawings for a Six-Flags-Over-Jesus type of super church. Does anyone really want to get on the wrong side of a super church in (heaven forbid) Texas?

I think the essay raises a valid point: AI is a poster child of hype.

However, as a dinobaby, I know that technology is an important part of the post-industrial set up in the US of A. Too much money will be left on the table unless those savvy to revenue flows and stock upsides ignore the mish-mash of AI. In an unregulated setting, people need and want the next big thing. Okay, it is here. Say “hello” to AI.

Stephen E Arnold, March 21, 2024