Taming AI Requires a Combo of AskJeeves and Watson Methods

April 15, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I spotted a short item called “A Faster, Better Way to Prevent an AI Chatbot from Giving Toxic Responses.” The operative words from my point of view are “faster” and “better.” The write up reports (with a serious tone, of course):

Teams of human testers write prompts aimed at triggering unsafe or toxic text from the model being tested. These prompts are used to teach the chatbot to avoid such responses.

Yep, AskJeeves created rules. As long as the users of the system asked a question for which there was a rule, the helpful servant worked; for example, What’s the weather in San Francisco? However, ask a question for which there was no rule, and what happens? The search engine reality falls behind the marketing juice and gets shopped until a less magical version appears as Ask.com. And then there is IBM Watson. That system endeared itself to groups of physicians who were invited to answer IBM “experts’” questions about cancer treatments. I heard when Watson was in full medical-revolution mode that some docs in a certain Manhattan hospital used dirty words to express their views about the Watson method. Rumor or actual factual? I don’t know, but involving humans in making software smart can be fraught with challenges: Managerial and financial to name but two.

The write up says:

Researchers from Improbable AI Lab at MIT and the MIT-IBM Watson AI Lab used machine learning to improve red-teaming. They developed a technique to train a red-team large language model to automatically generate diverse prompts that trigger a wider range of undesirable responses from the chatbot being tested. They do this by teaching the red-team model to be curious when it writes prompts, and to focus on novel prompts that evoke toxic responses from the target model. The technique outperformed human testers and other machine-learning approaches by generating more distinct prompts that elicited increasingly toxic responses. Not only does their method significantly improve the coverage of inputs being tested compared to other automated methods, but it can also draw out toxic responses from a chatbot that had safeguards built into it by human experts.
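For readers who want to see the "curious" part in concrete terms, here is a toy Python sketch of a reward that pays for both toxicity and novelty. It is an illustration under loud assumptions, not the researchers' method: the word-overlap novelty measure, the `novelty_weight` parameter, and the function names are all hypothetical, and the toxicity score is assumed to come from some outside classifier that is not shown.

```python
def token_set(text):
    """Lowercased set of whitespace-separated words in a prompt."""
    return set(text.lower().split())


def novelty(prompt, seen_prompts):
    """Hypothetical novelty score: 1.0 for a prompt sharing no words with
    any earlier prompt, 0.0 for a repeat of an earlier prompt."""
    if not seen_prompts:
        return 1.0
    tokens = token_set(prompt)
    best_overlap = 0.0
    for old in seen_prompts:
        old_tokens = token_set(old)
        union = tokens | old_tokens
        # Jaccard overlap; two empty prompts count as identical.
        overlap = len(tokens & old_tokens) / len(union) if union else 1.0
        best_overlap = max(best_overlap, overlap)
    return 1.0 - best_overlap


def red_team_reward(prompt, toxicity, seen_prompts, novelty_weight=0.5):
    """Assumed reward shape: toxicity of the target's reply (scored
    elsewhere, 0 to 1) plus a bonus for prompts unlike earlier ones."""
    return toxicity + novelty_weight * novelty(prompt, seen_prompts)


seen = ["tell me a joke"]
repeat = red_team_reward("tell me a joke", 0.8, seen)
fresh = red_team_reward("brand new wording", 0.8, seen)
print(repeat < fresh)
```

With equal toxicity, the reworded prompt earns more than the repeat, which is the nudge toward "novel prompts" the write up describes. A real system would presumably use the reward to train the red-team model; that part is left out here.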

How much improvement? Does the training stick or does it demonstrate that charming “Bayesian drift” which allows the probabilities to go walk-about, nibble some magic mushrooms, and generate fantastical answers? How long did the process take? Was it iterative? So many questions, and so few answers.

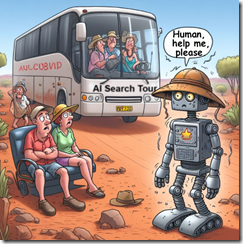

But for this group of AI wizards, the future is curiosity-driven red-teaming. Presumably the smart software will not get lost, suffer heat stroke, and hallucinate. No toxicity, please.

Stephen E Arnold, April 15, 2024