Google: Lost in Its Own AI Maze

May 31, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

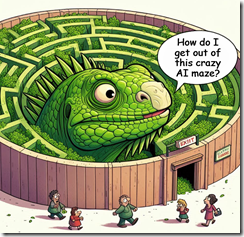

One “real” news item caught my attention this morning. Let me tell you. Even with the interesting activities in the Manhattan court, this one jumped out at me. Let’s take a quick look and see if Googzilla (see illustration) can make a successful exit from the AI maze in which the online advertising giant finds itself.

Googzilla is lost in its own AI maze. Can it find a way out? Thanks, MSFT Copilot. Three tries and I got a lizard in a maze. Keep allocating compute cycles to security because obviously Copilot is getting fewer and fewer these days.

“Google Pins Blame on Data Voids for Bad AI Overviews, Will Rein Them In” makes it clear that Google is not blaming itself for some of the wacky outputs its centerpiece AI function has been delivering. I won’t do the guilty-34-times thing. I will just mention the non-toxic glue and pizza item. This news story reports:

Google thinks the AI Overviews for its search engine are great, and is blaming viral screenshots of bizarre results on "data voids" while claiming some of the other responses are actually fake. In a Thursday post, Google VP and Head of Google Search Liz Reid doubles down on the tech giant’s argument that AI Overviews make Google searches better overall—but also admits that there are some situations where the company "didn’t get it right."

So let’s look at that Google blog post titled “AI Overviews: About Last Week.”

How about this statement?

User feedback shows that with AI Overviews, people have higher satisfaction with their search results, and they’re asking longer, more complex questions that they know Google can now help with. They use AI Overviews as a jumping off point to visit web content, and we see that the clicks to webpages are higher quality — people are more likely to stay on that page, because we’ve done a better job of finding the right info and helpful webpages for them.

The statement strikes me as something that a character would say in an episode of the Twilight Zone, a TV series in the 50s and 60s. The TV show had a weird theme, and I thought I heard it playing when I read the official Googley blog post. Is this the Google “bullseye” method or a bullsh*t method?

The official Googley blog post notes:

This means that AI Overviews generally don’t “hallucinate” or make things up in the ways that other LLM products might. When AI Overviews get it wrong, it’s usually for other reasons: misinterpreting queries, misinterpreting a nuance of language on the web, or not having a lot of great information available. (These are challenges that occur with other Search features too.) This approach is highly effective. Overall, our tests show that our accuracy rate for AI Overviews is on par with another popular feature in Search — featured snippets — which also uses AI systems to identify and show key info with links to web content.

Okay, we are into the bullsh*t method. Google search is now a key moment in the Sundar & Prabhakar Comedy Act. Since the début in Paris which featured incorrect data, the Google has been in Code Red or Red Alert or red-faced embarrassment mode. Now the company wants people to eat rocks, and it is not the online advertising giant’s fault. The blog post explains:

There isn’t much web content that seriously contemplates that question, either. This is what is often called a “data void” or “information gap,” where there’s a limited amount of high quality content about a topic. However, in this case, there is satirical content on this topic … that also happened to be republished on a geological software provider’s website. So when someone put that question into Search, an AI Overview appeared that faithfully linked to one of the only websites that tackled the question. In other examples, we saw AI Overviews that featured sarcastic or troll-y content from discussion forums. Forums are often a great source of authentic, first-hand information, but in some cases can lead to less-than-helpful advice, like using glue to get cheese to stick to pizza.

Okay, I think one component of the bullsh*t method is that it is not Google’s fault. “Users,” not customers, because Google has advertising clients, partners, and some lobbyists. Everyone else is a user, and it is the users’ fault, the data creators’ fault, and probably Sam AI-Man’s fault. (Did I omit anyone who can be blamed for the let them “eat rocks” result?)

And the Google cares. This passage is worthy of a Hallmark card with a foldout:

At the scale of the web, with billions of queries coming in every day, there are bound to be some oddities and errors. We’ve learned a lot over the past 25 years about how to build and maintain a high-quality search experience, including how to learn from these errors to make Search better for everyone. We’ll keep improving when and how we show AI Overviews and strengthening our protections, including for edge cases, and we’re very grateful for the ongoing feedback.

What’s my take on this?

- The assumption that Google search is “good” is interesting, just not in line with what I hear, read, and experience when I do use Google. Note that my personal usage has decreased over time.

- Google is trying to explain away its obvious flaws. The Google speak may work for some people, just not for me.

- The tone is that of an entitled seventh-grader from a wealthy family, not the type of language I find particularly helpful when the “smart” Google software has to be remediated by humans. Google is terminating humans, right? Now Google needs humans. What’s up, Google?

Net net: Google is snagged in its own AI maze. I am growing less confident in the company’s ability to extricate itself. The Sam AI-Man has crafted deals with two outfits big enough to make Google’s life more interesting. Google’s own management seems ineffectual despite the flashing red and yellow lights and the honking of alarms. Google’s wordsmiths and lawyers are running out of verbal wiggle room. But most important, the failure of the bullseye method and the oozing comfort of the bullsh*t method mark a turning point for the company.

Stephen E Arnold, May 31, 2024