Free AI Round Up with Prices

June 18, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

EWeek (once PCWeek and a big fat Ziff publication) has published what seems to be a mash up of MBA-report writing, a bit of smart software razzle dazzle, and two scoops of Gartner Group-type “insight.” The report is okay, and its best feature is that it is free. Why pay a blue-chip or mid-tier consulting firm to assemble a short monograph? Just navigate to “21 Best Generative AI Chatbots.”

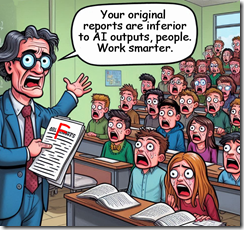

A lecturer shocks those in the presentation with a hard truth: Human-generated reports are worse than those produced by a “leading” smart software system. Is this the reason a McKinsey professional told interns, “Prompts are the key to your future”? Thanks, MSFT Copilot. Good enough.

The report consists of:

A table with the “leading” chatbots presented in random order. Forget that alphabetization baloney. Sorting by “leading” chatbot name is so old timey. The table presents these evaluative/informative factors:

- Best for use case; that is, when one would use a specific chatbot, in the opinion of the EWeek “experts,” I assume

- Query limit. This is baffling since recyclers of generative technology are eager to sell a range of special plans

- Language model. This column is interesting because it makes clear that of the “leading” chatbots 12 of them are anchored in OpenAI’s “solutions”; Claude turns up three times, and Llama twice. A few vendors mention the use of multiple models, but the “report” does not talk about AI layering or the specific ways in which different systems contribute to the “use case” for each system. Did I detect a sameness in the “leading” solutions? Yep.

- The baffling Chrome “extension.” I think the idea is that the “leading” solution with a Chrome extension runs in the Google browser. Five solutions do run as a Chrome extension. The other 16 don’t.

- Pricing. Now prices are slippery. My team pays for ChatGPT, but since the big 4o, the service seems to be free. We use a service not on the list, and each time I access the system, the vendor begs — nay, pleads — for more money. One vendor charges $2,500 per month paid annually. Now, that’s a far cry from Bing Chat Enterprise at $5 per month, which is not exactly the full six pack.

The bulk of the report is a subjective score for each service’s feature set, its ease of use, the quality of output (!), and support. The report offers no definition of terms to explain what these categories mean. Hey, everyone knows about “quality,” right? And support? Have you tried to contact a whiz-bang leading AI vendor? Let me know how that works out. The screenshots vary slightly, but the underlying sameness struck me. Each write up includes what I would call a superficial or softball listing of pros and cons.

The most stunning aspect of the report is the explanation of “how” the EWeek team evaluated these “leading” systems. Gee, knowing which systems were excluded and why would have been helpful, in my opinion. Let me quote the explanation of quality:

To determine the output quality generated by the AI chatbot software, we analyzed the accuracy of responses, coherence in conversation flow, and ability to understand and respond appropriately to user inputs. We selected our top solutions based on their ability to produce high-quality and contextually relevant responses consistently.

Okay, how many queries? How were queries analyzed across systems, assuming similar systems received the same queries? Which systems hallucinated or made up information? What queries caused one or more systems to fail? What were the qualifications of those “experts” evaluating the system responses? Ah, so many questions. My hunch is that EWeek just skipped the academic baloney and went straight to running queries, plugging in a guess-ti-mate, and heading to Starbucks. I do hope I am wrong, but I worked at the Ziffer in the good old days of the big fat PCWeek. There was some rigor, but today? Let’s hit the gym?

What is the conclusion for this report about the “leading” chatbot services? Here it is:

Determining the “best” generative AI chatbot software can be subjective, as it largely depends on a business’s specific needs and objectives. Chatbot software is enormously varied and continuously evolving, and new chatbot entrants may offer innovative features and improvements over existing solutions. The best chatbot for your business will vary based on factors such as industry, use case, budget, desired features, and your own experience with AI. There is no “one size fits all” chatbot solution.

Yep, definitely worth the price of admission.

Stephen E Arnold, June 18, 2024