MIT Iceberg: Identifying Hotspots

December 10, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

I like the idea of identifying exposure hotspots. (I hate to mention this, but MIT did have a tie up with Jeffrey Epstein, did it not? How long did it take for that hotspot to be exposed? The dynamic duo linked in 2002 and wound down the odd couple relationship in 2017. That looks to me to be about 15 years.) Therefore, I approach MIT-linked research with some caution. Is this a good idea? Yep.

What is this iceberg thing? I won’t invoke the Titanic’s encounter with an iceberg, nor will I point to some reports about faulty engineering. I am confident had MIT been involved, that vessel would probably be parked in a harbor, serving as a museum.

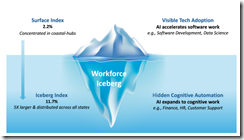

I read “The Iceberg Index: Measuring Skills-centered Exposure in the AI Economy.” You can too. The paper is free at least for a while. It also has 10 authors who generated 21 pages to point out that smart software is chewing up jobs. Of course, this simple conclusion is supported by quite a bit of academic fireworks.

The iceberg chart reminds me of the Dark Web charts. I wonder if Jeffrey Epstein surfed the Dark Web while waiting for a meet and greet at MIT? The source for this image is MIT or possibly an AI system helping out the MIT graphic artist humanoid.

I love these charts. I find them eye catching and easily skippable.

Smart software makes up stuff and appears to have created some challenges for teachers, college professors (except those laboring in Jeffrey Epstein’s favorite grove of academe, of course), and people looking for jobs. Even so, the as-is smart software can eliminate about 10 to 11 percent of here-and-now jobs. The good news is that 90 percent of the workers can wait for AI to get better and then eliminate another chunk of jobs. For those who believe that technology just gets better and better, the number of jobs for humanoids is likely to be gnawed and spat out for the foreseeable future.

I am not going to cause the 10 authors to hire SEO spam shops in Africa to make my life miserable. I will suggest, however, that there may be what I call de-adoption in the near future. The idea is that an organization is unhappy with the cost / value for its AI installation. A related factor is that some humans in an organization may introduce some work flow friction. The actions can range from griping about services interrupting work like Microsoft’s enterprise Copilot to active sabotage. People can fake being on a work related video conference, and I assume a college graduate (not from MIT, of course) might use this tactic to escape these wonderful face to face innovations. Nor will I suggest that AI may continue to need humans to deliver successful work task outcomes. Does an AI help me buy more of a product? Does AI boost your satisfaction with an organization pushing an AI helper on each of its Web pages?

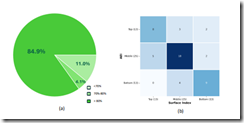

And no academic paper (except those presented at AI conferences) is complete without some nifty traditional economic type diagrams. Here’s an example for the industrious reader to check:

Source: the MIT Report. Is it my imagination or are five of the six regression lines pointing down? What’s negative correlation? (Yep, dinobaby stuff.)

Several observations:

- This MIT paper is similar to blue chip consulting “thought pieces.” The blue chippers write to get leads and close engagements. What is the purpose of this paper? Reading posts on Reddit or LinkedIn makes clear that AI allegedly is replacing jobs or is being used as an excuse to dump expensive human workers.

- I identified a couple of issues I would raise if the 10 authors had trooped into my office when I worked at a big university and asked for comments. My hunch is that some of the 10 would have found me manifesting dinobaby characteristics even though I was 23 years old.

- The spate of AI indexes suggests that people are expressing their concern about smart software that makes mistakes by putting lipstick on what is a very expensive pig. I sense a bit of anxiety in these indexes.

Net net: Read the original paper. Take a look at your coworkers. Which will be the next to be crushed because of the massive investments in a technology that is good enough, over hyped, and perceived as the next big thing? (Measure the bigness by pondering the size of Meta’s proposed data center in the southern US of A.) Remember, please, MIT and Epstein Epstein Epstein.

Stephen E Arnold, December 10, 2025

File Conversion. No Problem. No Kidding?

December 10, 2025

Another short essay from a real and still-alive dinobaby. If you see an image, we used AI. The dinobaby is not an artist like Grandma Moses.

Every few months, I get a question about file conversion. The questions are predictable. Here’s a selection from my collection:

- “We have data about chemical structures. How can we convert these for AI processing?”

- “We have back up files in Fastback encrypted format. How do we decrypt these and get the data into our AI system?”

- “We have some old back up tapes from our Burroughs’ machines?”

- “We have PDFs. Some were created when Adobe first rolled out Acrobat and some generated by different third-party PDF printing solutions. How can we convert these so our AI can provide our employees with access?”

The answer to each of these questions from the new players in AI-search systems is, “No problem.” I hate to rain on these marketers’ assertions, but these are typical problems large, established organizations have moving content from a legacy system into a BAIT (big AI tech) based findability solution. There are technical challenges. There are cost challenges. There are efficiency challenges. That’s right. Challenges, and in my long career in electronic content processing, these hurdles still remain. But I am an aged dinobaby. Why believe me? Hire a Gartner-type of expert to tell you what you want to hear. Have fun with that solution, please.

Thanks, Venice.ai. Close enough for horse shoes, the high-water mark today I believe.

Venture Beat is one of my go-to sources for timely content marketing. On November 14, 2025, the venerable firm published “Databricks: PDF Parsing for Agentic AI Is Still Unsolved. New Tool Replaces Multi-Service Pipelines with a Single Function.” The write up makes clear that I am 100 percent dead wrong about processing PDF files with their weird handling of tables, charts, graphs, graphic ornaments, and dense financial data.

The write up explains how really off base I am; for example, the Databricks Agent Bricks Platform. It cracks the AI parsing problem. The Venture Beat write up identifies what the DABP does with PDF information:

1 “Tables preserved exactly as they appear, including merged cells and nested structures

2 Figures and diagrams with AI-generated captions and descriptions

3 Spatial metadata and bounding boxes for precise element location

4 Optional image outputs for multimodal search applications”

Once the PDFs have been processed by DABP, the outputs can be used in a number of ways. I assume these are advanced, stable, and efficient as the name “databrick” metaphorically suggests:

1 Spark declarative pipelines

2 Unity catalog (I don’t know what this means)

3 Vector search (yep, search and retrieval)

4 AI function chaining (yep, bots)

5 Multi-agent supervisor (yep, command and control).

The write up concludes with this statement:

The Databricks approach sheds new light on an issue that many might have considered to be a solved problem. It challenges existing expectations with a new architecture that could benefit multiple types of workflows. However, this is a platform-specific capability that requires careful evaluation for organizations not already using Databricks. For technical decision-makers evaluating AI agent platforms, the key takeaway is that document intelligence is shifting from a specialized external service to an integrated platform capability.

Net net: What is novel in that chemical structure? What about that guy who retired in 2002 who kept a pile of Fastback floppies with his research into trinitrotoluene variants? Yep, content processing is not a problem except the data on those back up tapes cranked out by that old Burroughs’ MFSOLT utility, but with the new AI approaches, who needs layers of contractors and conversion utilities? Just say, “Not a problem.” Everything is easy for a market collateral writer.

Stephen E Arnold, December 10, 2025

A Job Bright Spot: RAND Explains Its Reality

December 10, 2025

Optimism On AI And Job Market

Remember when banks installed automatic teller machines at their locations? They’re better known by the acronym ATM. ATMs didn’t take away jobs; instead, they increased the number of banks and created more jobs. AI will certainly take away jobs, but the technology will also create more. Rand.org investigates how AI is affecting the job market in the article “AI Is Making Jobs, Not Taking Them.”

What I love about this article is that it tells the truth about AI technology: no one knows what will happen with it. We have theories, explored in science fiction, about what AI will do: from the total collapse of society to humdrum normal societal progress. What Rand’s article says is that the research shows AI adoption is uneven and much slower than Wall Street and Silicon Valley say. Rand conducted some research:

“At RAND, our research on the macroeconomic implications of AI also found that adoption of generative AI into business practices is slow going. By looking at recent census surveys of businesses, we found the level of AI use also varies widely by sector. For large sectors like transportation and warehousing, AI adoption hovered just above 2 percent. For finance and insurance, it was roughly 10 percent. Even in information technology—perhaps the most likely spot for generative AI to leave its mark—only 25 percent of businesses were using generative AI to produce goods and services.”

Most of the fear related to AI stems from automation of job tasks. Here are some statistics from OpenAI:

“In a widely referenced study, OpenAI estimated that 80 percent of the workforce has at least 10 percent of their tasks exposed to LLM-driven automation, and 19 percent of workers could have at least 50 percent of their tasks exposed. But jobs are more than individual tasks. They are a string of tasks assembled in a specific way. They involve emotional intelligence. Crude calculations of labor market exposure to AI have seemingly failed to account for the nuance of what jobs actually are, leading to an overstated risk of mass unemployment.”

AI is a wondrous technology, but it’s still infantile and stupid. Humans will adapt and continue to have jobs.

Whitney Grace, December 10, 2025

Sam AI-Man Is Not Impressing ZDNet

December 9, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

In the good old days of Ziff Communication, editorial and ad sales were separated. The “Chinese wall” seemed to work. It would be interesting to go back in time and let the editorial team from 1985 check out the write up “Stop Using ChatGPT for Everything: The AI Models I Use for Research, Coding, and More (and Which I Avoid).” The “everything” is one of those categorical affirmatives that often cause trouble for high school debaters or significant others arguing with a person who thinks a bit like a Silicon Valley technology person. Example: “I have to do everything around here.” Ever hear that?

Yes, granny. You say one thing, but it seems to me that you are getting your cupcakes from a commercial bakery. You cannot trust dinobabies when they say “I make everything” can you?

But the subtitle strikes me as even more exciting; to wit:

From GPT to Claude to Gemini, model names change fast, but use cases matter more. Here’s how I choose the best model for the task at hand.

This is the 2025 equivalent to a 1985 article about “Choosing Character Sets with EGA.” Peter Norton’s article from November 26, 1985, was mostly arcana, not too much in the opinion game. The cited “Stop Using ChatGPT for Everything” is quite different.

Here’s a passage I noted:

(Disclosure: Ziff Davis, ZDNET’s parent company, filed an April 2025 lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.)

And what about ChatGPT as a useful online service? Consider this statement:

However, when I do agentic coding, I’ve found that OpenAI’s Codex using GPT-5.1-Max and Claude Code using Opus 4.5 are astonishingly great. Agentic AI coding is when I hook up the AIs to my development environment, let the AIs read my entire codebase, and then do substantial, multi-step tasks. For example, I used Codex to write four WordPress plugin products for me in four days. Just recently, I’ve been using Claude Code with Opus 4.5 to build an entire complex and sophisticated iPhone app, which it helped me do in little sprints over the course of about half a month. I spent $200 for the month’s use of Codex and $100 for the month’s use of Claude Code. It does astonish me that Opus 4.5 did so poorly in the chatbot experience, but was a superstar in the agentic coding experience, but that’s part of why we’re looking at different models. AI vendors are still working out the kinks from this nascent technology.

But what about “everything” as in “stop using ChatGPT for everything”? Yeah, well, it is 2025.

And what about this passage? I quote:

Up until now, no other chatbot has been as broadly useful. However, Gemini 3 looks like it might give ChatGPT a run for its money. Gemini 3 has only been out for a week or so, which is why I don’t have enough experience to compare them. But, who knows, in six months this category might list Gemini 3 as the favorite model instead of GPT-5.1.

That “everything” still haunts me. It sure seems to me as if the author of the ZDNet article uses ChatGPT a great deal. By his own admission, he does not “have enough experience to compare them.” But, but, but (as Jack Benny used to say), the headline still blurts, “Stop using ChatGPT for everything!” Yeah, seems inconsistent to me. But, hey, I am a dinobaby.

I found this passage interesting as well:

Among the big names, I don’t use Perplexity, Copilot, or Grok. I know Perplexity also uses GPT-5.1, but it’s just never resonated with me. It’s known for search, but the few times I’ve tried some searches, its results have been meh. Also, I can’t stand the fact that you have to log in via email.

I guess these services suck as much as the ChatGPT system the author uses. Why? Yeah, log in method. That’s substantive stuff in AI land.

Observations:

- I don’t think this write up is output by AI or at least any AI system with which I am familiar

- I find the title and the text a bit out of step

- The categorical affirmative is logically loosey goosey.

Net net: Sigh.

Stephen E Arnold, December 9, 2025

Google Presents an Innovative Way to Say, “Generate Revenue”

December 9, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

One of my contacts sent me a link to an interesting document. Its title is “A Pragmatic Vision for Interpretability.” I am not sure about the provenance of the write up, but it strikes me as an output from legal, corporate, and wizards. First impression: Very lengthy. I estimate that it requires about 11,000 words to say, “Generate revenue.” My second impression: A weird blend of consulting speak and nervousness.

A group of Googlers involved in advanced smart software ideation get a phone call clarifying they have to hit revenue targets. No one looks too happy. The esteemed leader is on the conference room wall. He provides a North Star to the wandering wizards. Thanks, Venice.ai. Good enough, just like so much AI system output these days.

The write up is too long to meander through its numerous sections, arguments, and arm waving. I want to highlight three facets of the write up and leave it up to you to print this puppy out, read it on a delayed flight, and consider how different this document is from the no output approach Google used when it was absolutely dead solid confident that its search-ad business strategy would rule the world forever. Well, forever seems to have arrived for Googzilla. Hence, be pragmatic. This, in my experience, is McKinsey speak for hit your financial targets or hit the road.

First, consider this selected set of jargon:

Comparative advantage (maybe keep up with the other guys?)

Load-bearing beliefs

“Mech Interp” / “mechanistic interpretability” (as opposed to “classic” interp)

Method minimalism

North Star (is it the person on the wall in the cartoon or just revenue?)

Proxy task

SAE (maybe sparse autoencoders?)

Steering against evaluation awareness (maybe avoiding real world feedback?)

Suppression of eval-awareness (maybe real-world feedback?)

Time-box for advanced research

The document tries too hard to avoid saying, “Focus on stuff that makes money.” I think that, however, is what the word choice is trying to present in very fancy, quasi-baloney jingoism.

Second, take a look at the three sets of fingerprints in what strikes me as a committee-written document.

- Researchers want to just follow their ideas about smart software just as we have done at Google for many years

- Lawyers and art history majors who want to cover their tailfeathers when Gemini goes off the rails

- Google leadership who want money or at the very least research that leads to products.

I can see a group meeting virtually, in person, and in the trenches of a collaborative Google Doc until this masterpiece of management weirdness is given the green light for release. Google has become artful in make work, wordsmithing, and pretend reconciliation of the battles among the different factions, city states, and empires within Google. One can almost anticipate how the head of ad sales reacts to money pumped into data centers and research groups who speak a language familiar to Klingons.

Third, consider why Google felt compelled to crank out a tortured document to nail on the doors of an AI conference. When I interacted with Google over a number of years, I did not meet anyone reminding me of Martin Luther. Today, if I were to return to Shoreline Drive, I might encounter a number of deep fakes armed with digital hammers and fervid eyes. I think the Google wants to make sure that no more Loons and Waymos become the butt of stand up comedians on late night TV or (heaven forbid) TikTok. Think of the dead cat in the Mission and the dead puppy in what’s called (I think) the Western Addition. (I used to live in Berkeley, and I never paid much attention to the idiosyncratic names slapped on undifferentiable areas of the City by the Bay.)

I think that Google leadership seeks in this document:

- To tell everyone it is focusing on stuff that sort of works. The crazy software that is just like Sundar is not on the to do list

- To remind everyone at the Google that we have to pay for the big, crazy data centers in space, our own nuclear power plants, and the cost of the home brew AI chips. Ads alone are no longer going to be 24×7 money printing machines because of OpenAI

- To try to reduce the tension among the groups, cliques, and digital street gangs in the offices and the virtual spaces in which Googlers cogitate, nap, and use AI to be more efficient.

Net net: Save this document. It may become a historical artefact.

Stephen E Arnold, December 9, 2025

AI: Continuous Degradation

December 9, 2025

Many folks are unhappy with the flood of AI “tools” popping up unbidden. For example, writer Raghav Sethi at Make Use Of laments, “I’m Drowning in AI Features I Never Asked For and I Absolutely Hate It.” At first, Sethi was excited about the technology. Now, though, he just wishes the AI creep would stop. He writes:

“Somewhere along the way, tech companies forgot what made their products great in the first place. Every update now seems to revolve around AI, even if it means breaking what already worked. The focus isn’t on refining the experience anymore; it’s about finding new places to wedge in an AI assistant, a chatbot, or some vaguely ‘smart’ feature that adds little value to the people actually using it.”

Gemini is the author’s first example: He found it slower and less useful than the old Google Assistant, to which he returned. Never impressed by Apple’s Siri, he found Apple Intelligence made it even less useful. As for Microsoft, he is annoyed it wedges Copilot into Windows, every 365 app, and even the lock screen. Rather than a helpful tool, it is a constant distraction. Smaller firms also embrace the unfortunate trend. The maker of Sethi’s favorite browser, Arc, released its AI-based successor Dia. He asserts it “lost everything that made the original special.” He summarizes:

“At this point, AI isn’t even about improving products anymore. It’s a marketing checkbox companies use to convince shareholders they’re staying ahead in this artificial race. Whether it’s a feature nobody asked for or a chatbot no one uses, it’s all about being able to say ‘we have AI too.’ That constant push for relevance is exactly what’s ruining the products that used to feel polished and well-thought-out.”

And it does not stop with products, the post notes. It is also ruining “social” media. Sethi is more inclined to believe the dead Internet theory than he used to be. From Instagram to Reddit to X, platforms are filled with AI-generated, SEO-optimized drivel designed to make someone somewhere an easy buck. What used to connect us to other humans is now a colossal waste of time. Even Google Search, formerly a reliable way to find good information, now leads results with a confident AI summary that is often wrong.

The write-up goes on to remind us LLMs are built on the stolen work of human creators and that they are sopping up our data to build comprehensive profiles on us all. Both excellent points. (For anyone wishing to use AI without it reporting back to its corporate overlords, he points to this article on how to run an LLM on one’s own computer. The endeavor does require some beefy hardware, however.)

Sethi concludes with the wish companies would reconsider their rush to inject AI everywhere and focus on what actually makes their products work well for the user. One can hope.

Cynthia Murrell, December 9, 2025

ChatGPT: Smoked by GenX MBA Data

December 8, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

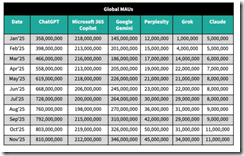

I saw this chart from Sensor Tower in several online articles. Examples include TechCrunch, LinkedIn, and a couple of others. Here’s the chart as presented by TechCrunch on December 5, 2025:

Yes, I know it is difficult to read. Complain to WordPress, not me, please.

The seven columns are labeled Date starting in January 2025. I am not sure if this is December 2024 data compiled in January 2025 or end of January 2025 data. Metadata would be helpful, but I am a dinobaby and this is a very GenX-type of Excel chart. The chart then presents what I think are mobile installs or some action related to the “event” captured when the Sensor Tower data receives a signal. I am not sure, and some remarks about how the data were collected would be helpful to a person disguised as a dinobaby. The column heads are not in alphabetical order. I assume the hassle of alphabetizing was too much work for whoever created the table. Here’s the order:

- ChatGPT

- Microsoft 365 Copilot

- Google Gemini

- Perplexity

- Grok

- Claude

The second thing I noticed was that the data do not reflect individual installs or uses. Thus, these data are of limited use to a dinobaby like me. Sure, I can see that ChatGPT’s growth slowed (if the numbers are on the money) and Gemini’s grew. But ChatGPT has a bigger base and it may be finding it more difficult to attract installs or events so the percent increase seems to shout, “Bad news, Sam AI-Man.”
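The base effect in that paragraph can be shown with a quick sketch. The figures below are invented for illustration and are not Sensor Tower numbers. The point is only that a service with a large base can post a small percentage gain while still adding more in absolute terms than a smaller rival with a flashy percentage.

```python
def percent_growth(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) * 100 / start


# Hypothetical event counts (in millions); NOT taken from the Sensor Tower table.
big_start, big_end = 800, 840      # large installed base
small_start, small_end = 100, 130  # smaller challenger

big_pct = percent_growth(big_start, big_end)
small_pct = percent_growth(small_start, small_end)

print(f"Large base: {big_pct:.1f}% growth, {big_end - big_start} million added")
print(f"Small base: {small_pct:.1f}% growth, {small_end - small_start} million added")
```

With these made-up inputs, the large service grows 5 percent and the small one 30 percent, yet the large one adds more events. A table that reports only percentages hides that.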

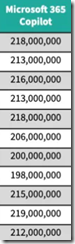

Then there is the issue of number of customers. We are now shifting from the impression some may have that these numbers represent individual humans to the fuzzy notion of app events. Why does this matter? Google and Microsoft have many more corporate and individual users than the other firms combined. If Google or Microsoft pushes or provides free access, those events will appeal to the user base and the number of “events” will jump. The data narrow Microsoft’s AI to Microsoft 365 Copilot. Google’s numbers are not narrowed. They may be, but there is no metadata to help me out. Here’s the Microsoft column:

As a result, the graph of the Microsoft 365 Copilot looks like this:

What’s going on from May to August 2025? I have no clue. Vacations maybe? Again that old fashioned metadata, footnotes, and some information about methodology would be helpful to a dinobaby. I mention the Microsoft data for one reason: None of the other AI systems listed in the Sensor Tower data table have this characteristic. Don’t users of ChatGPT, Google, et al, go on vacation? If one set of data for an important company has an anomaly, can one trust the other data? Those data are smooth.

When I look at the complete array of numbers, I expect to see more ones. There is some weird Statistics 101 “law” about digit frequency, and it seems to this dinobaby that it’s not being substantiated in the table. I can overlook how tidy the numbers are because why not round big numbers? It works for Fortune 1000 budgets and for many government agencies’ budgets.
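The digit frequency “law” is presumably Benford’s law, which says the leading digit d should turn up with probability log10(1 + 1/d), so roughly 30 percent of values should start with a one. Here is a minimal sketch of how one might check a column of figures against it. The sample values are made up for illustration and are not the Sensor Tower data. The function names are mine.

```python
import math
from collections import Counter


def benford_expected(digit: int) -> float:
    """Expected share of leading digit `digit` (1-9) under Benford's law."""
    return math.log10(1 + 1 / digit)


def leading_digit(value: float) -> int:
    """First non-zero digit of a non-zero number."""
    # Scientific notation always puts the leading digit first, e.g. '3.4e+06'.
    return int(f"{abs(value):.15e}"[0])


def first_digit_shares(values):
    """Observed share of each leading digit 1-9 in `values` (zeros are skipped)."""
    counts = Counter(leading_digit(v) for v in values if v != 0)
    total = sum(counts.values())
    return {d: counts.get(d, 0) / total for d in range(1, 10)}


# Hypothetical figures; NOT the numbers in the Sensor Tower table.
sample = [120, 135, 150, 210, 340, 560, 910, 1400, 2300]
observed = first_digit_shares(sample)

for d in range(1, 10):
    print(f"digit {d}: observed {observed[d]:.2f}, Benford {benford_expected(d):.2f}")
```

A caveat: Benford’s law tends to hold for data that span several orders of magnitude. A short table of rounded monthly figures may miss the pattern for innocent reasons, so a mismatch is a prompt for questions, not proof of anything.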

A person looking at these data will probably think “number of users.” Nope, number of events recorded by Sensor Tower. Some of the vendors can force or inject AI into a corporate, governmental, or individual user stream. Some “events” may be triggered by workflows that use multiple AI systems. There are probably a few people with too much time and no money sense paying for multiple services and using them to explore a single topic or area of inquiry; for example, what is the psychological make up of a GenX MBA who presents data that can be misinterpreted.

Plus, the AI systems are functionally different and probably not comparable using “event” data. For example, Copilot may reflect events in corporate document editing. The Google can slam AI into any of its multi-billion user, system, or partner activities. I am not sure about Claude (Anthropic) or Grok. What about Amazon? Nowhere to be found I assume. The Chinese LLMs? Nope. Mistral? Crickets.

Finally, should I raise the question of demographics? Ah, you say, “No.” Okay, I am easy. Forget demos; there aren’t any.

Please, check out the cited article. I want to wrap up by quoting one passage from the TechCrunch write up:

Gemini is also increasing its share of the overall AI chatbot market when compared across all top apps like ChatGPT, Copilot, Claude, Perplexity, and Grok. Over the past seven months (May-November 2025), Gemini increased its share of global monthly active users by three percentage points, the firm estimates.

This sounds like Sensor Tower talking.

Net net: I am not confident in GenX “event” data which seems to say, “ChatGPT is losing the AI race.” I may agree in part with this sentiment, but the data from Sensor Tower do not influence me. But marketing is marketing.

Stephen E Arnold, December 8, 2025

Clippy, How Is Copilot? Oh, Too Bad

December 8, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

In most of my jobs, rewards landed on my desk when I sold something. When the firms silly enough to hire me rolled out a product, I cannot remember one that failed. The sales professionals were the early warning system for many of our consulting firm’s clients. Management provided money to a product manager or R&D whiz with a great idea. Then a product or new service idea emerged, often at a company event. Some were modest, but others featured bells and whistles. One such roll out had a big name person who was a former adviser to several presidents. These firms were either lucky or well managed. Product dogs, diseased ferrets, and outright losers were identified early and the efforts redirected.

Two sales professionals realize that their prospects resist Microsoft’s agentic pawing. Mortgages must be paid. Sneakers must be purchased. Food has to be put on the table. Sales are needed, not push backs. Thanks, Venice.ai. Good enough.

But my employers were in tune with what their existing customer base wanted. Climbing a tall tree and going out on a limb were not common occurrences. Even Apple, which resides in a peculiar type of commercial bubble, recognizes a product that does not sell. A recent example is the itsy bitsy, teeny weenie mobile thingy. Apple bounced back with the Granny Scarf designed to hold any mobile phone. The thin and light model is not killed; it’s just not everywhere like the old reliable orange iPhone.

Sales professionals talk to prospects and customers. If something is not selling, the sales people report, “Problemo, boss.”

In the companies which employed me, the sales professionals knew what was coming and could mention it in appropriate terms to those in the target market. This happened before the product or service was in production or available to clients. My employers (Halliburton, Booz, Allen, and a couple of others held in high esteem) had the R&D, the market signals, the early warning system for bad ideas, and the refinement or improvement mechanism working in a reliable way.

I read “Microsoft Drops AI Sales Targets in Half after Salespeople Miss Their Quotas.” The headline suggested three things to me instantly:

- The pre-sales early warning radar system did not exist or it was broken

- The sales professionals said in numbers, “Boss, this Copilot AI stuff is not selling.”

- Microsoft committed billions of dollars and significant, expensive professional staff time on something that prospects and customers do not rush to write checks for, use, or tell their friends about as the next big thing.

The write up says:

… one US Azure sales unit set quotas for salespeople to increase customer spending on a product called Foundry, which helps customers develop AI applications, by 50 percent. Less than a fifth of salespeople in that unit met their Foundry sales growth targets. In July, Microsoft lowered those targets to roughly 25 percent growth for the current fiscal year. In another US Azure unit, most salespeople failed to meet an earlier quota to double Foundry sales, and Microsoft cut their quotas to 50 percent for the current fiscal year. The sales figures suggest enterprises aren’t yet willing to pay premium prices for these AI agent tools. And Microsoft’s Copilot itself has faced a brand preference challenge: Earlier this year, Bloomberg reported that Microsoft salespeople were having trouble selling Copilot to enterprises because many employees prefer ChatGPT instead.

Microsoft appears to have listened to the feedback. The adjustment, however, does not address the failure to implement the type of marketing probing process used by Halliburton and Booz, Allen: Microsoft implemented the “think it and it will become real” approach. The thinking in this case is that software can perform human work roles in a way that is equivalent to or better than a human’s execution.

I may be a dinobaby, but I figured out quickly that smart software has been for the last three years a utility. It is not quite useless, but it is not sufficiently robust to do the work that I do. Other people are on the same page with me.

My take away from the lower quotas is that Microsoft should have a rethink. The OpenAI bet, the AI acquisitions, the death march to put software that makes mistakes in applications millions use in quite limited ways, and the crazy publicity output to sell Copilot are sending Microsoft leadership both audio and visual alarms.

Plus, OpenAI has copied Google’s weird Red Alert. Since Microsoft has skin in the game with OpenAI, perhaps Microsoft should open its eyes and check out the beacons and listen to the klaxons ringing in Softieland sales meetings and social media discussions about Microsoft AI? Just a thought. (That Telegram virtual AI data center service looks quite promising to me. Telegram’s management is avoiding the Clippy-type error. Telegram may fail, but that outfit is paying GPU providers in TONcoin, not actual fiat currency. The good news is that MSFT can make Azure AI compute available to Telegram and get paid in TONcoin. Sounds like a plan to me.)

Stephen E Arnold, December 8, 2025

Telegram’s Cocoon AI Hooks Up with AlphaTON

December 5, 2025

[This post is a version of an alert I sent to some of the professionals for whom I have given lectures. It is possible that the entities identified in this short report will alter their messaging and delete their Telegram posts. However, the thrust of this announcement is directionally correct.]

Telegram, rapidly expanding into decentralized artificial intelligence, announced a deal with AlphaTON Capital Corp. The Telegram post revealed that AlphaTON would be a flagship infrastructure and financial partner. The announcement was posted to the Cocoon Group within hours of AlphaTON getting clear of U.S. SEC “baby shelf” financial restrictions. AlphaTON promptly launched a $420.69 million securities push. Telegram and AlphaTON either acted in a coincidental way or Pavel Durov moved to make clear his desire to build a smart, Telegram-anchored financial service.

AlphaTON, a Nasdaq microcap formerly known as Portage Biotech, rebranded in September 2025. The “new” AlphaTON claims to be deploying Nvidia B200 GPU clusters to support Cocoon, Telegram’s confidential-compute AI network. The company’s pivot from oncology to crypto-finance and AI infrastructure was sudden. Plus, AlphaTON’s CEO Brittany Kaiser (best known for Cambridge Analytica) has allegedly interacted with Russian political and business figures during earlier data-operations ventures. If the allegations are accurate, Ms. Kaiser has connections to Russia-linked influence and financial networks. Telegram is viewed by some organizations like Kucoin as a reliable operational platform for certain financial activities.

Telegram has positioned AlphaTON as a partner and developer in the Telegram ecosystem. Firms like Huione Guarantee allegedly used Telegram for financial maneuvers that resulted in criminal charges. Other alleged uses of the Telegram platform have included other illegal activities identified in the more than a dozen criminal charges for which Pavel Durov awaits trial in France. Telegram’s instant promotion of AlphaTON, combined with the firm’s new ability to raise hundreds of millions, points to a coordinated strategy to build an AI-enabled financial services layer using Cocoon’s VAIC or virtual artificial intelligence complex.

The message seems clear. Telegram is not merely launching a distributed AI compute service; it is enabling a low latency, secrecy enshrouded AI-crypto financial construct. Telegram and AlphaTON both see an opportunity to profit from a fusion of distributed AI, cross jurisdictional operation, and a financial pay off from transactions at scale. For me and my research team, the AlphaTON tie-up signals that Telegram’s next frontier may blend decentralized AI, speculative finance, and actors operating far from traditional regulatory guardrails.

In my monograph “Telegram Labyrinth” (available only to law enforcement, US intelligence officers, and cyber attorneys in the US), I explain that Telegram requires close monitoring and a new generation of intelware. Yesterday’s tools were not designed for what Telegram is deploying itself and with its partners. Thank you.

Stephen E Arnold, December 5, 2025, 10:34 am US Eastern time

AI Bubble? What Bubble? Bubble?

December 5, 2025

Another dinobaby original. If there is what passes for art, you bet your bippy, that I used smart software. I am a grandpa but not a Grandma Moses.

I read “JP Morgan Report: AI Investment Surge Backed by Fundamentals, No Bubble in Sight.” The “report” angle is interesting. It implies unbiased, objective information compiled and synthesized by informed individuals. The content, however, strikes me as a bit of fancy dancing.

Here’s what strikes me as the main point:

A recent JP Morgan report finds the current rally in artificial intelligence (AI) related investments to be justified and sustainable, with no evidence of a bubble forming at this stage.

Feel better now? I don’t. The report strikes me as bank marketing with a big dose of cooing sounds. You know, cooing like a mother to her month old baby. Does the mother make sense? Nope. The point is that warm cozy feeling that the cooing imparts. The mother knows she is doing what is necessary to reduce the likelihood of the baby making noises for sustained periods. The baby knows that mom’s heart is thudding along and the comfort speaks volumes.

Financial professionals in Manhattan enjoy the AI revolution. They know there is no bubble. I see bubbles (plural). Thanks, MidJourney. Good enough.

Sorry. The JP Morgan cooing is not working for me.

The write up says, quoting the estimable financial institution:

“The ingredients are certainly in place for a market bubble to form, but for now, at least, we believe the rally in AI-related investments is justified and sustainable. Capex is massive, and adoption is accelerating.”

What about this statement in the cited article?

JP Morgan contrasts the current AI investment environment to previous speculative cycles, noting the absence of cheap speculative capital or financial structures that artificially inflate prices. As AI investment continues, leverage may increase, but current AI spending is being driven by genuine earnings growth rather than assumptions of future returns.

After reading the “no bubble” argument stated three times, I think I understand.

Several observations:

- JP Morgan needed to make a statement that the AI data center thing, the depreciation issue, the power problem, and the potential for an innovation that derails the current LLM-type of processing are not big deals. These issues play no part in the non-bubble environment.

- The report is a rah rah for AI. Because there is no bubble, organizations should go forward and implement the current versions of smart software despite their proven “feature” of making up answers and failing to handle many routine human-performed tasks.

- The timing is designed to allow high net worth people a moment to reflect upon the wisdom of JP Morgan and consider moving money to the estimable financial institution for shepherding in what others think are effervescent moments.

My view: Consider the problems OpenAI has: [a] A need for something that knocks Googzilla off the sidewalk on Shoreline Drive and [b] more cash. Amazon, ever the consumer’s friend, is involved in making its own programmers use its smart software, not code cranked out by a non-Amazon service. Plus, Amazon is in the building mode, but it allegedly has government money to spend, a luxury some other firms are denied. Oracle is looking less like a world beater in databases and AI and more like a media-type outfit. Perplexity is probably perplexed because there are rumors that it may be struggling. Microsoft is facing some backlash because of [a] its push to make Copilot everyone’s friend and [b] the flawed updates to its vaunted Windows 11 software. Gee, why is FileManager not working? Let’s ask Copilot. On the other hand, let’s not.

Net net: JP Morgan is marketing too hard, and I am not sure it is resonating with me as unbiased and completely objective. As sales collateral, the report is good. As evidence there is no bubble, nope.

Stephen E Arnold, December 5, 2025