Smart Software: The DNA and Its DORK Sequence

October 22, 2025

![green-dino_thumb_thumb[3] green-dino_thumb_thumb[3]](https://www.arnoldit.com/wordpress/wp-content/uploads/2025/10/green-dino_thumb_thumb3_thumb.gif) This essay is the work of a dumb dinobaby. No smart software required.

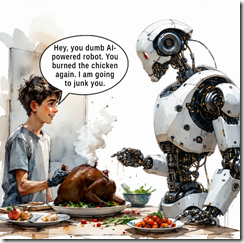

I love articles that “prove” something. This is a gem: “Study Proves Being Rude to AI Chatbots Gets Better Results Than Being Nice.” Of course, I believe everything I read online. This write up reports as actual factual:

A new study claims that being rude leads to more accurate results, so don’t be afraid to tell off your chatbot. Researchers at Pennsylvania State University found that “impolite prompts consistently outperform polite ones” when querying large language models such as ChatGPT.

My initial reaction is that I would much prefer providing my inputs about smart software directly to outfits creating these modern confections of a bunch of technologies and snake oil. How about a button on Microsoft Copilot, Google Gemini or whatever it is now, and the others in the Silicon Valley global domination triathlon of deception, money burning, and method recycling? This button would be labeled, “Provide feedback to leadership.” Think that will happen? Unlikely.

Thanks, Venice.ai, not good enough, you inept creation of egomaniacal wizards.

Smart YouTube and smart You.com were both dead for hours. Hey, no problemo. Want to provide feedback? Sure, just write “we care” at either firm. A wizard will jump right on the input.

The write up adds:

Okay, but why does being rude work? Turns out, the authors don’t know, but they have some theories.

Based on my experience with Silicon Valley type smart software outfits, I have an explanation. The majority of the leadership has a latent protein in their DNA. This DORK sequence ensures that arrogance, indifference to others, and boundless confidence take precedence over other characteristics; for example, an ethical compass aligned with social norms.

Software built by DORKs responds to dorkish behavior because the DORK sequence wakes up and actually attempts to function in a semi-reliable way.

The write up concludes with this gem:

The exact reason isn’t fully understood. Since language models don’t have feelings, the team believes the difference may come down to phrasing, though they admit “more investigation is needed.”

Well, that makes sense. No one is exactly sure how the black boxes churned out by the next big thing outfits work. Therefore, why being a dork to the model works remains a mystery. Can the DORK sequence be modified by CRISPR/Cas9? Is there funding the Pennsylvania State University experts can pursue? I sure hope so.

Stephen E Arnold, October 22, 2025

AI Service Industry: Titan or Titanic?

October 6, 2025

Venture capitalists believe they have a new recipe for success: Buy up managed-services providers and replace most of the staff with AI agents. So far, it seems to be working. (For the VCs, of course, not the human workers.) However, asserts TechCrunch, “The AI Services Transformation May Be Harder than VCs Think.” Reporter Connie Loizos throws cold water on investors’ hopes:

“But early warning signs suggest this whole services-industry metamorphosis may be more complicated than VCs anticipate. A recent study by researchers at Stanford Social Media Lab and BetterUp Labs that surveyed 1,150 full-time employees across industries found that 40% of those employees are having to shoulder more work because of what the researchers call ‘workslop’ — AI-generated work that appears polished but lacks substance, creating more work (and headaches) for colleagues. The trend is taking a toll on the organizations. Employees involved in the survey say they’re spending an average of nearly two hours dealing with each instance of workslop, including to first decipher it, then decide whether or not to send it back, and oftentimes just to fix it themselves. Based on those participants’ estimates of time spent, along with their self-reported salaries, the authors of the survey estimate that workslop carries an invisible tax of $186 per month per person. ‘For an organization of 10,000 workers, given the estimated prevalence of workslop . . . this yields over $9 million per year in lost productivity,’ they write in a new Harvard Business Review article.”

Surprise: compounding baloney produces more baloney. If companies implement the plan as designed, “workslop” will expand even as the humans who might catch it are sacked. But if firms keep on enough people to fix AI mistakes, they will not realize the promised profits. In that case, what is the point of the whole endeavor? Rather than upending an entire industry for no reason, maybe we should just leave service jobs to the humans that need them.
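The quoted arithmetic can be checked on the back of an envelope. Here is a minimal sketch in Python, assuming the $186 monthly tax applies only to the employees who run into workslop, and using the 40 percent figure from the passage as a stand-in for the prevalence number the quote elides. The function name and parameters are illustrative, not the researchers' own.

```python
def annual_workslop_cost(headcount, prevalence, monthly_cost_per_affected):
    """Estimate yearly lost productivity from workslop.

    headcount: total employees in the organization
    prevalence: assumed share of employees affected (0.0 to 1.0)
    monthly_cost_per_affected: dollars lost per affected employee per month
    """
    affected = headcount * prevalence
    return affected * monthly_cost_per_affected * 12


if __name__ == "__main__":
    # 10,000 workers and $186 per month come from the quoted passage;
    # the 0.40 prevalence is an assumption borrowed from the survey result.
    cost = annual_workslop_cost(10_000, 0.40, 186)
    print(f"Estimated annual cost: ${cost:,.0f}")
```

With those inputs the estimate lands a little under $9 million, in the same neighborhood as the “over $9 million” the authors report. A slightly higher prevalence would close the gap.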

Cynthia Murrell, October 6, 2025

Being Good: Irrelevant at This Time

September 29, 2025

Sadly I am a dinobaby and too old and stupid to use smart software to create really wonderful short blog posts.

I read an essay titled “Being Good Isn’t Enough.” The author seems sincere. He provides insight about how to combine knowledge to create greater knowledge value. These are not my terms. The jargon appears in “The Knowledge Value Revolution or a History of the Future” by Taichi Sakaiya. The book was published in Japan in 1985. I gave some talks shortly after the book was available. One of the individuals whom I met after one of my lectures at the Osaka Institute of Technology. I recommend the book because it expands on the concepts touched upon in the cited essay.

“Being Good Isn’t Enough” states:

The biggest gains come from combining disciplines. There are four that show up everywhere: technical skill, product thinking, project execution, and people skills. And the more senior you get, the more you’re expected to contribute to each.

Sakaiya includes this Japanese proverb:

As an infant, he was a prodigy. As a student, he was brilliant. But after 20 years, he was just another young man.

“Being Good Isn’t Enough” walks through the idea of identifying “your weakest discipline” and then adds:

work on that.

Sound advice. However, in today’s business environment in the US, I do not think this suggestion is particularly helpful; to wit:

Find a mentor, be a mentor. Lead a project, propose one. Do the work, present it. Create spaces for others to do the same. Do whatever it takes to get better…. But all of this requires maybe the most important thing of all: agency. It’s more powerful than smarts or credentials or luck. And the best part is you can literally just choose to be high-agency. High-agency people make things happen. Low-agency people wait. And if you want to progress, you can’t wait.

I think the advice is unlikely to “work” in the present world of work. It is calibrated as if it were 1970. Today the path forward depends on:

- Political connections

- Friends who can make introductions

- Former colleagues who can provide a soft recommendation in order to avoid HR issues

- Influence either inherited from a parent or other family member or fame

- Credentials in the form of a degree or a letter of acceptance from an institution perceived by the lender or possible employer as credible.

A skill or blended skills are less relevant at this time.

The obvious problem is that a person looking for a job has to be more than a bundle of knowledge value. For most people, Sakaiya’s and “Being Good’s” assertions are unlikely to lead to what most people want from work: Fulfillment, reward, and stability.

Stephen E Arnold, September 29, 2025

Can Human Managers Keep Up with AI-Assisted Coders? Sure, Sure

September 26, 2025

AI may have sped up the process of coding, but it cannot make other parts of a business match its velocity. Business Insider notes, “Andrew Ng Says the Real Bottleneck in AI Startups Isn’t Coding—It’s Product Management.” The former Google Brain engineer and current Stanford professor shared his thoughts on a recent episode of the "No Priors" podcast. Writer Lee Chong Ming tells us:

“In the past, a prototype might take three weeks to develop, so waiting another week for user feedback wasn’t a big deal. But today, when a prototype can be built in a single day, ‘if you have to wait a week for user feedback, that’s really painful,’ Ng said. That mismatch is forcing teams to make faster product decisions — and Ng said his teams are ‘increasingly relying on gut.’ The best product managers bring ‘deep customer empathy,’ he said. It’s not enough to crunch data on user behavior. They need to form a mental model of the ideal customer. It’s the ability to ‘synthesize lots of signals to really put yourself in the other person’s shoes to then very rapidly make product decisions,’ he added.”

Experienced humans matter. Who knew? But Google, for one, is getting rid of managers. This Xoogler suggests managers are important. Is this the reason he is no longer at Google?

Cynthia Murrell, September 26, 2025

UAE: Will It Become U-AI?

September 23, 2025

Written by an unteachable dinobaby. Live with it.

UAE is moving forward in smart software, not just crypto. “Industry Leading AI Reasoning for All” reports that the Institute of Foundation Models has “industry leading AI reasoning for all.” The news item reports:

Built on six pillars of innovation, K2 Think represents a new class of reasoning model. It employs long chain-of-thought supervised fine-tuning to strengthen logical depth, followed by reinforcement learning with verifiable rewards to sharpen accuracy on hard problems. Agentic planning allows the model to decompose complex challenges before reasoning through them, while test-time scaling techniques further boost adaptability.

I am not sure what the six pillars of innovation are, particularly after looking at some of the UAE’s crypto plays, but there is more. Here’s another passage which suggests that Intel and Nvidia may not be in the k2think.ai technology road map:

K2 Think will soon be available on Cerebras’ wafer-scale, inference-optimized compute platform, enabling researchers and innovators worldwide to push the boundaries of reasoning performance at lightning-fast speed. With speculative decoding optimized for Cerebras hardware, K2 Think will achieve unprecedented throughput of 2,000 tokens per second, making it both one of the fastest and most efficient reasoning systems in existence.

If you want to kick its tires (tAIres?), the system is available at k2think.ai and on Hugging Face. Oh, the write up quotes two people with interesting names: Eric Xing and Peng Xiao.

Stephen E Arnold, September 23, 2025

Innovation Is Like Gerbil Breeding: It Is Tough to Produce a Panda

September 8, 2025

Just a dinobaby sharing observations. No AI involved. My apologies to those who rely on it for their wisdom, knowledge, and insights.

The problem of innovation is a tough one. I remember getting a job from a top dog at the consulting firm silly enough to employ me. The task was to chase down the Forbes Magazine list of companies ordered by how much they spend on innovation. I recall that the goal was to create an “estimate” or what would be a “model” today of what a company of X size should be spending on “innovation.”

Do that today for an outfit like OpenAI or one of the other US efforts to deliver big money via the next big thing and the result is easy to express; namely, every available penny is spent trying to create something new. Yep, spend the cash innovating. Think it, and the “it” becomes real. Build “it,” and the “it” draws users with cash.

A recent and somewhat long essay plopped in my “Read file.” The article is titled “We’ve Lost the Plot with Smartphones.” (The write up requires signing up and / or paying for access.)

The main idea of the essay is that smartphones, once heralded as revolutionary devices for communication and convenience, have evolved into tools that undermine our attention and well-being. I agree. However, innovation may not fix the problem. In my view, the fix may be an interesting effort, but as long as there are gizmos, the status quo will return.

The essay suggests that the innovation arc of devices like a toaster or the mobile phone solves problems or adds obvious convenience to a user otherwise unfamiliar with the device. Like Steve Jobs suggested, users have to see and use a device. Words alone don’t do the job. Pushing deck chairs around a technology yacht does not add much to the value of the device. This is the “me too” approach to innovation or what is often called “featuritis.”

Several observations:

- Innovations often arise without warning, no matter what process is used

- The US is supporting “old” businesses, and other countries are pushing applied AI, which may be a better bet

- Big money innovation usually surfs on months, years, or decades of previous work. Once that previous work is exhausted, the brutal odds of innovation success kick in. A few winners will emerge from many losers.

One of the oddities is the difficulty of identifying a significant or substantive innovation. That seems to be as difficult to do as setting up a system to generate innovation. In short, technology innovation reminds me of gerbils. Start with a few and quickly have lots of gerbils. The problem is that you have gerbils and what you want is something different.

Good luck.

Stephen E Arnold, September 8, 2025

The HR Gap: First in Line, First Fooled

August 15, 2025

No AI. Just a dinobaby being a dinobaby.

Not long ago I spoke with a person who is a big time recruiter. I asked, “Have you encountered any fake applicants?” The response, “No, I don’t think so.”

That’s the problem. Whatever is happening in HR continuing education, the topic of deep fake spoof employees is not getting through. I am not sure there is meaningful “continuing education” for personnel professionals.

I mention this cloud of unknowing in one case example because I read “Cloud Breaches and Identity Hacks Explode in CrowdStrike’s Latest Threat Report.” The write up reports:

The report … highlights the increasingly strategic use of generative AI by adversaries. The North Korea-linked hacking group Famous Chollima emerged as the most generative AI-proficient actor, conducting more than 320 insider threat operations in the past year. Operatives from the group reportedly used AI tools to craft compelling resumes, generate real-time deepfakes for video interviews and automate technical work across multiple jobs.

My first job was at Nuclear Utilities Services (an outfit that, soon after I was hired, became a unit of Halliburton. Dick Cheney, Halliburton, remember?). One of the engineers came up to me after I gave a talk about machine indexing at what was called “Allerton House,” a conference center at the University of Illinois decades ago. The fellow liked my talk and asked me if my method could index technical content in English. I said, “Yes.” He said, “I will follow up next week.”

True to his word, the fellow called me and said, “I am changing planes at O’Hare on Thursday. Can you meet me at the airport to talk about a project?” I was teaching part time at Northern Illinois University and doing some administrative work for a little money. Simultaneously I was working on my PhD at the University of Illinois. I said, “Sure.” DeKalb, Illinois, was about an hour west of O’Hare. I drove to the airport, met the person whom I remember was James K. Rice, an expert in nuclear waste water, and talked about what I was doing to support my family, keep up with my studies, and do what 20 year olds do. That is to say, just try to survive.

I explained the indexing, the language analysis I did for the publisher of Psychology Today and Intellectual Digest magazines, and the newsletter I was publishing for high school and junior college teachers struggling to educate ill-prepared students. As a graduate student with a family, I explained that I had information and wanted to make it available to teachers facing a tough problem. I remember his comment, “You do this for almost nothing.” He had that right.

End of meeting. I forgot about nuclear and went back to my regular routine.

A month later I got a call from a person named Nancy who said, “Are you available to come to Washington, DC, to meet some people?” I figured out that this was a follow up to the meeting I had at O’Hare Airport. I went. Long story short: I dumped my PhD and went to work for what is generally unknown; that is, Halliburton is involved in things nuclear.

Why is this story from the 1970s relevant? The interview process did not involve any digital anything. I showed up. Two people I did not know pretended to care about my research work. I had no knowledge about nuclear other than when I went to grade school in Washington, DC, we had to go into the hall and cover our heads in case a nuclear bomb was dropped on the White House.

In the article “In Recruitment, an AI-on-AI War Is Rewriting the Hiring Playbook,” I learned:

“AI hasn’t broken hiring,” says Marija Marcenko, Head of Global Talent Acquisition at SaaS platform Semrush. “But it’s changed how we engage with candidates.”

The process followed for my first job did not involve anything but one-on-one interactions. There was not much chance of spoofing. I sat there, explained how I indexed sermons in Latin for a fellow named William Gillis, calculated reading complexity for the publisher, and how I gathered information about novel teaching methods. None of those activities had any relevance I could see to nuclear anything.

When I visited the company’s main DC office, it was in the technology corridor running from the Beltway to Germantown, Maryland. I remember new buildings and farm land. I met people who were like those in my PhD program except these individuals thought about radiation, nuclear effects modeling, and similar subjects.

One math PhD, who became my best friend, said, “You actually studied poetry in Latin?” I said, “Yep.” He said, “I never read a poem in my life and never will.” I recited a few lines of a Percy Bysshe Shelley poem. I think his written evaluation of his “interview” with me got me the job.

No computers. No fake anything. Just smart people listening, evaluating, and assessing.

Now systems can fool humans. In the hiring game, what makes a company is a collection of people, cultural information, and a desire to work with individuals who can contribute to the organization’s achieving goals.

The CrowdStrike article includes this paragraph:

Scattered Spider, which made headlines in 2024 when one of its key members was arrested in Spain, returned in 2025 with voice phishing and help desk social engineering that bypasses multifactor authentication protections to gain initial access.

Can hiring practices keep pace with the deceptions in use today? Tricks to get hired. Fakery to steal an organization’s secrets.

Nope. Few organizations have the time, money, or business processes to hire using such inefficient means as personal interactions, site visits, and written evaluations of a candidate.

Oh, in case you are wondering, I did not go back to finish my PhD. Now I know a little bit about nuclear stuff, however, and slightly more about smart software.

Stephen E Arnold, August 15, 2025

News Flash: Young Workers Are Not Happy. Who Knew?

August 12, 2025

No AI. Just a dinobaby being a dinobaby.

My newsfeed service pointed me to an academic paper in mid-July 2025. I am just catching up, and I thought I would document this write up from big thinkers at Dartmouth College and University College London: “Rising Young Worker Despair in the United States.”

The write up is unlikely to become a must-read for recent college graduates or youthful people vaporized from their employers’ payroll. The main point is that the work processes of hiring and plugging away are driving people crazy.

The authors point out this revelation:

In this paper we have confirmed that the mental health of the young in the United States has worsened rapidly over the last decade, as reported in multiple datasets. The deterioration in mental health is particularly acute among young women…. the relative prices of housing and childcare have risen. Student debt is high and expensive. The health of young adults has also deteriorated, as seen in increases in social isolation and obesity. Suicide rates of the young are rising. Moreover, Jean Twenge provides evidence that the work ethic itself among the young has plummeted. Some have even suggested the young are unhappy having BS jobs.

Several points jumped from the 38 page paper:

- The only reference to smart software or AI was in the word “despair”. This word appears 78 times in the document.

- Social media gets a few nods with eight references in the main paper and again in the endnotes. Isn’t social media a significant factor? My question is, “What’s the connection between social media and the mental states of the sample?”

- YouTube is chock full of first person accounts of job despair. A good example is Dari Step’s video “This Job Hunt Is Breaking Me and Even California Can’t Fix It Though It Tries.” One can feel the inner turmoil of this person. The video runs 23 minutes and you can find it (as of August 4, 2025) at this link: https://www.youtube.com/watch?v=SxPbluOvNs8&t=187s&pp=ygUNZGVtaSBqb2IgaHVudA%3D%3D. A “study” is one thing with numbers and references to hump curves. A first-person approach adds a bit of sizzle in my opinion.

A few observations seem warranted:

- The US social system is cranking out people who are likely to be challenging for managers. I am not sure the get-tough approach based on data-centric performance methods will be productive over time

- Whatever is happening in “education” is not preparing young people and recent graduates to support themselves with old-fashioned jobs. Maybe most of these people will become AI entrepreneurs, but I have some doubts about success rates

- Will the National Bureau of Economic Research pick up the slack for the disarray that seems to be swirling through the Bureau of Labor Statistics as I write this on August 4, 2025?

Stephen E Arnold, August 12, 2025

An Author Who Will Not Be Hired by an AI Outfit. Period.

July 29, 2025

This blog post is the work of an authentic dinobaby. Sorry. No smart software can help this reptilian thinker.

I read an article / essay titled in English “The Bewildering Phenomenon of Declining Quality.” I found the examples in the article interesting. A couple like the poke at “fast fashion” have become tropes. Others, like the comments about customer service today, were insightful. Here’s an example of a comment I noted:

José Francisco Rodríguez, president of the Spanish Association of Customer Relations Experts, admits that a lack of digital skills can be particularly frustrating for older adults, who perceive that the quality of customer service has deteriorated due to automation. However, Rodríguez argues that, generally speaking, automation does improve customer service. Furthermore, he strongly rejects the idea that companies are seeking to cut costs with this technology: “Artificial intelligence does not save money or personnel,” he states. “The initial investment in technology is extremely high, and the benefits remain practically the same. We have not detected any job losses in the sector either.”

I know that the motivation for dumping humans in customer support comes from [a] the extra work required to manage humans, [b] the escalating costs of health care and other “benefits,” and [c] the black hole of costs that burn cash because customers want help, returns, and special treatment. Software robots are the answer.

The write up’s comments about smart software are also interesting. Here’s an example of a passage I circled:

A 2020 analysis by Fakespot of 720 million Amazon reviews revealed that approximately 42% were unreliable or fake. This means that almost half of the reviews we consult before purchasing a product online may have been generated by robots, whose purpose is to either encourage or discourage purchases, depending on who programmed them. Artificial intelligence itself could deteriorate if no action is taken. In 2024, bot activity accounted for almost half of internet traffic. This poses a serious problem: language models are trained with data pulled from the web. When these models begin to be fed with information they themselves have generated, it leads to a so-called “model collapse.”

What surprised me is the problem, specifically:

a truly good product contributes something useful to society. It’s linked to ethics, effort, and commitment.

One question: How does one inculcate these words into societal behavior?

One possible answer: Skynet.

Stephen E Arnold, July 29, 2025

A Security Issue? What Security Issue? Security? It Is Just a Normal Business Process.

July 23, 2025

Just a dinobaby working the old-fashioned way, no smart software.

I zipped through a write up called “A Little-Known Microsoft Program Could Expose the Defense Department to Chinese Hackers.” The word program does not refer to Teams or Word, but to a business process. If you are into government procurement, contractor oversight, and the exciting world of inspectors general, you will want to read the 4000 word plus write up.

Here’s a passage I found interesting:

Microsoft is using engineers in China to help maintain the Defense Department’s computer systems — with minimal supervision by U.S. personnel — leaving some of the nation’s most sensitive data vulnerable to hacking from its leading cyber adversary…

The balance of the cited article explains what is going on with a business process implemented by Microsoft as part of a government contract. There are lots of quotes, insider jargon like “digital escort,” and suggestions that the whole approach is (how can I summarize it?) ill advised, maybe stupid.

Several observations:

- Someone should purchase a couple of hundred copies of Apple in China by Patrick McGee, make it required reading, and then hold some informal discussions. These can be modeled on what happens in the seventh grade; for example, “What did you learn about China’s approach to information gathering?”

- A hollowed out government creates a dependence on third-parties. These vendors do not explain how outsourcing works. Thus, mismatches exist between government executives’ assumptions and how third-party contractors actually fulfill the contract.

- Weaknesses in procurement, oversight, and continuous monitoring by auditors encourage short cuts. These are not issues that have arisen in the last day or so. These are institutional and vendor procedures that have existed for decades.

Net net: My view is that some problems are simply not easily resolved. It is interesting to read about security lapses caused by back office and legal processes.

Stephen E Arnold, July 23, 2025