More Push Back Against US Wild West Tech

September 12, 2024

I spotted another example of a European nation state expressing some concern with American high-technology companies. There is no wind-blown corral on Leidsestraat. No Sergio Leone music creeps out the observers. What dominates the scene is a judicial judgment firing a US$35 million fine at Clearview AI. The company has a database of faces, and the information is licensed to law enforcement agencies. What’s interesting is that Clearview does not do business in the Netherlands; nevertheless, the European Union’s data protection act, according to Dutch authorities, has been violated. Ergo: Pay up.

“The Dutch Are Having None of Clearview AI Harvesting Your Photos” reports:

“Following investigation, the DPA confirmed that photos of Dutch citizens are included in the database. It also found that Clearview is accountable for two GDPR breaches. The first is the collection and use of photos….The second is the lack of transparency. According to the DPA, the startup doesn’t offer sufficient information to individuals whose photos are used, nor does it provide access to which data the company has about them.”

Clearview is apparently unhappy with the judgment.

Several observations:

First, the decision is part of what might be called US technology pushback. The Wild West approach to user privacy has to get out of Dodge.

Second, Clearview may be on the receiving end of more fines. The charges may appear to be inappropriate because Clearview does not operate in the Netherlands. Other countries may decide to go after the company too.

Third, the Dutch action may be the first of many actions against US high-technology companies.

Net net: If the US won’t curtail the Wild West activities of its technology-centric companies, the Dutch will.

Stephen E Arnold, September 12, 2024

Telcos Lobby to Eliminate Consumer Protection

September 12, 2024

Now this sounds like a promising plan for the telcos. We learn from TechDirt, “Big Telecom Asks the Corrupt Supreme Court to Declare All State and Federal Broadband Consumer Protection Illegal. They Might Get their Wish.” Companies like AT&T and Comcast have been persuading right-leaning courts, including SCOTUS, to side with them against net neutrality rules and broadband protections generally. To make matters worse, the FCC’s consumer-protection authority was hollowed out during the Trump administration.

Fortunately, states have the authority to step in and act when the federal government does not. But that could soon change. Writer Karl Bode explains:

“For every state whose legislature telecoms have completely captured (Arkansas, Missouri, Tennessee), there’s several that have, often imperfectly, tried to protect broadband consumers, either in the form of (California, Oregon, Washington, Maine), crackdowns on lies about speeds or prices (Arizona, Indiana, Michigan), or requiring affordable low income broadband (New York). In 2021 at the peak of COVID problems, New York passed a law mandating that heavily taxpayer subsidized telecoms provide a relatively slow (25 Mbps), $15 broadband tier only for low-income families that qualified. ISPs have sued (unsuccessfully so far) to kill the law, which was upheld last April by the US Court of Appeals for the 2nd Circuit, reversing a 2021 District Court ruling. … Telecoms like AT&T are frightened of states doing their jobs to protect consumers and market competition from their bad behavior. So a group of telecom trade groups this week petitioned the Supreme Court with a very specific ask. They want the court to first destroy FCC broadband consumer protection oversight and net neutrality, then kill New York’s effort, in that precise order, in two different cases.”

The companies argue that, if we want consumer broadband protections, it is up to Congress to pass specific legislation that provides them. The same Congress they reportedly lobby with about $320,000 daily. This is why we cannot have nice things. Bode believes the telcos are likely to get their way. He also warns the Chevron decision means other industries are sure to follow suit, meaning an end to consumer protections in every sphere: Banking, food safety, pollution, worker safeguards… the list goes on. Is this what it means to make the nation great?

Cynthia Murrell, September 12, 2024

Preligens Is Safran.ai

September 9, 2024

Preligens, a French AI and specialized software company, is now part of Safran Electronics & Defense, which is a unit of the Safran Group. I spotted a report in Aerotime. “Safran Accelerates AI Development with $243M Purchase of French-Firm Preligens” reported on September 2, 2024. The report quotes principals to the deal as saying:

“Joining Safran marks a new stage in Preligens’ development. We’re proud to be helping create a world-class AI center of expertise for one of the flagships of French industry. The many synergies with Safran will enable us to develop new AI product lines and accelerate our international expansion, which is excellent news for our business and our people,” Jean-Yves Courtois, CEO of Preligens, said. The CEO of Safran Electronics & Defense, Franck Saudo, said that he was “delighted” to welcome Preligens to the company.

The acquisition does not just make Mr. Saudo happy. The French military, a number of European customers, and the backers of Preligens are thrilled as well. In my lectures about specialized software companies, I like to call attention to this firm. It illustrates that technology innovation is not located in one country. Furthermore, it underscores the strong educational system in France. When I first learned about Preligens, one rumor I heard was that one of the US government entities wanted to “invest” in the company. For a variety of reasons, the deal went no place faster than a bus speeding toward La Madeleine. If you spot me at a conference, you can ask about French technology firms and US government processes. I have some first hand knowledge starting with “American fries in a Congressional lunch facility.”

Preligens is important for three reasons:

- The firm developed an AI platform; that is, the “smart software” is not an afterthought which contrasts sharply with the spray paint approach to AI upon which some specialized software companies have been relying

- The smart software outputs identification data; for example, a processed image can show an aircraft. The Preligens system identifies the aircraft by type

- The user of the Preligens system can use time analyses of imagery to draw conclusions. Here’s a hypothetical because the actual example is not appropriate for a free blog written by a dinobaby. Imagine a service van driving in front of an embassy in Paris. The van makes a pass every three hours for two consecutive days. The Preligens system can “notice” this and alert an operator.
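The hypothetical in the last bullet can be sketched in a few lines of Python. This is only an illustration of flagging a regular revisit pattern from timestamped sightings. The function name `looks_periodic`, the 15-minute tolerance, the minimum pass count, and the made-up timestamps are all assumptions; nothing here reflects how the Preligens system actually works.

```python
from datetime import datetime, timedelta


def looks_periodic(sightings, expected_gap, tolerance, min_passes=4):
    """Return True when consecutive sightings are spaced by roughly expected_gap.

    Hypothetical helper for illustration only.
    """
    ordered = sorted(sightings)
    if len(ordered) < min_passes:
        return False
    # Time between each sighting and the next one.
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return all(abs(gap - expected_gap) <= tolerance for gap in gaps)


# Invented data: a van seen about every three hours over two days,
# with a few minutes of jitter on each pass.
start = datetime(2024, 9, 1, 6, 0)
jitter_minutes = (-4, 2, 5, 0)
van_passes = [
    start + timedelta(hours=3 * i, minutes=jitter_minutes[i % 4])
    for i in range(16)
]

alert = looks_periodic(van_passes, timedelta(hours=3), timedelta(minutes=15))
print("alert operator" if alert else "nothing unusual")
```

A production system would have to cope with missed sightings and misidentified vehicles; this sketch assumes every pass is observed and correctly matched to the same van.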

I will continue to monitor the system which will be doing business with selected entities under the name Safran.ai.

Stephen E Arnold, September 9, 2024

Is Open Source Doomed?

September 6, 2024

Open source cheerleaders may need to find a new team to root for. Web developer and blogger Baldur Bjarnason describes “The Slow Evaporation of the Free/Open Source Surplus.” He notes he is joining a conversation begun by Tara Tarakiyee with the post, “Is the Open Source Bubble about to Burst?” and continued by Ben Werdmuller.

Bjarnason begins by specifying what has made open source software possible up until now: surpluses in both industry (high profit margins) and labor (well-paid coders with plenty of free time). Now, however, both surpluses are drying up. The post lists several reasons for this. First, interest rates remain high. Next, investment dollars are going to AI, which “doesn’t really do real open source.” There were also the waves of tech layoffs and cost-cutting after post-pandemic overspending. Severe burnout from a thankless task does not help. We are reminded:

“Very few FOSS projects are lucky enough to have grown a sustainable and supportive community. Most of the time, it seems to be a never-ending parade of angry demands with very little reward.”

Good point. A few other factors, Bjarnason states, make organizations less likely to invest in open source:

- “Why compete with AWS or similar services that will offer your own OSS projects at a dramatically lower price?

- Why subsidise projects of little to no strategic value that contribute anything meaningfully to the bottom-line?

- Why spend time on OSS when other work is likely to have higher ROI?

- Why give your work away to an industry that treats you as disposable?”

Finally, Bjarnason suspects even users are abandoning open source. One factor: developers who increasingly reach for AI generated code instead of searching for related open source projects. Ironically, those LLMs were trained on open source software in the first place. The post concludes:

“Best case scenario, seems to me, is that Free and Open Source Software enters a period of decline. After all, that’s generally what happens to complex systems with less investment. Worst case scenario is a vicious cycle leading to a collapse:

- Declining surplus and burnout leads to maintainers increasingly stepping back from their projects.

- Many of these projects either bitrot serious bugs or get taken over by malicious actors who are highly motivated because they can’t rely on pervasive memory bugs anymore for exploits.

- OSS increasingly gets a reputation (deserved or not) for being unsafe and unreliable.

- That decline in users leads to even more maintainers stepping back.”

Bjarnason notes it is possible some parts of the Open Source ecosystem will not crash and burn. Overall, though, the outlook seems bleak.

Cynthia Murrell, September 6, 2024

Google Synthetic Content Scaffolding

September 3, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Google posted what I think is an important technical paper on the arXiv service. The write up is “Towards Realistic Synthetic User-Generated Content: A Scaffolding Approach to Generating Online Discussions.” The paper has six authors and presumably has the grade of “A”, a mark not awarded to the stochastic parrot write up about Google-type smart software.

For several years, Google has been exploring ways to make software that would produce content suitable for different use cases. One of these has been an effort to use transformer and other technology to produce synthetic data. The idea is that a set of real data is mimicked by AI so that “real” data does not have to be acquired, intercepted, captured, or scraped from systems in the real-time, highly litigious real world. I am not going to slog through the history of smart software and the research and application of synthetic data. If you are curious, check out Snorkel and the work of the Stanford Artificial Intelligence Lab or SAIL.

The paper I referenced above illustrates that Google is “close” to having a system which can generate allegedly realistic and good enough outputs to simulate the interaction of actual human beings in an online discussion group. I urge you to read the paper, not just the abstract.

Consider this diagram (which I know is impossible to read in this blog format so you will need the PDF of the cited write up):

The important point is that the process for creating synthetic “human” online discussions requires a series of steps. Notice that the final step is “fine tuned.” Why is this important? Most smart software is “tuned” or “calibrated” so that the signals generated by a non-synthetic content set are made to be “close enough” to the synthetic content set. In simpler terms, smart software is steered or shaped to match signals. When the match is “good enough,” the smart software is good enough to be deployed either for a test, a research project, or some use case.

Most of the AI write ups employ steering, directing, massaging, or weaponizing (yes, weaponizing) outputs to achieve an objective. Many jobs will be replaced or supplemented with AI. But the jobs for specialists who can curve fit smart software components to produce “good enough” content to achieve a goal or objective will remain in demand for the foreseeable future.

The paper states in its conclusion:

While these results are promising, this work represents an initial attempt at synthetic discussion thread generation, and there remain numerous avenues for future research. This includes potentially identifying other ways to explicitly encode thread structure, which proved particularly valuable in our results, on top of determining optimal approaches for designing prompts and both the number and type of examples used.

The write up is a preliminary report. It takes months to get data and approvals for this type of public document. How far has Google come between the idea to write up results and this document becoming available on August 15, 2024? My hunch is that Google has come a long way.

What’s the use case for this project? I will let younger, more optimistic minds answer this question. I am a dinobaby, and I have been around long enough to know a potent tool when I encounter one.

Stephen E Arnold, September 3, 2024

Consensus: A Gen AI Search Fed on Research, not the Wild Wild Web

September 3, 2024

How does one make an AI search tool that is actually reliable? Maybe start by supplying it with only peer-reviewed papers instead of the whole Internet. Fast Company sings the praises of Consensus in, “Google Who? This New Service Actually Gets AI Search Right.” Writer JR Raphael begins by describing why most AI-powered search engines, including Google, are terrible:

“The problem with most generative AI search services, at the simplest possible level, is that they have no idea what they’re even telling you. By their very nature, the systems that power services like ChatGPT and Gemini simply look at patterns in language without understanding the actual context. And since they include all sorts of random internet rubbish within their source materials, you never know if or how much you can actually trust the info they give you.”

Yep, that pretty much sums it up. So, like us, Raphael was skeptical when he learned of yet another attempt to bring generative AI to search. Once he tried the easy-to-use Consensus, however, he was convinced. He writes:

“In the blink of an eye, Consensus will consult over 200 million scientific research papers and then serve up an ocean of answers for you—with clear context, citations, and even a simple ‘consensus meter’ to show you how much the results vary (because here in the real world, not everything has a simple black-and-white answer!). You can dig deeper into any individual result, too, with helpful features like summarized overviews as well as on-the-fly analyses of each cited study’s quality. Some questions will inevitably result in answers that are more complex than others, but the service does a decent job of trying to simplify as much as possible and put its info into plain English. Consensus provides helpful context on the reliability of every report it mentions.”

See the post for more on using the web-based app, including a few screenshots. Raphael notes that, if one does not have a specific question in mind, the site has long lists of its top answers for curious users to explore. The basic service is free to search with no query cap, but creators hope to entice us with an $8.99/month premium plan. Of course, this service is not going to help with every type of search. But if the subject is worthy of academic research, Consensus should have the (correct) answers.

Cynthia Murrell, September 3, 2024

Elastic N.V. Faces a New Search Challenge

September 2, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Elastic N.V. and Shay Banon are what I call search survivors. Gone are Autonomy (mostly), Delphis, Exalead, Fast Search & Transfer (mostly), Vivisimo, and dozens upon dozens of companies who sought to put an organization’s information at an employee’s fingertips. The marketing lingo of these and other now-defunct enterprise search vendors is surprisingly timely. One can copy and paste chunks of Autonomy’s white papers into the “OpenAI ChatGPT search is coming” articles and few would notice that the assertions and even the word choice were more than 40 years old.

Elastic N.V. survived. It rose from a failed search system called Compass. Elastic N.V. recycled the Lucene libraries, released the open source Elasticsearch, and did an IPO. Some people made a lot of money. The question is, “Will that continue?”

I noted the Silicon Angle article “Elastic Shares Plunge 25% on Lower Revenue Projections Amid Slower Customer Commitments.” That write up says:

In its earnings release, Chief Executive Officer Ash Kulkarni started positively, noting that the results in the quarter were solid and outperformed previous guidance, but then comes the catch and the reason why Elastic stock is down so heavily after hours. “We had a slower start to the year with the volume of customer commitments impacted by segmentation changes that we made at the beginning of the year, which are taking longer than expected to settle,” Kulkarni wrote. “We have been taking steps to address this, but it will impact our revenue this year.” With that warning, Elastic said that it expects fiscal second-quarter adjusted earnings per share of 37 to 39 cents on revenue of $353 million to $355 million. The earnings per share forecast was ahead of the 34 cents expected by analysts, but revenue fell short of an expected $360.8 million. It was a similar story for Elastic’s full-year outlook, with the company forecasting earnings per share of $1.52 to $1.56 on revenue of $1.436 billion to $1.444 billion. The earnings per share outlook was ahead of an expected $1.42, but like the second quarter outlook, revenue fell short, as analysts had expected $1.478 billion.
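The size of the shortfall is easier to see with a little arithmetic. The sketch below takes the guidance ranges and analyst expectations from the quoted passage and compares the midpoint of each range with the consensus figure. Using the midpoint is my assumption, not something the Silicon Angle article does, and the helper names are made up for illustration.

```python
def midpoint(low, high):
    """Middle of a guidance range."""
    return (low + high) / 2


def pct_vs_consensus(low, high, consensus):
    """Percent by which the guidance midpoint sits above (+) or below (-) consensus."""
    return (midpoint(low, high) - consensus) / consensus * 100


# Figures from the quoted article; revenue in millions of US dollars, EPS in dollars.
guidance = {
    "Q2 revenue": (353.0, 355.0, 360.8),
    "Q2 EPS": (0.37, 0.39, 0.34),
    "Full-year revenue": (1436.0, 1444.0, 1478.0),
    "Full-year EPS": (1.52, 1.56, 1.42),
}

for label, (low, high, consensus) in guidance.items():
    gap = pct_vs_consensus(low, high, consensus)
    print(f"{label}: midpoint {midpoint(low, high):.2f} vs consensus {consensus:.2f} ({gap:+.1f}%)")
```

On these numbers the revenue midpoints land roughly 1.9 percent (second quarter) and 2.6 percent (full year) below what analysts expected, while the earnings per share midpoints sit above consensus. A miss of a couple of percentage points on revenue was enough to go with a 25 percent drop in the share price.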

Elastic N.V. makes money via service and for-fee extras. I want to point out that the $300 million or so revenue numbers are good. Elastic N.V. has figured out a business model that has not led to [a] fiddling the books, [b] hunting for a buyer as customers complain about problems with the search software, [c] sources of financing raging about cash burn and lousy revenue, [d] government investigators poking around for tax and other financial irregularities, [e] running costs beyond the reach of the licensee, or [f] a system that simply does not search or retrieve what the user wanted or expected.

Elastic N.V. and its management team may have a challenge to overcome. Thanks, OpenAI, the MSFT Copilot thing crashed today.

So what’s the fix?

A partial answer appears in the Elastic N.V. blog post titled “Elasticsearch Is Open Source, Again.” The company states:

The tl;dr is that we will be adding AGPL as another license option next to ELv2 and SSPL in the coming weeks. We never stopped believing and behaving like an open source community after we changed the license. But being able to use the term Open Source, by using AGPL, an OSI approved license, removes any questions, or fud, people might have.

Without slogging through the confusion between what Elastic N.V. sells, the open source version of Elasticsearch, the dust up with Amazon over its really original approach to search inspired by Elasticsearch, Lucid Imagination’s innovation, and the creaking edifice of A9, Elastic N.V. has released Elasticsearch under an additional open source license. I think that means one can use the software and not pay Elastic N.V. until additional services are needed. In my experience, most enterprise search systems regardless of how they are explained need the “owner” of the system to lend a hand. Contrary to the belief that smart software can do enterprise search right now, there are some hurdles to get over.

Will “going open source again” work?

Let me offer several observations based on my experience with enterprise search and retrieval which reaches back to the days of punch cards and systems which used wooden rods to “pull” cards with a wanted tag (index term):

- When an enterprise search system loses revenue momentum, the fix is to acquire companies in an adjacent search space and use that revenue to bolster the sales prospects for upsells.

- The company with the downturn gilds the lily and seeks a buyer. One example was the sale of Exalead to Dassault Systèmes which calculated it was more economical to buy a vendor than to keep paying its then current supplier which I think was Autonomy, but I am not sure. Fast Search & Transfer pulled off this type of “exit” as some of the company’s activities were under scrutiny.

- The search vendor can pivot from doing “search” and morph into a business intelligence system. (By the way, that did not work for Grok.)

- The company disappears. One example is Entopia. Poof. Gone.

I hope Elastic N.V. thrives. I hope the “new” open source play works. Search, whether the enterprise or Web variety, is far from a solved problem. People believe they have the answer. Others believe them and license the “new” solution. The reality is that finding information is a difficult challenge. Let’s hope the “downturn” and “negativism” go away.

Stephen E Arnold, September 2, 2024

The Seattle Syndrome: Definitely Debilitating

August 30, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I think the film “Sleepless in Seattle” included dialog like this:

“What do they call it when everything intersects?

The Bermuda Triangle.”

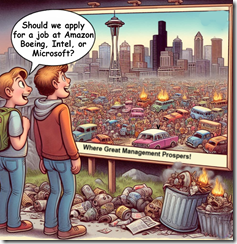

Seattle has Boeing. The company is in the news not just for doors falling off its aircraft. The outfit has stranded two people in earth orbit and has to let Elon Musk bring them back to earth. And Seattle has Amazon, an outfit that stands behind the products it sells. And I have to include Intel Labs, not too far from the University of Washington, which is famous in its own right for many things.

Two job seekers discuss future opportunities in some of Seattle and environs’ most well-known enterprises. The image of the city seems a bit dark. Thanks, MSFT Copilot. Are you having some dark thoughts about the area, its management talent pool, and its commitment to ethical business activity? That’s a lot of burning cars, but whatever.

Is Seattle a Bermuda Triangle for large companies?

This question invites another; specifically, “Is Microsoft entering Seattle’s Bermuda Triangle?”

The giant outfit has entered a deal with the interesting specialized software and consulting company Palantir Technologies Inc. This firm has a history of ups and downs since its founding 21 years ago. Microsoft has committed to smart software from OpenAI and other outfits. Artificial intelligence will be “in” everything from the Azure Cloud to Windows. Despite concerns about privacy, Microsoft wants each Windows user’s machine to keep screenshots of what the user “does” on that computer.

Microsoft seems to be navigating the Seattle Bermuda Triangle quite nicely. No hints of a flash disaster like the sinking of the sailing yacht Bayesian. Who could have predicted that? (That’s a reminder that fancy math does not deliver 1.000000 outputs on a consistent basis.)

Back to Seattle. I don’t think failure or extreme stress is due to the water. The weather, maybe? I don’t think it is the city government. It is probably not the multi-faceted start up community nor the distinctive vocal tones of its most high profile podcasters.

Why is Seattle emerging as a Bermuda Triangle for certain firms? What forces are intersecting? My observations are:

- Seattle’s business climate is a precursor of broader management issues. I think it is like the pigeons that Greeks examined for clues about their future.

- The individuals who work at Boeing-type outfits go along with business processes modified incrementally to ignore issues. The mental orientation of those employed is either malleable or indifferent to downstream issues. For example, Windows update killed printing or some other function. The response strikes me as “meh.”

- The management philosophy disconnects from users and focuses on delivering financial results. Those big houses come at a cost. The payoff is personal. The cultural impacts are not on the radar. Hey, those quantum Horse Ridge things make good PR. What about the new desktop processors? Just great.

Net net: I think Seattle is a city playing an important role in defining how businesses operate in 2024 and beyond. I wish I was kidding. But I am bedeviled by reminders of a space craft which issues one-way tickets, software glitches, and products which seem to vary from the online images and reviews. (Maybe it is the water? Bermuda Triangle water?)

Stephen E Arnold, August 30, 2024

Online Sports Gambling: Some Negatives Have Been Identified by Brilliant Researchers

August 29, 2024

This essay is the work of a dumb dinobaby. No smart software required.

People love gambling, especially when they’re betting on the results of sports. Online has made sports betting very easy and fun. Unfortunately some people who bet on sports are addicted to the activity. Business Insider reveals the underbelly of online gambling and paints a familiar picture of addiction: “It’s Official: Legalized Sports Betting Is Destroying Young Men’s Financial Futures.” The University of California, Los Angeles shared a working paper about the negative effects of legalized sports gambling:

“…takes a look at what’s happened to consumer financial health in the 38 states that have greenlighted sports betting since the Supreme Court in 2018 struck down a federal law prohibiting it. The findings are, well, rough. The researchers found that the average credit score in states that legalized any form of sports gambling decreased by 0.3% after about four years and that the negative impact was stronger where online sports gambling is allowed, with credit scores dipping in those areas by 1%. They also found an 8% increase in debt-collection amounts and a 28% increase in bankruptcies where online sports betting was given the go-ahead. By their estimation, that translates to about 100,000 extra bankruptcies each year in the states that have legalized sports betting. The number of people who fell dangerously behind on their car loans went up, too. Oddly enough, credit-card delinquencies fell, but the researchers believe that’s because banks wind up lowering credit limits to try to compensate for the rise in risky consumer behavior.”

The researchers discovered that legalized gambling leads to more gambling addictions. They also found that a person who lives near a casino or comes from a poor region is more prone to gambling. This isn’t anything new! The paper restates information people have known for centuries about gambling and other addictions: damaged finances, destroyed relationships, job loss, increases in illegal activity, etc.

A good idea is to teach people restraint. The sports betting Web sites can program limits and even help their users manage their money without going bankrupt. It’s better for people to be taught restraint so they can roll the dice one more time.

Stephen E Arnold, August 29, 2024

Google Microtransaction Enabler: Chrome Beefs Up Its Monetization Options

August 29, 2024

![green dino](https://arnoldit.com/wordpress/wp-content/uploads/2024/08/green-dino_thumb_thumb_thumb_thumb_t1_thumb_thumb_thumb_thumb_thumb.gif) This essay is the work of a dumb dinobaby. No smart software required.

For its next trick, Google appears to be channeling rival Amazon. We learn from TechRadar that “Google Is Developing a New Web Monetization Feature for Chrome that Could Really Change the Way We Pay for Things Online.” Will this development distract anyone from the recent monopoly ruling?

Writer Kristina Terech explains how Web Monetization will work for commercial websites:

“In a new support document published on the Google Chrome Platform Status site, Google explains that Web Monetization is a new technology that will enable website owners ‘to receive micro payments from users as they interact with their content.’ Google states its intention is noble, writing that Web Monetization is designed to be a new option for webmasters and publishers to generate revenue in a direct manner that’s not reliant on ads or subscriptions. Google explains that with Web Monetization, users would pay for content while they consume it. It’s also added a new HTML link element for websites to add to their URL address to indicate to the Chrome browser that the website supports Web Monetization. If this is set correctly in the website’s URL, for websites that facilitate users setting up digital wallets on it, when a person visits that website, a new monetization session would be created (for that person) on the site. I’m immediately skeptical about monetizing people’s attention even further than it already is, but Google reassures us that visitors will have control over the whole process, like the choice of sites they want to reward in this way and how much money they want to spend.”

But like so many online “choices,” how many users will pay enough attention to make them? I share Terech’s distaste for attention monetization, but that ship has sailed. The danger here (or advantage, for merchants): Many users will increase their spending by barely noticeable amounts that add up to a hefty chunk in the end. On the other hand, the feature could reduce costly processing charges by eliminating per-payment fees for merchants. Whether end users see those savings, though, depends on whether vendors choose to pass them along.
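For readers who want to see what the link element in the TechRadar quote might look like in practice, here is a small Python sketch that scans a page for it. I am assuming the markup from the public Web Monetization draft as I understand it, that is, a `link` element with `rel="monetization"` whose `href` points at a wallet address. The quoted article does not show the markup, and the wallet URL and class name below are invented for illustration.

```python
from html.parser import HTMLParser


class MonetizationLinkFinder(HTMLParser):
    """Collect href values of <link rel="monetization"> elements (assumed markup)."""

    def __init__(self):
        super().__init__()
        self.wallet_addresses = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        # rel may hold several space-separated tokens; an attribute without a value is None.
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "monetization" in rel_tokens and attributes.get("href"):
            self.wallet_addresses.append(attributes["href"])


# Invented example page; the wallet address is a placeholder.
page = """
<html>
  <head>
    <title>Example article</title>
    <link rel="stylesheet" href="/site.css">
    <link rel="monetization" href="https://wallet.example/alice">
  </head>
  <body><p>Content a visitor might pay for.</p></body>
</html>
"""

finder = MonetizationLinkFinder()
finder.feed(page)
print(finder.wallet_addresses)
```

The sketch only detects that a page advertises support. The payment session, the digital wallet, and the spending limits described in the quote live in the browser and the wallet provider, not in the page.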

Cynthia Murrell, August 29, 2024