AI Overviews: A He Said, She Said Argument

May 29, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Google has begun the process of setting up an AI Overview object in search results. The idea is that Google provides an “answer.” But the machine-generated response is a platform for selling sentences, “meaning,” and probably words. Most people who have been exposed to the Overview object point out some of the object’s flaws. Those “mistakes” are not the point. Before I offer some ideas about the advertising upside of an AI Overview, I want to highlight both sides of this “he said, she said” dust up. Those criticizing the Google’s enhancement to search results miss the point of generating a new way to monetize information. Those who are taking umbrage at the criticism miss the point of people complaining about how lousy the AI Overviews are perceived to be.

The criticism of Google is encapsulated in “Why Google Is (Probably) Stuck Giving Out AI Answers That May or May Not Be Right.” A “real” journalist explains:

What happens if people keep finding Bad Answers on Google and Google can’t whac-a-mole them fast enough? And, crucially, what if regular people, people who don’t spend time reading or talking about tech news, start to hear about Google’s Bad And Potentially Dangerous Answers? Because that would be a really, really big problem. Google does a lot of different things, but the reason it’s worth more than $2 trillion is still its two core products: search, and the ads that it generates alongside search results. And if people — normal people — lose confidence in Google as a search/answer machine … Well, that would be a real problem.

The idea is that the AI Overview makes Google Web search less useful than it was before AI. Whether the idea is accurate or not makes no difference to the “he said, she said” argument. The “real” news is that Google is doing something that many people may perceive as a negative. The consequence is that Google’s shiny carapace will be scratched and dented. A more colorful approach to this side of the “bad Google” argument appears in Android Authority. “Shut It Down: Google’s AI Search Results Are Beyond Terrible” states:

The new Google AI Overview feature is offering responses to queries that range from bizarre and funny to very dangerous.

Ooof. Bizarre and dangerous. Yep, that’s the new Google AI Overview.

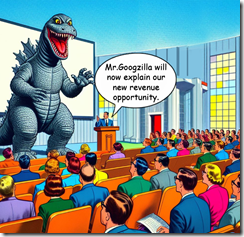

The Red Alert Google is not taking the criticism well. Instead of Googzilla retreating into a dark, digital cave, the beastie is coming out fighting. Imagine. Google is responding to pundit criticism. Fifteen years ago, no one would have paid any attention to a podcaster writer and a mobile device news service. Times have indeed changed.

“Google Scrambles to Manually Remove Weird AI Answers in Search” provides an allegedly accurate report about how Googzilla is responding to criticism. In spite of the split infinitive, the headline makes clear that the AI-infused online advertising machine is using humans (!) to fix up wonky AI Overviews. The write up pontificates:

Google continues to say that its AI Overview product largely outputs “high quality information” to users. “Many of the examples we’ve seen have been uncommon queries, and we’ve also seen examples that were doctored or that we couldn’t reproduce,” Google spokesperson Meghann Farnsworth said in an email to The Verge. Farnsworth also confirmed that the company is “taking swift action” to remove AI Overviews on certain queries “where appropriate under our content policies, and using these examples to develop broader improvements to our systems, some of which have already started to roll out.”

Google seems to acknowledge that action is required. But the Google is not convinced that it has stepped on a baby duckling or two with its AI Overview innovation.

AI Overviews represent a potential revenue flow into Alphabet. The money, not the excellence of the outputs, is what matters in today’s Google. Thanks, MSFT Copilot. Back online and working on security today?

Okay, “he said, she said.” What’s the bigger picture? I worked on a project which required setting up an ad service which sold words in a text passage. I am not permitted to name the client or the outfit with the idea. On a Web page, some text would appear with an identifier like an underline or bold face. When the reader of the Web page clicked (often inadvertently) on the word, that user would be whisked to another Web site or a pop up ad. The idea is that instead of an Oingo (Applied Semantics)-type of related concept expansion, the advertiser was buying a word. Brilliant.

The AI Overview, based on my team’s look at what the Google has been crafting, sets up a similar opportunity. Here’s a selection from our discussion at lunch on Friday, May 24, 2024 at a restaurant which featured a bridge club luncheon. Wow, was it noisy. Here’s what emerged from our frequently disrupted conversation:

- The AI Overview is a content object. It sits for now at the top of the search results page unless the “user” knows to add the string udm=14 to a query

- Advertising can be “sold” to the advertiser[s] who want[s] to put a message on the “topic” or “main concept” of the search

- Advertising can be sold to the organizations wanting to be linked to a sentence or a segment of a sentence in the AI Overview

- Advertising can be sold to the organizations wanting to be linked to a specific word in the AI Overview

- Advertising can be sold to the organizations wanting to be linked to a specific concept in the AI Overview.
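For readers curious about the udm=14 string in the first bullet, here is a minimal sketch of how the parameter might be appended to a search URL. The endpoint `https://www.google.com/search` and the `q` parameter are my assumptions, not details from the lunch discussion, and the helper name `web_only_search_url` is hypothetical.

```python
from urllib.parse import urlencode

# Assumption: the usual Google search endpoint and its "q" query parameter.
SEARCH_BASE = "https://www.google.com/search"


def web_only_search_url(query: str) -> str:
    """Hypothetical helper: build a search URL that carries udm=14."""
    # urlencode escapes spaces and punctuation so the query survives in a URL.
    params = {"q": query, "udm": "14"}
    return f"{SEARCH_BASE}?{urlencode(params)}"


print(web_only_search_url("cheese not sticking to pizza"))
```

Whether the string keeps working is up to the Google, so treat this as an illustration and not a guarantee.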

Whether the AI Overview is good, bad, or indifferent makes no, zero, zip difference to the Google advertising “machine,” its officers, and its soon-to-be-replaced-by-smart-software staff. AI has given Google the opportunity to monetize a new content object. That content object and its advertising are additive. People who want “traditional” Google online advertising can still buy it. Furthermore, as one of my team pointed out, the presence of the new content object “space” on a search results page opens up additional opportunities to monetize certain content types. One example is buying a link to a related video which appears as an icon below, alongside, or within the content object space. The monetization opportunities seem to have some potential.

Net net: Googzilla may be ageing. To poobahs and self-appointed experts, Google may be lost in space, trembling in fear, and growing deaf due to the blaring of the Red Alert klaxons. Whatever. But the AI Overview may have some upside even if it is filled with wonky outputs.

Stephen E Arnold, May 29, 2024

Bullying Google Is a Thing

May 24, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Imagine the smartest kid in the fifth grade. The classmates are not jealous, but they are keenly aware of the brightest star having an aloof, almost distracted attitude. Combine that with a credit in a TV commercial when the budding wizard was hired to promote an advanced mathematics course developed by the child’s mother and father. The blessed big brain finds itself the object of ridicule. The PhD parents, the proud teacher, and the child’s tutor who works at Lawrence Livermore National Laboratory cannot understand why the future Master of the Universe is being bullied. Remarkable, is it not?

Herewith is an illustration of a fearsome creature, generated in gloomy colors, by the MidJourney bot, roaring its superiority. However, those observing the Big Boy are convulsed with laughter. Why laugh at an ageing money machine with big teeth?

I read “Google’s AI Search Feature Suggested Using Glue to Keep Cheese Sticking to a Pizza.” Yep, fourth grade bullying may be part of the poking and prodding of a quite hapless but wealthy, successful Googzilla. Here’s an example of the situation in which the Google, which I affectionately call “Googzilla,” finds itself:

Google’s new search feature, AI Overviews, seems to be going awry. The tool, which gives AI-generated summaries of search results, appeared to instruct a user to put glue on pizza when they searched "cheese not sticking to pizza."

In another write up, Business Insider asserted:

But in searches shared on X, users have gotten contradictory instructions on boiling taro and even been encouraged to run with scissors after the AI appeared to take a joke search seriously. When we asked whether a dog had ever played in the NHL, Google answered that one had, apparently confused by a charity event for rescue pups.

My reaction to this digital bullying is mixed. On one hand, Google has demonstrated that its Code Red operating mode is cranking out half-cooked pizza. Sure, the pizza may have some non-poisonous glue, but Google is innovating. A big event provided a platform for the online advertising outfit to proclaim, “We are the leaders in smart software.” On the other hand, those observing Google’s outputs find the beastie a follower; for example, OpenAI announced GPT-4o the day before Google’s “reveal.” Then Microsoft presented slightly more coherent applications using AI, including the privacy special service which records everything a person does on a reinvented Windows on Arm device.

Several observations are warranted:

- Googzilla finds itself back in grade school with classmates of lesser ability, wealth, and heritage making fun of the entity. Wow, remember the shame? Remember the fun one had poking fun at an outsider? Humans are wonderful, are they not?

- “Users” or regular people who rely on Google seem to have a pent up anger with the direction in which Googzilla has been going. Since the company does not listen to its “users,” calling attention to Googzilla’s missteps is an easy way to say, “Hey, Big Fella, you are making us unhappy.” Will Google pay attention to these unexpected signals?

- Google, the corporate entity, seems to be struggling with Management 101 tasks; for example, staff or people resources. The CFO is heading to the exit. Competition, while flawed in some ways, continues to nibble at Google’s advertising perpetual motion machine. Google innovation focuses on gamesmanship and trying to buy digital marketing revenue.

Net net: I anticipate more coverage of Google’s strategy and tactical missteps. The bullying will continue and probably grow unless the company puts on its big boy pants and neutralizes the school yard behavior its critics and cynics deliver.

Stephen E Arnold, May 24, 2024

The Death of the Media: Remember Clay Tablets?

May 24, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Did the home in which you grew from a wee one to a hyperspeed teen have a plaster cast which said, “Home sweet home” or “Welcome” hanging on the wall? My mother had those craft sale treasures everywhere. I have none. The point is that the clay tablets from ancient times were not killed, put out of business, or bankrupted because someone wrote on papyrus, sheep skin, or bits of wood. Eliminating a communications medium is difficult. Don’t believe me? Go to an art fair and let me know if you were unable to spot something made of clay with writing or a picture on it.

I mention these older methods of disseminating a message because I read “Publishers Horrified at New Google AI Feature That Could Kill What’s Left of Journalism.” Really?

The write up states:

… preliminary studies on Google’s use of AI in its search engine has the potential to reduce website traffic by 25 percent, The Associated Press reports. That could be billions in revenue lost, according to an interview with Marc McCollum, chief innovation officer for content creator consultancy Raptive, who was interviewed by the AP.

The idea is that “real” journalism depends on Google for revenue. If the revenue from Google’s assorted ad programs tossing pennies to Web sites goes away, so will the “real” journalism on these sites.

If my dinobaby memory is working, the AP (Associated Press) was supported by newspapers. Then the AP was supported by Google. What’s next? I don’t know, but the clay tablet fellows appear to have persisted. The producers of the tablets probably shifted to tableware. Those who wrote on the tablets learned to deal with ink and sheepskin.

Chilling in the room thinking thoughts of doom. Thanks, MSFT Copilot. Keep following your security recipe.

AI seems to be capable of creating stories like those in Smartnews or one of the AI-powered spam outfits. The information is recycled. But it is good enough. Some students today seem incapable of tearing themselves from their mobile devices to read words. The go-to method for getting information is a TikTok-type service. People who write words may be fighting to make the shift to new media.

One thing is reasonably clear: Journalists and media-mavens are concerned that a person will take an answer produced by a Google-like service. The entering a query approach to information is a “hot medium thing.” Today kicking back and letting video do the work seems to be a winner.

Google, however, has in my opinion been fiddling with search since it “innovated” in its implementation of the GoTo.com/Overture.com approach to “pay to play” search. If you want traffic, buy ads. The more one spends, the more traffic one’s site gets. That’s simple. There are some variations, but the same Google model will be in effect with or without Google’s little summaries. The lingo may change, but where there are clicks, advertisers will pay to be there.

Google can, of course, kill its giant Googzilla mom laying golden eggs. That will take some time. Googzilla is big. My theory is that enterprising people with something to say will find a way to get paid for their content outputs regardless of their form. True, there is the cost of paying, but that’s the same hit the clay tablet took thousands of years ago. But those cast plaster and porcelain art objects are probably on sale at an art fair this weekend.

Observations:

- The fear is palpable. Why not direct it to a positive end? Griping about Google which has had 25 years to do what it wanted to do means Google won’t change too much. Do something to generate money. Complaining is unlikely to produce a result.

- The likelihood that Google will shaft a large number of outfits and individuals is nearly 99 percent. Thus, moving in a sprightly manner may be a good idea. Google is not a sprinter as its reaction to Microsoft’s Davos marketing blitz made clear.

- New things do appear. I am not sure what the next big thing will be. But one must pay attention.

Net net: The sky may be falling. The question is, “How fast?” Another is, “Can you get out of the way?”

Stephen E Arnold, May 24, 2024

Google Takes Stand — Against Questionable Content. Will AI Get It Right?

May 24, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

The Internet is the ultimate distribution system for illicit material, especially pornography. A simple Google search yields access to billions of pieces of lewd material, some free and some behind paywalls. Pornography already has people in a tizzy, but the advent of deepfake porn material is making things worse. Google is upset about deepfakes and decided to take a moral stand. Extreme Tech says: “Google Bans Ads For Platforms That Generate Deepfake Pornography.”

Beginning May 30, Google won’t allow platforms that create deepfake porn, explain how to make it, or promote/compare services to place ads through the Google Ads system. Google already has an Inappropriate Content Policy in place. It prohibits the promotion of hate groups, self-harm, violence, conspiracy theories, and sharing explicit images to garner attention. The policy also bans advertising sex work and sexual abuse.

Violating the content policy results in a ban from Google Ads. Google is preparing for future problems as AI becomes better:

“The addition of deepfake pornography to the Inappropriate Content Policy is undoubtedly the result of increasingly accessible and adept generative AI. In 2022, Google banned deepfake training on Colab, its mostly free public computing resource. Even six years ago, Pornhub and Reddit had to go out of their way to ban AI-generated pornography, which often depicts real people (especially celebrities) engaging in sexual acts they didn’t perform or didn’t consent to recording. Whether we’d like to or not, most of us know just how much better AI has gotten at creating fake faces since then. If deepfake pornography looked a bit janky back in 2018, it’s bound to look a heck of a lot more realistic now.”

If it weren’t for the moral center of humanity, Google’s minions would allow lewd material and other illicit content on Google Ads. Porn sells. It always has.

Whitney Grace, May 24, 2024

Googzilla Makes a Move in a High Stakes Contest

May 22, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

The trusted “real news” outfit Thomson Reuters published this popular news story about dancing with Googzilla. The article is titled by the click seekers as “Google Cuts Mystery Check to US in Bid to Sidestep Jury Trial.” I love the “mystery check.” I thought FinCEN was on the look out for certain types of transactions.

The contest is afoot. Thanks, MSFT Copilot.

Here’s the core of the story: On one side of the multi-dimensional Go board is the US Department of Justice. Yes, that was the department with the statues in the area where employees once were paid each week. On the other side of the game board is Googzilla. This is the digital construct which personifies the Alphabet, Google, YouTube, DeepMind, et al outfit. Some in Google’s senior management are avid game players. After all, one must set up a system in which no matter who plays a Googzilla-branded game, the “just average wizards” who run the company win. The mindset has worked wonders in the online advertising and SEO sector. The SEO “experts” were the people who made a case to their clients for the truism “If you want traffic, it is a pay-to-play operation.” The same may be said for YouTube and content creators who make content so Google can monetize that digital flow and pay a sometimes unknown amount to a creator who is a one-person 1930s motion picture production company. Ditto for the advertisers who use the Google system to buy advertising and benefit by providing advertising space. What’s Google do? It makes the software that controls the game.

Where’s this going? Google is playing a game with the Department of Justice. I am certain some in the DoJ understand this approach. Others may not grasp the concept of Googzilla’s absolute addiction to gaming and gamesmanship. Casinos are supposed to make money. There are exceptions, of course. I can think of a high-profile case history of casino failure, but Google is a reasonably competent casino operator. Sure, there are some technical problems when the Cloud back end fails and the staff become a news event because they protest with correctly spelled signage. But overall, I would suggest that the depth of Googzilla’s game playing is not appreciated by its users, its competition, or some of the governments trying to regain data and control of information pumped into the creature’s financial blood bank.

Let’s look at the information the trusted outfit sought to share as bait for a begging-for-dollars marketing play:

Google has preemptively paid damages to the U.S. government, an unusual move aimed at avoiding a jury trial in the Justice Department’s antitrust lawsuit over its digital advertising business. Google disclosed the payment, but not the amount, in a court filing last week that said the case should be heard and decided by a judge directly. Without a monetary damages claim, Google argued, the government has no right to a jury trial.

That’s the move. The DoJ now has to [a] ignore the payment and move forward to a trial with a jury deciding if Googzilla is a “real” monopoly or a plain vanilla, everyday business like the ones Amazon, Facebook, and Microsoft have helped go out of business. [b] Cash the check and go back to scanning US government job listings for a positive lateral arabesque on a quest to the SES (senior executive service). [c] Keep the check and pile on more legal pressure because the money was an inducement, not a replacement for the US justice system. With an election coming up, I can see option [d] on the horizon: Do nothing.

The idea is that in multi-dimensional Go, Google wants to eliminate the noise of legal disputes. Google wins if the government cashes the check. Google wins if the on-rushing election causes a slow down of an already slow process. Google wins if the DoJ keeps piling on the pressure. Google has the money and lawyers to litigate. The government has a long memory, but staff and leadership turnover shifts the odds to Googzilla. Google Calendar keeps its attorneys filing before deadlines and exploiting the US legal system to its fullest extent. If the US government sues Google because the check was a bribe, Google wins. The legal matter shifts to resolving the question about the bribe because carts rarely are put in front of horses.

In this Googzilla-influenced game, Googzilla has created options and set the stage to apply the same tactic to other legal battles. The EU may pass a law prohibiting pre-payment in lieu of a legal process, but if that does not move along at the pace of AI hyperbole, Google’s DoJ game plan can be applied to the lucky officials in Brussels and Strasbourg.

The Reuters report says:

Stanford Law School’s Mark Lemley told Reuters he was skeptical Google’s gambit would prevail. He said a jury could ultimately decide higher damages than whatever Google put forward.

“Antitrust cases regularly go to juries. I think it is a sign that Google is worried about what a jury will do,” Lemley said. Another legal scholar, Herbert Hovenkamp of the University of Pennsylvania’s law school, called Google’s move "smart" in a post on X. “Juries are bad at deciding technical cases, and further they do not have the authority to order a breakup,” he wrote.

Okay, two different opinions. The Google check is proactive.

Why? Here are some reasons my research group offered this morning:

- Google has other things to do with its legal resources; namely, deal with the copyright litigation which is knocking on its door

- The competitive environment is troubling so Googzilla wants to delete annoyances like the DoJ and staff who don’t meet the new profile of the ideal Googler any longer

- Google wants to set a precedent so it can implement its pay-to-play game plan for legal hassles.

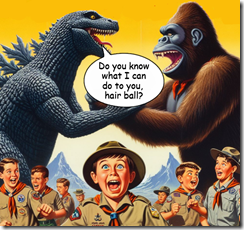

I am 99 percent confident that Google is playing a game. I am not sure that others perceive the monopoly litigation as one. Googzilla has been refining its game plan, its game-playing skills, and its gaming business systems for 25 years. How long has the current crop of DoJ experts been playing Googley games? I am not going to bet against Googzilla. Remember what happened in the 2021 film Godzilla vs. Kong. Both beasties make peace and go their separate ways. If that happens, Googzilla wins.

Stephen E Arnold, May 22, 2024

Google Dings MSFT: Marketing Motivated by Opportunism

May 21, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

While not as exciting as Jake Paul versus Mike Tyson, the dust up is interesting. The developments leading up to this report about Google criticizing Microsoft’s security methods have a bit of history:

- Microsoft embraced OpenAI, Mistral, and other smart software because regulators are in meetings about regulating

- Google learned that after tire kicking, Apple found OpenAI (Microsoft’s pal) more suitable to the now innovation challenged iPhone. Google became a wallflower, a cute one, but a wallflower nevertheless

- Google faces trouble on three fronts: [a] Its own management of technology and its human resources; [b] threats to its online advertising and brokering business; and [c] challenges in cost control. (Employees get fired, and CFOs leave for a reason.)

Google is not a marketing outfit nor is it one that automatically evokes images associated with trust, data privacy, and people sensitivity. Google seized an opportunity to improve Web search. When forced to monetize, the company found inspiration in the online advertising “pay to play” ideas of Yahoo (Overture and GoTo). There was a legal dust up and Google paid up for that Eureka! moment. Then Google rode the demand for matching ads to queries. After 25 years, Google remains dependent on its semi-automated ad business. Now that business must be supplemented with enterprise cloud revenue.

Two white collar victims of legal witch hunts discuss “trust”. Good enough, MSFT Copilot.

How does the company market while the Red Alert klaxon blares into the cubicles, Google Meet sessions, and the Foosball game areas?

The information appears in “Google Attacks Microsoft Cyber Failures in Effort to Steal Customers.” I wonder what Foundem and the French taxation authority might make of the Google bandying about the word “steal”? I don’t know the answer to this question. The title indicates that Microsoft’s security woes, recently publicized by the US government, provide a marketing opportunity.

The article reports Google’s grand idea this way:

Government agencies that switch 500 or more users to Google Workspace Enterprise Plus for three years will get one year free and be eligible for a “significant discount” for the rest of the contract, said Andy Wen, the senior director of product management for Workspace. The Alphabet Inc. division is offering 18 months free to corporate customers that sign a three-year contract, a hefty discount after that and incident response services from Google’s Mandiant security business. All customers will receive free consulting services to help them make the switch.

The idea that Google is marketing is an interesting one. Like Telegram, Google has not been a long-time advocate of Madison Avenue advertising, marketing, and salesmanship. I was once retained by a US government agency to make a phone call to one of my “interaction points” at Google so that the director of the agency could ask a question about the quite pricey row of yellow Google Search Appliances. I made the call and obtained the required information. I also got paid. That’s some marketing in my opinion. An old person from rural Kentucky intermediating between a senior government official and a manager in one of Google’s mind boggling puzzle palaces.

I want to point out that Google’s assertions about security may be overstated. One recent example is the Register’s report “Google Cloud Shows It Can Break Things for Lots of Customers – Not Just One at a Time.” Is this a security issue? My hunch is that whenever something breaks, security becomes an issue. Why? Rushed fixes may introduce additional vulnerabilities on top of the “good enough” engineering approach implemented by many high-flying, boastful, high-technology outfits. The Register says:

In the week after its astounding deletion of Australian pension fund UniSuper’s entire account, you might think Google Cloud would be on its very best behavior. Nope.

So what? When one operates at Google scale, the “what” is little more than users of 33 Google Cloud services were needful of some of that YouTube TV Zen moment stuff.

My reaction is that these giant outfits which are making clear that single points of failure are the norm in today’s online environment may not do the “fail over” or “graceful recovery” processes with the elegance of Mikhail Baryshnikov’s turning point solo move. Google is obviously still struggling with the after effects of Microsoft’s OpenAI announcement and the flops like the Sundar & Prabhakar Comedy Show in Paris and the “smart software” producing images orthogonal to historical fact.

Online advertising expertise may not correlate with marketing finesse.

Stephen E Arnold, May 21, 2024

Allegations about Medical Misinformation Haunt US Tech Giants

May 17, 2024

Access to legal and safe abortions, also known as the fight for reproductive rights, is a controversial issue in the United States and countries with large Christian populations. Opponents of abortion often spread false information about the procedure. They’re also known to spread misinformation about sex education, especially birth control. Mashable shares the unfortunate story that tech giants “Meta And Google Fuel Abortion Misinformation Across Africa, Asia, And Latin America, Report Finds.”

The Center for Countering Digital Hate (CCDH) and MSI Reproductive Choices (MSI) released a new report that found Meta and sometimes Google restricted abortion information and disseminated misinformation and abuse in Latin America, Asia, and Africa. Abortion providers are prevented from placing ads globally on Google and Meta. Meta also earns revenue from anti-abortion ads bought in the US and targeted at the aforementioned areas.

MSI claims in the report that Meta removed or rejected its ads in Vietnam, Nigeria, Nepal, Mexico, Kenya, and Ghana because of “sensitive content.” Meta also has blanket advertising restrictions on MSI’s teams in Vietnam and Nepal without explanation. Google blocked ads with the keyword “pregnancy options” in Ghana and MSI claimed they were banned from using that term in a Google Adwords campaign.

Google offered an explanation:

“Speaking to Mashable, Google representative Michael Aciman said, ‘This report does not include a single example of policy violating content on Google’s platform, nor any examples of inconsistent enforcement. Without evidence, it claims that some ads were blocked in Ghana for referencing ‘pregnancy options’. To be clear, these types of ads are not prohibited from running in Ghana – if the ads were restricted, it was likely due to our longstanding policies against targeting people based on sensitive health categories, which includes pregnancy.’”

Google and Meta have been vague and inconsistent about why they’re removing pregnancy option ads, while allowing pro-life groups to spread unchecked misinformation about abortion. Meta, Google, and other social media companies mine user information, but they do little to protect civil liberties and human rights.

Organizations like MSI and CCDH are doing what they can to fight bad actors. It’s an uphill battle and it would be easier if social media companies helped.

Whitney Grace, May 17, 2024

Ho Hum: The Search Sky Is Falling

May 15, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

“Google’s Broken Link to the Web” is interesting for two reasons: [a] The sky is falling — again and [b] search has been broken for a long time and suddenly I should worry.

The write up states:

When it comes to the company’s core search engine, however, the image of progress looks far muddier. Like its much-smaller rivals, Google’s idea for the future of search is to deliver ever more answers within its walled garden, collapsing projects that would once have required a host of visits to individual web pages into a single answer delivered within Google itself.

Nope. The walled garden has been in the game plan for a long, long time. People who lusted for Google mouse pads were not sufficiently clued in to notice. Google wants to be the digital Hotel California. Smarter software is just one more component available to the system which controls information flows globally. How many people in Denmark rely on Google search whether it is good, bad, or indifferent? The answer is, “99 percent.” What about people who let Google Gmail pass along their messages? How about 67 percent in the US? YouTube is video in many countries. Even with the rise of TikTok, the Google is hanging in there. Maps? Ditto. Calendars? Ditto. Each of these ubiquitous services is “search.” They have been for years. Any click can be monetized one way or another.

Who will pay attention to this message? Regulators? Users of search on an iPhone? How about commuters and Waze? Thanks, MSFT Copilot. Good enough. Working on those security issues today?

Now the sky is falling? Give me a break. The write up adds:

where the company once limited itself to gathering low-hanging fruit along the lines of “what time is the super bowl,” on Tuesday executives showcased generative AI tools that will someday plan an entire anniversary dinner, or cross-country-move, or trip abroad. A quarter-century into its existence, a company that once proudly served as an entry point to a web that it nourished with traffic and advertising revenue has begun to abstract that all away into an input for its large language models. This new approach is captured elegantly in a slogan that appeared several times during Tuesday’s keynote: let Google do the Googling for you.

Of course, if Google does it, those “search” abstractions can be monetized.

How about this statement?

But to everyone who depended even a little bit on web search to have their business discovered, or their blog post read, or their journalism funded, the arrival of AI search bodes ill for the future. Google will now do the Googling for you, and everyone who benefited from humans doing the Googling will very soon need to come up with a Plan B.

Okay, what’s the plan B? Kagi? Yandex? Something magical from one of the AI start ups?

People have been trying to out search Google for a quarter century. And what has been the result? Google’s technology has been baked into the findability fruit cakes.

If one wants to be found, buy Google advertising. The alternative is what exactly? Crazy SEO baloney? Hire a 15 year old and pray that person can become an influencer? Put ads on Tubi?

The sky is not falling. The clouds rolled in and obfuscated people’s ability to see how weaponized information has seized control of multiple channels of information. I don’t see a change in weather any time soon. If one wants to run around saying the sky is falling, be careful. One might run into a wall or trip over a fire plug.

Stephen E Arnold, May 15, 2024

Google Lessons in Management: Motivating Some, Demotivating Others

May 14, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I spotted an interesting comment in “Google Workers Complain of ‘Decline in Morale’ as CEO Sundar Pichai Grilled over Raises, Layoffs: Increased Distrust.” Here’s the passage:

Last month, the company fired 200 more workers, aside from the roughly 50 staffers involved in the protests, and shifted jobs abroad to Mexico and India.

I paired this Xhitter item with “Google Employees Question Execs over Decline in Morale after Blowout Earnings.” That write up asserted:

At an all-hands meeting last week, Google employees questioned leadership about cost cuts, layoffs and “morale” issues following the company’s better-than-expected first-quarter earnings report. CEO Sundar Pichai and CFO Ruth Porat said the company will likely have fewer layoffs in the second half of 2024.

Poor, poor Googzilla. I think the fearsome alleged monopolist could lose a few pounds. What do you think? Thanks, MSFT Copilot. Good enough work, just like some security models we know and love.

Not no layoffs. Just “fewer layoffs.” Okay, that’s a motivator.

The estimable “real” news service stated:

Alphabet’s top leadership has been on the defensive for the past few years, as vocal staffers have railed about post-pandemic return-to-office mandates, the company’s cloud contracts with the military, fewer perks and an extended stretch of layoffs — totaling more than 12,000 last year — along with other cost cuts that began when the economy turned in 2022. Employees have also complained about a lack of trust and demands that they work on tighter deadlines with fewer resources and diminished opportunities for internal advancement.

What’s wrong with this management method? The answer: Absolutely nothing. The write up included this bit of information:

She [Ruth Porat, Google CFO, who is quitting the volleyball and foosball facility] also took the rare step of admitting to leadership’s mistakes in its prior handling of investments. “The problem is a couple of years ago — two years ago, to be precise — we actually got that upside down and expenses started growing faster than revenues,” said Porat, who announced nearly a year ago [in 2023] that she would be stepping down from the CFO position but hasn’t yet vacated the office. “The problem with that is it’s not sustainable.”

Ever tactful, Sundar Pichai (the straight man in the Sundar & Prabhakar Comedy Team) is quoted as saying in silky tones:

“I think you almost set the record for the longest TGIF answer,” he said. Google all-hands meetings were originally called TGIFs because they took place on Fridays, but now they can occur on other days of the week. Pichai then joked that leadership should hold a “Finance 101” Ted Talk for employees. With respect to the decline in morale brought up by employees, Pichai said “leadership has a lot of responsibility here,” adding that “it’s an iterative process.”

That’s a demonstration of tactful high school science club management-speak, in my opinion. To emphasize the future opportunities for the world’s smartest people, he allegedly said, according to the write up:

Pichai said the company is “working through a long period of transition as a company” which includes cutting expenses and “driving efficiencies.” Regarding the latter point, he said, “We want to do this forever.” [Editor note: Emphasis added]

Forever is a long, long time, isn’t it?

Poor, addled Googzilla. Litigation to the left, litigation to the right. Grousing world’s smartest employees. A legacy of baby making in the legal department. Apple apparently falling in lust with OpenAI. Amazon and its pesky Yellow Pages approach to advertising.

The sky is not falling, but there are some dark clouds overhead. And, speaking of overhead, is Google ever going to be able to control its costs, pay off its technical debt, and write checks to the governments when the firm is unjustly found guilty of assorted transgressions?

For now, yes. Forever? Sure, why not?

Stephen E Arnold, May 14, 2024

Will Google Behave Like Telegram?

May 10, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I posted a short item on LinkedIn about Telegram’s blocking of Ukraine’s information piped into Russia via Telegram. I pointed out that Pavel Durov, the founder of VK and Telegram, told Tucker Carlson that he was into “free speech.” A few weeks after the interview, Telegram blocked the data from Ukraine for Russia’s Telegram users. One reason given, as I recall, was that Apple was unhappy. Telegram rolled over and complied with a request that seems to benefit Russia more than Apple. But that’s just my opinion. One of my team verified the incident, the block, with a Ukrainian interacting with senior professionals in Ukraine. Not surprisingly, Ukraine’s use of Telegram is under advisement. I think that means, “Find another method of sending encrypted messages and use that.” Compromised communications can translate to “Rest in Peace” in real time.

A Hong Kong rock band plays a cover of the popular hit Glory to Hong Kong. The bats in the sky are similar to those consumed in Shanghai during a bat festival. Thanks, MSFT Copilot. What are you working on today? Security or AI?

I read “Hong Kong Puts Google in Hot Seat With Ban on Protest Song.” That news story states:

The Court of Appeal on Wednesday approved the government’s application for an injunction order to prevent anyone from playing Glory to Hong Kong with seditious intent. While the city has a new security law to punish that crime, the judgment shifted responsibility onto the platforms, adding a new danger that just hosting the track could expose companies to legal risks. In granting the injunction, judges said prosecuting individual offenders wasn’t enough to tackle the “acute criminal problems.”

What’s Google got to do with that toe tapper Glory to Hong Kong?

The write up says:

The injunction “places Google, media platforms and other social media companies in a difficult position: Essentially pitting values such as free speech in direct conflict with legal obligations,” said Ryan Neelam, program director at the Lowy Institute and former Australian diplomat to Hong Kong and Macau. “It will further the broader chilling effect if foreign tech majors do comply.”

The question is, “Roll over as Telegram allegedly has, or fight Hong Kong and by extension everyone’s favorite streaming video influencer, China?” What will Google do? Scrub Glory to Hong Kong, number one with a bullet on someone’s hit parade, I assume?

My guess is that Google will go to court, appeal, and then take appropriate action to preserve whatever revenue is at stake. I do know The Sundar & Prabhakar Comedy Show will not use Glory to Hong Kong as its theme for its 2024 review.

Stephen E Arnold, May 10, 2024