The Fixed Network Lawful Interception Business is Booming

September 11, 2024

It is not just bad actors who profit from an increase in cybercrime. Makers of software designed to catch them are cashing in, too. The Market Research Report 224 blog shares “Fixed Network Lawful Interception Market Region Insights.” Lawful interception is the process by which law enforcement agencies, after obtaining the proper warrants, of course, surveil circuit-mode and packet-mode communications. The report shares findings from a study by Data Bridge Market Research on this growing sector. Between 2021 and 2028, this market is expected to grow by nearly 20% annually and hit an estimated value of $5,340 million. We learn:

“Increase in cybercrimes in the era of digitalization is a crucial factor accelerating the market growth, also increase in number of criminal activities, significant increase in interception warrants, rising surge in volume of data traffic and security threats, rise in the popularity of social media communications, rising deployment of 5G networks in all developed and developing economies, increasing number of interception warrants and rising government of both emerging and developed nations are progressively adopting lawful interception for decrypting and monitoring digital and analog information, which in turn increases the product demand and rising virtualization of advanced data centers to enhance security in virtual networks enabling vendors to offer cloud-based interception solutions are the major factors among others boosting the fixed network lawful interception market.”

Furthermore, the pace of these developments will likely increase over the next few years. The write-up specifies key industry players, a list we found particularly useful:

“The major players covered in fixed network lawful interception market report are Utimaco GmbH, VOCAL TECHNOLOGIES, AQSACOM, Inc, Verint, BAE Systems., Cisco Systems, Telefonaktiebolaget LM Ericsson, Atos SE, SS8 Networks, Inc, Trovicor, Matison is a subsidiary of Sedam IT Ltd, Shoghi Communications Ltd, Comint Systems and Solutions Pvt Ltd – Corp Office, Signalogic, IPS S.p.A, ZephyrTel, EVE compliancy solutions and Squire Technologies Ltd among other domestic and global players.”

See the press release for notes on Data Bridge’s methodology. It promises 350 pages of information, complete with tables and charts, for those who purchase a license. Formed in 2014, Data Bridge is based in Haryana, India.

Cynthia Murrell, September 11, 2024

Why Is the Telegram Übermensch Rolling Over Like a Good Dog?

September 10, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I have been following the story of Pavel Durov’s detainment in France, his hiring of a lawyer with an office in Saint-Germain-des-Prés, and his sudden cooperativeness. I want to offer some observations on this about-face. To begin, let me quote from his public statement at t.me/durov/342:

… we [Pavel and Nikolai] hear voices saying that it’s not enough. Telegram’s abrupt increase in user count to 950M caused growing pains that made it easier for criminals to abuse our platform. That’s why I made it my personal goal to ensure we significantly improve things in this regard. We’ve already started that process internally, and I will share more details on our progress with you very soon.

The Telegram French bulldog flexes his muscles at a meeting with French government officials. Thanks, Microsoft. Good enough like Recall I think.

First, the key item of information is the phrase “user count to 950M,” that is, 950 million users. Telegram’s architecture makes it possible for the company to offer a range of advertising services to those with the Telegram “super app” installed. With the financial success of advertising evidenced by the financial reports from Amazon, Facebook, and Google, the brothers Durov, some long-time colleagues, and a handful of alternative currency professionals do not want to leave money on the table. Ideals are one thing; huge piles of cash are quite another.

Second, Telegram’s leadership demonstrated Cirque du Soleil-grade flexibility when doing a flip-flop on censorship. Regardless of the reason, Mr. Durov chatted up a US news personality. In an interview with a former Murdoch luminary, Mr. Durov complained about the US and sang the praises of free speech. Less than two weeks later, Telegram blocked Ukrainian Telegram messages to Russians in Russia about Mr. Putin’s historical “special operation.” After 11 years of pumping free speech, Telegram changed direction. Why? One can speculate, but the free speech era, at least for Ukraine-to-Russia message traffic, ended.

Third, Mr. Durov’s digital empire extends far beyond messaging (whether basic or the incredibly misunderstood “secret” function). As I write this, Mr. Durov’s colleagues who work at arm’s length from Telegram have rolled out a 2024 version of VKontakte (VK) called TONsocial. The idea is to extend the ecosystem of The Open Network and its TON alternative currency. (Some might use the word crypto, but I will stick with “alternative.”) Even though these entities and their staff operate at arm’s length, TON is integrated into the Telegram super app. Furthermore, clever alternative currency games are attracting millions of users. The TON alternative currency is complemented by Telegram Stars, another alternative currency available within the super app. In the last month, one of these “games” (technically a dApp or decentralized application) has amassed over 35 million users and generates revenue with videos on YouTube. The TON Foundation, also operating at arm’s length from Telegram, has set up a marketing program, a developer outreach program with hard currency incentives for certain types of work, and videos on YouTube which promote Telegram-based decentralized applications, the alternative currency, and the benefits of the TON ecosystem.

So what’s causing Mr. Durov to shift from a snarling Sulimov to a goofy French bulldog? Telegram wants to pull off an IPO (initial public offering). After the US Securities and Exchange Commission shut down his first TON alternative currency play, the brothers Durov and their colleagues cooked up a much less problematic approach to monetizing the Telegram ecosystem. An IPO would produce money and fame. An IPO could also legitimize a system which some have hypothesized retains strong technical and financial ties to some Russian interests.

The conversion from free speech protector with fangs and money to scratch-my-ears French bulldog may be little more than a desire for wealth and fame… maybe power or an IPO. Mr. Durov allegedly has 100 or more children. That’s a lot of college tuition to pay, I imagine. Therefore, I am not surprised that Mr. Durov will:

- Cooperate with the French

- Be more careful with his travel operational security in the future

- Be the individual who can, should he choose, access the metadata and the messages of every one of the 950 million Telegram users (with so darned few in the EU to boot)

- Sell advertising

- Cook up a new version of VKontakte

- Be a popular person among influential government professionals in certain other countries.

But as long as he is rich, he will be okay. He watches what he eats, he exercises, and he allegedly has good cosmetic surgeons at his disposal. He is obviously flexible. I can hear the French bulldog emitting dulcet sounds now as it sticks out its chest and perks up its ears.

Stephen E Arnold, September 10, 2024

When Egos Collide in Brazil

September 10, 2024

Why the Supreme Federal Court of Brazil Has Suspended X

It all started when Brazilian Supreme Court judge Alexandre de Moraes issued a court order requiring X to block certain accounts for spewing misinformation and hate speech. Notably, these accounts belonged to right-wing supporters of former Brazilian President Jair Bolsonaro. After taking his ball and going home, Musk responded with some misinformation and hate speech of his own. He published some insulting AI-generated images of de Moraes, because apparently that is a thing he does now. He has also blatantly refused to pay the fines and appoint the legal representative required by the court. Musk’s tantrums would be laughable if his colossal immaturity were not matched by his dangerous wealth and influence.

But de Moraes seems to be up for the fight. The judge has now added Musk to an ongoing investigation into the spread of fake news and has launched a separate probe into the mogul for obstruction of justice and incitement to crime. We turn to Brazil’s Globo for de Moraes’ perspective in the article, “Por Unanimidade, 1a Turma do STF Mantém X Suspenso No Brasil.” Or in English, “Unanimously, 1st Court of the Supreme Federal Court Maintains X Suspension in Brazil.” Reporter Márcio Falcão writes (in Google Translate’s interpretation):

“Moraes also affirmed that Elon Musk confuses freedom of expression with a nonexistent freedom of aggression and deliberately confuses censorship with the constitutional prohibition of hate speech and incitement to antidemocratic acts. The minister said that ‘the criminal instrumentalization of various social networks, especially network X, is also being investigated in other countries.’ I quote an excerpt from the opinion of Attorney General Paulo Gonet, who agrees with the decision to suspend In this sixth edition. Alexandre de Moraes also affirmed that there have been ‘repeated, conscious, and voluntary failures to comply with judicial orders and non-implementation of daily fines applied, in addition to attempts not to submit to the Brazilian legal system and Judiciary, to ‘Instituting an environment of total impunity and ‘terra sem lei’ [‘lawless land’] in Brazilian social networks, including during the 2024 municipal elections.’”

“A nonexistent freedom of aggression” is a particularly good burn. Chef’s kiss. The article also shares viewpoints from the four other judges who joined de Moraes to suspend X. The court also voted to impose huge fines on any Brazilians who continue to access the platform through a VPN, though the Federal Council of the Brazilian Bar Association asked de Moraes to reconsider that measure. (Here’s Google’s translation of that piece.) What will be next in this dramatic standoff? And what precedent(s) will be set?

Cynthia Murrell, September 10, 2024

What are the Real Motives Behind the Zuckerberg Letter?

September 5, 2024

Senior correspondent at Vox Adam Clarke Estes considers the motives behind Mark Zuckerberg’s recent letter to Rep. Jim Jordan. He believes “Mark Zuckerberg’s Letter About Facebook Censorship Is Not What it Seems.” For those who are unfamiliar: The letter presents no new information, but reminds us the Biden administration pressured Facebook to stop the spread of Covid-19 misinformation during the pandemic. Zuckerberg also recalls his company’s effort to hold back stories about Hunter Biden’s laptop after the FBI warned they might be part of a Russian misinformation campaign. Now, he insists, he regrets these actions and vows never to suppress “freedom of speech” due to political pressure again.

Naturally, Republicans embrace the letter as further evidence of wrongdoing by the Biden-Harris administration. Many believe it is evidence Zuckerberg is kissing up to the right, even though he specifies in the missive that his goal is to be apolitical. Estes believes there is something else going on. He writes:

“One theory comes from Peter Kafka at Business Insider: ‘Zuckerberg very carefully gave Jordan just enough to claim a political victory — but without getting Meta in any further trouble while it defends itself against a federal antitrust suit.’ To be clear, Congress is not behind the antitrust lawsuit. The case, which dates back to 2021, comes from the FTC and 40 states, which say that Facebook illegally crushed competition when it acquired Instagram and WhatsApp, but it must be top of mind for Zuckerberg. In a landmark antitrust case less than a month ago, a federal judge ruled against Google, and called it a monopoly. So antitrust is almost certainly on Zuckerberg’s mind. It’s also possible Zuckerberg was just sick of litigating events that happened years ago and wanted to close the loop on something that has caused his company massive levels of grief. Plus, allegations of censorship have been a distraction from his latest big mission: to build artificial general intelligence.”

So is it a coincidence that this letter came out during the final weeks of a very close, high-stakes presidential election? Perhaps. An antitrust ruling like the one against Google could be inconvenient for Meta. Curious readers can navigate to the article for more background and more of Estes’s reasoning.

Cynthia Murrell, September 5, 2024

Accountants: The Leaders Like Philco

September 4, 2024

This essay is the work of a dumb dinobaby. No smart software required.

AI or smart software has roiled the normal routine of office gossip. We have shifted from “What is it?” to “Who will be affected next?” The integration of AI into work processes, however, is not a new thing. Most people don’t know or don’t recall that when a consultant could run a query from a clunky device like the Texas Instruments Silent 700, AI was already affecting jobs. Whose? Just ask a special librarian who was working when an intermediary was no longer needed to retrieve information from an online database.

A nervous smart robot running state-of-the-art tax software is sufficiently intelligent to be concerned about the meeting with an IRS audit team. Thanks, MSFT Copilot. How’s that security push coming along? Oh, too bad.

I read “Why America’s Most Boring Job Is on the Brink of Extinction.” I think the story was crafted by a person who received either a D or an F in Accounting 100. The lingo links accountants with being really dull people and the nuking of an entire species. No meteor is needed; just smart software, the silent killer. By the way, my two accountants are quite sporty. I rarely fall asleep when they explain life from their point of view. I listen, and I urge you to be attentive as well. Smart software can do some excellent things, but not everything related to tax, financial planning, and keeping inside the white lines of the quite fluid governmental rules and regulations.

Nevertheless, the write up cited above states:

Experts say the industry is nearing extinction because the 150-hour college credit rule, the intense entry exam and long work hours for minimal pay are unappealing to the younger generation.

The “real” news article includes some snappy quotes too. Here’s one I circled: “’The pay is crappy, the hours are long, and the work is drudgery, and the drudgery is especially so in their early years.’”

I am not an accountant, so I cannot comment on the accuracy of this statement. My father was an accountant, and he was into detail work and was able to raise a family. None of us ended up in jail or in the hospital after a gang fight. (I was and still am a sissy. Imagine that: An 80 year old dinobaby sissy with the DNA of an accountant. I am definitely exciting.)

Noting that fewer people are entering the field of accounting, the write up makes a remarkable statement:

… Accountants are becoming overworked and it is leading to mistakes in their work. More than 700 companies cited insufficient staff in accounting and other departments as a reason for potential errors in their quarterly earnings statements…

Does that mean smart software will become the accountants of the future? Some accountants may hope that smart software cannot do accounting. Others will see smart software as an opportunity to improve specific aspects of accounting processes. The problem, however, is not the accountants. The problem with AI is the companies or entrepreneurs who overpromise and underdeliver.

Will smart software replace the insight and timeline knowledge of an experienced numbers wrangler like my father or the two accountants upon whom I rely?

Unlikely. It is the smart software vendors and their marketers who are most vulnerable to the assertions about Philco, the leader.

Stephen E Arnold, September 4, 2024

Social Media Cowboys, the Ranges Are Getting Fences

September 2, 2024

This essay is the work of a dumb dinobaby. No smart software required.

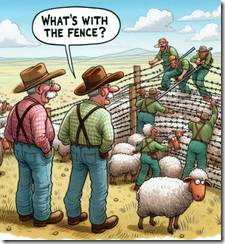

Several recent developments suggest that the wide open and free ranges are being fenced in. How can I justify this statement, pardner? Easy. Check out these recent developments:

- Pavel Durov, the founder of Telegram, was arrested on Saturday, August 24, 2024, at Le Bourget airport near Paris

- TikTok will stand trial for the harms to children caused by the “algorithm”

- Brazil has put up barbed wire to keep Twitter (now X.com) out of the country.

I am not the smartest dinobaby in the rest home, but even I can figure out that governments are taking action after decades of thinking about more weighty matters than the safety of children, the problems social media causes for parents and teachers, and the importance of taking immediate and direct action against those breaking laws.

A couple of social media ranchers are wondering about the actions of some judicial officials. Thanks, MSFT Copilot. Good enough like most software today.

Several questions seem to be warranted.

First, the actions are uncoordinated. Brazil, France, and the US have reached conclusions about different social media companies and acted without consulting one another. How quickly will other countries consider their particular situations and reach similar conclusions about free range technology outfits?

Second, why have legal authorities and legislators in many countries failed to recognize the issues radiating from social media and related technology operators? Was it the novelty of technology? Was it a lack of technology savvy? Was it moral or financial considerations?

Third, how will the harms be remediated? Is it enough to block a service or change penalties for certain companies?

I am personally not moved by those who say speech must be free and unfettered. Sorry. The obvious harms outweigh that self-serving statement from those who are mesmerized by online life or paid to hold and promote that idea. I understand that a percentage of students will become high achievers with or without traditional reading, writing, and arithmetic. However, my concern is the other 95 percent of students. Structured learning is necessary for a society to function. That’s why there is education.

I don’t have any big ideas about ameliorating the obvious damage done by social media. I am a dinobaby and largely untouched by TikTok-type videos or Facebook-type pressures. I am, however, delighted to be able to cite three examples of long overdue action by Brazilian, French, and US officials. Will some of these wild west digital cowboys end up in jail? I might support that, pardner.

Stephen E Arnold, September 2, 2024

Can an AI Journalist Be Dragged into Court and Arrested?

August 28, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read “Being on Camera Is No Longer Sensible: Persecuted Venezuelan Journalists Turn to AI.” The main idea is that an AI-generated video presenter, not a “real” human journalist, can present the news. The write up says:

In daily broadcasts, the AI-created newsreaders have been telling the world about the president’s post-election crackdown on opponents, activists and the media, without putting the reporters behind the stories at risk.

The write up points out:

The need for virtual-reality newscasters is easy to understand given the political chill that has descended on Venezuela since Maduro was first elected in 2013, and has worsened in recent days.

Suppression of information seems to be increasing. With the detainment of Pavel Durov, Russia has expressed concern about this abrogation of free speech. Ukrainian government officials might find this rallying in support of Mr. Durov ironic. In April 2024, Telegram filtered content from Ukraine to Russian citizens.

An AI news presenter sitting in a holding cell. Government authorities want to discuss her approach to “real” news. Thanks, MSFT Copilot. Good enough.

Will AI “presenters” or AI “content” prevent the type of intervention suggested by Venezuelan-type government officials?

Several observations:

- Individual journalists may find that AI avatar “plays” do not fool or amuse certain government authorities. It is possible that the use of AI and the coverage of the tactic in highly regarded “real” news services exacerbate the problem. Somewhere, somehow, a human is behind the avatar. The obvious question is, “Who is that person?”

- Once the individual journalist behind an avatar has been identified and included in an informal or formal discussion, who or what is next in the AI food chain? Is it an organization associated with “free speech,” an online service, or an organization like a giant high-technology company? What will a government do to explore a chat with these entities?

- Once the organization has been pinpointed, what about the people who wrote the software powering the avatar? What will a government do to interact with these individuals?

Step 1 seems fairly simple. Step 2 may involve some legal back and forth, but the process is not particularly novel. Step 3, however, presents a conundrum and some real challenges. Lawyers and law enforcement officials for the country whose “laws” have been broken have to deal with certain protocols. Embracing different techniques can have significant political consequences.

My view is that using AI intermediaries is an interesting use case for smart software. The AI doomsayers invoke smart software taking over. A more practical view of AI is that its use can lead to actions which are, at first, tempests in teapots. Then, when a cluster of AI teapots gets dumped over, difficult-to-predict activities can emerge. The Venezuelan government’s response to AI talking heads delivering the “real” news is a precursor and worth monitoring.

Stephen E Arnold, August 28, 2024

Meta Leadership: Thank you for That Question

August 26, 2024

Who needs the Dark Web when one has Facebook? We learn from The Hill, “Lawmakers Press Meta Over Illicit Drug Advertising Concerns.” Writer Sarah Fortinsky pulls highlights from the open letter a group of House representatives sent directly to Mark Zuckerberg. The rebuke follows a March report from The Wall Street Journal that Meta was under investigation for “facilitating the sale of illicit drugs.” Since that report, the lawmakers lament, Meta has continued to run such ads. We learn:

“The Tech Transparency Project recently reported that it found more than 450 advertisements on those platforms that sell pharmaceuticals and other drugs in the last several months. ‘Meta appears to have continued to shirk its social responsibility and defy its own community guidelines. Protecting users online, especially children and teenagers, is one of our top priorities,’ the lawmakers wrote in their letter, which was signed by 19 lawmakers. ‘We are continuously concerned that Meta is not up to the task and this dereliction of duty needs to be addressed,’ they continued. Meta uses artificial intelligence to moderate content, but the Journal reported the company’s tools have not managed to detect the drug advertisements that bypass the system.”

The bipartisan representatives did not shy from accusing Meta of dragging its heels because it profits off these illicit ad campaigns:

“The lawmakers said it was ‘particularly egregious’ that the advertisements were ‘approved and monetized by Meta.’ … The lawmakers noted Meta repeatedly pushes back against their efforts to establish greater data privacy protections for users and makes the argument ‘that we would drastically disrupt this personalization you are providing,’ the lawmakers wrote. ‘If this personalization you are providing is pushing advertisements of illicit drugs to vulnerable Americans, then it is difficult for us to believe that you are not complicit in the trafficking of illicit drugs,’ they added.”

The letter includes a list of questions for Meta. There is a request for data on how many of these ads the company has discovered itself and how many it missed that were discovered by third parties. It also asks about the ad review process, how much money Meta has made off these ads, what measures are in place to guard against them, and how minors have interacted with them. The legislators also ask how Meta uses personal data to target these ads, a secret the company will surely resist disclosing. The letter gives Zuckerberg until September 6 to respond.

Cynthia Murrell, August 26, 2024

Which Is It, City of Columbus: Corrupted or Not Corrupted Data

August 23, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I learned that Columbus, Ohio, suffered one of those cyber security missteps. But the good news is that I learned from the ever reliable Associated Press, “Mayor of Columbus, Ohio, Says Ransomware Attackers Stole Corrupted, Unusable Data.” But then I read the StateScoop story “Columbus, Ohio, Ransomware Data Might Not Be Corrupted After All.”

The answer is, “I don’t know.” Thanks, MSFT Copilot. Good enough.

The story is a Groundhog Day tale. A bad actor compromises a system. The bad actor delivers ransomware. The senior officers know little about ransomware and even less about the cyber security systems marketed as a proactive, intelligent defense against bad stuff like ransomware. My view, as you know, is that it is easier to create sales decks and marketing collateral than it is to deliver cyber security software that works. Keep in mind that I am a dinobaby. I like products that under promise and over deliver. I like software that works, not sort of works or mostly works. Works. That’s it.

What’s interesting about Columbus other than its zoo, its annual flower festival, and the OCLC organization is that no one can agree on this issue. I believe this is a variation on the Bud Abbott and Lou Costello routine “Who’s on First.”

StateScoop’s story reported:

An anonymous cybersecurity expert told local news station WBNS Tuesday that the personal information of hundreds of thousands of Columbus residents is available on the dark web. The claim comes one day after Columbus Mayor Andrew Ginther announced to the public that the stolen data had been “corrupted” and most likely “unusable.” That assessment was based on recent findings of the city’s forensic investigation into the incident.

The article noted:

Last week, the city shared a fact sheet about the incident, which explains: “While the city continues to evaluate the data impacted, as of Friday August 9, 2024, our data mining efforts have not revealed that any of the dark web-posted data includes personally identifiable information.”

What are the lessons I have learned from these two stories about a security violation and ransomware extortion?

- Lousy cyber security is a result of indifferent (maybe lousy) management. How do I know? The City of Columbus cannot generate a consistent story.

- The compromised data were described in two different and opposite ways. The confusion underscores that the individuals involved are struggling with basic data processes. Who’s on first? I don’t know. No, he’s on third.

- The generalization that no one wants the data misses an important point. Data, once available, is of considerable interest to state actors who may be curious about the employees associated with the university, Chemical Abstracts, or some other information-centric entity in Columbus, Ohio.

Net net: The incident is one more grim reminder of the vulnerabilities which “managers” choose to ignore or leave to people who may lack certain expertise. The fix may begin in the hiring process.

Stephen E Arnold, August 23, 2024

An Ed Critique That Pans the Sundar & Prabhakar Comedy Act

August 16, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read Ed.

Ed refers to Edward Zitron, the thinker behind Where’s Your Ed At. The write up which caught my attention is “Monopoly Money.” I think that Ed’s one-liners will not be incorporated into the Sundar & Prabhakar comedy act. The flubbed live demos are knee slappers, but Ed’s write up is nipping at the heels of the latest Googley gaffe.

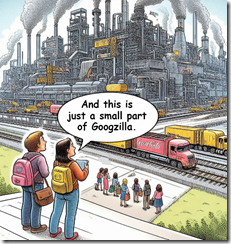

Young people are keen observers of certain high-technology companies. What happens if one of the giants becomes virtual and moves to a Dubai-type location? Who has jurisdiction? Regulatory enforcement delayed means big high-tech outfits are more portable than old-fashioned monopolies. Thanks, MSFT Copilot. Big industrial images are clearly a core competency you have.

Ed’s focus is on the legal decision which concluded that the online advertising company is a monopoly in “general text advertising.” The essay states:

The ruling precisely explains how Google managed to limit competition and choice in the search and ad markets. Documents obtained through discovery revealed the eye-watering amounts Google paid to Samsung ($8 billion over four years) and Apple ($20 billion in 2022 alone) to remain the default search engine on their devices, as well as Mozilla (around $500 million a year), which (despite being an organization that I genuinely admire, and that does a lot of cool stuff technologically) is largely dependent on Google’s cash to remain afloat.

Ed notes:

Monopolies are a big part of why everything feels like it stopped working.

Ed is on to something. The large technology outfits in the US control online. But one of the downstream consequences of what I call the Silicon Valley way or the Googley approach to business is that other industries and market sectors have watched how modern monopolies work. The result is that concentration of power has not been a regulatory priority. The role of data aggregation has been ignored. As a result, outfits like Kroger (a grocery company) are trying to apply Googley tactics to vegetables.

Ed points out:

Remember when “inflation” raised prices everywhere? It’s because the increasingly-dwindling amount of competition in many consumer goods companies allowed them to all raise their prices, gouging consumers in a way that should have had someone sent to jail rather than make $19 million for bleeding Americans dry. It’s also much, much easier for a tech company to establish one, because they often do so nestled in their own platforms, making them a little harder to pull apart. One can easily say “if you own all the grocery stores in an area that means you can control prices of groceries,” but it’s a little harder to point at the problem with the tech industry, because said monopolies are new, and different, yet mostly come down to owning, on some level, both the customer and those selling to the customer.

Blue chip consulting firms flip this comment around. The points Ed makes are the recommendations and tactics the would-be monopolists convert to action plans. My reaction is, “Thanks, Silicon Valley. Nice contribution to society.”

Ed then gets to artificial intelligence, definitely a hot topic. He notes:

Monopolies are inherently anti-consumer and anti-innovation, and the big push toward generative AI is a blatant attempt to create another monopoly — the dominance of Large Language Models owned by Microsoft, Amazon, Google and Meta. While this might seem like a competitive marketplace, because these models all require incredibly large amounts of cloud compute and cash to both train and maintain, most companies can’t really compete at scale.

Bingo.

I noted this Ed comment about AI too:

This is the ideal situation for a monopolist — you pay them money for a service and it runs without you knowing how it does so, which in turn means that you have no way of building your own version. This master plan only falls apart when the “thing” that needs to be trained using hardware that they monopolize doesn’t actually provide the business returns that they need to justify its existence.

Ed then makes a comment which will cause some stakeholders to take a breath:

As I’ve written before, big tech has run out of hyper-growth markets to sell into, leaving them with further iterations of whatever products they’re selling you today, which is a huge problem when big tech is only really built to rest on its laurels. Apple, Microsoft and Amazon have at least been smart enough to not totally destroy their own products, but Meta and Google have done the opposite, using every opportunity to squeeze as much revenue out of every corner, making escape difficult for the customer and impossible for those selling to them. And without something new — and no, generative AI is not the answer — they really don’t have a way to keep growing, and in the case of Meta and Google, may not have a way to sustain their companies past the next decade. These companies are not built to compete because they don’t have to, and if they’re ever faced with a force that requires them to do good stuff that people like or win a customer’s love, I’m not sure they even know what that looks like.

From a Googley point of view, these high-technology outfits are doing what is logical. That’s why the Google advertisement for itself troubled people. The father writing a letter for his child willfully used smart software. The fellow embodied a logical solution to the knotty problem of feelings and appropriate behavior.

Ed suggests several remedies for the Google issue. These make sense, but the next step for Google will be an appeal. Appeals take time. US government officials change. The appetite to fight legions of well resourced lawyers can wane. The decision reveals some interesting insights into the behavior of Google. The problem now is how to alter that behavior without causing significant market disruption. Google is really big, and changes can have difficult-to-predict consequences.

The essay concludes:

I personally cannot leave Google Docs or Gmail without a significant upheaval to my workflow — is a way that they reinforce their monopolies. So start deleting sh*t. Do it now. Think deeply about what it is you really need — be it the accounts you have and the services you need — and take action. They’re not scared of you, and they should be.

Interesting stance.

Several observations:

- Appeals take time. Time favors outfits that lose antitrust cases.

- Google can adapt and morph. The size and scale equip the Google in ways not fathomable to those outside Google.

- Google is not Standard Oil. Google is like AT&T. That breakup resulted in reconsolidation into two big Baby Bells and one outside player. So a shattered Google may just reassemble itself. The fancy word for this is emergent.

Ed hits some good points. My view is that the Google fumbles forward, putting the Sundar & Prabhakar Comedy Act in every city the digital wagon can reach.

Stephen E Arnold, August 16, 2024