Encryption: Not the UK Way but Apple Is A-Okay

March 6, 2025

The UK is on a mission. It seems to be making progress. The BBC reports, "Apple Pulls Data Protection Tool After UK Government Security Row." Technology editor Zoe Kleinman explains:

"Apple is taking the unprecedented step of removing its highest level data security tool from customers in the UK, after the government demanded access to user data. Advanced Data Protection (ADP) means only account holders can view items such as photos or documents they have stored online through a process known as end-to-end encryption. But earlier this month the UK government asked for the right to see the data, which currently not even Apple can access. Apple did not comment at the time but has consistently opposed creating a ‘backdoor’ in its encryption service, arguing that if it did so, it would only be a matter of time before bad actors also found a way in. Now the tech giant has decided it will no longer be possible to activate ADP in the UK. It means eventually not all UK customer data stored on iCloud – Apple’s cloud storage service – will be fully encrypted."

The UK’s security agency, the Home Office, refused to comment on the matter. Apple states it was "gravely disappointed" with this outcome. It emphasizes its longstanding refusal to build any kind of back door or master key. It is the principle of the thing. Instead, it is now removing the locks on the main entrance. Much better.

As of the publication of Kleinman’s article, new iCloud users who tried to opt into ADP received an error message. Apparently, protection for existing users will be stripped at a later date. Some worry Apple’s withdrawal of ADP from the UK sets a bad precedent in the face of similar demands in other countries. Of course, so would caving in to them. The real culprit here, some say, is the UK government that put its citizens’ privacy at risk. Will other governments follow its lead? Will tech firms develop some best practices in the face of such demands? We wonder what their priorities will be.

Cynthia Murrell, March 6, 2025

The Big Cull: Goodbye, Type A People Who Make the Government Chug Along

March 3, 2025

The work of a real, live dinobaby. Sorry, no smart software involved. Whuff, whuff. That’s the sound of my swishing dino tail. Whuff.

I used to work at a couple of big time consulting firms in Washington, DC. Both were populated with the Googlers of that time. The blue chip consulting firm boasted a wider range of experts than the nuclear consulting outfit. There were some lessons I learned beginning with my first day on the job in the early 1970s. Here are three:

- Most of the Federal government operates because of big time consulting firms which do “work” and show up for meetings with government professionals

- Government professionals manage big time consulting firms’ projects with much of the work day associated with these projects and assorted fire drills related to non consulting firm work

- Government workers support, provide input, and take credit or avoid blame for work involving big time consulting firms. These individuals are involved in undertaking tasks not assigned to consulting firms and doing the necessary administrative and support work for big time consulting firm projects.

A big time consulting professional has learned that her $2.5 billion project has been cancelled. The contract workers are now coming toward her, and they are a bit agitated because they have been terminated. Thanks, OpenAI. Too bad about your being “out of GPUs.” Planning is sometimes helpful.

There were some other things I learned in 1972, but these three insights appear to have had sticking power. Whenever I interacted with the US federal government, I kept the rules in mind and followed them for a number of not-too-important projects.

This brings me to the article in what is now called Nextgov FCW. I think “FCW” means or meant Federal Computer Week. The story which I received from a colleague who pays a heck of a lot more attention to the federal government than I do caught my attention.

[Note: This article’s link was sometimes working and sometimes not working. If you 404, you will have to do some old fashioned research.] “Trump Administration Asks Agencies to Cull Consultants” says:

The acting head of the General Services Administration, Stephen Ehikian, asked “agency senior procurement executive[s]” to review their consulting contracts with the 10 companies the administration deemed the highest paid using procurement data — Deloitte, Accenture Federal Services, Booz Allen Hamilton, General Dynamics, Leidos, Guidehouse, Hill Mission Technologies Corp., Science Applications International Corporation, CGI Federal and International Business Machines Corporation — in a memo dated Feb. 26 obtained by Nextgov/FCW. Those 10 companies “are set to receive over $65 billion in fees in 2025 and future years,” Ehikian wrote. “This needs to, and must, change,” he added in bold.

Mr. Ehikian’s GSA biography states:

Stephen Ehikian currently serves as Acting Administrator and Deputy Administrator of the General Services Administration. Stephen is a serial entrepreneur in the software industry who has successfully built and sold two companies focused on sales and customer service to Salesforce (Airkit.ai in 2023 and RelateIQ in 2014). He most recently served as Vice President of AI Products and has a strong record of identifying next-generation technology. He is committed to accelerating the adoption of technology throughout government, driving maximum efficiency in government procurement for the benefit of all taxpayers, and will be working closely with the DOGE team to do so. Stephen graduated from Yale University with a bachelor’s degree in Mechanical Engineering and Economics and earned an MBA from Stanford University.

The firms identified in the passage from Nextgov would have viewed a person with Mr. Ehikian’s credentials as a potential candidate for a job. In the 1970s, an individual with prior business experience and an MBA would have been added as an associate and assigned to project teams. He would have attended one of the big time consulting firms’ “charm schools.” The idea at the firm which employed me was that each big time consulting firm had a certain way of presenting information, codes of conduct, rules of engagement with prospects and clients, and even the way to dress.

Today I am not sure what a managing partner would assign a person like Mr. Ehikian to undertake. My initial thought is that I am a dinobaby and don’t have a clue about how one of the big time firms in the passage listing companies with multi billions of US government contracts operates. I don’t think too much would change because at the firm where I labored for a number of years much of the methodology was nailed down by 1920 and had persisted for 50 years when I arrived. Now 50 years from the date of my arrival, I would bet dollars to donuts that the procedures, the systems, and the methods are quite similar. If a procedure works, why change it dramatically? Incremental improvements will get the contract signed. The big time consulting firms have a culture and acculturation is important to these firms’ success.

The cited Nextgov article reports:

The notice comes alongside a new executive order directing agencies to build centralized tech to record all payments issued through contracts and grants, along with justification for those payments. Agency leaders were also told to review all grants and contracts within 30 days and terminate or modify them to reduce spending under that executive order.

This project to “build centralized technology to record all payments issued through contracts and grants” is exactly the type of work that some of the big time consulting firms identified can do. I know that some government entities have the expertise to create this type of system. However, given the time windows, the different departments and cross departmental activities, and the accounting and database hoops that must be navigated, the order to “build centralized technology to record all payments” is a very big job. (That’s why big time consulting firms exist. The US federal government has not developed the pools of expensive and specialized talent to do some big jobs.) I have worked on not-too-important jobs, and I found that just do it was easier said than done.

Several observations:

- I am delighted that I am no longer working at either of the big time consulting firms which used to employ me. At age 80, I don’t have the stamina to participate in the intense, contentious, what are we going to do meetings that are going to ruin many consulting firms’ weekends.

- I am not sure what will happen when the consulting firms’ employees and contractors just stop work. Typically, when there is no billing, people are terminated. Bang. Yes, just like that. Maybe today’s work world is a kinder and gentler place, but I am not sure about that.

- For citizens and other firms dependent on the big time consulting firms’ projects, things are likely to chug along with not much visible change. Then just like the banking outages today (February 28, 2025) in the UK, systems and services will begin to exhibit issues. Some may just outright fail without the ministrations of consulting firm personnel.

- Figuring out which project is mission critical and which is not may be more difficult than replacing a broken MacBook Pro at the Apple Store in the old Carnegie Library Building on K Street. Decisions like these were typical of the projects that big time consulting firms were set up to handle with aplomb. A mistake may take months to surface. If several pop up in one week, excitement will ensue. That thinking for the future is what big time consulting firms do as part of their work. Pulling a plug on an overheating iron in a DC hotel is easy. Pulling a plug on a consulting firm is different for many reasons.

Net net: The next few months will be interesting. I have my eye on the big time consulting firms. I am also watching how the IRS and Social Security System computer infrastructure works. I want to know but no longer will be able to get the information about the management of devices in the arsenal not too far from a famous New Jersey golf course. I wonder about the support of certain military equipment outside the US. I am doing a lot of wondering.

That is fine for me. I am a dinobaby. For others in the big time consulting game and the US government professionals who are involved with these service firms’ contracts, life is a bit more interesting.

Stephen E Arnold, March 3, 2025

Researchers Raise Deepseek Security Concerns

February 25, 2025

What a shock. It seems there are some privacy concerns around Deepseek. We learn from the Boston Herald, “Researchers Link Deepseek’s Blockbuster Chatbot to Chinese Telecom Banned from Doing Business in US.” Former Wall Street Journal and now AP professional Byron Tau writes:

“The website of the Chinese artificial intelligence company Deepseek, whose chatbot became the most downloaded app in the United States, has computer code that could send some user login information to a Chinese state-owned telecommunications company that has been barred from operating in the United States, security researchers say. The web login page of Deepseek’s chatbot contains heavily obfuscated computer script that when deciphered shows connections to computer infrastructure owned by China Mobile, a state-owned telecommunications company.”

If this is giving you déjà vu, dear reader, you are not alone. This scenario seems much like the uproar around TikTok and its Chinese parent company ByteDance. But it is actually worse. ByteDance’s direct connection to the Chinese government is, as of yet, merely hypothetical. China Mobile, on the other hand, is known to have direct ties to the Chinese military. We learn:

“The U.S. Federal Communications Commission unanimously denied China Mobile authority to operate in the United States in 2019, citing ‘substantial’ national security concerns about links between the company and the Chinese state. In 2021, the Biden administration also issued sanctions limiting the ability of Americans to invest in China Mobile after the Pentagon linked it to the Chinese military.”

It was Canadian cybersecurity firm Feroot Security that discovered the code. The AP then had the findings verified by two academic cybersecurity experts. Might similar code be found within TikTok? Possibly. But, as the article notes, the information users feed into Deepseek is a bit different from the data TikTok collects:

“Users are increasingly putting sensitive data into generative AI systems — everything from confidential business information to highly personal details about themselves. People are using generative AI systems for spell-checking, research and even highly personal queries and conversations. The data security risks of such technology are magnified when the platform is owned by a geopolitical adversary and could represent an intelligence goldmine for a country, experts warn.”

Interesting. But what about CapCut, the ByteDance video thing?

Cynthia Murrell, February 25, 2025

Rest Easy. AI Will Not Kill STEM Jobs

February 25, 2025

Written by a dinobaby, not smart software. But I would replace myself with AI if I could.

Bob Hope quipped, “A sense of humor is good for you. Have you ever heard of a laughing hyena with heart burn?” No, Bob, I have not.

Here’s a more modern joke for you from the US Bureau of Labor Statistics circa 2025. It is much fresher than Mr. Hope’s quip from a half century ago.

The Bureau of Labor Statistics says:

Employment in the professional, scientific, and technical services sector is forecast to increase by 10.5% from 2023 to 2033, more than double the national average. (Source: Investopedia)

Okay, so what about those LinkedIn, XTwitter, and Reddit posts about technology workers not being able to find jobs in these situations:

- Recent college graduates with computer science degrees

- Recently terminated US government workers from agencies like 18F

- Workers over 55 urged to take early retirement?

The item about the rosy job market appeared in Slashdot too. Here’s the quote I noted:

Employment in the professional, scientific, and technical services sector is forecast to increase by 10.5% from 2023 to 2033, more than double the national average. According to the BLS, the impact AI will have on tech-sector employment is highly uncertain. For one, AI is adept at coding and related tasks. But at the same time, as digital systems become more advanced and essential to day-to-day life, more software developers, data managers, and the like are going to be needed to manage those systems. "Although it is always possible that AI-induced productivity improvements will outweigh continued labor demand, there is no clear evidence to support this conjecture," according to BLS researchers.

Robert Half, an employment firm, is equally optimistic. Just a couple of weeks ago, that outfit said:

Companies continue facing strong competition from other firms for tech talent, particularly for candidates with specialized skills. Across industries, AI proficiency tops the list of most-sought capabilities, with organizations needing expertise for everything from chatbots to predictive maintenance systems. Other in-demand skill areas include data science, IT operations and support, cybersecurity and privacy, and technology process automation.

What am I to conclude from these US government data? Here are my preliminary thoughts:

- The big time consulting firms are unlikely to change their methods of cost reduction; that is, if software (smart or dumb) can do a job for less money, that software will be included on a list of options. Given a choice of going out of business or embracing smart software, a significant percentage of consulting firm clients will give AI a whirl. If AI works and the company stays in business or grows, the humans will be repurposed or allowed to find their future elsewhere.

- The top one percent in any discipline will find work. The other 99 percent will need to have family connections, family wealth, or a family business to provide a boost for a great job. What if a person is not in the top one percent of something? Yeah, well, that’s not good for quite a few people.

- The permitted dominance of duopolies or oligopolies in most US business sectors means that some small and mid-sized businesses will have to find ways to generate revenue. My experience in rural Kentucky is that local accounting, legal, and technology companies are experimenting with smart software to boost productivity (the MBA word for cheaper work functions). Local employment options are dwindling because the smaller employers cannot stay in business. Potential employees want more pay than the company can afford. Result? Downward spiral which appears to be accelerating.

Am I confident in statistics related to wages, employment, and the growth of new businesses and industrial sectors? No, I am not. Statistical projects work pretty well in nuclear fuel management. Nested mathematical procedures in smart software work pretty well for some applications. Using smart software to reduce operating costs works pretty well right now.

Net net: Without meaningful work, some of life’s challenges will spark unanticipated outcomes. Exactly what type of stress breaks a social construct? Those in the job hunt will provide numerous test cases, and someone will do an analysis. Will it be correct? Sure, close enough for horseshoes.

Stop complaining. Just laugh as Mr. Hope noted. No heartburn and cost savings to boot.

Stephen E Arnold, February 25, 2025

Thailand Creeps into Action with Some Swiss Effort

February 24, 2025

Hackers are intelligent bad actors who use their skills for evil. They do black hat hacking tricks for their own gains. The cyber criminals recently caught in a raid performed by three countries were definitely huge scammers. Khaosod English reports on the takedown: “Thai-Swiss-US Operation Nets Hackers Behind 1,000+ Cyber Attacks.”

Four European hackers were arrested on the Thai island of Phuket. They were charged with using ransomware to steal $16 million from over 1,000 victims. The hackers were wanted by Swiss and US authorities.

Thai, Swiss, and US law enforcement officials teamed up in Operation Phobos Aetor to arrest the bad actors. They were arrested on February 10, 2025 in Phuket. The details are as follows:

“The suspects, two men and two women, were apprehended at Mono Soi Palai, Supalai Palm Spring, Supalai Vista Phuket, and Phyll Phuket x Phuketique Phyll. Police seized over 40 pieces of evidence, including mobile phones, laptops, and digital wallets. The suspects face charges of Conspiracy to Commit an Offense Against the United States and Conspiracy to Commit Wire Fraud.

The arrests stemmed from an urgent international cooperation request from Swiss authorities and the United States, involving Interpol warrants for the European suspects who had entered Thailand as part of a transnational criminal organization.”

The ransomware attacks accessed private networks to steal personal data and they also encrypted files. The hackers demanded cryptocurrency payments for decryption keys and threatened to publish data if the ransoms weren’t paid.

Let’s give a round of applause for putting these crooks behind bars! On to Myanmar and Lao PDR!

Whitney Grace, February 24, 2025

Google and Personnel Vetting: Careless?

February 20, 2025

No smart software required. This dinobaby works the old fashioned way.

The Sundar & Prabhakar Comedy Show pulled another gag. This one did not delight audiences the way Prabhakar’s AI presentation did, nor does it outdo Google’s recent smart software gaffe. It is, however, a bit of a hoot for an outfit with money, smart people, and smart software.

I read the decidedly non-humorous news release from the Department of Justice titled “Superseding Indictment Charges Chinese National in Relation to Alleged Plan to Steal Proprietary AI Technology.” The write up states on February 4, 2025:

A federal grand jury returned a superseding indictment today charging Linwei Ding, also known as Leon Ding, 38, with seven counts of economic espionage and seven counts of theft of trade secrets in connection with an alleged plan to steal from Google LLC (Google) proprietary information related to AI technology. Ding was initially indicted in March 2024 on four counts of theft of trade secrets. The superseding indictment returned today describes seven categories of trade secrets stolen by Ding and charges Ding with seven counts of economic espionage and seven counts of theft of trade secrets.

Thanks, OpenAI, good enough.

Mr. Ding, obviously a Type A worker, appears to have been quite industrious at the Google. He was not working for the online advertising giant; he was working for another entity. The DoJ news release describes his set up this way:

While Ding was employed by Google, he secretly affiliated himself with two People’s Republic of China (PRC)-based technology companies. Around June 2022, Ding was in discussions to be the Chief Technology Officer for an early-stage technology company based in the PRC. By May 2023, Ding had founded his own technology company focused on AI and machine learning in the PRC and was acting as the company’s CEO.

What technology caught Mr. Ding’s eye? The write up reports:

Ding intended to benefit the PRC government by stealing trade secrets from Google. Ding allegedly stole technology relating to the hardware infrastructure and software platform that allows Google’s supercomputing data center to train and serve large AI models. The trade secrets contain detailed information about the architecture and functionality of Google’s Tensor Processing Unit (TPU) chips and systems and Google’s Graphics Processing Unit (GPU) systems, the software that allows the chips to communicate and execute tasks, and the software that orchestrates thousands of chips into a supercomputer capable of training and executing cutting-edge AI workloads. The trade secrets also pertain to Google’s custom-designed SmartNIC, a type of network interface card used to enhance Google’s GPU, high performance, and cloud networking products.

At least, Mr. Ding validated the importance of some of Google’s sprawling technical insights. That’s a plus I assume.

One of the more colorful items in the DoJ news release concerned “evidence.” The DoJ says:

As alleged, Ding circulated a PowerPoint presentation to employees of his technology company citing PRC national policies encouraging the development of the domestic AI industry. He also created a PowerPoint presentation containing an application to a PRC talent program based in Shanghai. The superseding indictment describes how PRC-sponsored talent programs incentivize individuals engaged in research and development outside the PRC to transmit that knowledge and research to the PRC in exchange for salaries, research funds, lab space, or other incentives. Ding’s application for the talent program stated that his company’s product “will help China to have computing power infrastructure capabilities that are on par with the international level.”

Mr. Ding did not use Google’s cloud-based presentation program. I found the explicit desire to “help China” interesting. One wonders how Google’s Googley interview process run by Googley people failed to notice any indicators of Mr. Ding’s loyalties. Googlers are very confident of their Googliness, which obviously tolerates an insider threat who conveys data to a nation state known to be adversarial in its view of the United States.

I am a dinobaby, and I find this type of employee insider threat at Google surprising. Google bought Mandiant. Google has internal security tools. Google has a very proactive stance about its security capabilities. However, in this case, I wonder if a Googler ever noticed that Mr. Ding used PowerPoint, not the Google-approved presentation program. No true Googler would use PowerPoint, an archaic, third party program Microsoft bought eons ago and has managed to pump full of steroids for decades.

Yep, the tell — Googlers who use Microsoft products. Sundar & Prabhakar will probably integrate a short bit into their act in the near future.

Stephen E Arnold, February 20, 2025

Unified Data Across Governments? How Useful for a Non Participating Country

February 18, 2025

A dinobaby post. No smart software involved.

I spoke with a person whom I have known for a long time. The individual lives and works in Washington, DC. He mentioned “disappeared data.” I did some poking around and, sure enough, certain US government public facing information had been “disappeared.” Interesting. For a short period of time I made a few contributions to what was FirstGov.gov, now USA.gov.

For those who don’t remember or don’t know about President Clinton’s Year 2000 initiative, the idea was interesting. At that time, access to public-facing information on US government servers was via the Web search engines. In order to locate a tax form, one would navigate to an available search system. On Google one would just slap in IRS or IRS and the form number.

Most of the US government public-facing Web sites were reasonably straightforward. Others were fairly difficult to use. The US Marine Corps’ Web site had poor response times. I think it was hosted on something called Server Beach, and the would-be recruit would have to wait for the recruitment station data to appear. The Web page worked but it was slow.

President Clinton wanted, or someone in his administration wanted, the problem to be fixed with a search system for US government public-facing content. After a bit of work, the system went online in September 2000. The system morphed into a US government portal a bit like the Yahoo.com portal model.

I thought about the information in “Oracle’s Ellison Calls for Governments to Unify Data to Feed AI.” The write up reports:

Oracle Corp.’s co-founder and chairman Larry Ellison said governments should consolidate all national data for consumption by artificial intelligence models, calling this step the “missing link” for them to take full advantage of the technology. Fragmented sets of data about a population’s health, agriculture, infrastructure, procurement and borders should be unified into a single, secure database that can be accessed by AI models…

Several questions arise; for instance:

- What country or company provides the technology?

- Who manages what data are added and what data are deleted?

- What are the rules of access?

- What about public data which are not available for public access; for example, the “disappeared” data from US government Web sites?

- What happens to commercial or quasi-commercial government units which repackage public data and sell it at a hefty mark up?

Based on my brief brush with the original Clinton project, I think the idea is interesting. But I have one other question in mind: What happens when non-participating countries get access to the aggregated public facing data? Digital information is a tricky resource to secure. In fact, once data are digitized and connected to a network, they are fair game. Someone, somewhere will figure out how to access, obtain, exfiltrate, and benefit from aggregated data.

The idea is, in my opinion, a bit of grandstanding like Google’s quantum supremacy claims. But US high technology wizards are ready and willing to think big thoughts and take even bigger actions. We live in interesting times, but I am delighted that I am old.

Stephen E Arnold, February 18, 2025

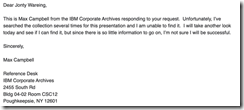

IBM Faces DOGE Questions?

February 17, 2025

Simon Willison reminded us of the famous IBM internal training document that reads: “A Computer Can Never Be Held Accountable.” The document is also relevant for AI algorithms. Unfortunately the document has a mysterious history and the IBM Corporate Archives don’t have a copy of the presentation. A Twitter user with the name @bumblebike posted the original image. He said he found it when he went through his father’s papers. Unfortunately, the presentation with the legendary statement was destroyed in a 2019 flood.

“I believe the image was first shared online in this tweet by @bumblebike in February 2017. Here’s where they confirm it was from 1979 internal training.

Here’s another tweet from @bumblebike from December 2021 about the flood:

Unfortunately destroyed by flood in 2019 with most of my things. Inquired at the retirees club zoom last week, but there’s almost no one the right age left. Not sure where else to ask.”

We don’t need the actual IBM document to know that IBM hasn’t done well when it comes to search. IBM, like most firms, tried and sort of fizzled. (Remember Data Fountain or CLEVER?) IBM also moved into content management. Yep, the semi-Xerox, semi-information thing. But the good news is that a time sharing solution called Watson is doing pretty well. It’s not winning Jeopardy! but it is chugging along.

Now IBM professionals in DC have to answer the DOGE nerd squad questions? Why not give OpenAI a whirl? The old Jeopardy! winner is kicking back. DOGE wants to know.

Whitney Grace, February 17, 2025

What Happens When Understanding Technology Is Shallow? Weakness

February 14, 2025

Yep, a dinobaby wrote this blog post. Replace me with a subscription service or a contract worker from Fiverr. See if I care.

I like this question. Even more satisfying is that a big name seems to have answered it. I refer to an essay by Gary Marcus in “The Race for “AI Supremacy” Is Over — at Least for Now.”

Here’s the key passage in my opinion:

China caught up so quickly for many reasons. One that deserves Congressional investigation was Meta’s decision to open source their LLMs. (The question that Congress should ask is, how pivotal was that decision in China’s ability to catch up? Would we still have a lead if they hadn’t done that? Deepseek reportedly got its start in LLMs retraining Meta’s Llama model.) Putting so many eggs in Altman’s basket, as the White House did last week and others have before, may also prove to be a mistake in hindsight. … The reporter Ryan Grim wrote yesterday about how the US government (with the notable exception of Lina Khan) has repeatedly screwed up by placating big companies and doing too little to foster independent innovation

The write up is quite good. What’s missing, in my opinion, is the linkage of a probe to determine how a technology innovation released as a not-so-stealthy open source project can affect the US financial markets. The result was satisfying to the Chinese planners.

Also, the write up does not put the probe or “foray” in a strategic context. China wants to make certain its simple message “China smart, US dumb” gets into the world’s communication channels. That worked quite well.

Finally, the write up does not point out that the US approach to AI has given China an opportunity to demonstrate that it can borrow and refine with aplomb.

Net net: I think China is doing Shein and Temu in the AI and smart software sector.

Stephen E Arnold, February 14, 2025

Hauling Data: Is There a Chance of Derailment?

February 13, 2025

Another dinobaby write up. The only smart software is the lousy train illustration.

I spotted some chatter about US government Web sites going off line. Since I stepped away from the “index the US government” project, I don’t spend much time poking around the content at dot gov and in some cases dot com sites operated by the US government. Let’s assume that some US government servers are now blocked and the content has gone dark to a user looking for information generated by US government entities.

If libraries chug chug down the information railroad tracks to deliver data, what does the “Trouble on the Tracks” sign mean? Thanks, You.com. Good enough.

The fix in most cases is to use Bing.com. My recollection is that a third party like Bing provided the search service to the US government. A good alternative is to use Google.com, the qualifier site: command, and a bit of obscenity. The obscenity causes the Google AI to just generate a semi relevant list of links. In a pinch, you could poke around for a repository of US government information. Unfortunately the Library of Congress is not that repository. The Government Printing Office does not do the job either. The Internet Archive is a hit-and-miss archive operation.

Is there another alternative? Yes. Harvard University announced its Data.gov archive. The institution’s Library Innovation Lab Team said on February 6, 2025:

Today we released our archive of data.gov on Source Cooperative. The 16TB collection includes over 311,000 datasets harvested during 2024 and 2025, a complete archive of federal public datasets linked by data.gov. It will be updated daily as new datasets are added to data.gov.

I like this type of archive, but I am a dinobaby, not a forward leaning, “with it” thinker. Information in my mind belongs in a library. A library, in general, should provide students and those seeking information with a place to go to obtain information. The only advertising I see in a library is an announcement about a bake sale to raise funds for children’s reading material.

Will the Harvard initiative and others like it collide with something on the train tracks? Will the money to buy fuel for the engine’s power plant be cut off? Will the train drivers be forced to find work at Shake Shack?

I have no answers. I am glad I am old, but I fondly remember when the job was to index the content on US government servers. The quaint idea formulated by President Clinton was to make US government information available. Now one has to catch a train.

Stephen E Arnold, February 13, 2025