Is Google Really Clever and Well Managed or the Other Way Round?

December 19, 2023

This essay is the work of a dumb dinobaby. No smart software required.

“Google Will Pay $700 Million to Settle a Play Store Antitrust Lawsuit with All 50 US States” reports that Google put up a blog post. (You can read that at this link. The title of the post is worth the click.) Neowin.net reported that Google will “make some changes.”

“What’s happened to our air vent?” asks one government regulatory professional. Thanks, MSFT Copilot. Good enough.

Change is good. It is better if that change is organic in my opinion. But change is change. I noted this statement in the Neowin.net article:

The public reveal of this settlement between Google and the US state attorney generals comes just a few days after a jury ruled against Google in a similar case with developer Epic Games. The jury agreed with Epic’s view that Google was operating an illegal monopoly with its Play Store on Android devices. Google has stated it will appeal the jury’s decision.

Yeah, timing.

Several observations:

- It appears that some people perceive Google as exercising control over their decisions and the framing of those decisions

- The business culture creating the need to pay a $700 million penalty is likely to persist because, in my opinion, the people who write checks at Google are not the people facilitating the behaviors creating the legal issue

- The payday, when distributed, is not the remedy for some of those snared in the Googley approach to business.

Net net: Other nation states may look at the $700 million number and conclude, “Let’s take another look at that outfit.”

Stephen E Arnold, December 19, 2023

Google and Its Epic Magic: Will It Keep on Thrilling?

December 17, 2023

This essay is the work of a dumb dinobaby. No smart software required.

The Financial Times (the orange newspaper) published a paywalled essay/interview with Epic Games’s CEO Tim Sweeney. The hook for the sit down was a court proceeding which determined that Google had acted in an illegal way. How? Google developed Android, then Google used that mobile system as a platform for revenue generation. The revenue methods appear to have involved one-off special deals with some companies and a hefty commission on sales made via the Google Play Store.

Will the magic show continue to surprise and entertain the innocent at the party? Thanks, MSFT Copilot. Close enough for horseshoes, but I wanted a Godzilla monster in a tuxedo doing the tricks. But that’s forbidden.

Several items struck me in the article “Epic Games Chief Concerned Google Will Get Away with App Store Charges.”

First, the trial made clear that Google was unable to back up certain data. Here’s how the Financial Times’s story phrased this matter:

The judge in the case, US district judge James Donato, also criticized the company for its failure to preserve evidence, with internal policies for deleting chats. He instructed the jury that they were free to conclude Google’s chat deletion policies were designed to conceal incriminating evidence. “The Google folks clearly knew what they were doing,” Sweeney said. “They had very lucid writings internally as they were writing emails to each other, though they destroyed most of the chats.” “And then there was the massive document destruction,” Sweeney added. “It’s astonishing that a trillion-dollar corporation at the pinnacle of the American tech industry just engages in blatantly dishonest processes, such as putting all of their communications in a form of chat that is destroyed every 24 hours.” Google has since changed its chat deletion policy.

Taking steps to obscure evidence suggests to me that Google operates in an ethical zone which the judge and I find uncomfortable. The behavior also implies that Google professionals are not just clever, but that they do what pays off within a governance system which is comfortable with a philosophy of entitlement. Google does what Google does. Oh, that is a problem for others. Well, that’s too bad.

Second, according to the article, Google would pursue “alternative payment methods.” The online ad giant would then slap a fee to list a product in the Google Play Store. The method has a number of variations, which can range from a fee for promoting a product to offering different size listings. The idea is similar to a grocery chain charging a manufacturer to put annoying free standing displays of breakfast foods in the center of a high traffic aisle.

Third, Mr. Sweeney seems happy with the evidence about payola which emerged during the trial. Google appears to have paid Samsung to sell its digital goods via the Google Play Store. The pay-to-play model apparently prevented the South Korean company from setting up an alternative store for Android equipped mobile devices.

Several observations:

- The trial, unlike the proceedings in the DC monopoly probe, produced details about what Google does to generate lock in, money, and Googliness

- The destruction of evidence makes clear a disdain for behavior which preserves the trust and integrity of certain norms of behavior

- The trial makes clear that Google wants to preserve its dominant position and will pay to remain Number One.

Net net: Will Google’s magic wow everyone as it did when the company was gaining momentum? For some, yes. For others, no, sorry. I think the costume Google has worn for decades is now weakening at the seams. But the show must go on.

Stephen E Arnold, December 17, 2023

An Effort to Put Spilled Milk Back in the Bottle

December 15, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Microsoft was busy when the Activision Blizzard saga began. I dimly recall thinking, “Hey, one way to distract people from the SolarWinds’ misstep would be to become an alleged game monopoly.” I thought that Microsoft would drop the idea, but, no. I was wrong. Microsoft really wanted to be an alleged game monopoly. Apparently the successes (past and present) of Nintendo and Sony, the failure of Google’s Grand Slam attempt, and the annoyance of refurbished arcade game machines were real. Microsoft has focus. And guess what government agency does not? Maybe the Federal Trade Commission?

Two bureaucrats-to-be engage in a mature discussion about the rules for the old-fashioned game of Monopoly. One will become a government executive; the other will become a senior legal professional at a giant high-technology outfit. Thanks, MSFT Copilot. You capture the spirit of rational discourse in a good enough way.

The MSFT game play may not be over. “The FTC Is Trying to Get Back in the Ring with Microsoft Over Activision Deal” asserts:

Nearly five months later, the FTC has appealed the court’s decision, arguing that the lower court essentially just believed whatever Microsoft said at face value…. We said at the time that Microsoft was clearly taking the complaints from various regulatory bodies as some sort of paint by numbers prescription as to what deals to make to get around them. And I very much can see the FTC’s point on this. It brought a complaint under one set of facts only to have Microsoft alter those facts, leading to the courts slamming the deal through before the FTC had a chance to amend its arguments. But ultimately it won’t matter. This last gasp attempt will almost certainly fail. American regulatory bodies have dull teeth to begin with and I’ve seen nothing that would lead me to believe that the courts are going to allow the agency to unwind a closed deal after everything it took to get here.

From my small office in rural Kentucky, the government’s desire or attempt to get “back in the ring” is interesting. It illustrates how many organizations approach difficult issues.

The advantage goes to the outfit with [a] the most money, [b] the mental wherewithal to maintain some semblance of focus, and [c] a mechanism to keep moving forward. The big four wheel drive will make it through the snow better than a person trying to ride a bicycle in a blizzard.

The key sentence in the cited article, in my opinion, is:

“I fail to understand how giving somebody a monopoly of something would be pro-competitive,” said Imad Dean Abyad, an FTC attorney, in the argument Wednesday before the appeals court. “It may be a benefit to some class of consumers, but that is very different than saying it is pro-competitive.”

No problem with that logic.

And who is in charge of today’s Monopoly games?

Stephen E Arnold, December 15, 2023

23andMe: Fancy Dancing at the Security Breach Ball

December 11, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Here’s a story I found amusing. Very Sillycon Valley. Very high school science clubby. Navigate to “23andMe Moves to Thwart Class-Action Lawsuits by Quietly Updating Terms.” The main point of the write up is that the firm’s security was breached. How? Probably those stupid customers or a cyber security vendor installing smart software that did not work.

How some influential wizards work to deflect actions hostile to their interests. In the cartoon, the Big Dog tells a young professional, “Just change the words.” Logical, right? Thanks, MSFT Copilot. Close enough for horseshoes.

The article reports:

Following a hack that potentially ensnared 6.9 million of its users, 23andMe has updated its terms of service to make it more difficult for you to take the DNA testing kit company to court, and you only have 30 days to opt out.

I have spit in a 23andMe tube. I’m good at least for this most recent example of hard-to-imagine security missteps. The article cites other publications but drives home what I think is a useful insight into the thought process of big-time Sillycon Valley firms:

customers were informed via email that “important updates were made to the Dispute Resolution and Arbitration section” on Nov. 30 “to include procedures that will encourage a prompt resolution of any disputes and to streamline arbitration proceedings where multiple similar claims are filed.” Customers have 30 days to let the site know if they disagree with the terms. If they don’t reach out via email to opt out, the company will consider their silence an agreement to the new terms.

No more neutral arbitrators, please. To make the firm’s intentions easier to understand, the cited article concludes:

The new TOS specifically calls out class-action lawsuits as prohibited. “To the fullest extent allowed by applicable law, you and we agree that each party may bring disputes against the other party only in an individual capacity, and not as a class action or collective action or class arbitration” …

I like this move for three reasons:

- It provides another example of how certain Information Highway contractors view the Rules of the Road. In a word, “flexible.” In another word, “malleable.”

- The maneuver is one that seems to be — how shall I phrase it — elephantine, not dainty and subtle.

- The “fix” for the problem is to make the estimable company less likely to get hit with massive claims in a court. Courts, obviously, are not to be trusted in some situations.

I find the entire maneuver chuckle invoking. Am I surprised at the move? Nah. You can’t kid this dinobaby.

Stephen E Arnold, December 11, 2023

The High School Science Club Got Fined for Its Management Methods

December 4, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I almost missed this story: “Google Reaches $27 Million Settlement in Case That Sparked Employee Activism in Tech,” which contains information about the cost of certain management methods. The write up asserts:

Google has reached a $27 million settlement with employees who accused the tech giant of unfair labor practices, setting a record for the largest agreement of its kind, according to California state court documents that haven’t been previously reported.

The kindly administrator (a former legal eagle) explains to the intelligent teens in the high school science club something unpleasant. Their treatment of some non sci-club types will cost them. Thanks, MSFT Copilot. Who’s in charge of the OpenAI relationship now?

The article pegs the “worker activism” on Google. I don’t know if Google is fully responsible. Googzilla’s shoulders and wallet are plump enough to carry the burden in my opinion. The article explains:

In terminating the employee, Google said the person had violated the company’s data classification guidelines that prohibited staff from divulging confidential information… Along the way, the case raised issues about employee surveillance and the over-use of attorney-client privilege to avoid legal scrutiny and accountability.

Not surprisingly, the Google management took a stand against the apparently unjust and unwarranted fine. The story notes via a quote from someone who is in the science club and familiar with its management methods:

“While we strongly believe in the legitimacy of our policies, after nearly eight years of litigation, Google decided that resolution of the matter, without any admission of wrongdoing, is in the best interest of everyone,” a company spokesperson said.

I want to point out that the write up includes links to other articles explaining how the Google is refining its management methods.

Several questions:

- Will other companies hit by activist employees be excited to learn the outcome of Google’s brilliant legal maneuvers which triggered a fine of a mere $27 million?

- Has Google published a manual of its management methods? If not, for what is the online advertising giant waiting?

- With more than 170,000 (plus or minus) employees, has Google found a way to replace the unpredictable, expensive, and recalcitrant employees with its smart software? (Let’s ask Bard, shall we?)

After 25 years, the Google finds a way to establish benchmarks in managerial excellence. Oh, I wonder if the company will change its law firm lineup. I mean $27 million. Come on. Loosen the semantic noose and make more ads “relevant.”

Stephen E Arnold, December 4, 2023

PicRights in the News: Happy Holidays

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

With the legal eagles cackling in their nests about artificial intelligence software using content without permission, the notion of rights enforcement is picking up steam. One niche in rights enforcement is the business of using image search tools to locate pictures and drawings which appear in blogs or informational Web pages.

StackOverflow hosts a thread by a developer who linked to or used an image more than a decade ago. On November 23, 2023, the individual queried those reading the Q&A section about a problem: “Am I Liable for Re-Sharing Image Links Provided via the Stack Exchange API?”

The legal eagle jabs with his beak at the person who used an image, assuming it was open source. The legal eagle wants justice to matter. Thanks, MSFT Copilot. A couple of tries, still not on point, but good enough.

The explanation of the situation is interesting to me for three reasons: [a] The alleged infraction took place in 2010; [b] Stack Exchange is a community learning and sharing site which manifests some open sourciness; and [c] information about specific rights, content ownership, and data reached via links is not front and center.

Ignorance of the law, at least in some circles, is no excuse for a violation. The cited post reveals that an outfit doing business as PicRights wants money for the unlicensed use of an image or art in 2010 (at least that’s how I read the situation).

What’s interesting is the related data provided by those who are responding to the request for information; for example:

- A law firm identified as Higbee & Asso. is the apparent pointy end of the spear pointed at the alleged offender’s wallet

- A link to an article titled “Is PicRights a Scam? Are Higbee & Associates Emails a Scam?”

- A marketing type of write up called “How To Properly Deal With A PicRights Copyright Unlicensed Image Letter”.

How did the story end? Here’s what the person accused of infringing did:

According to Law I am liable. I have therefore decided to remove FAQoverflow completely, all 90k+ pages of it, and will negotiate with PicRights to pay them something less than the AU$970 that they are demanding.

What are the downsides to the loss of the FAQoverflow content? I don’t know. But I assume that the legal eagles, after gobbling one snack, are aloft and watching the AI companies. That’s where the big bucks will be. Legal eagles have a fondness for big bucks I believe.

Net net: Justice is served by some interesting birds, eagles and whatnot.

Stephen E Arnold, November 28, 2023

Microsoft, the Techno-Lord: Avoid My Galloping Steed, Please

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

The Merriam-Webster.com online site defines “responsibility” this way:

re·spon·si·bil·i·ty

1 : the quality or state of being responsible: such as

: moral, legal, or mental accountability

: RELIABILITY, TRUSTWORTHINESS

: something for which one is responsible

The online sector has a clever spin on responsibility; that is, in my opinion, the companies have none. Google wants people who use its online tools and post content created with those tools to make sure that what the Google system outputs does not violate any applicable rules, regulations, or laws.

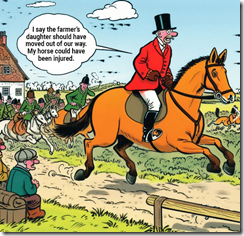

In a traditional fox hunt, the hunters had the “right” to pursue the animal. If a farmer’s daughter were in the way, it was the farmer’s responsibility to keep the silly girl out of the horse’s path. That will teach them to respect their betters I assume. Thanks, MSFT Copilot. I know you would not put me in a legal jeopardy, would you? Now what are the laws pertaining to copyright for a cartoon in Armenia? Darn, I have to know that, don’t I.

Such a crafty way of defining itself as the mere creator of software machines has inspired Microsoft to follow a similar path. The idea is that anyone using Microsoft products, solutions, and services is “responsible” to comply with applicable rules, regulations, and laws.

Tidy. Logical. Complete. Just like a nifty algebra identity.

Microsoft believes they have no liability if an AI, like Copilot, is used to infringe on copyrighted material.

The write up includes this passage:

So this all comes down to, according to Microsoft, that it is providing a tool, and it is up to users to use that tool within the law. Microsoft says that it is taking steps to prevent the infringement of copyright by Copilot and its other AI products, however, Microsoft doesn’t believe it should be held legally responsible for the actions of end users.

The write up (with no Jimmy Kimmel spin) includes this statement, allegedly from someone at Microsoft:

Microsoft is willing to work with artists, authors, and other content creators to understand concerns and explore possible solutions. We have adopted and will continue to adopt various tools, policies, and filters designed to mitigate the risk of infringing outputs, often in direct response to the feedback of creators. This impact may be independent of whether copyrighted works were used to train a model, or the outputs are similar to existing works. We are also open to exploring ways to support the creative community to ensure that the arts remain vibrant in the future.

From my drafty office in rural Kentucky, the refusal to accept responsibility for its business actions, its products, its policies to push tools and services on users, and the outputs of its cloudy system is quite clever. Exactly how will a user of products pushed at users like Edge and its smart features prevent a user from acquiring from a smart Microsoft system something that violates an applicable rule, regulation, or law?

But legal and business cleverness is the norm for the techno-feudalists. Let the serfs deal with the body of the child killed when the barons chase a fox through a small leasehold. I can hear the brave royals saying, “It’s your fault. Your daughter was in the way. No, I don’t care that she was using the free Microsoft training materials to learn how to use our smart software.”

Yep, responsible. The death of the hypothetical child frees up another space in the training course.

Stephen E Arnold, November 27, 2023

A Rare Moment of Constructive Cooperation from Tech Barons

November 23, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Platform-hopping is one way bad actors have been able to cover their tracks. Now several companies are teaming up to limit that avenue for one particularly odious group. TechNewsWorld reports, “Tech Coalition Launches Initiative to Crackdown on Nomadic Child Predators.” The initiative is named Lantern, and the Tech Coalition includes Discord, Google, Mega, Meta, Quora, Roblox, Snap, and Twitch. Such cooperation is essential to combat a common tactic for grooming and/or sextortion: predators engage victims on one platform then move the discussion to a more private forum. Reporter John P. Mello Jr. describes how Lantern works:

Participating companies upload ‘signals’ to Lantern about activity that violates their policies against child sexual exploitation identified on their platform.

Signals can be information tied to policy-violating accounts like email addresses, usernames, CSAM hashes, or keywords used to groom as well as buy and sell CSAM. Signals are not definitive proof of abuse. They offer clues for further investigation and can be the crucial piece of the puzzle that enables a company to uncover a real-time threat to a child’s safety.

Once signals are uploaded to Lantern, participating companies can select them, run them against their platform, review any activity and content the signal surfaces against their respective platform policies and terms of service, and take action in line with their enforcement processes, such as removing an account and reporting criminal activity to the National Center for Missing and Exploited Children and appropriate law enforcement agency.

The visually oriented can find an infographic of this process in the write-up. We learn Lantern has been in development for two years. Why did it take so long to launch? Part of it was designing the program to be effective. Another part was to ensure it was managed responsibly: The project was subjected to a Human Rights Impact Assessment by the Business for Social Responsibility. Experts on child safety, digital rights, advocacy of marginalized communities, government, and law enforcement were also consulted. Finally, we’re told, measures were taken to ensure transparency and victims’ privacy.

In the past, companies hesitated to share such information lest they be considered culpable. However, some hope this initiative represents a perspective shift that will extend to other bad actors, like those who spread terrorist content. Perhaps. We shall see how much tech companies are willing to cooperate. They wouldn’t want to reveal too much to the competition just to help society, after all.

Cynthia Murrell, November 23, 2023

EU Objects to Social Media: Again?

November 21, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Social media is something I observe at a distance. I want to highlight the information in “X Is the Biggest Source of Fake News and Disinformation, EU Warns.” Some Americans are not interested in what the European Union thinks, says, or regulates. On the other hand, the techno feudalistic outfits in the US of A do pay attention when the EU hands out reprimands, fines, and notices of auditions (not for the school play, of course).

This historic photograph shows a super smart, well paid, entitled entrepreneur letting the social media beast out of its box. Now how does this genius put the creature back in the box? Good questions. Thanks, MSFT Copilot. You balked, but finally output a good enough image.

The story in what I still think of as “the capitalist tool” states:

European Commission Vice President Vera Jourova said in prepared remarks that X had the “largest ratio of mis/disinformation posts” among the platforms that submitted reports to the EU. Especially worrisome is how quickly those spreading fake news are able to find an audience.

The Forbes article noted:

The social media platforms were seen to have turned a blind eye to the spread of fake news.

I found the inclusion of this statement a grim reminder of what happens when entities refuse to perform content moderation:

“Social networks are now tailor-made for disinformation, but much more should be done to prevent it from spreading widely,” noted Mollica [a teacher at American University]. “As we’ve seen, however, trending topics and algorithms monetize the negativity and anger. Until that practice is curbed, we’ll see disinformation continue to dominate feeds.”

What is Forbes implying? Is an American corporation a “bad” actor? Is the EU barking at a dogwood, not a dog? Is digital information reshaping how established processes work?

From my point of view, putting a decades old Pandora or passel of Pandoras back in a digital box is likely to be impossible. Once social fabrics have been disintegrated by massive flows of unfiltered information, the woulda, coulda, shoulda chatter is ineffectual. X marks the spot.

Stephen E Arnold, November 21, 2023

The Power of Regulation: Muscles MSFT Meets a Strict School Marm

November 17, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read “The EU Will Finally Free Windows Users from Bing.” The EU? That collection of fractious states which wrangle about irrelevant subjects; to wit, the antics of America’s techno-feudalists. Yep, that EU.

The “real news” write up reports:

Microsoft will soon let Windows 11 users in the European Economic Area (EEA) disable its Bing web search, remove Microsoft Edge, and even add custom web search providers — including Google if it’s willing to build one — into its Windows Search interface. All of these Windows 11 changes are part of key tweaks that Microsoft has to make to its operating system to comply with the European Commission’s Digital Markets Act, which comes into effect in March 2024

The article points out that the DMA includes a “slew” of other requirements. Please, do not confuse “slew” with “stew.” These are two different things.

The old fashioned high school teacher says to the high school super star, “I don’t care if you are an All-State football player, you will do exactly as I say. Do you understand?” The outsized scholar-athlete scowls and says, “Yes, Mrs. Ee-You. I will comply.” Thank you MSFT Copilot. You converted the large company into an image I had of its business practices with aplomb.

Will Microsoft remove Bing — sorry, Copilot — from its software and services offered in the EU? My immediate reaction is that the Redmond crowd will find a way to make the magical software available. For example, will such options as legalese and a check box, a new name, a for fee service with explicit disclaimers and permissions, and probably more GenZ ideas foreign to me do the job?

The techno weight lifter should not be underestimated. Those muscles were developed moving bundles of money, not dumb “belles.”

Stephen E Arnold, November 17, 2023