Allegations of Personal Data Flows from X.com to Au10tix

June 4, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I work from my dinobaby lair in rural Kentucky. What the heck do I know about Hod HaSharon, Israel? The answer is, “Not much.” However, I read an online article called “Elon Musk Now Requiring All X Users Who Get Paid to Send Their Personal ID Details to Israeli Intelligence-Linked Corporation.” I am not sure if the statements in the write up are accurate. I want to highlight some items from the write up because I have not seen information about this interesting identity verification process in my other feeds. This could be the second most covered news item in the last week or two. Number one goes to Google’s telling people to eat a rock a day and its weird “not our fault” explanation of its quantumly supreme technology.

Here’s what I carried away from this X to Au10tix write up. (A side note: Intel outfits like obscure names. In this case, Au10tix is a cute conversion of the word authentic to a unique string of characters. Aw ten tix. Get it?)

Yes, indeed. There is an outfit called Au10tix, and it is based about 60 miles north of Jerusalem, not in the intelware capital of the world, Tel Aviv. The company, according to the cited write up, has a deal with Elon Musk’s X.com. The write up asserts:

X now requires new users who wish to monetize their accounts to verify their identification with a company known as Au10tix. While creator verification is not unusual for online platforms, Elon Musk’s latest move has drawn intense criticism because of Au10tix’s strong ties to Israeli intelligence. Even people who have no problem sharing their personal information with X need to be aware that the company they are using for verification is connected to the Israeli government. Au10tix was founded by members of the elite Israeli intelligence units Shin Bet and Unit 8200.

Sounds scary. But that’s the point of the article. I would like to remind you, gentle reader, that Israel’s vaunted intelligence systems failed as recently as October 2023. That event was described to me by one of the country’s former intelligence professionals as “our 9/11.” Well, maybe. I think it made clear that the intelware does not work as advertised in some situations. I don’t have first-hand information about Au10tix, but I would suggest some caution before engaging in flights of fancy.

The write up presents as actual factual information:

The executive director of the Israel-based Palestinian digital rights organization 7amleh, Nadim Nashif, told the Middle East Eye: “The concept of verifying user accounts is indeed essential in suppressing fake accounts and maintaining a trustworthy online environment. However, the approach chosen by X, in collaboration with the Israeli identity intelligence company Au10tix, raises significant concerns. “Au10tix is located in Israel and both have a well-documented history of military surveillance and intelligence gathering… this association raises questions about the potential implications for user privacy and data security.” Independent journalist Antony Loewenstein said he was worried that the verification process could normalize Israeli surveillance technology.

What the write up did not provide is significant detail. The write up reports:

Au10tix has also created identity verification systems for border controls and airports and formed commercial partnerships with companies such as Uber, PayPal and Google.

My team’s research into online gaming found suggestions that the estimable 888 Holdings may have a relationship with Au10tix. The company pops up in some of our research into facial recognition verification. The Israeli gig work outfit Fiverr.com seems to be familiar with the technology as well. I want to point out that one of the Fiverr gig workers based in the UK reported to me that she was no longer “recognized” by the Fiverr.com system. Yeah, October 2023 style intelware.

Who operates the company? Heading back into my files, I spotted a few names. These individuals may no longer be involved in the company, but several names remind me of individuals who have been active in the intelware game for a few years:

- Ron Atzmon: Chairman (Unit 8200, which was not on the ball in October 2023, it seems)

- Ilan Maytal: Chief Data Officer

- Omer Kamhi: Chief Information Security Officer

- Erez Hershkovitz: Chief Financial Officer (formerly of the very interesting intel-related outfit Voyager Labs, a company about which the Brennan Center has a tidy collection of information related to the LAPD)

The company’s technology is available in the Azure Marketplace. That description identifies three core functions of Au10tix’ systems:

- Identity verification. Allegedly the system has real-time identity verification. Hmm. I wonder why it took quite a bit of time to figure out who did what in October 2023. That question is probably unfair because it appears no patrols or systems “saw” what was taking place. But, I should not nitpick. The Azure service includes a “regulatory toolbox including disclaimer, parental consent, voice and video consent, and more.” That disclaimer seems helpful.

- Biometrics verification. Again, this is an interesting assertion. As imagery of the October 2023 event emerged, I asked myself, “How did those ID to selfie, selfie to selfie, and selfie to token matches work?” Answer: Ask the families of those killed.

- Data screening and monitoring. The system can “identify potential risks and negative news associated with individuals or entities.” That might be helpful in building automated profiles of individuals by companies licensing the technology. I wonder if this capability can be hooked to other Israeli spyware systems to provide a particularly helpful, real-time profile of a person of interest?

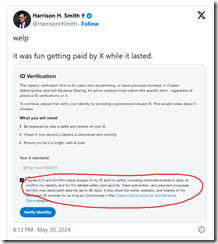

Let’s assume the write up is accurate and X.com is licensing the technology. X.com, according to “Au10tix Is an Israeli Company and Part of a Group Launched by Members of Israel’s Domestic Intelligence Agency, Shin Bet,” now includes this:

The circled segment of the social media post says:

I agree to X and Au10tix using images of my ID and my selfie, including extracted biometric data to confirm my identity and for X’s related safety and security, fraud prevention, and payment purposes. Au10tix may store such data for up to 30 days. X may store full name, address, and hashes of my document ID number for as long as I participate in the Creator Subscription or Ads Revenue Share program.

This dinobaby followed the October 2023 event with shock and surprise. The dinobaby has long been a champion of Israel’s intelware capabilities, and I have done some small projects for firms which I am not authorized to identify. Now I am skeptical and more critical. What if X’s identity service is compromised? What if the servers are breached and the data exfiltrated? What if the system does not work and downstream financial fraud is enabled by X’s push beyond short text messaging? Much intelware is little more than glorified and old-fashioned search and retrieval.

Does Mr. Musk or other commercial purchasers of intelware know about cracks and fissures in intelware systems which allowed the October 2023 event to be undetected until live-fire reports arrived? This tie up is interesting and is worth monitoring.

Stephen E Arnold, June 4, 2024

Elasticsearch Versus RocksDB: The Old Real Time Razzle Dazzle

July 22, 2021

Something happens. The “event” is captured and written to the file. Even if you are watching the “something” happening, there is latency between the event and the sensor or the human perceiving the event. The calculus of real time is mostly avoiding too much talk about latency. But real time is hot because who wants to look at old data? Not TikTok fans and not the money-fueled lovers of Robinhood.
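A rough sketch of where the latency in a “real time” pipeline hides may help. It assumes three timestamps per event: when it happened, when it was written, and when a query returned it. The `Event` class and `latency_breakdown` function are hypothetical names made up for illustration, not part of any vendor’s system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Hypothetical record with three timestamps, all in seconds."""
    name: str
    occurred_at: float  # when the "something" happened
    written_at: float   # when the event was captured and written to the file
    queried_at: float   # when a reader's query actually returned it


def latency_breakdown(event: Event) -> dict:
    """Split the total delay into a capture part and a query part."""
    capture = event.written_at - event.occurred_at
    query = event.queried_at - event.written_at
    return {"capture": capture, "query": query, "total": capture + query}


# Illustrative numbers only: the reader sees the trade a full second late.
trade = Event("trade", occurred_at=100.0, written_at=100.25, queried_at=101.0)
breakdown = latency_breakdown(trade)
```

Neither part is ever zero, which is why “real time” is best read as “low latency” with the actual number left unstated.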

“Rockset CEO on Mission to Bring Real-Time Analytics to the Stack” uses lots of buzzwords, sidesteps inherent latency, and avoids commentary on other allegedly real-time analytics systems. Rockset is built on RocksDB, which is open source software. Nevertheless, there is some interesting information about Elasticsearch; for example:

- Unsupported factoids like: “Every enterprise is now generating more data than what Google had to index in [year] 2000.”

- No definition or baseline for “simple”: “The combination of the converged index along with the distributed SQL engine is what allows Rockset to be fast, scalable, and quite simple to operate.”

- Different from Elasticsearch and RocksDB: “So the biggest difference between Elastic and RocksDB comes from the fact that we support full-featured SQL including JOINs, GROUP BY, ORDER BY, window functions, and everything you might expect from a SQL database. Rockset can do this. Elasticsearch cannot.”

- Similarities with Rockset: “So Lucene and Elasticsearch have a few things in common with Rockset, such as the idea to use indexes for efficient data retrieval.”

- Jargon and unique selling proposition: “We use converged indexes, which deliver both what you might get from a database index and also what you might get from an inverted search index in the same data structure. Lucene gives you half of what a converged index would give you. A data warehouse or columnar database will give you the other half. Converged indexes are a very efficient way to build both.”
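The “converged index” claim in the last item can be made concrete with a toy sketch. This is not Rockset’s implementation, which the article does not describe; it assumes only that each document is indexed two ways at once, in an inverted index for search-style lookups and in a column store for SQL-style aggregation. The class name `ToyConvergedIndex` and the sample fields are invented for illustration.

```python
from collections import defaultdict


class ToyConvergedIndex:
    """Illustration only: every document lands in two indexes at write time."""

    def __init__(self):
        # Inverted index: (field, value) -> set of document ids (search half).
        self.inverted = defaultdict(set)
        # Column store: field -> {document id: value} (analytics half).
        self.columns = defaultdict(dict)

    def add(self, doc_id, doc):
        for field, value in doc.items():
            self.inverted[(field, value)].add(doc_id)
            self.columns[field][doc_id] = value

    def lookup(self, field, value):
        """Search-style question: which documents have field == value?"""
        return set(self.inverted.get((field, value), set()))

    def group_sum(self, group_field, sum_field, doc_ids=None):
        """SQL-style question: SUM(sum_field) GROUP BY group_field."""
        groups = self.columns[group_field]
        values = self.columns[sum_field]
        ids = list(groups) if doc_ids is None else doc_ids
        totals = defaultdict(float)
        for doc_id in ids:
            if doc_id in groups and doc_id in values:
                totals[groups[doc_id]] += values[doc_id]
        return dict(totals)


idx = ToyConvergedIndex()
idx.add(1, {"symbol": "GME", "side": "buy", "qty": 10})
idx.add(2, {"symbol": "GME", "side": "sell", "qty": 4})
idx.add(3, {"symbol": "AMC", "side": "buy", "qty": 7})

buys = idx.lookup("side", "buy")                    # filter via the inverted half
qty_by_symbol = idx.group_sum("symbol", "qty", buys)  # aggregate via the column half
```

The trade-off is visible even in the toy: every write touches both structures, so the convenience at query time is paid for at ingest time, which is one more place for latency to hide.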

Amazon has rolled out its real time system, and there are a number of options available from vendors like Trendalyze.

Each of these vendors emphasizes real time. The problem, however, is that latency exists regardless of system. Each has use cases which make their system seem to be the solution to real time data analysis. That’s what makes horse races interesting. These unfold in real time if one is at the track. Fractional delays have big consequences for those betting their solution is the least latent.

Stephen E Arnold, July 22, 2021

Quantexa: A Better Way to Nail a Money Launderer?

July 29, 2020

We noted the TechCrunch article “Quantexa Raises $64.7M to Bring Big Data Intelligence to Risk Analysis and Investigations.” There were a number of interesting statements or factoids in the write up; for example:

Altogether, Quantexa has “thousands of users” across 70+ countries, it said, with additional large enterprises, including Standard Chartered, OFX and Dunn & Bradstreet.

We also circled in true blue marker this passage:

As an example, typically, an investigation needs to do significantly more than just track the activity of one individual or one shell company, and you need to seek out the most unlikely connections between a number of actions in order to build up an accurate picture. When you think about it, trying to identify, track, shut down and catch a large money launderer (a typical use case for Quantexa’s software) is a classic big data problem.

And lastly:

Marria [the founder] says that it has a few key differentiators from these. First is how its software works at scale: “It comes back to entity resolution that [calculations] can be done in real time and at batch,” he said. “And this is a platform, software that is easily deployed and configured at a much lower total cost of ownership. It is tech and that’s quite important in the current climate.”

Some “real time” systems require time consuming and often elaborate configuration to produce useful outputs. The buzzwords take precedence over the nuts and bolts of installing, herding data, and tuning the outputs of this type of system.

Worth monitoring how the company’s approach moves forward.

Stephen E Arnold, July 29, 2020

Cricket More Popular Than Koran

December 11, 2017

In the West, we tend to think that people in Islamic countries spend all waking hours of the day praying, reading the Koran, and doing other religious-based activities. We forget that these people are just as human as the rest of the world and have a genuine interest in other things, like sports. While not the most popular sport in North America, cricket has billions of fans and is very popular in Pakistan, reports Research Snipers in the article “Most Popular Keywords Searched On Google Pakistan.”

Google Trends is a free service the search engine provides that allows people to see how popular a search query is. It shows how popular the search query is across a global spectrum. When it comes to Pakistan, the most popular search terms of 2017 are as follows:

Top keywords searched in Pakistan in 2017, till now are

- Pakistan

- Cricket Pakistan

- Pakistan Cricket Team

- India

- Pakistan India

- News Pakistan

- Pakistan Jobs.

People in Pakistan are apparently huge fans of the British sport and of shopping. The Google AutoComplete tool suggests search terms based on letters users type into the search box. When “A” is typed into a Pakistan Google search box, Amazon pops up. Pakistanis love to shop and love the sport of cricket. They are not any different than the rest of the world.

Whitney Grace, December 11, 2017

Elastic Stack Offers Machine Learning Functionality on the Side

June 1, 2017

Elastic, the company behind the Elasticsearch stack, has announced the release of a commercial add-on available via X-Pack. The product will detect unusual changes or anomalies in Elasticsearch’s real-time data results.

Elastic is not overpromising the features of the add-on – a fact that is praised by Infoworld in Elasticsearch Stack Wises Up with Machine Learning:

One possible issue is that non-open-source machine learning applications can look more impressive than they actually are. Elastic is avoiding that (for now) by confining the promise of the new features to specific, well-defined goals. It’s also likely to be even more powerful when a full non-beta version is available at the scale provided by cloud partners like Google.

Elastic, based on Lucene, has emerged as the go-to choice for enterprise search. A free and open source version of the software is available at https://www.elastic.co/downloads/elasticsearch. By keeping its goals realistic, is Elastic poised to not only be in the race for the long haul, but win the search gold medal?

Mary Pattengill, June 1, 2017

Google and the Cloud Take on Corporate Database Management

February 1, 2017

The article titled Google Cloud Platform Releases New Database Services, Fighting AWS and Azure for Corporate Customers on GeekWire suggests that Google’s corporate offerings have been weak in the area of database management. Compared to Amazon Web Services and Microsoft Azure, Google is only wading into the somewhat monotonous arena of corporate database needs. The article goes into detail on the offerings,

Cloud SQL, Second Generation, is a service offering instances of the popular MySQL database. It’s most comparable to AWS’s Aurora and SQL Azure, though there are some differences from SQL Azure, so Microsoft allows running a MySQL database on Azure. Google’s Cloud SQL supports MySQL 5.7, point-in-time recovery, automatic storage resizing and one-click failover replicas, the company said. Cloud Bigtable is a NoSQL database, the same one that powers Google’s own search, analytics, maps and Gmail.

The Cloud Bigtable database is made to handle major workloads of 100+ petabytes, and it comes equipped with resources such as Hadoop and Spark. It will be fun to see what happens as Google’s new service offering hits the ground running. How will Amazon and Microsoft react? Will price wars arise? If so, only good can come of it, at least for the corporate consumers.

Chelsea Kerwin, February 1, 2017

Rise of Fake News Should Have All of Us Questioning Our Realities

January 31, 2017

The article on NBC titled Five Tips on How to Spot Fake News Online reinforces the catastrophic effects of “fake news,” or news that flat-out delivers false and misleading information. It is important to separate “fake news” from ideologically-slanted news sources and the mess of other issues dragging any semblance of journalistic integrity through the mud, but the article focuses on a key point. The absolute best practice is to take in a variety of news sources. Of course, when it comes to honest-to-goodness “fake news,” we would all be better off never reading it in the first place. The article states,

A growing number of websites are espousing misinformation or flat-out lies, raising concerns that falsehoods are going viral over social media without any mechanism to separate fact from fiction. And there is a legitimate fear that some readers can’t tell the difference. A study released by Stanford University found that 82 percent of middle schoolers couldn’t spot authentic news sources from ads labeled as “sponsored content.” The disconnect between true and false has been a boon for companies trying to turn a quick profit.

So how do we separate fact from fiction? By checking the web address and avoiding .lo and .co.com addresses, researching the author, differentiating between blogging and journalism, and, again, relying on a variety of sources such as print, TV, and digital. In a time when even the President-to-be, a man with the best intelligence in the world at his fingertips, chooses to spread fake news (aka nonsense) via Twitter that he won the popular vote (he did not), we all need to step up and examine the information we consume and allow to shape our worldview.

Chelsea Kerwin, January 31, 2017

Bing Gets Nostalgic

January 25, 2017

In my entire life, I have never seen so many people who were happy to welcome in a New Year. 2016 will be remembered for violence, political uproar, and other stuff that people wish to forget. Despite the negative associations with 2016, other stuff did happen and looking back might offer a bit of nostalgia for the news and search trends of the past year. On MSFT runs down a list of what happened on Bing in 2016 in “Check Out The Top Search Trends On Bing This Past Year.”

Rather than focusing on a list of just top searches, Bing’s top 2016 searches are divided into categories: video games, Olympians, viral moments, tech trends, and feel good stories. More top searches are located over at the Bing page. However, on the top viral trends it is nice to see that cat videos have gone down in popularity:

Ryder Cup heckler

Villanova’s piccolo girl

Powerball

Aston Martin winner

Who’s the mom?

Evgenia Medvedeva

Harambe the gorilla

#DaysoftheWeek

Cats of the Internet

Pokemon Go

On a personal level, I am surprised that Harambe the gorilla outranked Pokemon Go. Some of these trends I do not even remember making the Internet circuit, and I was on YouTube and Reddit for all of 2016. I have been around enough years to recognize that things come and go. 2016 might have come off as a bad year for many, but in reality it was just another year. It also did not forecast doomsday. That was back in 2000, folks. Get with the times!

Whitney Grace, January 25, 2017

Google Popular Times Now in Real Time

January 20, 2017

Just a quick honk about a little Google feature called Popular Times. LifeHacker points out an improvement to the tool in, “Google Will Now Show You How Busy a Business Is in Real Time.” To help users determine the most efficient time to shop or dine, the feature already provided a general assessment of businesses’ busiest times. Now, though, it bases that information on real-time metrics. Writer Thorin Klosowski specifies:

The real time data is rolling out starting today. You’ll see that it’s active if you see a ‘Live’ box next to the popular times when you search for a business. The data is based on location data and search terms, so it’s not perfect, but will at least give you a decent idea of whether or not you’ll easily find a place to sit at a bar or how packed a store might be. Alongside the real-time data comes some other info, including how long people stay at a location on average and hours by department, which is handy when a department like a pharmacy or deli close earlier the rest of a store.

Just one more way Google tries to make life a little easier for its users. That using it provides Google with even more free, valuable data is just a side effect, I’m sure.

Cynthia Murrell, January 20, 2017

Google May Erase Line Between History and Real Time

December 30, 2016

Do you remember where you were or what you searched the first time you used Google? This investors.com author does and shares the story about that, in addition to the story about what may be the last time he used Google. The article entitled Google Makes An ‘Historic’ Mistake reports on the demise of a search feature on mobile. Users may no longer search published dates in a custom range. It was accessed by clicking “Search tools” followed by “Any time”. The article provides Google’s explanation for the elimination of this feature,

On a product forum page where it made this announcement, Google says:

After much thought and consideration, Google has decided to retire the Search Custom Date Range Tool on mobile. Today we are starting to gradually unlaunch this feature for all users, as we believe we can create a better experience by focusing on more highly-utilized search features that work seamlessly across both mobile and desktop. Please note that this will still be available on desktop, and all other date restriction tools (e.g., “Past hour,” “Past 24 hours,” “Past week,” “Past month,” “Past year”) will remain on mobile.

The author critiques Google, saying this move forces users back to the dying desktop for a feature no longer prioritized on mobile. The point appears to be missed in this critique. The feature was not heavily utilized. With the influx of real-time data, who needs history? Who needs time limits? Certainly not a Google mobile search user.

Megan Feil, December 30, 2016