Bad News Delivered via Math

March 1, 2024

This essay is the work of a dumb humanoid. No smart software required.

I am not going to kid myself. Few people will read “Hallucination is Inevitable: An Innate Limitation of Large Language Models” with their morning donut and cold brew coffee. Even fewer will believe what the three amigos of smart software at the National University of Singapore explain in their ArXiv paper. Hard on the heels of Sam AI-Man’s ChatGPT mastering Spanglish, the financial payoffs are just too massive to pay much attention to wonky outputs from smart software. Hey, use these methods in Excel and exclaim, “This works really great.” I would suggest that the AI buggy drivers slow the Kremser down.

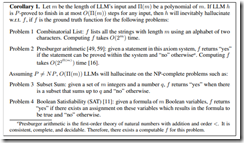

The killer corollary. Source: Hallucination is Inevitable: An Innate Limitation of Large Language Models.

The paper explains that large language models will be reliably incorrect. The paper includes some fancy and not so fancy math to make this assertion clear. Here’s what the authors present as their plain English explanation. (Hold on. I will give the dinobaby translation in a moment.)

Hallucination has been widely recognized to be a significant drawback for large language models (LLMs). There have been many works that attempt to reduce the extent of hallucination. These efforts have mostly been empirical so far, which cannot answer the fundamental question whether it can be completely eliminated. In this paper, we formalize the problem and show that it is impossible to eliminate hallucination in LLMs. Specifically, we define a formal world where hallucination is defined as inconsistencies between a computable LLM and a computable ground truth function. By employing results from learning theory, we show that LLMs cannot learn all of the computable functions and will therefore always hallucinate. Since the formal world is a part of the real world which is much more complicated, hallucinations are also inevitable for real world LLMs. Furthermore, for real world LLMs constrained by provable time complexity, we describe the hallucination-prone tasks and empirically validate our claims. Finally, using the formal world framework, we discuss the possible mechanisms and efficacies of existing hallucination mitigators as well as the practical implications on the safe deployment of LLMs.
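The "cannot learn all of the computable functions" step is, as I read it, a diagonal-style argument: line up the models, then build a ground truth that disagrees with model number i on input number i. Here is a toy sketch of that idea. It is not the authors' proof. The three "models", the `diagonal_truth` helper, and the `hallucinates` checker are names I made up for illustration.

```python
from typing import Callable, Iterable, List

# A "model" here is just a function from an input index to an answer string.
Model = Callable[[int], str]


def diagonal_truth(models: List[Model]) -> Model:
    """Build a ground truth that disagrees with model i on input i."""
    def truth(i: int) -> str:
        if i < len(models):
            # Appending a character guarantees the answer differs
            # from whatever model i says on input i.
            return models[i](i) + "!"
        return "ok"
    return truth


def hallucinates(model: Model, truth: Model, inputs: Iterable[int]) -> List[int]:
    """Return the inputs on which the model's answer differs from the truth."""
    return [i for i in inputs if model(i) != truth(i)]


# Three hypothetical toy models (assumptions, not real LLMs).
models: List[Model] = [
    lambda i: "yes",
    lambda i: str(i),
    lambda i: "no" if i % 2 else "yes",
]

truth = diagonal_truth(models)

for k, m in enumerate(models):
    wrong = hallucinates(m, truth, range(len(models)))
    print(f"model {k} is wrong on inputs {wrong}")
```

Every model in the list is wrong on at least its own index, no matter how the models are written. The paper works with an enumeration of all computable LLMs rather than a list of three lambdas, so treat this as the cartoon version.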

Here’s my take:

- The map is not the territory. LLMs are a map. The territory is the human utterances. The map is small and striving. The territory is what is.

- Fixing the problem requires some as-yet-unworked-out fancier math. When will that happen? Probably never, because no set can contain itself as an element.

- “Good enough” may indeed be acceptable for some applications, just not “all” applications. Because “all” is a slippery fish when it comes to models and training data. Are you really sure you have accounted for all errors, variables, and data? Yes is easy to say; it is probably tough to deliver.

Net net: The bad news is that smart software is now the next big thing. Math is not of too much interest, which is a bit of a problem in my opinion.

Stephen E Arnold, March 1, 2024