FOGINT: France Gears Up for More Encrypted Message Access

March 12, 2025

Yep, another dinobaby original.

Buoyed by the success of the Pavel Durov litigation, France appears to be getting ready to pursue Signal, the Zuck WhatsApp, and the Switzerland-based Proton Mail. The actions seem to lie in the future. But those familiar with the mechanisms of French investigators may predict that information gathering began years ago. With ample documentation, the French legislators with communication links to the French government seem to be ready to require Pavel-ovian responses to requests for user data.

“France Pushes for Law Enforcement Access to Signal, WhatsApp, and Encrypted Email” reports:

An amendment to France’s proposed “Narcotraffic” bill, which is passing through the National Assembly in the French Parliament, will require tech companies to hand over decrypted chat messages of suspected criminals within 72 hours. The law, which aims to provide French law enforcement with stronger powers to combat drug trafficking, has raised concerns among tech companies and civil society groups that it will lead to the creation of “backdoors” in encrypted services that will be exploited by cyber criminals and hostile nation-states. Individuals that fail to comply face fines of €1.5m while companies risk fines of up to 2% of their annual world turnover if they fail to hand over encrypted communications demanded by French law enforcement.

The practical implications of these proposals are two-fold. First, the proposed legislation alerts the identified firms that France is going to take action. The idea is that the services know what’s coming. The French investigators’ delight at recalcitrant companies proactively cooperating will probably be beneficial for the companies. Mr. Durov has learned that cooperation makes it possible for him to envision a future that does not include a stay at the overcrowded and dangerous prison just 16 kilometers from his hotel in Paris. The second is to keep up the momentum. Other countries have been indifferent to or unwilling to take on certain firms which have blown off legitimate requests for information about alleged bad actors. The French can be quite stubborn and have a bureaucracy that almost guarantees a less than amusing experience for the American outfits. The Swiss have experience in dealing with France, and I anticipate a quieter approach to Proton Mail.

The write up includes this statement:

opponents of the French law argue that breaking an encryption application that is allegedly designed for use by criminals is very different from breaking the encryption of chat apps, such as WhatsApp and Signal, and encrypted emails used by billions of people for non-criminal communications. “We do not see any evidence that the French proposal is necessary or proportional. To the contrary, any backdoor will sooner or later be exploited…

I think the statement is accurate. Information has a tendency to leak. But consider the impact on Telegram. That entity is in danger of becoming irrelevant because of France’s direct action against the Teflon-coated Russian Pavel Durov. Cooperation is not enough. The French action seems to put Telegram into a credibility hole, and it is not clear if the organization’s overblown crypto push can stave off user defection and slowing user growth.

Will the French law conflict with European Union and other EU states’ laws? Probably. My view is that the French will adopt the position, “C’est dommage en effet.” The Telegram “problem” is not completely resolved, but France is willing to do what other countries won’t. Is the French Foreign Legion operating in Ukraine? The French won’t say, but some of those Telegram messages are interesting. Oui, c’est dommage. Tip: Don’t fool around with a group of French Foreign Legion fellows, even if you are wearing an EU flag T-shirt and carrying a volume of EU laws, rules, regulations, and policies.

How will this play out? How would I know? I work in an underground office in rural Kentucky. I don’t think our local grocery store carries French cheese. However, I can offer a few tips to executives of the firms identified in the article:

- Do not go to France.

- If you do go to France, avoid interactions with government officials.

- If you must interact with government officials, make sure you have a French avocat or avocate lined up.

France seems so wonderful; it has great food; it has roads without billboards; and it has a penchant for direct action. Examples range from French Guiana to Western Africa. No, the “real” news doesn’t cover these activities. And executives of Signal and the Zuckbook may want to consider their travel plans. Avoid the issues Pavel Durov faces and may have resolved this calendar year. Note the word “may.”

Stephen E Arnold, March 12, 2025

Patents, AI, and Lawyers: Litigators, Start Your Engines

March 7, 2025

Patents can be a useful source of insights, a fact startup Patlytics is banking on. TechCrunch reports, "Patlytics Raises $14M for its Patent Analytics Platform." The firm turbo-charges intellectual property research with bespoke AI. We learn:

"Patlytics’ large language models (LLMs) and generative AI-powered engine are custom-built for IP-related research and other work such as patent application drafting, invention disclosures, invalidity analysis, infringement detection/analysis, Standard Essential Patents (SEPs) analysis, and IP assets portfolio management."

Apparently, the young firm is already meeting with success. We learn:

"The 1-year-old startup said it has seen a 20x increase in ARR and an 18x expansion in its customer base within six months, with a sustained 300% month-over-month growth rate. Patlytics did not disclose how many customers it has but said approximately 50% of its customer base are law firms, and the other half are corporate clients from industries like semiconductors, bio, pharmaceuticals, and more. Additionally, the company now serves customers in South Korea and Japan, and recently launched its first pilot product in London and Germany. Its clients include Abnormal Security, Google, Koch Disruptive Technologies, Quinn Emanuel Urquhart & Sullivan, Richardson Oliver, Reichman Jorgensen Lehman & Feldberg, Xerox, and Young Basile."

That is quite a client roster in such a short time. This round, combined with April’s seed round, brings the company’s funding total to $21 million. The firm will put the funds to use hiring new engineers and expanding its products. Based in New York, Patlytics was launched in January 2024.

Will AI increase patent litigation? Do Tesla Cybertrucks attract attention?

Cynthia Murrell, March 7, 2025

Lawyers and High School Students Cut Corners

March 6, 2025

Cost-cutting lawyers beware: using AI in your practice may make it tough to buy a new BMW this quarter. TechSpot reports, "Lawyer Faces $15,000 Fine for Using Fake AI-Generated Cases in Court Filing." Writer Rob Thubron tells us:

"When representing HooserVac LLC in a lawsuit over its retirement fund in October 2024, Indiana attorney Rafael Ramirez included case citations in three separate briefs. The court could not locate these cases as they had been fabricated by ChatGPT."

Yes, ChatGPT completely invented precedents to support Ramirez’ case. Unsurprisingly, the court took issue with this:

"In December, US Magistrate Judge for the Southern District of Indiana Mark J. Dinsmore ordered Ramirez to appear in court and show cause as to why he shouldn’t be sanctioned for the errors. ‘Transposing numbers in a citation, getting the date wrong, or misspelling a party’s name is an error,’ the judge wrote. ‘Citing to a case that simply does not exist is something else altogether. Mr Ramirez offers no hint of an explanation for how a case citation made up out of whole cloth ended up in his brief. The most obvious explanation is that Mr Ramirez used an AI-generative tool to aid in drafting his brief and failed to check the citations therein before filing it.’ Ramirez admitted that he used generative AI, but insisted he did not realize the cases weren’t real as he was unaware that AI could generate fictitious cases and citations."

Unaware? Perhaps he had not heard about the similar case in 2023. Then again, maybe he had. Ramirez told the court he had tried to verify the cases were real, by asking ChatGPT itself (which replied in the affirmative). But that query falls woefully short of the due diligence required by Federal Rule of Civil Procedure 11, Thubron notes. As the judge who ultimately did sanction the firm observed, Ramirez would have noticed the cases were fiction had his attempt to verify them ventured beyond the ChatGPT UI.

For his negligence, Ramirez may face disciplinary action beyond the $15,000 in fines. We are told he continues to use AI tools, but has taken courses on its responsible use in the practice of law. Perhaps he should have done that before building a case on a chatbot’s hallucinations.

Cynthia Murrell, March 6, 2025

Smart Software and Law Firms: Realities Collide

February 19, 2025

This blog post is the work of a real-live dinobaby. No smart software involved.

TechCrunch published “Legal Tech Startup Luminance, Backed by the Late Mike Lynch, Raises $75 Million.” Good news for Luminance. Now the company just needs to ring the bell for those putting up the money. The write up says:

Claiming to be capable of highly accurate interrogation of legal issues and contracts, Luminance has raised $75 million in a Series C funding round led by Point72 Private Investments. The round is notable because it’s one of the largest capital raises by a pure-play legal AI company in the U.K. and Europe. The company says it has raised over $115 million in the last 12 months, and $165 million in total. Luminance was originally developed by Cambridge-based academics Adam Guthrie (founder and chief technical architect) and Dr. Graham Sills (founder and director of AI).

Why is Luminance different? The method is similar to that used by DeepSeek. With concerns about the cost of AI, a method which might be less expensive to get up and keep running seems like a good bet.

However, Eudia has raised $105 million with backing from people familiar with Relativity’s legal business. Law dot com suggests that Eudia will streamline legal business processes.

The article “Massive Law Firm Gets Caught Hallucinating Cases” offers an interesting anecdote about a large law firm’s facing sanctions. What did the big boys and girls at the law firm do? Those hard working Type A professionals cited nine cases to support an argument. There is just one trivial issue perplexing the senior partners. Eight of those cases were “nonexistent.” That means made up, invented, and spit out by a nifty black box of probabilities and their methods.

I am no lawyer. I did work as an expert witness and picked up some insight about the thought processes of big time lawyers. My observations may not apply to the esteemed organizations to which I linked in this short essay, but I will assume that I am close enough for horseshoes.

- Partners want big pay and juicy bonuses. If AI can help reduce costs and add protein powder to the compensation package, AI is definitely a go-to technology to use.

- Lawyers who are very busy all of the billable time and then some want to be more efficient. The hyperbole swirling around AI makes it clear that using an AI is a productivity booster. Do lawyers have time to check what the AI system did? Nope. Therefore, hallucination is going to be part of the transformer-based methodologies until something better becomes feasible. (Did someone say, “Quantum computers”?)

- The marketers (both directly compensated and the social media remoras) identify a positive. Then that upside is gilded like Tzar Nicholas’ powder room and repeated until it sure seems true.

The reality for the investors is that AI could be a winner. Go for it. The reality for the lawyers is that time to figure out what’s in bounds and what’s out of bounds is unlikely to be available. Other professionals will discover what the cancer docs did when using the late, great IBM Watson. AI can do some things reasonably well. Other things can have severe consequences.

Stephen E Arnold, February 19, 2025

Google, the Modern Samurai, Becomes a Ronin. Banzai!

January 2, 2025

Written by a dinobaby, not an over-achieving, unexplainable AI system.

I read “Google to Fight Japan’s Claims That It Harms Rivals in Search.” This paywalled Bloomberg story explains that Google is going to fight Japan’s allegations about hampering its competitors. Would Google do that?

A brave online advertising samurai reduces arguments to tiny flakes of paper. Arguments don’t stand a chance when a modern samurai fights injustice. Thanks, ChatGPT. Good enough.

The write up reports:

Alphabet Inc. is preparing to counter Japanese government allegations that it engages in anticompetitive practices such as forcing smartphone makers to give priority to Google Search in default screen placement.

Google’s position is a blend of smarm and lawyer lingo. As reported by Bloomberg:

“We have continued to work closely with the Japanese government to demonstrate how we are supporting the Android ecosystem and expanding user choice in Japan,” Google said in a statement without providing details of the allegations. “We will present our arguments in the hearing process,” it said, adding it was “disappointed” and the FTC didn’t give enough consideration of the company’s proposed solution. The company didn’t elaborate.

With Google explaining how the US government should respond to the shocking decision that Google was a monopoly, the company seems to bounce from one legal matter to the next.

What’s interesting is that Bloomberg characterized Google’s approach as a “fight.” I don’t know too much about Japanese culture. I have watched an Akira Kurosawa film and I recall John Belushi’s interpretation of a modern samurai warrior. Google definitely can send throngs of legal warriors into court. For PR purposes, I think adopting Mr. Kurosawa’s use of color for different groups of brave fighters would contribute some high impact imagery to YouTube videos.

However, with some EU losses and the twist of Googzilla’s tail by the US legal system, the innocent-until-proven-guilty company is likely to become a Saturday Night Live skit. Maybe Jo Koy will slip the Belushi-type of samurai into a set about how Google helps everyone, 24×7, and embodies the quaint motto “Do no evil.”

Stephen E Arnold, January 2, 2025

Does Apple Think Google Is Inept?

December 25, 2024

At a pre-holiday get together, I heard Wilson say, “Don’t ever think you’re completely useless. You can always be used as a bad example.”

I read the trust outfit’s write up “Apple Seeks to Defend Google’s Billion Dollar Payments in Search Case.” I found the story cutting two ways.

Apple, a big outfit, believes that it can explain in a compelling way why Google should be paying Apple to make Google search the default search engine on Apple devices. Do you remember the Walt Disney film The Hunchback of Notre Dame? I love an argument with a twisted back story. Apple seems to be saying to Google: “Stupidity is far more dangerous than evil. Evil takes a break from time to time. Stupidity does not.”

The Thomson Reuters article offers:

Apple has asked to participate in Google’s upcoming U.S. antitrust trial over online search, saying it cannot rely on Google to defend revenue-sharing agreements that send the iPhone maker billions of dollars each year for making Google the default search engine on its Safari browser.

Apple wants that $20 billion a year and certainly seems to be sending a signal that Google will screw up the deal with a Googley argument. At the same holiday party, Wilson’s significant other observed, “My people skills are just fine. It’s my tolerance to idiots that needs work.” I wonder if that person was talking about Apple?

Apple may be fearful that Google will lurch into Code Yellow, tell the jury that gluing cheese on pizza is logical, and explain that it is not a monopoly. Apple does not want to be in the court cafeteria and hear, “I heard Google ask the waiter, ‘How do you prepare chicken?’ The waiter replied, ‘Nothing special. The cook just says, “You are going to die.”’”

The Thomson Reuters article offers this:

Apple wants to call witnesses to testify at an April trial. Prosecutors will seek to show Google must take several measures, including selling its Chrome web browser and potentially its Android operating system, to restore competition in online search. “Google can no longer adequately represent Apple’s interests: Google must now defend against a broad effort to break up its business units,” Apple said.

I had a professor from Oklahoma who told our class:

“If Stupidity got us into this mess, then why can’t it get us out?”

Apple and Google arguing in court. Google has a lousy track record in court. Apple is confident it can convince a court that taking Google’s money is okay.

Albert Einstein allegedly observed:

The difference between stupidity and genius is that genius has its limits.

Yep, Apple and Google, quite a pair.

Stephen E Arnold, December 25, 2024

FOGINT: Intelware Tension Ticks Up

December 24, 2024

Observations from the FOGINT research team.

On Friday, December 20, 2024, NSO Group, the Pegasus specialized software outfit, found itself losing a court squabble with Facebook (Meta and WhatsApp). According to the Reuters news story pushed out at 9:15 pm Eastern time, “US Judge Finds Israel’s NSO Group Liable for Hacking in WhatsApp Lawsuit.” In case you don’t have the judgment at hand, you can find the United States District Court, Northern District of California document at this link.

The main idea behind the case is that the NSO Group’s specialized software was pressed into duty for the purpose of obtaining information about WhatsApp users. The mechanism was to exploit “a bug in the messaging app to install spy software allowing unauthorized surveillance.” NSO Group’s fancy legal two step did not work.

The NSO Group has become the poster child for the “compromise the mobile phone and obtain data” approach. The Pegasus system exfiltrates data and, when properly configured, can capture information from a mobile device. Furthermore, the company’s hassles about its customers’ use of the Pegasus tool unwittingly created a surge in software and specialized services performing identical or similar tasks.

The FOGINT team has identified firms which have found different ways of compromising mobile devices. NSO Group, therefore, has been an innovator, and its approach to compromising devices has [a] focused attention on Israel’s technical competence in this specialized software niche and [b] rightly or wrongly illustrated that the technology can act with extreme prejudice when used by some clients to solve what they perceive as “problems.”

There are several larger consequences which the FOGINT team has identified:

- Specialized software is more prevalent because the revelations about Pegasus have encouraged entrepreneurs and technologists to develop more effective surveillance methods.

- Unique delivery methods have been crafted. These range from in-app malware to more sophisticated multi-stage malware installed as a consequence of a user’s carelessness.

- Making clear that powerful surveillance tools can be installed in a way that does not require the user to click, open an email, or otherwise interact. The malware simply dials up a mobile and bingo! the device is compromised.

How will this judgment affect the specialized software industry? In FOGINT’s view, the decision will further stimulate competition and the flow of novel surveillance techniques. One consequence also may be that law enforcement and intelligence professionals will encounter headwinds when similar specialized software is required for certain investigations. FOGINT’s view is that NSO Group’s go-go approach to sales created a problem for the company and for specialized software. Some technologies should remain “secret,” which is now becoming an old-fashioned viewpoint. Marketing is not always a benefit.

Stephen E Arnold, December 24, 2024

The Starlink Angle: Yacht, Contraband, and Global Satellite Connectivity

December 16, 2024

This blog post is the work of an authentic dinobaby. No smart software was used.

I have followed the ups and downs of Starlink satellite Internet connectivity in the Russian special operation. I have not paid much attention to more routine criminal use of the Starlink technology. Before I direct your attention to a write up about this Elon Musk enterprise, I want to mention that this use case for satellites caught my attention with Equatorial Communications’ innovations in 1979. Kudos to that outfit!

“Police Demands Starlink to Reveal Buyer of Device Found in $4.2 Billion Drug Bust” has a snappy subtitle:

Smugglers were caught with 13,227 pounds of meth

Hmmm. That works out to 6,000 kilograms or 6.6 short tons of meth worth an estimated $4 billion on the open market. And it is the government of India chasing the case. (Starlink is not yet licensed for operation in that country.)
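For readers who like to check the math, here is a minimal Python sketch of the conversion. It assumes the standard definitions (1 pound = 0.45359237 kilograms exactly; 1 US short ton = 2,000 pounds). The function name `convert_pounds` is hypothetical and does not come from the write up.

```python
# Unit conversion check for the seizure figure in the subtitle.
# Assumed standard definitions: 1 lb = 0.45359237 kg (exact),
# 1 US short ton = 2,000 lb.
LB_TO_KG = 0.45359237
LB_PER_SHORT_TON = 2000


def convert_pounds(pounds: float) -> tuple[float, float]:
    """Hypothetical helper: return (kilograms, short tons) for a weight in pounds."""
    return pounds * LB_TO_KG, pounds / LB_PER_SHORT_TON


kg, short_tons = convert_pounds(13_227)
print(f"{kg:,.0f} kg, {short_tons:.1f} short tons")
```

Run as written, it prints `6,000 kg, 6.6 short tons`, which matches the rounded figures above.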

The write up states:

officials have sent Starlink a police notice asking for details about the purchaser of one of its Starlink Mini internet devices that was found on the boat. It asks for the buyer’s name and payment method, registration details, and where the device was used during the smugglers’ time in international waters. The notice also asks for the mobile number and email registered to the Starlink account.

The write up points out:

Starlink has spent years trying to secure licenses to operate in India. It appeared to have been successful last month when the country’s telecom minister said Starlink was in the process of procuring clearances. The company has not yet secured these licenses, so it might be more willing than usual to help the authorities in this instance.

Starlink is interesting because it is a commercial enterprise operating in a freewheeling manner. Starlink’s response is not known as of December 12, 2024.

Stephen E Arnold, December 16, 2024

Google and 2025: AI Scurrying and Lawsuits. Lots of Lawsuits

December 6, 2024

This is the work of a dinobaby. Smart software helps me with art, but the actual writing? Just me and my keyboard.

I think there are 193 nations which are members of the UN. Two other entities, which one can count but are what one might call specialty equipment organizations, hold observer status: The Holy See aka Vatican City and the State of Palestine. The 193 members are “recognized,” mostly pay their UN dues, and have legal systems of varying quality and diligence.

I read “Google Earns Fresh Competition Scrutiny from Two Nations on a Single Day.” The write up said:

In India – the most populous nation on Earth – the Competition Commission ordered a probe after a developer called WinZo – which promotes itself with the chance to “Play Mobile Games & Win Cash” – complained that Google Play won’t host games that offer real money as prizes, only allowing sideloading onto Android devices.

Then it added:

Advertising is the reason for the other Google probe announced Thursday, by the Competition Bureau of Canada – the world’s second-largest country by area. The Bureau announced its investigations found Google’s ads biz “abused its dominant position through conduct intended to ensure that it would maintain and entrench its market power” and “engaged in conduct that reduces the competitiveness of rival ad tech tools and the likelihood of new entrants in the market.” The Bureau thinks the situation can be addressed if Google sells two of its ads tools – but the filing in which the identity of those two products will be revealed is yet to appear on the site of the Competition Tribunal.

Whether Google is good or evil is, in my opinion, irrelevant. With the US, the EU, Canada, and India chasing Google for its alleged misbehavior, other nations are going to pay attention.

Does that mean that another 100 or more nations will launch their own investigations and initiate legal action related to the lovable Google’s approach to business? In practical terms what does this mean?

- Google will be hiring lawyers and retaining firms. This is definitely good for legal eagles.

- Google will win some, delay some, and lose some cases. The losses, however, will result in consequences. Some of these will require Google to write checks for penalties. These can add up.

- Conflicting decisions are likely to result in delays. Those delays mean that Google will be more Googley. The number of ads in YouTube will increase. The mysterious revenue payments will become more quirky. Commissions on various user-customer-Google touch points will increase.

Net net: We have a good example of what a failure to regulate high technology companies for a couple of decades creates. Kicking the can down the road has done what exactly?

Stephen E Arnold, December 6, 2024

Googlers Face Another Ka-Ching Moment in the United Kingdom

December 5, 2024

This write up is from a real and still-alive dinobaby. If there is art, smart software has been involved. Dinobabies have many skills, but Gen Z art is not one of them.

Mr. Harold Carlin, my high school history teacher, made us learn about the phrase “The sun never sets on the British empire.” It has, and Mr. Carlin, like many old-school teachers, forced our class to read about protectionism, subjugation of people who did not enjoy beef Wellington, and assorted monopolies.

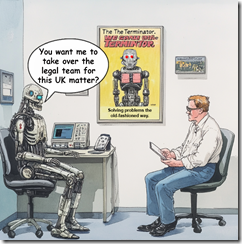

Two intelligent entities discuss how to resolve legal problems. Thanks, MidJourney. Good enough.

Now Google may want to think about the phrase, “The sun never sets on Google legal matters related to its alleged behavior in the datasphere.”

“Google Must Face £7B UK Class Action over Search Engine Dominance” reported:

The complaint centers around Google shutting out competition for mobile search, resulting in higher prices for advertisers, which were allegedly passed on to consumers. According to consumer rights campaigner Nikki Stopford, who is bringing the claim on behalf of UK consumers, Android device makers that wanted access to Google’s Play Store had to accept its search service. The ad slinger also paid Apple billions to have Google Search as the default for the Safari browser in iOS.

The write up noted:

According to Stopford [a consumer rights campaigner], Google used its position to up prices paid by advertisers, resulting in higher costs to consumers. “What we’re trying to achieve with this claim is essentially compensate consumers,” she said.

Google has moved some of its smart software activities to the UK. One would think that with Google’s cash resources, its attorneys, and its smart software — mere government officials would have zero chance of winning this now repetitive allegation that dear Google has behaved in an untoward way.

If I were a government litigator, I would just drop the suit, Jack Smith style.

Will the sun set on these allegations against the “do no evil” outfit?

Nope, not as long as the opportunity for a payout exists. Google may have been too successful in its decades-long rampage through traditional business practices. The good news is that Google has an almost limitless supply of money. The bad news is that countries have an almost limitless supply of regulators. But Google has smart software. Remember the film “The Terminator”? Winner: Google.

Stephen E Arnold, December 5, 2024