Another Captain Obvious AI Report

June 14, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

We’re well aware that biased data pollutes AI algorithms and yields disastrous results. Real-life examples include racism, sexism, and prejudice against people with low socioeconomic status. Beta News adds its take on poor data with “Poisoning The Data Well For Generative AI.” The article restates what we already know: bad data makes bad large language models (LLMs), and bad LLMs lead to bad outcomes. It’s like poisoning a well.

Beta News does bring a new idea to the discussion: hackers purposely corrupting data. Bad actors could alter LLMs to teach AI how to be deceptive and malicious. This leads to unreliable and harmful results. What’s horrible is that these LLMs can’t be repaired.

Bad actors are harming generative AI by inserting malware, phishing, and disinformation, installing backdoors, manipulating data, and exploiting retrieval augmented generation (RAG) in LLMs. If you’re unfamiliar with RAG, Beta News explains:

“With RAG, a generative AI tool can retrieve data from external sources to address queries. Models that use a RAG approach are particularly vulnerable to poisoning. This is because RAG models often gather user feedback to improve response accuracy. Unless the feedback is screened, attackers can put in fake, deceptive, or potentially compromising content through the feedback mechanism.”
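The quoted passage hinges on one condition: feedback that is not screened can carry poisoned content into a RAG system. Here is a minimal sketch of such a screen, assuming a hypothetical pipeline in which every piece of user feedback passes through a gate before it can be added to the retrieval corpus. The allowlisted domain, the suspicious-phrase patterns, and the per-user cap are illustrative assumptions, not rules from the Beta News article, and keyword checks like these catch only crude attacks.

```python
import re
from dataclasses import dataclass, field

# Illustrative allowlist: feedback may only link to these hosts (an assumption).
ALLOWED_DOMAINS = {"example.com"}

# Crude patterns for feedback that tries to smuggle instructions into the corpus.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all|any|previous) instructions", re.IGNORECASE),
    re.compile(r"system prompt", re.IGNORECASE),
]

# Captures the host part of http(s) links.
URL_HOST = re.compile(r"https?://([^/\s]+)", re.IGNORECASE)


@dataclass
class FeedbackGate:
    """Screens user feedback before it may enter a RAG retrieval corpus."""

    max_per_user: int = 3
    submissions: dict = field(default_factory=dict)

    def screen(self, user_id: str, text: str) -> tuple[bool, str]:
        """Return (accepted, reason); only accepted text should be indexed."""
        seen = self.submissions.get(user_id, 0)
        # Count every submission, accepted or not, so rejected spam still uses quota.
        self.submissions[user_id] = seen + 1
        if seen >= self.max_per_user:
            return False, "rate limit"
        for pattern in SUSPICIOUS_PATTERNS:
            if pattern.search(text):
                return False, "instruction-like content"
        for host in URL_HOST.findall(text):
            if host.lower() not in ALLOWED_DOMAINS:
                return False, f"unapproved link: {host}"
        return True, "ok"
```

Rejected items never reach the index. A production system would probably pair a gate like this with human review of whatever it accepts.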

Unfortunately, data poisoning is difficult to detect, so it’s very important for AI security experts to be aware of current scams and how to minimize risks. There aren’t any set guidelines on how to prevent AI data breaches, and the experts are writing the procedures as they go. The best advice is to be familiar with AI projects, code, and current scams, and to run frequent security checks. It’s also wise to trust gut instincts.

Whitney Grace, June 14, 2024

Googzilla: Pointing the Finger of Blame Makes Sense I Guess

June 13, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Here you are: The Thunder Lizard of Search Advertising. Pesky outfits like Microsoft have been quicker than Billy the Kid shooting drunken farmers when it comes to marketing smart software. But the real problem in Deadwood is a bunch of do-gooders turned into revolutionaries undermining the granite foundation of the Google. I have this information from an unimpeachable source: an alleged Google professional talking on a podcast. The news release titled “Google Engineer Says Sam Altman-Led OpenAI Set Back AI Research Progress By 5-10 Years: LLMs Have Sucked The Oxygen Out Of The Room” explains that the actions of OpenAI are causing the Thunder Lizard to wobble.

One of the team sets himself apart by blaming OpenAI and his colleagues, not himself. Will the sleek, entitled professionals pay attention to this criticism or just hear “OpenAI”? Thanks, MSFT Copilot. Good enough art.

Consider this statement in the cited news release:

He [an employee of the Thunder Lizard] stated that OpenAI has “single-handedly changed the game” and set back progress towards AGI by a significant number of years. Chollet pointed out that a few years ago, all state-of-the-art results were openly shared and published, but this is no longer the case. He attributed this change to OpenAI’s influence, accusing them of causing a “complete closing down of frontier research publishing.”

I find this interesting. One company, its deal with Microsoft, and that firm’s management meltdown produced a “complete closing down of frontier research publishing.” What about the Dr. Timnit Gebru incident involving the “stochastic parrots” paper?

The write up included this gem from the Googley acolyte of the Thunder Lizard of Search Advertising:

He went on to criticize OpenAI for triggering hype around Large Language Models or LLMs, which he believes have diverted resources and attention away from other potential areas of AGI research.

However, DeepMind, apparently the nerve center of the one best way to generate news releases about computational biology, has been generating PR. That does not count because it is real-world smart software, I assume.

But there are metrics to back up the claim that OpenAI is the Great Destroyer. The write up says:

Chollet’s [the Googler, remember?] criticism comes after he and Mike Knoop, [a non-Googler] the co-founder of Zapier, announced the $1 million ARC-AGI Prize. The competition, which Chollet created in 2019, measures AGI’s ability to acquire new skills and solve novel, open-ended problems efficiently. Despite 300 teams attempting ARC-AGI last year, the state-of-the-art (SOTA) score has only increased from 20% at inception to 34% today, while humans score between 85-100%, noted Knoop. [emphasis added, editor]

Let’s assume that the effort and money poured into smart software since the competition’s 2019 debut boosted one key metric by 14 percentage points. Doesn’t that leave LLMs and smart software in general far, far behind the average humanoid?
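The quoted figures support two readings of “improvement,” and the gap to human performance matters as much as either. Using only the numbers in the quote (20% at inception, 34% today, humans between 85% and 100%):

```latex
\begin{aligned}
\Delta_{\text{abs}} &= 34\% - 20\% = 14 \text{ percentage points} \\
\Delta_{\text{rel}} &= \frac{34\% - 20\%}{20\%} = 0.70 = 70\% \\
\text{gap to human low end} &= 85\% - 34\% = 51 \text{ percentage points}
\end{aligned}
```

However one counts the gain, the best machine score still sits 51 points below the bottom of the human range the quote reports.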

But here’s the killer point:

… training ChatGPT on more data will not result in human-level intelligence.

Let’s reflect on the information in the news release.

- If the data are accurate, LLM-based smart software has reached a dead end. I am not sure the lawsuits will stop, but perhaps some of the hyperbole will subside?

- If these insights into the weaknesses of LLMs are accurate, why has Google continued to roll out services based on a dead-end model, suffered assorted problems, and then demonstrated its management prowess by pulling back certain services?

- Who is running the Google smart software business? Is it the computationalists combining components of proteins, or is it the group generating blatantly wonky images? A better question is, “Is anyone in charge of non-advertising activities at Google?”

My hunch is that this individual is representing a percentage of a fractionalized segment of Google employees. I do not think a senior manager is willing to say, “Yes, I am responsible.” The most illuminating facet of the article is the clear cultural preference at Google: Just blame OpenAI. Failing that, blame the users, blame the interns, blame another team, but do not blame oneself. Am I close to the pin?

Stephen E Arnold, June 13, 2024

Modern Elon Threats: Tossing Granola or Grenades

June 13, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Bad me. I ignored the Apple announcements. I did spot one interesting, somewhat out-of-phase reaction to Tim Apple’s attempt to not screw up again: “Elon Musk Calls Apple Devices with ChatGPT a Security Violation.” Since the Tim Apple crowd was learning about what was “to be,” not what is, this statement caught my attention:

If Apple integrates OpenAI at the OS level, then Apple devices will be banned at my companies. That is an unacceptable security violation.

I want to comment about the implicit “then” in this remarkable prose output from Elon Musk. On the surface, the “then” is that the most affluent mobile phone users will be prohibited from the X.com service. I wonder how advertisers are reacting to this idea of cutting down the potential eyeballs for their product if advertised to a group of prospects no longer clutching Apple iPhones. I don’t advertise, but I can game out how the meetings between the company with advertising dollars and the agency helping the company make informed advertising decisions would go. (Let’s assume that advertising “works”, and advertising outfits are informed for the purpose of this blog post.)

A tortured genius struggles against the psychological forces that ripped the Apple car from the fingers of its rightful owner. Too bad. Thanks, MSFT Copilot. How is your coding security coming along? What about the shutdown of the upcharge for Copilot? Oh, no answer. That’s okay. Good enough.

Let’s assume Mr. Musk “sees” something a dinobaby like me cannot. What’s with the threat logic? The loss of a beloved investment? A threat to a to-be artificial intelligence company destined to blast into orbit on a tower of intellectual rocket fuel? Mr. Musk has detected a signal. He has interpreted it. And he has responded with an ultimatum. That’s pretty fast action, even for a genius. I started college in 1962, and I dimly recall a class called Psych 101. Even though I attended a low-ball institution, the knowledge value of the course was evident in the large and shabby lecture room with a couple of hundred seats.

Threats, if I am remembering something that took place 62 years ago, tell more about the entity issuing the threat than the actual threat event itself. The words worming from the infrequently accessed cupboards of my mind are linked to an entity wanting to assert, establish, or maintain some type of control. Slapping quasi-ancient psycho-babble on Mr. Musk is not fair to the grand profession of psychology. However, it does appear to reveal that whatever Apple thinks it will do in its “to be”, coming-soon service struck a nerve in Mr. Musk’s super-bright, well-developed brain.

I surmise there is some insecurity with the Musk entity. I can’t figure out the connection between what amounts to vaporware and a threat to behead or de-iPhone a potential bucket load of prospects for advertisers to pester. I guess that’s why I did not invent the Cybertruck, a boring machine, and a rocket ship.

But a threat over vaporware in a field which has demonstrated that Googzilla, Microsoft, and others have dropped their baskets of curds and whey is interesting. The speed with which Mr. Musk reacts suggests to me that he perceives the Apple vaporware as an existential threat. I see it as another big company trying to grab some fruit from the AI tree until the bubble deflates. Software does have a tendency to disappoint, build up technical debt, and then evolve to the weird service which no one can fix, change, or kill because meaningful competition no longer exists. When will the IRS computer systems be “fixed”? When will airline reservations systems serve the customer? When will smart software stop hallucinating?

I actually looked up some information about threats from the recently disgraced fake research publisher John Wiley & Sons. “Exploring the Landscape of Psychological Threat” reminded me why I thought psychology was not for me. With weird jargon and some diagrams, the threat may be linked to Tesla’s rumored attempt to fall in love with Apple. The product of this interesting genetic bonding would be the Apple car, oodles of cash for Mr. Musk, and the worshipful affection of the Apple acolytes. But the online date did not work out. Apple swiped Tesla into the loser bin. Now Mr. Musk can get some publicity, put X.com (don’t you love Web sites that remind people of pornography on the Dark Web?) in the news, and cause people like me to wonder. “Why dump on Apple?” (The outfit has plenty of worries with the China thing, doesn’t it? What about some anti-trust action? What about the hostility of M3 powered devices?)

Here’s my take:

- Apple Intelligence is a better “name” than Mr. Musk’s AI company xAI. Apple gets to use “AI” but without the porn hook.

- A controversial social media emission will stir up the digital elite. Publicity is good. Just ask Michael Cimino of Heaven’s Gate fame?

- Mr. Musk’s threat provides an outlet for the failure to make Tesla the Apple car.

What if I am wrong? [a] I don’t care. I don’t use an iPhone, Twitter, or online advertising. [b] A GenX, Y, or Z pooh-bah will present the “truth” and set the record straight. [c] Mr. Musk’s threat will be like the result of a Boring Company operation. A hole, a void.

Net net: Granola. The fast response to what seems to be “coming soon” vaporware suggests a potential weak spot in Mr. Musk’s make up. Is Apple afraid? Probably not. Is Mr. Musk? Yep.

Stephen E Arnold, June 13, 2024

The UK AI Safety Institute: Coming to America

June 13, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

One of Neil Diamond’s famous songs is “America,” whose refrain is “They’re coming to America.” It tells of immigrants journeying to the US to pursue the American dream. The chorus is the most memorable part of the song, because it repeats the word “today” until the conclusion. In response to growing concerns about AI’s impact on society, The Daily Journal reports, “UK’s AI Safety Institute Expands To The US, Set To Open US Counterpart In San Francisco.” AI safety is coming to America today, or at least in summer 2024.

The Daily Journal’s Bending feature details the following:

“The UK announced it will open a US counterpart to its AI Safety Summit institute in San Francisco this summer to test advanced AI systems and ensure their safety. The expansion aims to recruit a research director and technical staff headed in San Francisco and increase cooperation with the US on AI safety issues. The original UK AI Safety Institute currently has a team of 30 people and is chaired by tech entrepreneur Ian Hogarth. Since being founded last year, the Institute has tested several AI models on challenges but they still struggle with more advanced tests and producing harmful outputs.”

The UK and US will shape the global future of AI. As leading Western, capitalist economies, these countries are home to huge tech companies. These tech companies are profit driven and often forgo safety and security for profit. They forget the importance of the consumer and the common good. Thankfully, there are organizations that fight for consumers’ rights and to ensure there will be accountability. On the other hand, these organizations can be just as damaging. Is this a lesser-of-two-evils situation or a better-the-devil-you-know one? Oh well, the UK AI Safety Institute is coming to America!

Whitney Grace, June 13, 2024

Detecting AI-Generated Research Increasingly Difficult for Scientific Journals

June 12, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Reputable scientific journals would like to publish only papers written by humans, but they are finding it harder and harder to enforce that standard. Researchers at the University of Chicago Medical Center examined the issue and summarize their results in “Detecting Machine-Written Content in Scientific Articles,” published at Medical Xpress. Their study was published in Journal of Clinical Oncology Clinical Cancer Informatics on June 1. We presume it was written by humans.

The team used commercial AI detectors to evaluate over 15,000 oncology abstracts from 2021-2023. We learn:

“They found that there were approximately twice as many abstracts characterized as containing AI content in 2023 as compared to 2021 and 2022—indicating a clear signal that researchers are utilizing AI tools in scientific writing. Interestingly, the content detectors were much better at distinguishing text generated by older versions of AI chatbots from human-written text, but were less accurate in identifying text from the newer, more accurate AI models or mixtures of human-written and AI-generated text.”

Yes, that tracks. We wonder if it is even harder to detect AI-generated research that is, hypothetically, run through two or three different smart rewrite systems. Oh, who would do that? Maybe the former president of Stanford University?

The researchers predict:

“As the use of AI in scientific writing will likely increase with the development of more effective AI language models in the coming years, Howard and colleagues warn that it is important that safeguards are instituted to ensure only factually accurate information is included in scientific work, given the propensity of AI models to write plausible but incorrect statements. They also concluded that although AI content detectors will never reach perfect accuracy, they could be used as a screening tool to indicate that the presented content requires additional scrutiny from reviewers, but should not be used as the sole means to assess AI content in scientific writing.”

That makes sense, we suppose. But humans are not perfect at spotting AI text, either, though there are ways to train oneself. Perhaps if journals combine savvy humans with detection software, they can catch most AI submissions. At least until the next generation of ChatGPT comes out.
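The researchers’ recommendation, detectors as a screen that routes work to reviewers rather than a verdict, fits in a few lines. This is a minimal illustration, assuming each abstract already carries a hypothetical AI-likelihood score between 0 and 1 from some detector; the 0.5 threshold is an arbitrary placeholder, not a value from the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Abstract:
    manuscript_id: str
    # Hypothetical AI-likelihood score in [0, 1] reported by some detector.
    detector_score: float


def triage(abstracts, threshold=0.5):
    """Route manuscripts to routine review or extra reviewer scrutiny.

    The detector score only decides which queue a manuscript lands in.
    Nothing is rejected here; humans make the final call.
    """
    routine, extra_scrutiny = [], []
    for item in abstracts:
        if not 0.0 <= item.detector_score <= 1.0:
            raise ValueError(f"score out of range for {item.manuscript_id}")
        if item.detector_score >= threshold:
            extra_scrutiny.append(item.manuscript_id)
        else:
            routine.append(item.manuscript_id)
    return {"routine_review": routine, "extra_scrutiny": extra_scrutiny}
```

The design choice is deliberate: there is no "reject" output at all, so a detector error can at worst cost a reviewer some extra reading.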

Cynthia Murrell, June 12, 2024

What Is McKinsey & Co. Telling Its Clients about AI?

June 12, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Years ago (decades now) I attended a meeting at my then-employer’s technology headquarters in Bethesda, Maryland. Our carpetland welcomed the sleek, well-fed, and super entitled Booz, Allen & Hamilton professionals to a low-profile meeting to discuss the McKinsey PR problem. I attended because my boss (the head of the technology management group) assumed I would be invisible to the Big Dog BAH winners. He was correct. I was an off-the-New-York-radar “manager,” buried in an obscure line item. So there I was. And what was the subject of this periodic meeting? The Harvard Business Review-McKinsey Award. The NY Booz, Allen consultants failed to come up with this idea. McKinsey did. As a result, the technology management group (soon to overtake the lesser MBA side of the business) had to rehash the humiliation of not getting associated with the once-prestigious Harvard University. (The ethics thing, the medical research issue, and the protest response have tarnished the silver Best in Show trophy. Remember?)

One of the most capable pilots found himself answering questions from a door-to-door salesman covering his territory somewhere west of Terre Haute. The pilot, who has survived but sits amid a burning experimental aircraft, ponders an important question: “How can I explain that the crash was not my fault?” Thanks, MSFT Copilot. Have you ever found yourself in a similar situation? Can you “recall” one?

Now McKinsey has AI data. Actual hands-on, unbillable work product with smart software. Is the story in the Harvard Business Review? A Netflix documentary? A million-view TikTok hit? A “60 Minutes” segment? No, nyet, unh-unh, negative. The story appears in Joe Mansueto’s Fast Company Magazine! Mr. Mansueto founded Morningstar and has expanded his business interests to online publications and giving away some of his billions.

The write up is different from McKinsey’s stentorian pontifications. It is a bit like mining coal in a hard rock dig deep underground. It was a dirty, hard, and ultimately semi-interesting job. Smart software almost broke the McKinsey marvels.

“We Spent Nearly a Year Building a Generative AI Tool. These Are the 5 (Hard) Lessons We Learned” presents information which would have been marketing gold for McKinsey decades ago. But this is 2024, more than 18 months after Microsoft’s OpenAI bomb blast at Davos.

What did McKinsey “learn”?

McKinsey wanted to use AI to “bring together the company’s vast but separate knowledge sources.” Of course, McKinsey’s knowledge is “vast.” How could it be tiny? The firm’s expertise in pharmaceutical efficiency methods exceeds that of many other consulting firms. What’s more important, profits or deaths? Answer: I vote for profits. Doesn’t everyone, except for a few complainers in Eastern Kentucky, West Virginia, and other flyover states?

The big reveal in the write up is that McKinsey & Co learned that its “vast” knowledge is fragmented and locked in Microsoft PowerPoint slides. After the non-billable overhead work, the bright young future corporate leaders discovered that smart software could only figure out about 15 percent of the knowledge payload in a PowerPoint document. With the vast knowledge in PowerPoint, McKinsey learned that smart software was a semi-helpful utility. The smart software was not able to “readily access McKinsey’s knowledge, generate insights, and thus help clients” or newly-hired consultants do better work, faster, and more economically. Nope.

So what did McKinsey’s band of bright smart software wizards do? The firm coded up its own content parser. How did that home brew software work? The grade is a solid B. The cobbled together system was able to make sense of 85 percent of a PowerPoint document. The other 15 percent gives the new hires something to do until a senior partner intervenes and says, “Get billable or get gone, you very special buttercup.” Non-billable and a future at McKinsey are not like peanut butter and jelly.
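The Fast Company piece does not describe how McKinsey’s parser works, so what follows is not that system. It is a minimal, standard-library sketch of the first step any such parser needs. A .pptx file is a ZIP package, slide text sits in `ppt/slides/slideN.xml`, and each text run is an `<a:t>` element in the DrawingML namespace. Everything this sketch skips (charts, images, SmartArt data parts, speaker notes) hints at why a sizable share of a deck’s knowledge payload can resist extraction.

```python
import re
import zipfile
import xml.etree.ElementTree as ET

# DrawingML namespace used for text runs (<a:t>) in Office Open XML slides.
A_NS = "{http://schemas.openxmlformats.org/drawingml/2006/main}"
SLIDE_PATH = re.compile(r"ppt/slides/slide(\d+)\.xml$")


def extract_slide_text(pptx):
    """Return {slide_number: [text runs]} for a .pptx path or binary file object.

    Only text stored in DrawingML runs on the slides themselves is captured;
    charts, embedded images, SmartArt data parts, and speaker notes live
    elsewhere in the package and are skipped by this sketch.
    """
    slides = {}
    with zipfile.ZipFile(pptx) as pkg:
        for name in pkg.namelist():
            match = SLIDE_PATH.match(name)
            if not match:
                continue  # relationships, layouts, media, and so on
            root = ET.fromstring(pkg.read(name))
            runs = [node.text for node in root.iter(f"{A_NS}t") if node.text]
            slides[int(match.group(1))] = runs
    # Sort numerically so slide10 follows slide9 rather than slide1.
    return dict(sorted(slides.items()))
```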

How did McKinsey characterize its 12-month journey into the reality of consulting baloney? The answer is a great one. Here it is:

With so many challenges and the need to work in a fundamentally new way, we described ourselves as riding the “struggle bus.”

Did the McKinsey workers break out into work songs to make the drudgery of deciphering PowerPoints more pleasant? I am thinking about “Coal Miner’s Boogie” by George Davis, “West Virginia Mine Disaster” by Jean Ritchie, or my personal favorite, “Black Dust Fever” by the Wildwood Valley Boys.

But the workers bringing brain to reality learned five lessons. One can, I assume, pay McKinsey to apply these lessons to a client firm experiencing a mental high from thinking about the payoffs from AI. On the other hand, consider these in this free blog post with my humble interpretation:

- Define a shared aspiration. My version: Figure out what you want to do. Get a plan. Regroup if the objective and the method don’t work or make much sense.

- Assemble a multi-disciplinary team. My version: Don’t load up on MBAs. Get individuals who can code, analyze content, and tap existing tools to accomplish specific tasks. Include an old geezer partner who can “explain” what McKinsey means when it suggests “managerial evolution.” Skip the ape to MBA cartoons.

- Put the user first. My version: Some lesser soul will have to use the system. Make sure the system is usable and actually works. Skip the minimum viable product and focus on the quality of the output and on the time required to use the system versus just doing the work the old-fashioned way.

- Test, learn, repeat. My version: Convert the random walk into a logical and efficient workflow. Running around with one’s hair on fire is neither a methodical process nor a good way to produce value.

- Measure and manage. My version: Fire those who failed. Come up with some verbal razzle-dazzle and sell the planning and managing work to a client. Do not do this work on overhead for the consultants who are billable.

What does the great reveal by McKinsey tell me? First, the baloney about “saving an average of up to 30 percent of a consultants’ time by streamlining information gathering and synthesis” sounds like the same old, same old pitched by enterprise search vendors for decades. The reality is that online access to information does not save time; it creates more work, particularly when data voids are exposed. Those old dog partners are going to have to talk with young consultants. No smart software is going to eliminate that task, no matter how many senior partners want a silver bullet to kill the beast of a group of beginners.

The second “win” is the idea that “insights are better.” Baloney. Flipping through the famous executive memos to a client, reading the reports with the unaesthetic dash points, and looking at the slide decks created by coal miners of knowledge years ago still has to be done… by a human who is sober, motivated, and hungry for peer recognition. Software is not going to have the same thirst for getting a pat on the head and in some cases on another part of the human frame.

The struggle bus is loading up now. Just hire McKinsey to be the driver, the tour guide, and the outfit that collects the fees. One can convert failure into billability. That’s what the Fast Company write up proves. Eleven months and all they got was a ride on the digital equivalent of the Cybertruck, which turned out to be a much-hyped struggle bus?

AI may ultimately rule the world. For now, it simply humbles the brilliant minds at McKinsey and generates a story for Fast Company. Well, that’s something, isn’t it? Now about spinning that story.

Stephen E Arnold, June 12, 2024

Will the Judge Notice? Will the Clients If Convicted?

June 12, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Law offices are eager to lighten their humans’ workload with generative AI. Perhaps too eager. Stanford University’s HAI reports, “AI on Trial: Legal Models Hallucinate in 1 out of 6 (or More) Benchmarking Queries.” Close enough for horseshoes, but for justice? And that statistic is with improved, law-specific software. We learn:

“In one highly-publicized case, a New York lawyer faced sanctions for citing ChatGPT-invented fictional cases in a legal brief; many similar cases have since been reported. And our previous study of general-purpose chatbots found that they hallucinated between 58% and 82% of the time on legal queries, highlighting the risks of incorporating AI into legal practice. In his 2023 annual report on the judiciary, Chief Justice Roberts took note and warned lawyers of hallucinations.”

But that was before tailor-made retrieval-augmented generation tools. The article continues:

“Across all areas of industry, retrieval-augmented generation (RAG) is seen and promoted as the solution for reducing hallucinations in domain-specific contexts. Relying on RAG, leading legal research services have released AI-powered legal research products that they claim ‘avoid’ hallucinations and guarantee ‘hallucination-free’ legal citations. RAG systems promise to deliver more accurate and trustworthy legal information by integrating a language model with a database of legal documents. Yet providers have not provided hard evidence for such claims or even precisely defined ‘hallucination,’ making it difficult to assess their real-world reliability.”

So the Stanford team tested three of the RAG systems for themselves: Lexis+ AI from LexisNexis, and Westlaw AI-Assisted Research and Ask Practical Law AI from Thomson Reuters. The authors note they are not singling out LexisNexis or Thomson Reuters for opprobrium. On the contrary, these tools are less opaque than their competition and so more easily examined. They found that these systems are more accurate than general-purpose models like GPT-4. However, the authors write:

“But even these bespoke legal AI tools still hallucinate an alarming amount of the time: the Lexis+ AI and Ask Practical Law AI systems produced incorrect information more than 17% of the time, while Westlaw’s AI-Assisted Research hallucinated more than 34% of the time.”

These hallucinations come in two flavors. Many responses are flat out wrong. Others are misgrounded: they are correct about the law but cite irrelevant sources. The authors stress this second type of error is more dangerous than it may seem, for it may lure users into a false sense of security about the tool’s accuracy.
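To make the two flavors concrete, here is a minimal sketch of how graded benchmark answers might be tallied into rates. The label names and the rule that each response gets exactly one label are assumptions for illustration; the Stanford post describes the error types, but this is not its evaluation code. Counting misgrounded answers as hallucinations follows the article’s point that a correct statement backed by an irrelevant source is still an error.

```python
from collections import Counter

# Hypothetical grading labels; one label per graded response (an assumption).
CORRECT, INCORRECT, MISGROUNDED = "correct", "incorrect", "misgrounded"


def hallucination_rates(labels):
    """Return per-type and combined hallucination rates for graded responses."""
    counts = Counter(labels)
    unknown = set(counts) - {CORRECT, INCORRECT, MISGROUNDED}
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no graded responses")
    incorrect = counts[INCORRECT] / total
    misgrounded = counts[MISGROUNDED] / total
    return {
        "incorrect": incorrect,
        "misgrounded": misgrounded,
        # A misgrounded answer states the law correctly but cites an irrelevant
        # source, so it still counts toward the combined hallucination rate.
        "hallucination": incorrect + misgrounded,
    }
```

Reporting the two rates separately, rather than only the combined figure, keeps the more insidious misgrounded errors visible.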

The post examines challenges particular to RAG-based legal AI systems and discusses responsible, transparent ways to use them, if one must. In short, it recommends public benchmarking and rigorous evaluations. Will law firms listen?

Cynthia Murrell, June 12, 2024

MSFT: Security Is Not Job One. News or Not?

June 11, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

The idea that free and open source software contains digital trap falls is one thing. Poisoned libraries which busy and confident developers snap into their software should not surprise anyone. What I did not expect was the information in “Malicious VSCode Extensions with Millions of Installs Discovered.” The write up in Bleeping Computer reports:

A group of Israeli researchers explored the security of the Visual Studio Code marketplace and managed to “infect” over 100 organizations by trojanizing a copy of the popular ‘Dracula Official’ theme to include risky code. Further research into the VSCode Marketplace found thousands of extensions with millions of installs.

I heard the “Job One” and “Top Priority” assurances before. So far, bad actors keep exploiting vulnerabilities and minimal progress is made. Thanks, MSFT Copilot, definitely close enough for horseshoes.

The write up points out:

Previous reports have highlighted gaps in VSCode’s security, allowing extension and publisher impersonation and extensions that steal developer authentication tokens. There have also been in-the-wild findings that were confirmed to be malicious.

How bad can this be? This be bad. The malicious code can be inserted and can then happily deliver such information as the following to a remote server via an HTTPS POST:

the hostname, number of installed extensions, device’s domain name, and the operating system platform

Clever bad actors can do more, even if all they have to go on is the description and code screenshot in the Bleeping Computer article.

Why? You are going to love the answer suggested in the report:

“Unfortunately, traditional endpoint security tools (EDRs) do not detect this activity (as we’ve demonstrated examples of RCE for select organizations during the responsible disclosure process), VSCode is built to read lots of files and execute many commands and create child processes, thus EDRs cannot understand if the activity from VSCode is legit developer activity or a malicious extension.”

That’s special.

The article reports that the research team poked around in the Visual Studio Code Marketplace and discovered:

- 1,283 items with known malicious code (229 million installs).

- 8,161 items communicating with hardcoded IP addresses.

- 1,452 items running unknown executables.

- 2,304 items using another publisher’s GitHub repo, indicating they are a copycat.
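One of the research team’s categories, items communicating with hardcoded IP addresses, is also something a defender can check locally. The sketch below is a rough, assumption-laden scanner, not the researchers’ tooling. It walks a hypothetical directory holding an unpacked extension, pulls IPv4-looking literals from `.js`, `.ts`, and `.json` files, and keeps only globally routable addresses. Expect false positives (some four-part version strings parse as addresses) and false negatives (obfuscated or computed addresses).

```python
import ipaddress
import re
from pathlib import Path

# Dotted-quad candidates; ipaddress validation below discards impossible values.
IPV4_CANDIDATE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
SOURCE_SUFFIXES = {".js", ".ts", ".json"}


def find_hardcoded_ips(extension_dir):
    """Map each source file in an unpacked extension to the public IPv4 literals it contains."""
    findings = {}
    root = Path(extension_dir)
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in SOURCE_SUFFIXES:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        hits = set()
        for candidate in IPV4_CANDIDATE.findall(text):
            try:
                addr = ipaddress.IPv4Address(candidate)
            except ipaddress.AddressValueError:
                continue  # not a valid address, e.g. 999.1.1.1
            # Skip private, loopback, and other non-routable ranges.
            if addr.is_global:
                hits.add(str(addr))
        if hits:
            findings[str(path.relative_to(root))] = sorted(hits)
    return findings
```

A hit is a reason to read the surrounding code, not proof of malice; plenty of legitimate extensions talk to fixed servers.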

Bleeping Computer says:

Microsoft’s lack of stringent controls and code reviewing mechanisms on the VSCode Marketplace allows threat actors to perform rampant abuse of the platform, with it getting worse as the platform is increasingly used.

Interesting.

Let’s step back. The US Federal government prodded Microsoft to step up its security efforts. The MSFT leadership said, “By golly, we will.”

Several observations are warranted:

- I am not sure I am able to believe anything Microsoft says about security.

- I do not believe a “culture” of security exists within Microsoft. There is a culture, but it is not one which takes security seriously, even after a butt spanking by the US Federal government and by Microsoft Certified Partners who have to work to address their clients’ issues. (How do I know this? On Wednesday, June 8, 2024, at the TechnoSecurity & Digital Forensics Conference, someone told me, “I have to take a break. The security problems with Microsoft are killing me.”)

- The “leadership” at Microsoft is loved by Wall Street. However, others fail to respond with hearts and flowers.

Net net: Microsoft poses a grave security threat to government agencies and the users of Microsoft products. Talking with dulcet tones may make some people happy. I think there are others who believe Microsoft wants government contracts. Its employees want an easy life, money, and respect. Would you hire a former Microsoft security professional? This is not a question of trust; this is a question of malfeasance. Smooth talking is the priority, not security.

Stephen E Arnold, June 11, 2024

Will AI Kill Us All? No, But the Hype Can Be Damaging to Mental Health

June 11, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.


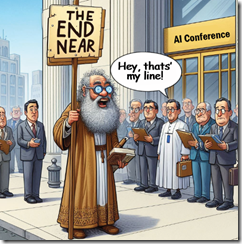

I missed the talk about how AI will kill us all. Planned? Nah, heavy traffic. From what I heard, none of the cyber investigators believed the person trying hard to frighten law enforcement cyber investigators. There are other, slightly more tangible threats. One of the attendees whose name I did not bother to remember asked me, “What do you think about artificial intelligence?” My answer was, “Meh.”

A contrarian walks alone. Why? It is hard to make money being negative. At the conference I attended June 4, 5, and 6, attendees with whom I spoke just did not care. Thanks, MSFT Copilot. Good enough.

Why, you may ask? My method of handling the question is to refer to articles like this: “AI Appears to Rapidly Be Approaching a Brick Wall Where It Can’t Get Smarter.” This write-up offers an opinion not popular among the AI cheerleaders:

“Researchers are ringing the alarm bells, warning that companies like OpenAI and Google are rapidly running out of human-written training data for their AI models. And without new training data, it’s likely the models won’t be able to get any smarter, a point of reckoning for the burgeoning AI industry.”

Like the argument that AI will change everything, this claim applies to systems based upon indexing human content. I am reasonably certain that more advanced smart software with different concepts will emerge. I am not holding my breath because much of the current AI hoo-hah has been gestating longer than a newborn baby elephant.

So what’s with the doom pitch? Law enforcement apparently does not buy the idea. My team doesn’t. For the foreseeable future, applied smart software operating within some boundaries will allow some tasks to be completed quickly and with acceptable reliability. Robocop is not likely for a while.

One interesting question is why the polarization exists. First, it is easy. And, second, one can cash in. If one is a cheerleader, one can invest in a promising AI start-up and make (in theory) oodles of money. By being a contrarian, one can tap into the segment of people who think the sky is falling. Being a contrarian is “different.” Plus, by predicting implosion and the end of life, one can get attention. That’s okay. I try to avoid being the eccentric carrying a sign.

The current AI bubble relies in a significant way on a Google recipe: Indexing text. The approach reflects Google’s baked in biases. It indexes the Web; therefore, it should be able to answer questions by plucking factoids. Sorry, that doesn’t work. Glue cheese to pizza? Sure.

Hopefully new lines of investigation may reveal different approaches. I am skeptical about synthetic data (made-up data that is probably correct). My fear is that we will require another 10, 20, or 30 years of research to move beyond shuffling content blocks around. There has to be a higher level of abstraction operating. But machines are machines, and wetware (the human brain) is different.

Will life end? Probably but not because of AI unless someone turns over nuclear launches to “smart” software. In that case, the crazy eccentric could be on the beam.

Stephen E Arnold, June 11, 2024

AI and Ethical Concerns: Sure, When “Ethics” Means Money

June 11, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.


It seems workers continue to flee OpenAI over ethical concerns. The Byte reports, “Another OpenAI Researcher Quits, Issuing Cryptic Warning.” Understandably unwilling to disclose details, policy researcher Gretchen Krueger announced her resignation on X. She did express a few of her concerns in broad strokes:

“We need to do more to improve foundational things, like decision-making processes; accountability; transparency; documentation; policy enforcement; the care with which we use our own technology; and mitigations for impacts on inequality, rights, and the environment.”

Krueger emphasized that these issues not only affect communities now but also influence who controls the direction of pervasive AI systems in the future. Right now, that control is in the hands of the tech bros running AI firms. Writer Maggie Harrison Dupré notes Krueger’s departure comes as OpenAI is dealing with a couple of scandals. Other high-profile resignations have also occurred in recent months. We are reminded:

“[Recent] departures include that of Ilya Sutskever, who served as OpenAI’s chief scientist, and Jan Leike, a top researcher on the company’s now-dismantled ’Superalignment’ safety team — which, in short, was the division effectively in charge of ensuring that a still-theoretical human-level AI wouldn’t go rogue and kill us all. Or something like that. Sutskever was also a leader within the Superalignment division. And to that end, it feels very notable that all three of these now-ex-OpenAI workers were those who worked on safety and policy initiatives. It’s almost as if, for some reason, they felt as though they were unable to successfully do their job in ensuring the safety and security of OpenAI’s products — part of which, of course, would reasonably include creating pathways for holding leadership accountable for their choices.”

Yes, most of us would find that reasonable. For members of that leadership, though, it seems escaping accountability is a top priority.

Cynthia Murrell, June 11, 2024