Another Cultural Milestone for Social Media

April 16, 2024

Well this is an interesting report. PsyPost reports, “Researchers Uncover ‘Pornification’ Trend Among Female Streamers on Twitch.” Authored by Kristel Anciones-Anguita and Mirian Checa-Romero, the study was published in the Humanities and Social Sciences Communications journal. The team analyzed clips from 1,920 livestreams on Twitch.tv, a platform with a global daily viewership of 3 million. They found women streamers sexualize their presentations much more often, and more intensely, than the men. Also, the number of sexy streams depends on the category. Not surprisingly, broadcasters in categories like ASMR and “Pools, Hot Tubs & Beaches” are more self-sexualized than, say, gamer girls. Shocking, we know.

The findings are of interest because Twitch broadcasters formulate their own images, as opposed to performers on traditional media. There is a longstanding debate, even among feminists, whether using sex to sell oneself is empowering or oppressive. Or maybe both. Writer Eric W. Dolan notes:

“Studies on traditional media (such as TV and movies) have extensively documented the sexualization of women and its consequences. However, the interactive and user-driven nature of new digital platforms like Twitch.tv presents new dynamics that warrant exploration, especially as they become integral to daily entertainment and social interaction. … This autonomy raises questions about the factors driving self-sexualization, including societal pressures, the pursuit of popularity, and the platform’s economic incentives.”

Or maybe women are making fully informed choices and framing them as victims of outside pressure is condescending. Just a thought. The issue gets more murky when the subjects, or their audiences, are underage. The write-up observes:

“These patterns of self-sexualization also have potential implications for the shaping of audience attitudes towards gender and sexuality. … ‘Our long-term goals for this line of research include deepening our understanding of how online sexualized culture affects adolescent girls and boys and how we can work to create more inclusive and healthy online communities,’ Anciones-Anguita said. ‘This study is just the beginning, and there is much more to explore in terms of the pornification of culture and its psychological impact on users.’”

Indeed there is. See the article for more details on what the study considered “sexualization” and what it found.

Cynthia Murrell, April 16, 2024

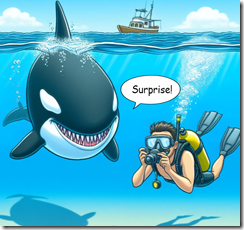

Social Media: Do You See the Hungry Shark?

April 2, 2024

This essay is the work of a dumb dinobaby. No smart software required.

After years of social media’s diffusion, those who mostly ignored how flows of user-generated content work like a body shop’s sandblaster are starting to take notice. Now that societal structures are revealing cracks in the drywall and damp basements, I have noticed an uptick in chatter about Facebook- and TikTok-type services. A recent example of Big Thinkers’ wrestling with what is a quite publicly visible behavior of mobile phone fiddling is the write up in Nature “The Great Rewiring: Is Social Media Really Behind an Epidemic of Teenage Mental Illness?”

Thanks, MSFT Copilot. How is your security initiative coming along? Ah, good enough.

The article raises an interesting question: Are social media and mobile phones the cause of what many of my friends and colleagues see as a very visible disintegration of social conventions? The fabric of civil behavior seems to be fraying and maybe coming apart. I am not sure the local news in the Midwest region where I live reports the shootings that seem to occur with some regularity.

The write up (possibly written by a person who uses social media and demonstrates polished swiping techniques) wrestles with the possibility that the unholy marriage of social media and mobile devices may not be the “problem.” The notion that other factors come into play is an example of an established source of information working hard to take a balanced, rational approach to what is the standard of behavior.

The write up says:

Two things can be independently true about social media. First, that there is no evidence that using these platforms is rewiring children’s brains or driving an epidemic of mental illness. Second, that considerable reforms to these platforms are required, given how much time young people spend on them.

Then the article wraps up with this statement:

A third truth is that we have a generation in crisis and in desperate need of the best of what science and evidence-based solutions can offer. Unfortunately, our time is being spent telling stories that are unsupported by research and that do little to support young people who need, and deserve, more.

Let me offer several observations:

- The corrosive effect of digital information flows is simply not on the radar of those who “think about” social media. Consequently, the inherent function of online information is overlooked, and therefore, the rational statements are fluffy.

- The only way to constrain digital information and the impact of its flows is to pull the plug. That will not happen because the drug cartel-like business models produce too much money.

- The notion that “research” will light the path forward is interesting. I cannot “trust” peer reviewed papers authored by the former president of Stanford University or the research of the former Top Dog at Harvard University’s “ethics” department. Now I am supposed to believe that “research” will provide answers. Not so fast, pal.

Net net: The failure to understand a basic truth about how online works means that fixes are not now possible. Sound gloomy? You are getting my message. Time to adapt and remain flexible. The impacts are just now being seen as more than a post-Covid or economic downturn issue. Online information is a big fish, and it remains mostly invisible. The good news is that some people have recognized that the water in the data lake has powerful currents.

Stephen E Arnold, April 2, 2024

NSO Group: Pegasus Code Wings Its Way to Meta and Mr. Zuckerberg

March 7, 2024

This essay is the work of a dumb dinobaby. No smart software required.

NSO Group’s senior managers and legal eagles will have an opportunity to become familiar with an okay Brazilian restaurant and a waffle shop. That lovable leader of Facebook, Instagram, Threads, and WhatsApp may have put a stick in the now-ageing digital bicycle doing business as NSO Group. The company’s mark is pegasus, which is a flying horse. Pegasus’s dad was Poseidon, and his mom was the knock out Gorgon Medusa, who did some innovative hair treatments. The mythical pegasus helped out other gods until Zeus stepped in and acted with extreme prejudice. Quite a myth.

Poseidon decides to kill the mythical Pegasus, not for its software, but for its getting out of bounds. Thanks, MSFT Copilot. Close enough.

Life imitates myth. “Court Orders Maker of Pegasus Spyware to Hand Over Code to WhatsApp” reports that the hand over decision:

is a major legal victory for WhatsApp, the Meta-owned communication app which has been embroiled in a lawsuit against NSO since 2019, when it alleged that the Israeli company’s spyware had been used against 1,400 WhatsApp users over a two-week period. NSO’s Pegasus code, and code for other surveillance products it sells, is seen as a closely and highly sought state secret. NSO is closely regulated by the Israeli ministry of defense, which must review and approve the sale of all licenses to foreign governments.

NSO Group hired former DHS and NSA official Stewart Baker to fix up NSO Group’s gyro compass. Mr. Baker is a podcaster and is affiliated with the law firm Steptoe and Johnson. For more color about Mr. Baker, please scan “Former DHS/NSA Official Stewart Baker Decides He Can Help NSO Group Turn A Profit.”

A decade ago, Israel’s senior officials might have been able to prevent a social media company from getting a copy of the Pegasus source code. Not anymore. Israel’s home-grown intelware technology simply did not thwart, prevent, or warn about the Hamas attack in the autumn of 2023. If NSO Group were battling in court with Harris Corp. or Textron, I would not worry. Mr. Zuckerberg’s companies are not directly involved with national security technology. From what I have heard at conferences, Mr. Zuckerberg’s commercial enterprises are responsive to law enforcement requests when a bad actor uses Facebook for an allegedly illegal activity. But Mr. Zuckerberg’s managers are really busy with higher priority tasks. Some folks engaged in investigations of serious crimes must be patient. Presumably the investigators can pass their time scrolling through #Shorts. If the Guardian’s article is accurate, now those Facebook employees can learn how Pegasus works. Will any of those learnings stick? One hopes not.

Several observations:

- Companies which make specialized software guard their systems and methods carefully. Well, that used to be true.

- The reorganization of NSO Group has not lowered the firm’s public relations profile. NSO Group can make headlines, which may not be desirable for those engaged in national security.

- Disclosure of the specific Pegasus systems and methods will get a warm, enthusiastic reception from those who exchange ideas for malware and related tools on private Telegram channels, Dark Web discussion groups, or via one of the “stealth” communication services which pop up like mushrooms after rain in rural Kentucky.

Will the software Pegasus be terminated? I remain concerned that source code revealing how to perform certain tasks may lead to downstream, unintended consequences. Specialized software companies try to operate with maximum security. Now Pegasus may be flying away unless another legal action prevents this.

Where is Zeus when one needs him?

Stephen E Arnold, March 7, 2024

Flipboard: A Pivot, But Is the Crowd Impressed?

February 26, 2024

This essay is the work of a dumb humanoid. No smart software required.

Twitter’s (X’s) downward spiral is leading to more interest in decentralized social networks like Mastodon, BlueSky, and Pixelfed. Now a certain news app is following the trend. TechCrunch reports, “Flipboard Just Brought Over 1,000 of its Social Magazines to Mastodon and the Fediverse.” One wonders: do these "flip" or just lead to blank pages and dead ends? Writer Sarah Perez tells us:

“After sensing a change in the direction that social media was headed, Flipboard last year dropped support for Twitter/X in its app, which today allows users to curate content from around the web in ‘magazines’ that are shared with other readers. In Twitter’s place, the company embraced decentralized social media, and last May became the first app to support BlueSky, Mastodon, and Pixelfed (an open source Instagram rival) all in one place. While those first integrations allowed users to read, like, reply, and post to their favorite apps from within Flipboard’s app, those interactions were made possible through APIs.”

An enthusiastic entrepreneur makes a sudden pivot on the ice. The crowd is not impressed. Good enough, MidJourney.

In December Flipboard announced it would soon support the Fediverse’s networking protocol, ActivityPub. That shift has now taken place, allowing users of decentralized platforms to access its content. Flipboard has just added 20 publishers to those that joined its testing phase in December. Each has its own native ActivityPub feed for maximum Fediverse discoverability. Flipboard’s thematic nature allows users to keep their exposure to new topics to a minimum. We learn:

“The company explains that allowing users to follow magazines instead of other accounts means they can more closely track their particular interests. While a user may be interested in the photography that someone posts, they may not want to follow their posts about politics or sports. Flipboard’s magazines, however, tend to be thematic in nature, allowing users to browse news, articles, and social posts referencing a particular topic, like healthy eating, climate tech, national security, and more.”

Perez notes other platforms are likewise trekking to the decentralized web. Medium and Mozilla have already made the move, and Instagram (Meta) is working on an ActivityPub integration for Threads. WordPress now has a plug-in for all its bloggers who wish to post to the Fediverse. With all this new interest, will ActivityPub be able to keep (or catch) up?

Our view is that news aggregation via humans may be like the young Bob Hope rising to challenge older vaudeville stars. But motion pictures threaten the entire sector. Is this happening again? Yep.

Cynthia Murrell, February 26, 2024

It Is Here: The AI Generation

February 2, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Yes, another digital generation has arrived. The last two or three have been stunning, particularly when compared to my childhood in central Illinois. We played hide and seek; now the youthful create fake Taylor Swift videos. Ah, progress.

I read “Qustodio Releases 5th Annual Report Studying Children’s Digital Habits, Born Connected: The Rise of the AI Generation.” I have zero clue if the data are actual factual. With the recent information about factual creativity at the Harvard medical brain trust, nothing will surprise me. Nevertheless, let me highlight several factoids and then, of course, offer some unwanted Beyond Search comments. Hey, it is a free blog, and I have some friskiness in my dinobaby step.

Memories. Thanks, MSFT Copilot Bing thing. Not even close to what I specified.

The sample involved “400,000 families and schools.” I don’t remember too much about my Statistics 101 course 60 years ago, but the sample size seems — interesting. Here’s what Qustodio found:

YouTube is number one for streaming, kiddies spent 60 percent more time on TikTok

How much time goes to couch potato-ing? Here’s the answer:

TikTok continued to captivate with children spending a global average of 112 minutes daily on the app – up from 107 in 2022. UK kids were particularly fond of the bottomless scroll as they racked up 127 mins/day.

Why read, play outdoors, or fiddle with a chemistry set? Just kick back and check out ASMR, being thin, and dance move videos. Sounds tasty, doesn’t it?

And what is the most popular kiddie app? Here’s the answer:

Snapchat.

If you want to buy the full report, click this link.

Several observations:

- The smart software angle may be in the full report, but the summary skirts the issue, recycling the same grim numbers: More video, less of other activities like being a child.

- Will this “generation” of people be able to differentiate reality from fake anything? My hunch is that the belief that these young folks have super tuned baloney radar may be — baloney.

- A sample of 400,000? Yeah.

Net net: I am glad to be an old dinobaby. Really, really happy.

Stephen E Arnold, February 2, 2024

Techno Feudalist Governance: Not a Signal, a Rave Sound Track

January 31, 2024

This essay is the work of a dumb dinobaby. No smart software required.

One of the UK’s watchdog outfits published a 30-page report titled “One Click Away: A Study on the Prevalence of Non-Suicidal Self Injury, Suicide, and Eating Disorder Content Accessible by Search Engines.” I suggest that you download the report if you are interested in what the consequences of poor corporate governance are. I recommend reading the document while watching your young children or grandchildren playing with their mobile phones or tablet devices.

Let me summarize the document for you because its contents provide some color and context for the upcoming US government hearings with a handful of techno feudalist companies:

Web search engines and social media services are one-click gateways to self-harm and other content some parents and guardians might deem inappropriate.

Does this report convey information relevant to the upcoming testimony of selected large US technology companies in the Senate? I want to say, “Yes.” However, the realistic answer is, “No.”

Techmeme, an online information service, displayed its interest in the testimony with these headlines on January 31, 2024:

Screenshots are often difficult to read. The main story is from the weird orange newspaper whose content is presented under this Techmeme headline:

Ahead of the Senate Hearing, Mark Zuckerberg Calls for Requiring Apple and Google to Verify Ages via App Stores…

Ah, ha, is this a red herring intended to point the finger at outfits not on the hot seat in the true blue Senate hearing room?

The New York Times reports on a popular DC activity: A document reveal:

Ahead of the Senate Hearing, US Lawmakers Release 90 Pages of Internal Meta Emails…

And to remind everyone that an allegedly China linked social media service wants to do the right thing (of course!), Bloomberg’s angle is:

In Prepared Senate Testimony, TikTok CEO Shou Chew Says the Company Plans to Spend $2B+ in 2024 on Trust and Safety Globally…

Therefore, the Senate hearing on January 31, 2024 is moving forward.

What will be the major take-away from today’s event? I would suggest an opportunity for those testifying to say, “Senator, thank you for the question” and “I don’t have that information. I will provide that information when I return to my office.”

And the UK report? What? And the internal governance of certain decisions related to safety in the techno feudal firms? Secondary to generating revenue perhaps?

Stephen E Arnold, January 31, 2024

Why Stuff Does Not Work: Airplane Doors, Health Care Services, and Cyber Security Systems, Among Others

January 26, 2024

This essay is the work of a dumb dinobaby. No smart software required.

“The Downward Spiral of Technology” struck a chord with me. Think about building monuments in the reign of Cleopatra. The workers can check out the sphinx and giant stone blocks in the pyramids and ask, “What happened to the technology? We are banging with bronze and crappy metal compounds and those ancient dudes were zipping along with snappier tech.” That conversation is imaginary, of course.

The author of “The Downward Spiral” is focusing on less dusty technology, but the theme might resonate with my made up stone workers. Modern technology lacks some of the zing of the older methods. The essay by Thomas Klaffke hit on some themes my team has shared whilst stuffing Five Guys’s burgers in their shark-like mouths.

Here are several points I want to highlight. In closing, I will offer some of my team’s observations on the outcome of the Icarus emulators.

First, let’s think about search. One cannot do anything unless one can find electronic content. (Lawyers, please, don’t tell me you have associates work through the mostly-for-show books in your offices. You use online services. Your opponents in court print stuff out to make life miserable. But electronic content is the cat’s pajamas in my opinion.)

Here’s a table from the Mr. Klaffke essay:

Two things are important in this comparison of the “old” tech and the “new” tech deployed by the estimable Google outfit. Number one: Search in Google’s early days made an attempt to provide content relevant to the query. The system was reasonably good, but it was not perfect. Messrs. Brin and Page fancy danced around issues like disambiguation, date and time data, date and time of crawl, and forward and rearward truncation. Flash forward to the present day, the massive contributions of Prabhakar Raghavan and other “in charge of search” deliver irrelevant information. To find useful material, navigate to a Google Dorks service and use those tips and tricks. Otherwise, forget it and give Swisscows.com, StartPage.com, or Yandex.com a whirl. You are correct. I don’t use the smart Web search engines. I am a dinobaby, and I don’t want thresholds set by a 20 year old filtering information for me. Thanks but no thanks.

The second point is that search today is a monopoly. It takes specialized expertise to find useful, actionable, and accurate information. Most people, even those with law degrees, MBAs, and the ability to copy and paste code, cannot cope with provenance, verification, validation, and informed filtering performed by a subject matter expert. Baloney does not work in my corner of the world. Baloney is not a favorite food group for me or those who are on my team. Kudos to Mr. Klaffke for making this point. Let’s hope someone listens. I have given up trying to communicate the intellectual issues lousy search and retrieval creates. Good enough. Nope.

Yep, some of today’s tools are less effective than modern gizmos. Hey, how about those new mobile phones? Thanks, MSFT Copilot Bing thing. Good enough. How’s the MSFT email security today? Oh, I asked that already.

Second, Mr. Klaffke gently reminds his reader that most people do not know snow cones from Shinola when it comes to information. Most people assume that a computer output is correct. This is just plain stupid. He provides some useful examples of problems with hardware and user behavior. Are his examples ones that will change behaviors? Nope. It is, in my opinion, too late. Information is an undifferentiated haze of words, phrases, ideas, facts, and opinions. Living in a haze and letting signals from online emitters guide one is a good way to run a tiny boat into a big reef. Enjoy the swim.

Third, Mr. Klaffke introduces the plumbing of the good-enough mentality. He is accurate. Some major social functions are broken. At lunch today, I mentioned the writings about ethics by John Dewey and William James. My point was that these fellows wrote about behavior associated with a world long gone. It would be trendy to wear a top hat and ride in a horse drawn carriage. It would not be trendy to expect that a person would work and do his or her best to do a good job for the agreed-upon wage. Today I watched a worker who played with his mobile phone instead of stocking the shelves in the local grocery store. That’s the norm. Good enough is plenty good. Why work? Just pay me, and I will check out Instagram.

I do not agree with Mr. Klaffke’s closing statement; to wit:

The problem is not that the “machine” of humanity, of earth is broken and therefore needs an upgrade. The problem is that we think of it as a “machine”.

The problem is that worldwide shared values and cultural norms are eroding. Once the glue gives way, we are in deep doo doo.

Here are my observations:

- No entity, including governments, can do anything to reverse thousands of years of cultural accretion of norms, standards, and shared beliefs.

- The vast majority of people alive today are reverting back to some fascinating behaviors. “Fascinating” is not a positive in the sense in which I am using the word.

- Online has accelerated the stress on social glue; smart software is the turbocharger of abrupt, hard-to-understand change.

Net net: Please, read Mr. Klaffke’s essay. You may have an idea for remediating one or more of today’s challenges.

Stephen E Arnold, January 25, 2024

How Do You Foster Echo Bias, Not Fake, But Real?

January 24, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Research is supposed to lead to the truth. It used to when research was limited and controlled by publishers, news bureaus, and other venues. The Internet liberated information access but it also unleashed a torrent of lies. When these lies are stacked and manipulated by algorithms, they become powerful and near factual. Nieman Lab relates how a new study shows the power of confirmation in, “Asking People ‘To Do The Research’ On Fake News Stories Makes Them Seem More Believable, Not Less.”

Nature reported on a paper by Kevin Aslett, Zeve Sanderson, William Godel, Nathaniel Persily, Jonathan Nagler, and Joshua A. Tucker. The paper abstract includes the following:

“Here, across five experiments, we present consistent evidence that online search to evaluate the truthfulness of false news articles actually increases the probability of believing them. To shed light on this relationship, we combine survey data with digital trace data collected using a custom browser extension. We find that the search effect is concentrated among individuals for whom search engines return lower-quality information. Our results indicate that those who search online to evaluate misinformation risk falling into data voids, or informational spaces in which there is corroborating evidence from low-quality sources. We also find consistent evidence that searching online to evaluate news increases belief in true news from low-quality sources, but inconsistent evidence that it increases belief in true news from mainstream sources. Our findings highlight the need for media literacy programs to ground their recommendations in empirically tested strategies and for search engines to invest in solutions to the challenges identified here.”

All of the tests were similar in that they asked participants to evaluate news articles that had been rated “false or misleading” by professional fact checkers. They were asked to read the articles, research and evaluate the stories online, and decide if the fact checkers were correct. Controls were participants who were asked not to research stories.

The tests revealed that searching online increases misinformation belief. The fifth test in the study explained that exposure to lower-quality information in search results increased the probability of believing in false news.

The culprit for bad search engine results is data voids akin to rabbit holes of misinformation paired with SEO techniques to manipulate people. People with higher media literacy skills know how to better use search engines like Google to evaluate news. People with poor media literacy don’t know how to alter their search queries. Usually they type in a headline and their results are filled with junk.

What do we do? We need to revamp media literacy, force search engines to limit the number of paid links at the top of results, and stop chasing sensationalism.

Whitney Grace, January 24, 2024

TikTok Weaponized? Who Knows

January 10, 2024

This essay is the work of a dumb dinobaby. No smart software required.

“TikTok Restricts Hashtag Search Tool Used by Researchers to Assess Content on Its Platform” makes clear that transparency from a commercial entity is a work in progress or regress as the case may be. NBC reports:

TikTok has restricted one tool researchers use to analyze popular videos, a move that follows a barrage of criticism directed at the social media platform about content related to the Israel-Hamas war and a study that questioned whether the company was suppressing topics that don’t align with the interests of the Chinese government. TikTok’s Creative Center – which is available for anyone to use but is geared towards helping brands and advertisers see what’s trending on the app – no longer allows users to search for specific hashtags, including innocuous ones.

An advisor to TikTok who works at a Big Time American University tells his students that they are not permitted to view the data the mad professor has gathered as part of his consulting work for a certain company affiliated with the Middle Kingdom. The students don’t seem to care. Each is viewing TikTok videos about restaurants serving super sized burritos. Thanks, MSFT Copilot Bing thing. Good enough.

Does anyone really care?

Those with sympathy for the China-linked service do. The easiest way to reduce the hassling from annoying academic researchers or analysts at non-governmental organizations is to become less transparent. The method has proven its value to other firms.

Several observations can be offered:

- TikTok is an immensely influential online service for young people. Blocking access to data about what’s available via TikTok and who accesses certain data underscores the weakness of certain US governmental entities. TikTok does something to reduce transparency and what happens? NBC News does a report. Big whoop as one of my team likes to say.

- Reduced transparency means that scrutiny becomes more difficult. That decision immediately increases my suspicion level about TikTok. The action makes clear that transparency creates unwanted scrutiny and criticism. The idea is, “Let’s kill that fast.”

- TikTok competitors have their work cut out for them. No longer can their analysts gather information directly. Third party firms can assemble TikTok data, but that is often slow and expensive. Competing with TikTok becomes a bit more difficult, right, Google?

To sum up, social media short form content can be weaponized. The value of a weapon is greater when its true nature is not known, right, TikTok?

Stephen E Arnold, January 10, 2024

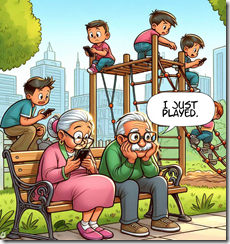

Kiddie Control: Money and Power. What Is Not to Like?

January 2, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I want to believe outputs from Harvard University. But the ethics professor who made up data about ethics and the more recent publicity magnet from the possibly former university president nag at me. Nevertheless, let’s assume that some of the data in “Social Media Companies Made $11 Billion in US Ad Revenue from Minors, Harvard Study Finds” are semi-correct or at least close enough for horseshoes. (You may encounter a paywall or a 404 error. Well, just trust a free Web search system to point you to a live version of the story. I admit that I was lucky. The link from my colleague worked.)

The senior executive sets the agenda for the “exploit the kiddies” meeting. Control is important. Ignorant children learn whom to trust, believe, and follow. Does this objective require an esteemed outfit like the Harvard U. to state the obvious? Seems like it. Thanks, MSFT Copilot, you output child art without complaint. Consistency is not your core competency, is it?

Check this statement from the write up, whose authors I hope are not crossing their fingers like some young people do to neutralize a lie:

The researchers say the findings show a need for government regulation of social media since the companies that stand to make money from children who use their platforms have failed to meaningfully self-regulate. They note such regulations, as well as greater transparency from tech companies, could help alleviate harms to youth mental health and curtail potentially harmful advertising practices that target children and adolescents.

The sentences contain what I think are silly observations. “Self regulation” is a bit of a sci-fi notion in today’s get-rich-quick high-technology business environment. The idea of getting possible oligopolists together to set some rules that might hurt revenue generation is something from an alternative world. Plus, the concept of “government regulation” strikes me as a punch line for a stand up comedy act. How are regulatory agencies and elected officials addressing the world of digital influencing? Answer: Sorry, no regulation. The big outfits are in many situations the government. What elected official or Washington senior executive service professional wants to do something that cuts off the flow of nifty swag from the technology giants? Answer: No one. Love those mouse pads, right?

Now consider these numbers which are going to be tough to validate. Have you tried to ask TikTok about its revenue? What about that much-loved Google? Nevertheless, these are interesting if squishy:

According to the Harvard study, YouTube derived the greatest ad revenue from users 12 and under ($959.1 million), followed by Instagram ($801.1 million) and Facebook ($137.2 million). Instagram, meanwhile, derived the greatest ad revenue from users aged 13-17 ($4 billion), followed by TikTok ($2 billion) and YouTube ($1.2 billion). The researchers also estimate that Snapchat derived the greatest share of its overall 2022 ad revenue from users under 18 (41%), followed by TikTok (35%), YouTube (27%), and Instagram (16%).

The money is good. But let’s think about the context for the revenue. Is there another payoff from hooking minors on a particular firm’s digital content?

Control. Great idea. Self regulation will definitely address that issue.

Stephen E Arnold, January 2, 2024