Thinking about AI Doom: Cheerful, Right?

July 22, 2024

This essay is the work of a dumb humanoid. No smart software required.

I am not much of a philosopher psychologist academic type. I am a dinobaby, and I have lived through a number of revolutions. I am not going to list the “next big things” that have roiled the world since I blundered into existence. I am changing my mind. I have memories of crouching in the hall at Oxon Hill Grade School in Maryland. We were practicing for the atomic bomb attack on Washington, DC. I think I was in the second grade. Exciting.

The AI-powered robot wants the future experts in hermeneutics to be more accepting of the technology. Looks like the robot is failing big time. Thanks, MSFT Copilot. Got those fixes deployed to the airlines yet?

Now another “atomic bomb” is doing the James Bond countdown: 009, 008, and then James cuts the wire at 007. The world was saved for another James Bond sequel. Wow, that was close.

I just read “Not Yet Panicking about AI? You Should Be – There’s Little Time Left to Rein It In.” The essay seems to be a trifle dark. Here’s a snippet I circled:

With technological means, we have accomplished what hermeneutics has long dreamed of: we have made language itself speak.

Thanks to Dr. Francis Chivers, one of my teachers at Duquesne University, I actually know a little bit about hermeneutics. May I share?

Hermeneutics is the theory and methodology of interpretation of words and writings. One should consider content in its historical, cultural, and linguistic context. The idea is to figure out the underlying messages, intentions, and implications of texts by doing academic gymnastics.

Now the killer statement:

Jacques Lacan was right; language is dark and obscene in its depths.

I presume you know well the work of Jacques Lacan. But if you have forgotten, the canny psychologist got himself kicked out of the International Psychoanalytic Association (no mean feat as I recall) for his ideas about desire. Think Freud on steroids.

The write up uses these everyday references to make the point:

If our governments summon the collective will, they are very strong. Something can still be done to rein in AI’s powers and protect life as we know it. But probably not for much longer.

Okay. AI is going to screw up the world. I think I have heard that assertion when my father told me about the computer lecture he attended at an accounting refresher class. That fear he manifested because he thought he would lose his job to a machine attracted me to the dark unknown of zeros and ones.

How did that turn out? He kept his job. I think mankind has muddled through the computer revolution, the space revolution, the wonder drug revolution, the automation revolution, yada yada.

News flash: AI has been around since long before the whiz kids at Google disclosed Transformers. I think the author of this somewhat fearful write up is similar to my father, who projected on computerized accounting his fear that he would be harmed by punched cards.

Take a deep breath. The sun will come up tomorrow morning. People who know about hermeneutics and Jacques Lacan will be able to ponder the nature of text and behavior. In short, worry less. Be less AI-phobic. The technology is here and it is not going away, getting under the thumb of any one government including China’s, or causing eternal darkness. Sorry to disappoint you.

Stephen E Arnold, July 22, 2024

A Windows Expert Realizes Suddenly Last Outage Is a Rerun

July 22, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I love poohbahs. One quite interesting online outlet I consult occasionally continues to be quite enthusiastic for all things Microsoft. I spotted a write up about the Crowdstrike matter and its unfortunate downstream consequences for a handful of really tolerant people using its cyber security software. The absolute gem of a write up which arrested my attention was “As the World Suffers a Global IT Apocalypse, What’s More Worrying is How Easy It Is for This to Happen.” The article discloses a certain blind spot among a few Windows cheerleaders. (I thought the Apple fan core was the top of the marketing mountain. I was wrong again, a common problem for a dinobaby like me.)

Is the blue screen plague like the sinking of the Swedish flagship Vasa? Thanks, OpenAI. Good enough.

The subtitle is even more striking. Here it is:

Nefarious actors might not be to blame this time, but it should serve as a warning to us all how fragile our technology is.

Who knew? Perhaps those affected by the flood of notable cyber breaches. Norton Hospital, Solarwinds, the US government, et al are examples which come to mind.

To what does the word “nefarious” refer? Perhaps it is one of those massive, evil, 24×7 gangs of cyber thugs which work to find the very, very few flaws in Microsoft software? Could it be cyber security professionals who think about security only when something bad (note this) like a global outage occurs and the flaws in their procedures or code allow people to spend the night in airports or have their surgeries postponed?

The article states:

When you purchase through links on our site, we may earn an affiliate commission. Here’s how it works.

I find it interesting that the money-raising information appears before the stunning insights in the article.

The article reveals this pivotal item of information:

It’s an unprecedented situation around the globe, with banks, healthcare, airlines, TV stations, all affected by it. While Crowdstrike has confirmed this isn’t the result of any type of hack, it’s still incredibly alarming. One piece of software has crippled large parts of industry all across the planet. That’s what worries me.

Ah, a useful moment of recognizing the real world. Quite a leap for those who find certain companies a source of calm and professionalism. I am definitely glad Windows Central, the publisher of this essay, is worried about concentration of technology and the downstream dependencies. Worry only when a cyber problem takes down banks, emergency call services, and other technologically-dependent outfits.

But here’s the moment of insight for the Windows Central outfit. I can hear “Eureka!” echoing in the workspace of this intrepid collection of poohbahs:

This time we’re ‘lucky’ in the sense it wasn’t some bad actors causing deliberate chaos.

Then the write up offers this stunning insight after decades of “good enough” software:

This stuff is all too easy. Bad actors can target a single source and cripple millions of computers, many of which are essential.

Holy Toledo. I am stunned with the brilliance of the observations in the article. I do have several thoughts from my humble office in rural Kentucky:

- A Windows cheerleading outfit is sort of admitting that technology concentration where “good enough” is excellence creates a global risk. I mean who knew? The Apple and Linux systems running Crowdstrike’s estimable software were not affected. Is this a Windows thing, this global collapse?

- Awareness of prior security and programming flaws simply did not exist for the author of the essay. I can understand why Windows Central found the Windows folding phone and the first-generation Windows on Arm PCs absolutely outstanding.

- Computer science students in a number of countries learn online and at school how to look for similar configuration vulnerabilities in software and exploit them. The objective is to steal, cripple, or start up a cyber security company and make oodles of money. Incidents like this global outage are a road map for some folks, good and not so good.

My take away from this write up is that those who only worry when a global problem arises from what seems to be US-managed technology have not been paying attention. Online security is the big 17th century Swedish flagship Vasa (Wasa). Upon launch, the marine architect and assorted influential government types watched that puppy sink.

But the problem with the most recent and quite spectacular cyber security goof is that it happened to Microsoft and not to Apple or Linux systems. Perhaps there is a lesson in this fascinating example of modern cyber practices?

Stephen E Arnold, July 22, 2024

The Logic of Good Enough: GitHub

July 22, 2024

What happens when a big company takes over a good thing? Here is one possible answer. Microsoft acquired GitHub in 2018. Now, “‘GitHub’ Is Starting to Feel Like Legacy Software,” according to Misty De Méo at The Future Is Now blog. And by “legacy,” she means outdated and malfunctioning. Paint us unsurprised.

De Méo describes being unable to use a GitHub feature she had relied on for years: the blame tool. She shares her process of tracking down what went wrong. Development geeks can see the write-up for details. The point is, in De Méo’s expert opinion, those now in charge made a glaring mistake. She observes:

“The corporate branding, the new ‘AI-powered developer platform’ slogan, makes it clear that what I think of as ‘GitHub’—the traditional website, what are to me the core features—simply isn’t Microsoft’s priority at this point in time. I know many talented people at GitHub who care, but the company’s priorities just don’t seem to value what I value about the service. This isn’t an anti-AI statement so much as a recognition that the tool I still need to use every day is past its prime. Copilot isn’t navigating the website for me, replacing my need to use the website as it exists today. I’ve had tools hit this phase of decline and turn it around, but I’m not optimistic. It’s still plenty usable now, and probably will be for some years to come, but I’ll want to know what other options I have now rather than when things get worse than this.”

The post concludes with a plea for fellow developers to send De Méo any suggestions for GitHub alternatives and, in particular, a good local blame tool. Let us just hope any viable alternatives do not also get snapped up by big tech firms anytime soon.

Cynthia Murrell, July 23, 2024

Anarchist Content Links: Zines Live

July 19, 2024

![dinosaur30a_thumb_thumb_thumb_thumb_[1]_thumb dinosaur30a_thumb_thumb_thumb_thumb_[1]_thumb](https://arnoldit.com/wordpress/wp-content/uploads/2024/07/dinosaur30a_thumb_thumb_thumb_thumb_1_thumb_thumb.gif) This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

One of my team called my attention to “Library. It’s Going Down Reading Library.” I know I am not clued into the lingo of anarchists. As a result, the Library … Library rhetoric just put me on a slow blinking yellow alert or, emulating the linguistic style of It’s Going Down, “Alert. It’s Slow Blinking Alert.”

Syntactical musings behind me, the list includes links to publications focused on fostering even more chaos than one encounters at a Costco or a Southwest Airlines boarding gate. The content of these publications is thought provoking to some and others may be reassured that tearing down may be more interesting than building up.

The publications are grouped in categories. Let me list a handful:

- Antifascism

- Anti-Politics

- Anti-Prison, Anti-Police, and Counter-Insurgency.

Personally I would have presented antifascism as anti-fascism to be consistent with the other antis, but that’s what anarchy suggests, doesn’t it?

When one clicks on a category, the viewer is presented with a curated list of “going down” related content. Here’s a listing of what’s on offer for the topic AI has made great again, Automation:

Livewire: Against Automation, Against UBI, Against Capital

If one wants more “controversial” content, one can visit these links:

Each of these has the “zine” vibe and provides useful information. I noted the lingo and the names of the authors. It is often helpful to have an entity with which one can associate certain interesting topics.

My take on these modest collections: Some folks are quite agitated and want to make life more challenging than an 80-year-old dinobaby finds it. (But zines remind me of weird newsprint or photocopied booklets in the 1970s or so.) If the content of these publications is accurate, we have not “zine” anything yet.

Stephen E Arnold, July 19, 2024

Students, Rejoice. AI Text Is Tough to Detect

July 19, 2024

While the robot apocalypse is still a long way in the future, AI algorithms are already changing the dynamics of work, school, and the arts. It’s an unfortunate consequence of advancing technology, and a line in the sand needs to be drawn and upheld about appropriate uses of AI. A real world example was published in the journal PLOS ONE: “A Real-World Test Of Artificial Intelligence Infiltration Of A University Examinations System: A ‘Turing Test’ Case Study.”

Students are always searching for ways to cheat the education system. ChatGPT and other generative text AI algorithms are the ultimate cheating tool. Schools and universities don’t have systems in place to verify that student work isn’t artificially generated. Beyond undermining students’ learning of essential knowledge and practice of core skills, AI threatens the way students are assessed.

The creators of the study researched a question we’ve all been asking: Can AI pass as a real human student? While the younger set aren’t the sharpest pencils, it’s still hard to replicate human behavior. Or is it?

“We report a rigorous, blind study in which we injected 100% AI written submissions into the examinations system in five undergraduate modules, across all years of study, for a BSc degree in Psychology at a reputable UK university. We found that 94% of our AI submissions were undetected. The grades awarded to our AI submissions were on average half a grade boundary higher than that achieved by real students. Across modules there was an 83.4% chance that the AI submissions on a module would outperform a random selection of the same number of real student submissions.”

The AI exams and assignments received better grades than those written by real humans. Computers have consistently outperformed humans in what they’re programmed to do: performing calculations, playing chess, and doing repetitive tasks. Student work, such as writing essays, taking exams, and unfortunate busy work, is repetitive and monotonous. It’s easily replicated by AI and it’s not surprising the algorithms perform better. It’s what they’re programmed to do.

The problem isn’t that AI exists. The problem is that there aren’t processes in place to verify student work and humans will cave to temptation via the easy route.

Whitney Grace, July 19, 2024

Soft Fraud: A Helpful List

July 18, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

For several years I have included references to what I call “soft fraud” in my lectures. I like to toss in examples of outfits getting cute with fine print, expiration dates for offers, and weasels on eBay asserting that the US Post Office issued a bad tracking number. I capture the example, jot down the vendor’s name, and tuck it away. The term “soft fraud” refers to an intentional practice designed to extract money or an action from a user. The user typically assumes that the soft fraud pitch is legitimate. It’s not. Soft fraud is a bit like a con man selling an honest card game in Manhattan. Nope. Crooked by design (the phrase is a variant of the publisher of this listing).

I spotted a write up called “Catalog of Dark Patterns.” The Hall of Shame.design online site has done a good job of providing a handy taxonomy of soft fraud tactics. Here are three of the categories:

- Privacy Zuckering

- Roach motel

- Trick questions

The Dark Patterns write up then populates each of the 10 categories with some examples. If the examples presented are not sufficient, a “View All” button allows the person interested in soft fraud to obtain a bit more color.

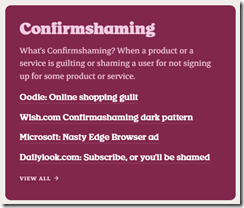

Here’s an example of the category “Confirmshaming”:

My suggestion to the Hall of Shame team is to move away from “too cute” categories. The naming might be clever, but a person searching for examples of soft fraud might not know the phrase “Privacy Zuckering.” Yes, I know that I have been guilty of writing phrases like “zucked up,” but I am not creating a useful list. I am a dinobaby having a great time at 80 years of age.

Net net: Anyone interested in soft fraud will find this a useful compilation. Hopefully practitioners of soft fraud will be shunned. Maybe a couple of these outfits would be subject to some regulatory scrutiny? Hopefully.

Stephen E Arnold, July 18, 2024

Looking for the Next Big Thing? The Truth Revealed

July 18, 2024

![dinosaur30a_thumb_thumb_thumb_thumb_[1] dinosaur30a_thumb_thumb_thumb_thumb_[1]](https://arnoldit.com/wordpress/wp-content/uploads/2024/07/dinosaur30a_thumb_thumb_thumb_thumb_1_thumb.gif) This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Big means money, big money. I read “Twenty Five Years of Warehouse-Scale Computing,” authored by Googlers who definitely are into “big.” The write up is history from the point of view of engineers who built a giant online advertising and surveillance system. In today’s world, when a data topic is raised, it is big data. Everything is Texas-sized. Big is good.

This write up is a quasi-scholarly, scientific-type of sales pitch for the wonders of the Google. That’s okay. It is a literary form comparable to an epic poem or a jazzy H.L. Mencken essay from the days when people read magazines and newspapers. Let’s take a quick look at the main point of the article and then consider its implications.

I think this passage captures the zeitgeist of the Google on July 13, 2024:

From a team-culture point of view, over twenty five years of WSC design, we have learnt a few important lessons. One of them is that it is far more important to focus on “what does it mean to land” a new product or technology; after all, it was the Apollo 11 landing, not the launch, that mattered. Product launches are well understood by teams, and it’s easy to celebrate them. But a launch doesn’t by itself create success. However, landings aren’t always self-evident and require explicit definitions of success — happier users, delighted customers and partners, more efficient and robust systems – and may take longer to converge. While picking such landing metrics may not be easy, forcing that decision to be made early is essential to success; the landing is the “why” of the project.

A proud infrastructure plumber knows that his innovations allow the home owner to collect rent from AirBnB rentals. Thanks, MSFT Copilot. Interesting image because I did not specify gender or ethnicity. Does my plumber look like this? Nope.

The 13 page paper includes numerous statements which may resonate with different readers as more important. But I like this passage because it makes the point about Google’s failures. There is no reference to smart software, but for me it is tough to read any Google prose and not think in terms of Code Red, the crazy flops of Google’s AI implementations, and the protestations of Googlers about quantum supremacy or some other projection of inner insecurity the company’s geniuses concoct. Don’t you want to have an implant that makes Google’s knowledge of “facts” part of your being? America’s founding fathers were not diverse, but Google has different ideas about reality.

This passage directly addresses failure. A failure is a prelude to a soft landing or a perfect landing. The only problem with this mindset is that Google has managed one perfect landing: Its derivative online advertising business. The chatter about scale is a camouflage tarp pulled over the mad scramble to find a way to allow advertisers to pay Google money. The “invention” was forced upon those at Google who wanted those ad dollars. The engineers did many things to keep the money flowing. The “landing” is the fact that the regulators turned a blind eye to Google’s business practices and the wild and crazy engineering “fixes” worked well enough to allow more “fixes.” Somehow the mad scramble in the 25 years of “history” continues to work.

Until it doesn’t.

The case in point is Google’s response to the Microsoft OpenAI marketing play. Google’s ability to scale has not delivered. What delivers at Google is ad sales. The “scale” capabilities work quite well for advertising. How does the scale work for AI? Based on the results I have observed, the AI pullbacks suggest some issues exist.

What’s this mean? Scale and the cloud do not solve every problem or provide a slam dunk solution to a new challenge.

The write up offers a different view:

On one hand, computing demand is poised to explode, driven by growth in cloud computing and AI. On the other hand, technology scaling slowdown poses continued challenges to scale costs and energy-efficiency

Google sees that running out of chip innovations, power, cooling, and other parts of the scale story are an opportunity. Sure they are. Google’s future looks bright. Advertising has been and will be a good business. The scale thing? Plumbing. Let’s not forget what matters at Google. Selling ads and renting infrastructure to people who no longer have on-site computing resources. Google is hoping to be the AirBnB of computation. And sell ads on Tubi and other ad-supported streaming services.

Stephen E Arnold, July 18, 2024

What? Cloud Computing Costs Cut from Business Budgets

July 18, 2024

Many companies offload their data storage and computing needs to third parties aka cloud computing providers. Leveraging cloud computing was viewed as a great way to lower technology budgets, but with rising inflation and costs that perspective is changing. The BBC wrote an article about the changes in the cloud: “Are Rainy Days Ahead For Cloud Computing?”

Companies are reevaluating their investments in cloud computing, because the price tags are too high. The cloud was advertised as cheaper, easier, and faster. Businesses aren’t seeing any productivity gains. Larger companies are considering dumping the cloud and rerouting their funds to self-hosting again. Cloud computing still has its benefits, especially for smaller companies who can’t invest in their technology infrastructure. Security is another concern:

“‘A key factor in our decision was that we have highly proprietary R&D data and code that must remain strictly secure,’ says Markus Schaal, managing director at the German firm. ‘If our investments in features, patches, and games were leaked, it would be an advantage to our competitors. While the public cloud offers security features, we ultimately determined we needed outright control over our sensitive intellectual property. As our AI-assisted modelling tools advanced, we also required significantly more processing power that the cloud could not meet within budget.’”

He adds:

“We encountered occasional performance issues during heavy usage periods and limited customization options through the cloud interface. Transitioning to a privately-owned infrastructure gave us full control over hardware purchasing, software installation, and networking optimized for our workloads.”

Cloud computing has seen its golden era, but it’s not disappearing. It’s still a useful computing tool, but it won’t be the main infrastructure for companies that want to lower costs, stay within budget, and secure their software.

Whitney Grace, July 18, 2024

Stop Indexing! And Pay Up!

July 17, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I read “Apple, Nvidia, Anthropic Used Thousands of Swiped YouTube Videos to Train AI.” The write up appears in two online publications, presumably to make an already contentious subject more clicky. The assertion in the title is the equivalent of someone in Salem, Massachusetts, pointing at a widow and saying, “She’s a witch.” Those willing to take the statement at face value would take action. The “trials” were held in colonial Massachusetts. My high school history teacher was a witchcraft trial buff. (I think his name was Elmer Skaggs.) I thought about his descriptions of the events. I recall his graphic depictions and analysis of what I recall as “dunking.” The idea was that if a person was a witch, then that person could be immersed one or more times. I think the idea had been popular in medieval Europe, so it was not a New World innovation. Me-too is a core way to create novelty. The witch could survive being immersed for a period of time. With proof, hanging or burning was the next step. The accused who died was obviously not a witch. That’s Boolean logic in a pure form in my opinion.

The Library in Alexandria burns in front of people who wanted to look up information, learn, and create more information. Tough. Once the cultural institution is gone, just figure out the square root of two yourself. Thanks, MSFT Copilot. Good enough.

The accusations and evidence in the article depict companies building large language models as candidates for a test to prove that they have engaged in an improper act. The crime is processing content available on a public network, indexing it, and using the data to create outputs. Since the late 1960s, digitizing information and making it more easily accessible was perceived as an important and necessary activity. The US government supported indexing and searching of technical information. Other fields of endeavor recognized that as the volume of information expanded, the traditional method of sitting at a table, reading a book or journal article, making notes, analyzing the information, and then conducting additional research or writing a technical report was simply not fast enough. What worked in a medieval library was not a method suited to put a satellite in orbit or perform other knowledge-value tasks.

Thus, online became a thing. Remember, we are talking punched cards, mainframes, and clunky line printers. Then one day there was the Internet. The interest in broader access to online information grew and by 1985, people recognized that online access was useful for many tasks, not just looking up information about nuclear power technologies, a project I worked on in the 1970s. Flash forward 50 years, and we arrive at the moment when one can read about the “fact” that Apple, Nvidia, Anthropic Used Thousands of Swiped YouTube Videos to Train AI.

The write up says:

AI companies are generally secretive about their sources of training data, but an investigation by Proof News found some of the wealthiest AI companies in the world have used material from thousands of YouTube videos to train AI. Companies did so despite YouTube’s rules against harvesting materials from the platform without permission. Our investigation found that subtitles from 173,536 YouTube videos, siphoned from more than 48,000 channels, were used by Silicon Valley heavyweights, including Anthropic, Nvidia, Apple, and Salesforce.

I understand the surprise some experience when they learn that a software script visits a Web site, processes its content, and generates an index (a buzzy term today is large language model, but I prefer the simpler word index).

I want to point out that for decades those engaged in making information findable and accessible online have processed content so that a user can enter a query and get a list of indexed items which match that user’s query. In the old days, one used Boolean logic which we met a few moments ago. Today a user’s query (the jazzy term is prompt now) is expanded, interpreted, matched to the user’s “preferences”, and a result generated. I like lists of items like the entries I used to make on a notecard when I was a high school debate team member. Others want little essays suitable for a class assignment on the Salem witchcraft trials in Mr. Skaggs’s class. Today another system can pass a query, get outputs, and then take another action. This is described by the in-crowd as workflow orchestration. Others call it, “taking a human’s job.”
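The old-style lookup described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular search system works: the three sample documents, the function names (`build_index`, `boolean_and`, `boolean_or`, `boolean_not`), and the crude tokenizer are all assumptions made up for the example. The index maps each term to the set of documents containing it, and a Boolean query is just set arithmetic on those postings.

```python
import re
from collections import defaultdict


def build_index(docs):
    """Build an inverted index: term -> set of ids of documents containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        # Crude tokenizer (an assumption): lowercase runs of letters and digits.
        for term in re.findall(r"[a-z0-9]+", text.lower()):
            index[term].add(doc_id)
    return index


def boolean_and(index, *terms):
    """Ids of documents that contain every one of the terms."""
    postings = [index.get(t.lower(), set()) for t in terms]
    return set.intersection(*postings) if postings else set()


def boolean_or(index, *terms):
    """Ids of documents that contain at least one of the terms."""
    return set().union(*(index.get(t.lower(), set()) for t in terms))


def boolean_not(index, all_ids, term):
    """Ids of documents that do not contain the term."""
    return set(all_ids) - index.get(term.lower(), set())


# Hypothetical three-document collection, invented for illustration.
docs = {
    1: "Salem witchcraft trials in colonial Massachusetts",
    2: "Nuclear power technologies and alloy research",
    3: "Witchcraft beliefs in medieval Europe",
}
index = build_index(docs)

print(sorted(boolean_and(index, "witchcraft", "salem")))   # [1]
print(sorted(boolean_or(index, "nuclear", "medieval")))    # [2, 3]
# witchcraft AND NOT salem
print(sorted(boolean_and(index, "witchcraft") & boolean_not(index, docs, "salem")))  # [3]
```

The output is a list of matching items, the notecard-style result, rather than a generated essay. Anything resembling query expansion or preference matching would sit on top of a structure like this and is not shown.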

My point is that for decades, the index and searching process has been without much innovation. Sure, software scripts can know when to enter a user name and password or capture information from Web pages that are transitory, disappearing in the blink of an eye. But it is still indexing over a network. The object remains to find information of utility to the user or another system.

The write up reports:

Proof News contributor Alex Reisner obtained a copy of Books3, another Pile dataset and last year published a piece in The Atlantic reporting his finding that more than 180,000 books, including those written by Margaret Atwood, Michael Pollan, and Zadie Smith, had been lifted. Many authors have since sued AI companies for the unauthorized use of their work and alleged copyright violations. Similar cases have since snowballed, and the platform hosting Books3 has taken it down. In response to the suits, defendants such as Meta, OpenAI, and Bloomberg have argued their actions constitute fair use. A case against EleutherAI, which originally scraped the books and made them public, was voluntarily dismissed by the plaintiffs. Litigation in remaining cases remains in the early stages, leaving the questions surrounding permission and payment unresolved. The Pile has since been removed from its official download site, but it’s still available on file sharing services.

The passage does a good job of making clear that most people are not aware of what indexing does, how it works, and why the process has become a fundamental component of many, many modern knowledge-centric systems. The idea is to find information of value to a person with a question, present relevant content, and enable the user to think new thoughts or write another essay about dead witches being innocent.

The challenge today is that anyone who has written anything wants money. The way online works is that for any single user’s query, the useful information constitutes a tiny, minuscule fraction of the information in the index. The cost of indexing and responding to the query is high, and those costs are difficult to control.

But everyone has to be paid for the information that individual “created.” I understand the idea, but the reality is that the reason indexing, search, and retrieval were invented, refined, and given numerous life extensions was to perform a core function: Answer a question or enable learning.

The write up makes it clear that “AI companies” are witches. The US legal system is going to determine who is a witch just like the process in colonial Salem. Several observations are warranted:

- A fundamental mechanism for information retrieval may be difficult to modify, replace, or re-invent in a quick, cost-efficient, and satisfactory manner. Digital information is loosey goosey; that is, it moves, slips, and slides either by an individual’s actions or a mindless system’s.

- Slapping fines and big price tags on what remains an access service will take time to have an impact. As the implications of the impact become more well known to those who are aggrieved, they may find that their own information is altered in a fundamental way. How many research papers are “original”? How many journalists recycle as a basic work task? How many children’s lives are lost when the medical reference system does not have the data needed to treat the kid’s problem?

- Accusing companies of behaving improperly is definitely easy to do. Many companies do ignore rules, regulations, and cultural norms. Engineering Index’s publisher learned that bootleg copies of printed Compendex indexes were available in China. What was Engineering Index going to do when I learned this almost 50 years ago? The answer was give speeches, complain to those who knew what the heck a Compendex was, and talk to lawyers. What happened to the Chinese content pirates? Not much.

I do understand the anger the essay expresses toward large companies doing indexing. To some, these outfits are witches. However, if the indexing of content is derailed, I would suggest there are downstream consequences. Some of those consequences will make zero difference to anyone. A government worker at a national lab won’t be able to find details of an alloy used in a nuclear device. Who cares? Make some phone calls? Ask around. Yeah, that will work until the information is needed immediately.

A student accustomed to looking up information on a mobile phone won’t be able to find something. The document is a 404 or the information returned is an ad for a Temu product. So what? The kid will have to go to the library, which one hopes will be funded, have printed material or commercial online databases, and a librarian on duty. (Good luck, traditional researchers.) A marketing team eager to get information about the number of Telegram users in Ukraine won’t be able to find it. The fix is to hire a consultant and hope those bright men and women have a way to get a number, a single number, good, bad, or indifferent.

My concern is that as the intensity of the objections about a standard procedure for building an index escalates, the entire knowledge environment is put at risk. I have worked in online since 1962. That’s a long time. It is amazing to me that the plumbing of an information economy has been ignored for a long time. What happens when the companies doing the indexing go away? What happens when those producing the government reports, the blog posts, or the “real” news cannot find the information needed to create information? And once some information is created, how is another person going to find it? Ask an eighth grader how to use an online catalog to find a fungible book. Let me know what you learn. Better yet, do you know how to use a Remac card retrieval system?

The present concern about information access troubles me. There are mechanisms to deal with online. But the reason content is digitized is to find it, to enable understanding, and to create new information. Digital information is like gerbils. Start with a couple of journal articles, and one ends up with more journal articles. Kill this access and you get what you wanted. You know exactly who is the Salem witch.

Stephen E Arnold, July 17, 2024

Quantum Supremacy: The PR Race Shames the Google

July 17, 2024

![dinosaur30a](https://arnoldit.com/wordpress/wp-content/uploads/2024/07/dinosaur30a_thumb_thumb_thumb_thumb_1_thumb_thumb_thumb_thumb_thumb.gif) This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

The quantum computing era exists in research labs and a handful of specialized locations. The qubits are small, but the cooling system and control mechanisms are quite large. An environmentalist learning about the power consumption and climate footprint of a quantum computer might die of heart failure. But most of the worriers are thinking about AI’s power demands. Quantum computing is not a big deal. Yet.

But the title of “quantum supremacy champion” is a big deal. Sure, the community of those energized by the concept may number in the tens of thousands, but quantum computing is a big deal. Google announced a couple of years ago that it was the quantum supremacy champ. I just read “New Quantum Computer Smashes Quantum Supremacy Record by a Factor of 100 — And It Consumes 30,000 Times Less Power.” The main point of the write up in my opinion is:

A new quantum computer has broken a world record in “quantum supremacy,” topping the performance of benchmarking set by Google’s Sycamore machine by 100-fold.

Do I believe this? I am on the fence, but in quantum computing, “my super car is faster than your super car” means something to those in the game. What’s interesting to me is that the PR claim is not twice as fast as the Google’s quantum supremacy gizmo. Nor is the claim to be 10 times faster. The assertion is that a company called Quantinuum (the winner of the high-tech company naming contest with three letter “u”s, one “q” and four syllables) outperformed the Googlers by a factor of 100.

Two successful high-tech executives argue fiercely about performance. Thanks, MSFT Copilot. Good enough, and I love the quirky spelling. Is this a new feature of your smart software?

Now does the speedy quantum computer work better than one’s iPhone or Steam console? The article reports:

But in the new study, Quantinuum scientists — in partnership with JPMorgan, Caltech and Argonne National Laboratory — achieved an XEB score of approximately 0.35. This means the H2 quantum computer can produce results without producing an error 35% of the time.

To put this in context, use this system to plot your drive from your home to Texarkana. You will make it there about one out of every three multi-day drives. Close enough for horseshoes or an MVP (minimum viable product). But it is progress of sorts.

So what does the Google do? Its marketing team goes back to AI software and magically “DeepMind’s PEER Scales Language Models with Millions of Tiny Experts” appears in VentureBeat. Forget that quantum supremacy claim. The Google has “millions of tiny experts.” Millions. The PR piece reports:

DeepMind’s Parameter Efficient Expert Retrieval (PEER) architecture addresses the challenges of scaling MoE [mixture of experts and not to be confused with millions of experts (MOE)].

I know this PR story about the Google is not quantum computing related, but it illustrates the “my super car is faster than your super car” mentality.

What can one believe about Google or any other high-technology outfit talking about the performance of its system or software? I don’t believe too much, probably about 10 percent of what I read or hear.

But the constant need to be perceived as the smartest science quick recall team is now routine. Come on, geniuses, be more creative.

Stephen E Arnold, July 17, 2024