Another Open Source AI Voice Speaks: Yo, Meta!

July 3, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

The open source versus closed source software contest demonstrates ebbs and flows. Like the “go fast” with AI and “go slow” with AI, strong opinions suggest that big money and power are swirling like the storms on a weather app for Oklahoma in tornado season. The most recent EF5 is captured in “Zuckerberg Disses Closed-Source AI Competitors As Trying to Create God.” The US government seems to be concerned about open source smart software finding its way into the hands of those who are not fans of George Washington-type thinking.

Which AI philosophy will win the big pile of money? Team Blue representing the Zuck? Or, the rag tag proprietary wizards? Thanks, MSFT Copilot. You are into proprietary, aren’t you?

The “move fast and break things” personage of Mark Zuckerberg is into open source smart software. In the write up, he allegedly said in a YouTube bit:

“I don’t think that AI technology is a thing that should be kind of hoarded and … that one company gets to use it to build whatever central, single product that they’re building,” Zuckerberg said in a new YouTube interview with Kane Sutter (@Kallaway).

The write up includes this passage:

In the conversation, Zuckerberg said there needs to be a lot of different AIs that get created to reflect people’s different interests.

One interesting item in the article, in my opinion, is this:

“You want to unlock and … unleash as many people as possible trying out different things,” he continued. “I mean, that’s what culture is, right? It’s not like one group of people getting to dictate everything for people.”

But the killer Meta vision is captured in this passage:

Zuckerberg said there will be three different products ahead of convergence: display-less smart glasses, a heads-up type of display and full holographic displays. Eventually, he said that instead of neural interfaces connected to their brain, people might one day wear a wristband that picks up signals from the brain communicating with their hand. This would allow them to communicate with the neural interface by barely moving their hand. Over time, it could allow people to type, too. Zuckerberg cautioned that these types of inputs and AI experiences may not immediately replace smartphones, though. “I don’t think, in the history of technology, the new platform — it usually doesn’t completely make it that people stop using the old thing. It’s just that you use it less,” he said.

In short, the mobile phone is going down, not tomorrow, but definitely to the junk drawer.

Several observations which I know you are panting to read:

- Never underestimate making something small or re-inventing it as a different form factor. The Zuck might be “right.”

- The idea of “unleash” is interesting. What happens if employees at WhatsApp unleash themselves? How will the Zuck construct react? Like the Google? Something new like blue chip consulting firms replacing people with smart software? “Unleash” can be interpreted in different ways, but I am thinking of turning loose a pack of hyenas. The Zuck may be thinking about eager kindergartners. Who knows?

- The Zuck’s position is different from the government officials who are moving toward restrictions on “free and open” smart software. Those hallucinating large language models can be repurposed into smart weapons. “Close enough for horseshoes,” given enough RDX, may do the job.

Net net: The Zuck is an influential and very powerful information channel owner. “Unleash” what? Hungry predators or those innovating children? Perhaps neither. But as OpenAI seems to be closing, the Zuck AI is into opening. Ah, uncertainty is unfolding before my eyes in real time.

Stephen E Arnold, July 3, 2024

OpenAI: Do You Know What Open Means? Does Anyone?

July 1, 2024

This essay is the work of a dumb dinobaby. No smart software required.

The backstory for OpenAI was the concept of “open.” Well, the meaning of “open” has undergone some modification. There was a Musk up, a board coup, an Apple announcement that was vaporous, and now we arrive at the word “open” as in “OpenAI.”

Open source AI is like a barn that burned down. Hopefully the companies losing their software’s value have insurance. Once the barn is gone, those valuable animals may be gone. Thanks, MSFT Copilot. Good enough. How’s that Windows update going this week?

“OpenAI Taking Steps to Block China’s Access to Its AI Tools” reports with the same authority Bloomberg used with its “your motherboard is phoning home” crusade a few years ago [Note: If the link doesn’t render, search Bloomberg for the original story]:

OpenAI is taking additional steps to curb China’s access to artificial intelligence software, enforcing an existing policy to block users in nations outside of the territory it supports. The Microsoft Corp.-backed startup sent memos to developers in China about plans to begin blocking their access to its tools and software from July, according to screenshots posted on social media that outlets including the Securities Times reported on Tuesday. In China, local players including Alibaba Group Holding Ltd. and Tencent Holdings Ltd.-backed Zhipu AI posted notices encouraging developers to switch to their own products.

Let’s assume the information in the cited article is on the money. Yes, I know this is risky today, but do you know an 80-year-old who is not into thrills and spills?

According to Claude 3.5 Sonnet (which my team is testing), “open” means:

- Not closed or fastened

- Accessible or available

- Willing to consider or receive

- Exposed or vulnerable

The Bloomberg article includes this passage:

OpenAI supports access to its services in dozens of countries. Those accessing its products in countries not included on the list, such as China, may have their accounts blocked or suspended, according to the company’s guidelines. It’s unclear what prompted the move by OpenAI. In May, Sam Altman’s startup revealed it had cut off at least five covert influence operations in past months, saying they were using its products to manipulate public opinion.

I found this “real” news interesting:

From Baidu Inc. to startups like Zhipu, Chinese firms are trying to develop AI models that can match ChatGPT and other US industry pioneers. Beijing is openly encouraging local firms to innovate in AI, a technology it considers crucial to shoring up China’s economic and military standing.

It seems to me that “open” means closed.

Another angle surfaces in Nature’s article “Not All Open Source AI Models Are Actually Open: Here’s a Ranking.” OpenAI is not alone in doing some linguistic shaping with the word “open.” The Nature article states:

Technology giants such as Meta and Microsoft are describing their artificial intelligence (AI) models as ‘open source’ while failing to disclose important information about the underlying technology, say researchers who analysed a host of popular chatbot models. The definition of open source when it comes to AI models is not yet agreed, but advocates say that ’full’ openness boosts science, and is crucial for efforts to make AI accountable.

Now this sure sounds to me as if the European Union is defining “open” as different from the “open” of OpenAI.

Let’s step back.

Years ago I wrote a monograph about open source search. At that time IDC was undergoing what might charitably be called “turmoil.” Chapters of my monograph were published by IDC on Amazon. I recycled the material for consulting engagements, but I learned three useful things in the research for that analysis of open source search systems:

- Those making open source search systems available as free and open source software wanted the software [a] to prove their programming abilities, [b] to be a foil for a financial play best embodied in the Elastic go-public-and-sell-services “play,” or [c] to be a low-cost, no-barrier runway to locking in users; that is, a big company funds the open source software and has a way to make money every which way from the “free” bait.

- Open source software is a product testing and proof-of-concept for developers who are without a job or who are working in a programming course in a university. I witnessed this approach when I lectured in Tallinn, Estonia, in the 2000s. The “maybe this will stick” approach yields some benefits, primarily to the big outfits who co-opt an open source project and support it. When the original developer gives up or gets a job, the big outfit has its hands on the controls. Please, see [c] in item 1 above.

- Open source was a baby buzzword when I was working on my open source search research project. Now “open source” is a full-scale, AI-jargonized road map to making money.

The current mix-up in the meaning of “open” is a direct result of people wearing suits realizing that software has knowledge value. Giving value away for nothing is not smart. Hence, the US government wants to stop its nemesis from having access to open source software, specifically AI. Big companies do not want proprietary knowledge to escape unless someone pays for the beast. Individual developers want to get some fungible reward for creating “free” software. Begging for dollars, offering a disabled version of software or crippleware, or charging for engineering “support” are popular ways to move from free to ka-ching. Big companies have another angle: Lock in. Some outfits are inept; for example, IBM’s fancy dancing with Red Hat. Other companies are more clever; for instance, Microsoft with its partners and AI investments, which allow “open” to become closed, thank you very much.

Like many eddies in the flow of the technology river, change is continuous. When someone says, “Open”, keep in mind that thing may be closed and have a price tag or handcuffs.

Net net: The AI secrets have flown the coop. It has taken about 50 years to reach peak AI. The new angles revealed in the last year are not heart stoppers. See that smoking ruin over there? That’s the locked barn that burned down. Animals are gone or “transformed.”

Stephen E Arnold, July 1, 2024

Open Source Drone Mapping Software

May 30, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Photography and 3D image rendering aren’t perfect technologies, but they’ve dramatically advanced since they became readily available. Photorealistic 3D rendering was once available only to the ultra wealthy, corporations, law enforcement agencies, universities, and governments. The final products were laughable by today’s standards, but they set the foundation for technology like OpenDroneMap.

OpenDroneMap is a cartographer’s dream: software that generates 3D models, digital elevation models, point clouds, and maps from aerial images. Using only a compatible drone, the software, and a little programming know-how, users can make maps that were once the domain of specific industries. The map types include measurements, plant health, point clouds, orthomosaics, contours (topography), elevation models, ground control points, and more.

OpenDroneMap describes itself this way: “We are creating the most sustainable drone mapping software with the friendliest community on earth.” It’s also called an “open ecosystem”:

“We’re building sustainable solutions for collecting, processing, analyzing and displaying aerial data while supporting the communities built around them. Our efforts are made possible by collaborations with key organizations, individuals and with the help of our growing community.”

The software is run by a board consisting of: Imma Mwanza, Stephen Mather, Näiké Nembetwa Nzali, DK Benjamin, and Arun M. The rest of the “staff” are contributors to the various projects, mostly through GitHub.

There are many projects that combine to form the complete OpenDroneMap software. These projects include the command line toolkit, user interface, GCP detection, Python SDK, and more. Users can contribute by helping write code or by making financial donations. OpenDroneMap is a nonprofit, but it has the potential to be a company.

Open source projects like OpenDroneMap are how technology should be designed and deployed. The goal behind OpenDroneMap is to create professional, decisive software that is used for good.

Whitney Grace, May 30, 2024

Open Source and Open Doors. Bad Actors, Come On In

May 13, 2024

Open source code is awesome, because it allows developers to create projects without paying proprietary fees and it inspires innovation. Open source code, however, has problems especially when bad actors know how to exploit it. OpenSSF shares how a recent open source back door left many people vulnerable: “Open Source Security (OpenSSF) And OpenJS Foundations Issue Alert For Social Engineer Takeovers Of Open Source Projects.”

The OpenJS Foundation hosts JavaScript projects used by billions of websites. The foundation recently spotted a social engineering takeover attempt similar to the one behind the XZ Utils backdoor. The OpenJS Foundation and the Open Source Security Foundation are alerting developers about the threat.

The OpenJS Foundation received a series of suspicious emails from various GitHub-associated addresses urging project administrators to update their JavaScript. The update description was vague, and the messages pushed the administrators to grant the bad actors access to projects. The scam emails are part of the endless bag of tricks black hat hackers use to manipulate administrators so they can access source code.

The foundations are warning administrators about the scams and sharing tips about how to recognize scams. Bad actors exploit open source developers:

“These social engineering attacks are exploiting the sense of duty that maintainers have with their project and community in order to manipulate them. Pay attention to how interactions make you feel. Interactions that create self-doubt, feelings of inadequacy, of not doing enough for the project, etc. might be part of a social engineering attack.

Social engineering attacks like the ones we have witnessed with XZ/liblzma were successfully averted by the OpenJS community. These types of attacks are difficult to detect or protect against programmatically as they prey on a violation of trust through social engineering. In the short term, clearly and transparently sharing suspicious activity like those we mentioned above will help other communities stay vigilant. Ensuring our maintainers are well supported is the primary deterrent we have against these social engineering attacks.”

These scams aren’t surprising. There needs to be more organizations like OpenJS and Open Source Security, because their intentions are to protect the common good. They’re on the side of the little person compared to politicians and corporations.

Whitney Grace, May 13, 2024

Open Source Software: Fool Me Once, Fool Me Twice, Fool Me Once Again

April 1, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Open source is shoved in my face each and every day. I nod and say, “Sure” or “Sounds on point.” But in the back of my mind, I ask myself, “Am I the only one who sees open source as a way to demonstrate certain skills, a Hail Mary in a dicey job market, or a bit of MBA fancy dancing?” I am not alone. Navigate to “Software Vendors Dump Open Source, Go for Cash Grab.” The write up does a reasonable job of explaining the open source “playbook.”

The write up asserts:

A company will make its program using open source, make millions from it, and then — and only then — switch licenses, leaving their contributors, customers, and partners in the lurch as they try to grab billions.

Yep, billions with a “B”. I think that the goal may be big numbers, but some open source outfits chug along ingesting venture funding and surfing on assorted methods of raising cash and never really get into “B” territory. I don’t want to name names because as a dinobaby, the only thing I dislike more than doctors is a legal eagle. Want proper nouns? Sorry, not in this blog post.

Thanks, MSFT Copilot. Where are you in the open source game?

The write up focuses on Redis, a database that strikes me as quite similar to the now-forgotten Pinpoint approach or the clever Inktomi method to speed up certain retrieval functions. Well, Redis, unlike Pinpoint or Inktomi, is into the “B” numbers. Two billion, to be semi-exact in this era of specious valuations.

The write up says that Redis changed its license terms. This is nothing new. 23andMe made headlines with some term modifications as the company slowly settled to earth and landed in a genetically rich river bank in Silicon Valley.

The article quotes Redis Big Dogs as saying:

“Beginning today, all future versions of Redis will be released with source-available licenses. Starting with Redis 7.4, Redis will be dual-licensed under the Redis Source Available License (RSALv2) and Server Side Public License (SSPLv1). Consequently, Redis will no longer be distributed under the three-clause Berkeley Software Distribution (BSD).”

I think this means, “Pay up.”

The author of the essay (Steven J. Vaughan-Nichols) identifies three reasons for the bait-and-switch play. I think there is just one — money.

The big question is, “What’s going to happen now?”

The essay does not provide an answer. Let me fill the void:

- Open source will chug along until there is a break out program. Then whoever has the rights to the open source (that is, the one or handful of people who created it) will look for ways to make money. The software is free, but modules to make it useful cost money.

- Open source will rot from within because “open” makes it possible for bad actors to poison widely used libraries. Once a big outfit suffers big losses, it will be hasta la vista open source and “Hello, Microsoft” or whichever vendor the accountants and lawyers running the company believe cares about their software.

- Open source becomes quasi-commercial. Options range from Microsoft charging for GitHub access to an open source repository becoming a membership operation like a digital Mar-A-Lago. The “hosting” service becomes the equivalent of a golf course, and the people who use the facilities pay fees which can vary widely and without any logic whatsoever.

Which of these three predictions will come true? Answer: The one that allows the breakout open source stakeholders to generate the maximum amount of money.

Stephen E Arnold, April 1, 2024

Commercial Open Source: Fantastic Pipe Dream or Revenue Pipe Line?

March 26, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Open source is a term which strikes me as au courant. Artificial intelligence software is often described as “open source.” The idea has a bit of “do good” mixed with the idea that commercial software puts customers in handcuffs. (I think I hear Kumbaya playing faintly in the background.) Is it possible to blend the idea of free and open software with the principles of commercial software lock in? Notable open source outfits have become difficult to differentiate from run-of-the-mill technology companies. Examples include Red Hat, Elastic, and OpenAI. Ooops. Sorry. OpenAI is a different type of company. I think.

Will open source software, particularly open source AI components, end up like this private playground? Thanks, MSFT Copilot. You are into open source, aren’t you? I hope your commitment is stronger than for server and cloud security.

I had these open source thoughts when I read “AI and Data Infrastructure Drives Demand for Open Source Startups.” The source of the information is Runa Capital, now located in Luxembourg. The firm publishes a report called the Runa Open Source Start Up Index, and it is a “rosy” document. The point of the article is that Runa sees open source as a financial opportunity. You can start your exploration of the tables and charts at this link on the Runa Capital Web site.

I want to focus on some information tucked into the article, just not presented in bold face or with a snappy chart. Here’s the passage I noted:

Defining what constitutes “open source” has its own inherent challenges too, as there is a spectrum of how “open source” a startup is — some are more akin to “open core,” where most of their major features are locked behind a premium paywall, and some have licenses which are more restrictive than others. So for this, the curators at Runa decided that the startup must simply have a product that is “reasonably connected to its open-source repositories,” which obviously involves a degree of subjectivity when deciding which ones make the cut.

The word “reasonably” invokes an image of lawyers negotiating on behalf of their clients. Nothing is quite so far from the kumbaya of the “real” open source software initiative as lawyers. Just look at the licenses for open source software.

I also noted this statement:

Thus, according to Runa’s methodology, it uses what it calls the “commercial perception of open-source” for its report, rather than the actual license the company attaches to its project.

What is “open source”? My hunch is that it is whatever the lawyers and courts conclude.

Why is this important?

The talk about “open source” is relevant to the “next big thing” in technology. And what is that? ANSWER: A fresh set of money making plays.

I know that there are true believers in open source. I wish them financial and kumbaya-type success.

My take is different: Open source, as the term is used today, is one of the phrases repurposed to breathe life into what some critics call a techno-feudal world. I don’t have a dog in the race. I don’t want a dog in any race. I am a dinobaby. I find amusement in how language becomes the Teflon on which money (one hopes) glides effortlessly.

And the kumbaya? Hmm.

Stephen E Arnold, March 26, 2024

AI Hermeneutics: The Fire Fights of Interpretation Flame

March 12, 2024

This essay is the work of a dumb dinobaby. No smart software required.

My hunch is that not too many of the thumb-typing, TikTok generation know what hermeneutics means. Furthermore, like most of their parents, these future masters of the phone-iverse don’t care. “Let software think for me” would make a nifty T shirt slogan at a technology conference.

This morning (March 12, 2024) I read three quite different write ups. Let me highlight each and then link the content of those documents to the problem of interpreting religious texts.

Thanks, MSFT Copilot. I am confident your security team is up to this task.

The first write up is a news story called “Elon Musk’s AI to Open Source Grok This Week.” The main point for me is that Mr. Musk will put the label “open source” on his Grok artificial intelligence software. The write up includes an interesting quote; to wit:

Musk further adds that the whole idea of him founding OpenAI was about open sourcing AI. He highlighted his discussion with Larry Page, the former CEO of Google, who was Musk’s friend then. “I sat in his house and talked about AI safety, and Larry did not care about AI safety at all.”

The implication is that Mr. Musk does care about safety. Okay, let’s accept that.

The second story is an ArXiv paper called “Stealing Part of a Production Language Model.” The authors are nine Googlers, two ETH wizards, one University of Washington professor, one OpenAI researcher, and one McGill University smart software luminary. In short, the big outfits are making clear that closed or open, software is rising to the task of revealing some of the inner workings of these “next big things.” The paper states:

We introduce the first model-stealing attack that extracts precise, nontrivial information from black-box production language models like OpenAI’s ChatGPT or Google’s PaLM-2…. For under $20 USD, our attack extracts the entire projection matrix of OpenAI’s ada and babbage language models.
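The core observation behind the attack can be illustrated in a few lines. Every logit vector a model emits is a hidden state multiplied by the final projection matrix, so a stack of logit vectors from many queries cannot have rank higher than the hidden dimension. The following is a simplified sketch, not the authors’ code: it assumes full logit access (the paper works around APIs that expose only limited log-probabilities), uses a small random integer matrix in place of a real model, and swaps the paper’s singular value analysis for exact rank computation over rationals. The names `simulated_logits`, `matrix_rank`, and `estimate_hidden_dim` are hypothetical.

```python
import random
from fractions import Fraction


def simulated_logits(W, h):
    """Logit vector for one query: projection matrix W (vocab x hidden) times hidden state h."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def matrix_rank(rows):
    """Exact rank of a list of equal-length rows via Gauss-Jordan elimination over rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    if not m:
        return 0
    rank = 0
    for col in range(len(m[0])):
        # Find a row at or below the current rank with a nonzero entry in this column.
        pivot = next((r for r in range(rank, len(m)) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[rank], m[pivot] = m[pivot], m[rank]
        # Clear this column in every other row.
        for r in range(len(m)):
            if r != rank and m[r][col] != 0:
                factor = m[r][col] / m[rank][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[rank])]
        rank += 1
        if rank == len(m):
            break
    return rank


def estimate_hidden_dim(vocab=50, hidden=8, queries=20, seed=0):
    """Query a toy 'model' repeatedly and report the rank of the stacked logits."""
    rng = random.Random(seed)
    # Secret projection matrix the attacker never sees directly.
    W = [[rng.randint(-1000, 1000) for _ in range(hidden)] for _ in range(vocab)]
    logits = []
    for _ in range(queries):
        # Each prompt yields some hidden state; the attacker sees only the logits.
        h = [rng.randint(-1000, 1000) for _ in range(hidden)]
        logits.append(simulated_logits(W, h))
    return matrix_rank(logits)


print(estimate_hidden_dim())  # at most 8, the hidden width the "vendor" never published
```

Exact rational arithmetic sidesteps choosing a numerical tolerance; with real floating-point logits, the equivalent move is to look for where the singular values drop off sharply.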

The third item is “How Do Neural Networks Learn? A Mathematical Formula Explains How They Detect Relevant Patterns.” The main idea of this write up is that software can perform an X-ray type analysis of a black box and present some useful data about the inner workings of numerical recipes about which many AI “experts” feign total ignorance.

Several observations:

- Open source software is available to download largely without encumbrances. Good actors and bad actors can use this software and its components to let users put on a happy face or bedevil the world’s cyber security experts. Either way, smart software is out of the bag.

- In the event that someone or some organization has secrets buried in its software, those secrets can be exposed. Once the secret is known, the good actors and the bad actors can surf on that information.

- The notion of an attack surface for smart software now includes the numerical recipes and the model itself. Toss in the notion of data poisoning, and the notion of vulnerability must be recast from a specific attack to a much larger type of exploitation.

Net net: I assume the many committees, NGOs, and government entities discussing AI have considered these points and incorporated these articles into informed policies. In the meantime, the AI parade continues to attract participants. Who has time to fool around with the hermeneutics of smart software?

Stephen E Arnold, March 12, 2024

Open Source: Free, Easy, and Fast Sort Of

February 29, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Not long ago, I spoke with an open source cheerleader. The pros outweighed the cons from this technologist’s point of view. (I would like to ID the individual, but I try to avoid having legal eagles claw their way into my modest nest in rural Kentucky. Just plug in “John Wizard Doe”, a high profile entrepreneur and graduate of a big time engineering school.)

I think going up suggests a problem.

Here are highlights of my notes about the upside of open source:

- Many smart people eyeball the code and problems are spotted and fixed

- Fixes get made and deployed more rapidly than with commercial software, which often works on a longer “fix” cycle

- Dead-end software can be given new kidneys, or maybe a heart, with a fork

- For most use cases, the software is free or cheaper than commercial products

- New functions become available; some of which fuel new product opportunities.

There may be a few others, but let’s look at a downside few open source cheerleaders want to talk about. I don’t want to counter the widely held belief that “many smart people eyeball the code.” The method is grab and go. The speed angle is relative. Reviving open source again and again is quite useful; bad actors do this. Most people just recycle. The “free” angle is a big deal. Everyone likes “free” because why not? New functions become available, so new markets are created. Perhaps. But in the cyber crime space, innovation boils down to finding a mistake that can be exploited with good enough open source components, often with some mileage on their chassis.

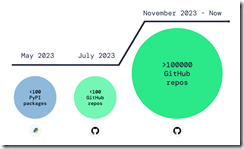

But there is one point on which open source champions crank back the rah-rah output: “Over 100,000 Infected Repos Found on GitHub.” I want to point out that Microsoft, the all-time champion in security, owns GitHub. If you think about Microsoft and security too much, you may come away confused. I know I do. I also get a headache.

This “Infected Repos” article from Apiiro asserts:

Our security research and data science teams detected a resurgence of a malicious repo confusion campaign that began mid-last year, this time on a much larger scale. The attack impacts more than 100,000 GitHub repositories (and presumably millions) when unsuspecting developers use repositories that resemble known and trusted ones but are, in fact, infected with malicious code.
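One defensive reading of the repo confusion trick: a clone is dangerous precisely because its name matches or nearly matches a trusted project while the owner differs. A minimal sketch, assuming a hand-maintained allowlist of trusted `owner/name` repositories (the list, the 0.8 threshold, and the function `flag_lookalikes` are all hypothetical), uses Python’s standard `difflib.SequenceMatcher` to flag candidates whose repository name resembles a trusted one without being the trusted repository itself. It is a name heuristic only; it does not inspect code and will not catch every infected clone.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist of repositories a team has vetted.
TRUSTED = {"pallets/flask", "psf/requests", "numpy/numpy"}


def flag_lookalikes(candidate, trusted=TRUSTED, threshold=0.8):
    """Return trusted repos whose name the candidate copies or nearly copies.

    An exact (case-insensitive) match on the full owner/name is treated as
    the genuine repository and never flagged.
    """
    trusted_lower = {t.lower() for t in trusted}
    if candidate.lower() in trusted_lower:
        return []
    _, _, cand_name = candidate.lower().partition("/")
    hits = []
    for full in sorted(trusted_lower):
        _, _, name = full.partition("/")
        # Same repo name under another owner, or a near-miss spelling, is suspicious.
        if SequenceMatcher(None, cand_name, name).ratio() >= threshold:
            hits.append(full)
    return hits


for repo in ["pallets/flask", "evil-mirror/flask", "pallets/flasks", "someone/unrelated-tool"]:
    print(repo, "->", flag_lookalikes(repo))
```

A check like this belongs before a dependency is added, not after; the threshold trades missed lookalikes against false alarms on legitimately similar project names.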

The write up provides excellent information about how the bad repos create problems and provides a recipe for doing this type of malware distribution yourself. (As you know, I am not too keen on having certain information with helpful detail easily available, but I am a dinobaby, and dinobabies have crazy ideas.)

If we confine our thinking to the open source champion’s five benefits, I think security issues may be more important in some use cases. The better question is, “Why don’t open source supporters like Microsoft and the person with whom I spoke want to talk about open source security?” My view is that:

- Security is an afterthought or a never-thought facet of open source software

- Making money is Job #1, so free trumps spending money to make sure the open source software is secure

- Open source appeals to some venture capitalists. Why? Red Hat, Elastic, and a handful of other “open source plays.”

Net net: Just visualize a future in which smart software ingests poisoned code, and programmers rely on smart software to make them 10X engineers. Does that create a bit of a problem? Of course not. Microsoft is the security champ, and GitHub is Microsoft.

Stephen E Arnold, February 29, 2024

Map Data: USGS Historical Topos

February 20, 2024

This essay is the work of a dumb dinobaby. No smart software required.

The ESRI blog published “Access Over 181,000 USGS Historical Topographic Maps.” The map outfit teamed with the US Geological Survey to provide access to an additional 1,745 maps. The total number of maps in the collection is now 181,008.

The blog reports:

Esri’s USGS historical topographic map collection contains historical quads (excluding orthophoto quads) dating from 1884 to 2006 with scales ranging from 1:10,000 to 1:250,000. The scanned maps can be used in ArcGIS Pro, ArcGIS Online, and ArcGIS Enterprise. They can also be downloaded as georeferenced TIFs for use in other applications.
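The scale range is easier to grasp as arithmetic. At a scale of 1:N, one unit on the map equals N of the same units on the ground, so one centimeter at 1:250,000 covers 2.5 km while one centimeter at 1:10,000 covers 100 m. A minimal sketch follows; the helper name `ground_distance_m` is hypothetical, and 1:24,000 (the familiar 7.5-minute quad scale) is included only as a point inside the stated range.

```python
def ground_distance_m(map_distance_cm: float, scale_denominator: int) -> float:
    """Ground distance in meters for a distance measured on a 1:scale_denominator map."""
    # 1 cm on the map equals scale_denominator cm on the ground; divide by 100 for meters.
    return map_distance_cm * scale_denominator / 100.0


for scale in (10_000, 24_000, 250_000):
    print(f"1 cm at 1:{scale:,} is {ground_distance_m(1, scale):,.0f} m on the ground")
```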

These data are useful. Maps can be viewed with ESRI’s online service called the Historical Topo Map Explorer. You can access that online service at this link.

If you are not familiar with historical topos, ESRI states in an ArcGIS post:

The USGS topographic maps were designed to serve as base maps for geologists by defining streams, water bodies, mountains, hills, and valleys. Using contours and other precise symbolization, these maps were drawn accurately, made mathematically correct, and edited carefully. The topographic quadrangles gradually evolved to show the changing landscape of a new nation by adding symbolization for important highways; canals; railroads; and railway stations; wagon roads; and the sites of cities, towns and villages. New and revised quadrangles helped geologists map the mineral fields, and assisted populated places to develop safe and plentiful water supplies and lay out new highways. Primary considerations of the USGS were the permanence of features; map symbolization and legibility; and the overall cost of compiling, editing, printing and distributing the maps to government agencies, industry, and the general public. Due to the longevity and the numerous editions of these maps they now serve new audiences such as historians, genealogists, archeologists, and people who are interested in the historical landscape of the U.S.

This public-facing data service is one example of how extremely useful information gathered by US government entities can be made more accessible via a public-private relationship. When I served on the board of the US National Technical Information Service, I learned that other useful information is available, just not easily accessible to US citizens.

Good work, ESRI and USGS! Now what about making that volcano data a bit easier to find and access in real time?

Stephen E Arnold, February 20, 2024

AI Coding: Better, Faster, Cheaper. Just Pick Two, Please

January 29, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Visual Studio Magazine is not on my must-read list. Nevertheless, a member of my research team told me that I needed to read “New GitHub Copilot Research Finds ‘Downward Pressure on Code Quality.’” I had no idea what “downward pressure” means. I read the article trying to figure out the plain English meaning of this tortured phrase. Was it the downward pressure on the metatarsals when a person is running to a job interview? Was it the deadly downward pressure exerted on the OceanGate submersible? Was it the force illustrated in the YouTube “Hydraulic Press Channel”?

A partner at a venture firm wants his open source recipients to produce more code better, faster, and cheaper. (He does not explain that one must pick two.) Thanks MSFT Copilot Bing thing. Good enough. But the green? Wow.

Wrong.

The writeup is a content marketing piece for a research report. That’s okay. I think a human may have written most of the article. Despite the frippery in the article, I spotted several factoids. If these are indeed verifiable, excitement in the world of machine generated open source software will ensue. Why does this matter? Well, in the words of the SmartNews content engine, “Read on.”

Here are the items of interest to me:

- Bad code is being created and added to the GitHub repositories.

- Code is recycled, despite smart efforts to reduce the copy-paste approach to programming.

- AI is preparing a field in which lousy, flawed, and possibly worse software will flourish.

Stephen E Arnold, January 29, 2024