Allegations of Personal Data Flows from X.com to Au10tix

June 4, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I work from my dinobaby lair in rural Kentucky. What the heck do I know about Hod HaSharon, Israel? The answer is, “Not much.” However, I read an online article called “Elon Musk Now Requiring All X Users Who Get Paid to Send Their Personal ID Details to Israeli Intelligence-Linked Corporation.” I am not sure if the statements in the write up are accurate. I want to highlight some items from the write up because I have not seen information about this interesting identity verification process in my other feeds. This could be the second most covered news item in the last week or two. Number one goes to Google’s telling people to eat a rock a day and its weird “not our fault” explanation of its quantumly supreme technology.

Here’s what I carried away from this X to Au10tix write up. (A side note: Intel outfits like obscure names. In this case, Au10tix is a cute conversion of the word authentic to a unique string of characters. Aw ten tix. Get it?)

Yes, indeed. There is an outfit called Au10tix, and it is based about 60 miles north of Jerusalem, not in the intelware capital of the world, Tel Aviv. The company, according to the cited write up, has a deal with Elon Musk’s X.com. The write up asserts:

X now requires new users who wish to monetize their accounts to verify their identification with a company known as Au10tix. While creator verification is not unusual for online platforms, Elon Musk’s latest move has drawn intense criticism because of Au10tix’s strong ties to Israeli intelligence. Even people who have no problem sharing their personal information with X need to be aware that the company they are using for verification is connected to the Israeli government. Au10tix was founded by members of the elite Israeli intelligence units Shin Bet and Unit 8200.

Sounds scary. But that’s the point of the article. I would like to remind you, gentle reader, that Israel’s vaunted intelligence systems failed as recently as October 2023. That event was described to me by one of the country’s former intelligence professionals as “our 9/11.” Well, maybe. I think it made clear that the intelware does not work as advertised in some situations. I don’t have first-hand information about Au10tix, but I would suggest some caution before engaging in flights of fancy.

The write up presents as actual factual information:

The executive director of the Israel-based Palestinian digital rights organization 7amleh, Nadim Nashif, told the Middle East Eye: “The concept of verifying user accounts is indeed essential in suppressing fake accounts and maintaining a trustworthy online environment. However, the approach chosen by X, in collaboration with the Israeli identity intelligence company Au10tix, raises significant concerns. “Au10tix is located in Israel and both have a well-documented history of military surveillance and intelligence gathering… this association raises questions about the potential implications for user privacy and data security.” Independent journalist Antony Loewenstein said he was worried that the verification process could normalize Israeli surveillance technology.

What the write up did not provide is significant detail. The write up reports:

Au10tix has also created identity verification systems for border controls and airports and formed commercial partnerships with companies such as Uber, PayPal and Google.

My team’s research into online gaming found suggestions that the estimable 888 Holdings may have a relationship with Au10tix. The company pops up in some of our research into facial recognition verification. The Israeli gig work outfit Fiverr.com seems to be familiar with the technology as well. I want to point out that one of the Fiverr gig workers based in the UK reported to me that she was no longer “recognized” by the Fiverr.com system. Yeah, October 2023 style intelware.

Who operates the company? Heading back into my files, I spotted a few names. These individuals may no longer be involved in the company, but several names remind me of individuals who have been active in the intelware game for a few years:

- Ron Atzmon: Chairman (Unit 8200, which was not on the ball in October 2023, it seems)

- Ilan Maytal: Chief Data Officer

- Omer Kamhi: Chief Information Security Officer

- Erez Hershkovitz: Chief Financial Officer (formerly of the very interesting intel-related outfit Voyager Labs, a company about which the Brennan Center has a tidy collection of information related to the LAPD)

The company’s technology is available in the Azure Marketplace. That description identifies three core functions of Au10tix’s systems:

- Identity verification. Allegedly the system has real-time identity verification. Hmm. I wonder why it took quite a bit of time to figure out who did what in October 2023. That question is probably unfair because it appears no patrols or systems “saw” what was taking place. But I should not nitpick. The Azure service includes a “regulatory toolbox including disclaimer, parental consent, voice and video consent, and more.” That disclaimer seems helpful.

- Biometrics verification. Again, this is an interesting assertion. As imagery of the October 2023 event emerged, I asked myself, “How did those ID to selfie, selfie to selfie, and selfie to token matches work?” Answer: Ask the families of those killed.

- Data screening and monitoring. The system can “identify potential risks and negative news associated with individuals or entities.” That might be helpful in building automated profiles of individuals by companies licensing the technology. I wonder if this capability can be hooked to other Israeli spyware systems to provide a particularly helpful, real-time profile of a person of interest?

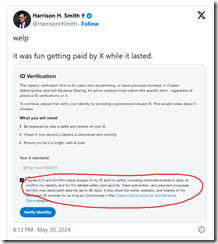

Let’s assume the write up is accurate and X.com is licensing the technology. X.com, according to “Au10tix Is an Israeli Company and Part of a Group Launched by Members of Israel’s Domestic Intelligence Agency, Shin Bet,” now includes this:

The circled segment of the social media post says:

I agree to X and Au10tix using images of my ID and my selfie, including extracted biometric data to confirm my identity and for X’s related safety and security, fraud prevention, and payment purposes. Au10tix may store such data for up to 30 days. X may store full name, address, and hashes of my document ID number for as long as I participate in the Creator Subscription or Ads Revenue Share program.
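The consent text mentions “hashes of my document ID number” without saying how they are computed. As a hedged sketch of what storing a hash instead of the raw number can look like, the Python below uses a keyed hash (HMAC-SHA256). The function name, the normalization step, and the key are assumptions for illustration; nothing in the cited write up indicates this is what X or Au10tix does.

```python
import hashlib
import hmac


def hash_document_id(document_id: str, secret_key: bytes) -> str:
    """Return a keyed SHA-256 digest of a document ID number (hypothetical helper).

    The ID is normalized first (uppercase, letters and digits only) so that
    "ab-123 456" and "AB123456" produce the same digest.
    """
    normalized = "".join(ch for ch in document_id.upper() if ch.isalnum())
    # HMAC mixes a secret key into the hash, so the digest alone cannot be
    # checked against guessed ID numbers without also knowing the key.
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()
```

A keyed hash is chosen here because document numbers are short and guessable; a plain unkeyed hash of such a number could be reversed by trying every candidate value.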

This dinobaby followed the October 2023 event with shock and surprise. The dinobaby has long been a champion of Israel’s intelware capabilities, and I have done some small projects for firms which I am not authorized to identify. Now I am skeptical and more critical. What if X’s identity service is compromised? What if the servers are breached and the data exfiltrated? What if the system does not work and downstream financial fraud is enabled by X’s push beyond short text messaging? Much intelware is little more than glorified and old-fashioned search and retrieval.

Does Mr. Musk or other commercial purchasers of intelware know about cracks and fissures in intelware systems which allowed the October 2023 event to be undetected until live-fire reports arrived? This tie up is interesting and is worth monitoring.

Stephen E Arnold, June 4, 2024

AItoAI Interviews Connecticut Senator James Maroney

May 30, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

AItoAI: Smart Software for Government Uses Cases has published its interview with Senator James Maroney. Senator Maroney is the driving force behind legislation to regulate artificial intelligence in Connecticut. In the 20-minute interview, Senator Maroney elaborated on several facets of the proposed legislation. The interviewers were the father-and-son team of Erik S. (the son) and Stephen E Arnold (father).

Senator James Maroney spearheaded the Connecticut artificial intelligence legislation.

Senator Maroney pointed to the rapid growth of AI products and services. That growth has economic implications for the citizens and businesses in Connecticut. The senator explained that biases in algorithms can have a negative impact. For that reason, specific procedures are required to help ensure that the AI systems operate in a fair way. To help address this issue, Senator Maroney advocates a risk-based approach to AI. The idea is that a low-risk AI service like getting information about a vacation requires less attention than a higher-risk application such as evaluating employee performance. The bill includes provisions for additional training. The senator’s commitment to upskilling links to taking steps to help citizens and organizations of all types use AI in a beneficial manner.

AItoAI wants to call attention to Senator Maroney’s making his time available for the interview. Erik and Stephen want to thank the senator for his time and his explanation of some of the bill’s provisions.

You can view the video at https://youtu.be/ZfcHKLgARJU or listen to the audio of the 20-minute program at https://shorturl.at/ziPgr.

Stephen E Arnold, May 30, 2024

Rentals Are In, Ownership Is Out

May 23, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

We thought the age of rentals was over and we bid farewell to Blockbuster with a nostalgic wave. We were so wrong and it started with SaaS or software as a service. It should also be streaming as a service. The concept sounds good: updated services, tech support, and/or an endless library of entertainment that includes movies, TV shows, music, books, and videogames. The problem is the fees keep getting higher, the tech support doesn’t speak English, the software has bugs, and you don’t own the entertainment.

You don’t own your favorite shows, movies, music, videogames, and books anymore unless you buy physical copies or move the digital downloads off the hosting platform. Australians were scratching their heads over the ownership of their digital media recently. The Guardian reported: “‘My Whole Library Is Wiped Out’: What It Means To Own Movies And TV In The Age Of Streaming Services.”

Telstra TV Box Office informed customers that the company would shutter in June and they would lose their purchased media unless they paid another fee to switch everything to Fetch. Customers expected to watch their purchased digital media indefinitely, but they were actually buying into a hosting platform. The problem stems from the entertainment version of SaaS: digital rights management.

People buy digital files, but they don’t read the associated terms and conditions. The terms and conditions are long, legalese documents that no one reads. They do clearly state, however, what the digital rights management expectations are. Shaanan Cohney of Melbourne University said it’s unfair to expect customers to read those documents, but the companies aren’t liable:

“Such provisions are fairly standard among tech companies. Customers can rent or buy films via Amazon Prime, and the company’s terms of service states the content ‘will generally continue to be available to you for download or streaming … but may become unavailable … Amazon will not be liable to you’.”

Apple iTunes has a similar clause in its terms and conditions, but it suggests customers download their media and back it up. Digital rights management is important, but it’s run by large corporations that don’t care about consumers (and often creators). Companies do deserve to be paid and run their organizations as they wish, but they should respect their customers (and creators).

Buying physical copies is still a good idea. Life is a subscription with no way to cancel.

Whitney Grace, May 23, 2024

TikTok Rings the Alarm for Yelp

May 22, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Social media influencers have been making and breaking restaurants since MySpace was still a thing. GrubStreet, another bastion for foodies and restaurant owners, reported that TikTok now controls the Internet food scene over Yelp: “How TikTok Took Over The Menu.” TikTok, Instagram, and YouTube are how young diners are deciding where to eat. These are essential restaurant discovery tools. Aware of the power of these social media platforms, restaurants are adapting their venues to attract popular influencer food critics. These influencers replace the traditional newspaper food critic and become ad hoc publicists for the restaurants. They’re lured to venues with free food or even a hefty cash payment.

The new restaurant critic business created SOPs for ideas, business practices, and ingredients to appeal to the social media algorithms. Many influencers ask the businesses to “collab” in exchange for a free meal. Established influencers with huge followings not only want a free lunch but also demand paychecks. There are entire companies established on connecting restaurants and other businesses with social media influencers. The services have an a la carte pricing menu.

Another problem with the new type of food critic is the LED lights required to shoot the food. LED lights are the equivalent of camera flashes and can disturb other diners. Many restaurants welcome filming with the lights while other places ban them. (Filming is still allowed though.)

Two big tactics for luring influencers are creating scarcity and creating an experience with tableside action. Another important tactic is almost sinful:

“Above all, the goal is excess; the most unforgivable social-media sin for any restaurant is to project an image of austerity…The chef Eyal Shani knows how to generate this particular energy. His HaSalon restaurants serve 12-foot-long noodles and encourage diners to dance on their tables, waving white napkins over their heads while disco blares from speakers. “Thirty years ago, it was about the content” of a dish or an idea, says Shani, who runs 40 restaurants around the world and has seen trends ebb and flow over the decades. ‘People tried to understand the structure of your creation.’ Today, it’s much more visual: ‘It’s very flat — it’s not about going into depth.’”

If restaurants focus more on shallowness and showmanship, then quality is going to tank. It’s going to go the way of the American attention span. TikTok ruins another thing.

Whitney Grace, May 22, 2024

Dexa: A New Podcast Search Engine

May 21, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

Google, Bing, and DuckDuckGo (a small percentage) dominate US search. Spotify, Apple Podcasts, and other platforms host and aggregate podcast shows. The problem is never the twain shall meet when people are searching for video or audio content. Riley Tomasek was inspired by the problem and developed the Dexa app:

“Dexa is an innovative brand that brings the power of AI to your favorite podcasts. With Dexa’s AI-powered podcast assistants, you can now explore, search, and ask questions related to the knowledge shared by trusted creators. Whether you’re curious about sleep supplements, programming languages, growing an audience, or achieving financial freedom, Dexa has you covered. Dexa unlocks the wisdom of experts like Andrew Huberman, Lex Fridman, Rhonda Patrick, Shane Parrish, and many more.

With Dexa, you can explore the world of podcasts and tap into the knowledge of trusted creators in a whole new way.”

Andrew Huberman of Huberman Lab picked up the app and helped it go viral.

From there the Dexa team built an intuitive, complex AI-powered search engine that indexes, analyzes, and transcribes podcasts. Since Dexa launched nine months ago, it has gained 50,000 users, answered almost one million questions, and partnered with famous podcasters. A recent update included a chat-based interface, more search and discover options, and the ability to watch referenced clips in a conversation.

Dexa has raised $6 million in seed money and secured an exclusive partnership with Huberman Lab.

Dexa is still a work in progress, but it responds like ChatGPT with a focus on conveying information and searching for content. It’s an intuitive platform that cites its sources directly in the search. It’s probably an interface that will be adopted by other search engines in the future.

Whitney Grace, May 21, 2024

Finding Live Music Performances

April 5, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Here is a niche search category some of our readers will appreciate. Lifehacker shares “The Best Ways to Find Live Gigs for Music You Love.” Writer David Nield describes how one can tap into a combination of sources to stay up to date on upcoming music events. He begins:

“More than once I’ve missed out on shows in my neighborhood put on by bands I like, just because I’ve been out of the loop. Whether you don’t want to miss gigs by artists you know, or you’re keen to get out and discover some new music, there are lots of ways to stay in touch with the live shows happening in your area—you need never miss a gig again. Pick the one(s) that work best for you from this list.”

First are websites dedicated to spreading the musical word, like Songkick and Bandsintown. One can sign up for notices or simply browse the site by artist or location. These sites can also use one’s listening data from streaming apps to inform their results. Or one can go straight to the source and follow artists on social media or their own websites (but that can get overwhelming if one enjoys many bands). Several music apps like Spotify and Deezer will notify you of upcoming concerts and events for artists you choose. Finally, YouTube lists tour details and ticket links beneath videos of currently touring bands, highlighting events near you. If, that is, you have chosen to share your location with the Google-owned site.

Cynthia Murrell, April 5, 2024

How Quickly Will Rights Enforcement Operations Apply Copyright Violation Claims to AI/ML Generated Images?

September 20, 2022

My view is that the outfits which use a business model to obtain payment for images without going through an authorized middleman or middlethem (?) are beavering away at this moment. How do “enforcement operations” work? Easy. There is old and new code available to generate a “digital fingerprint” for an image. You can see how these systems work. Just snag an image from Bing, Google, or some other picture finding service. Save it to your local drive. Then navigate (let’s use the Google, shall we?) to Google Images and search by image. Plug in the location on your storage device and the system will return matches. TinEye works too. What you see are matches generated when the “fingerprint” of the image you upload matches a fingerprint in the system’s “memory.” When an entity like a SPAC-thinking Getty Images, PicRights, or similar outfit (these folks have conferences to discuss methods!) spots a “match,” the legal eagles take flight. One example of such a legal entity making sure the ultimate owner of the image and the middlethem get paid is, I think, something called “Higbee.” I remember the “bee” because the name reminded me of Eleanor Rigby. (The mind is mysterious, right?) The offender, such as a church, a wounded veteran group, or a clueless blogger about cookies, is notified of an “infringement.” The idea is that the ultimate owner gets money because why not? The middlethem gets money too. I think the legal eagle involved gets money because lawyers…
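The “digital fingerprint” idea can be sketched in a few lines. What follows is a minimal illustration using an average hash, one common perceptual hashing approach; it is an assumption for illustration, not the method Google Images, TinEye, or any enforcement outfit is known to use. The function names and the plain grid-of-grayscale-values input are hypothetical choices that keep the sketch free of third-party libraries.

```python
def downscale(pixels, size=8):
    """Shrink a grayscale grid to size x size by averaging blocks of pixels.

    Assumes the grid is at least size x size so every block is non-empty.
    """
    height, width = len(pixels), len(pixels[0])
    small = []
    for row in range(size):
        top, bottom = row * height // size, (row + 1) * height // size
        out_row = []
        for col in range(size):
            left, right = col * width // size, (col + 1) * width // size
            block = [pixels[y][x] for y in range(top, bottom) for x in range(left, right)]
            out_row.append(sum(block) / len(block))
        small.append(out_row)
    return small


def average_hash(pixels, size=8):
    """Build a size*size-bit fingerprint: one bit per cell, set if brighter than the mean."""
    flat = [value for row in downscale(pixels, size) for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(hash_a, hash_b):
    """Count the bits that differ between two fingerprints."""
    return bin(hash_a ^ hash_b).count("1")
```

Two images whose fingerprints differ in only a few bits are treated as a likely match; a real system would pick a threshold and search across a large index of stored fingerprints.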

I read “AI Art Is Here and the World Is Already Different. How We Work — Even Think — Changes When We Can Instantly Command Convincing Images into Existence,” which takes a stab at explaining what the impact of AI/ML generated art will be. The write up nicks the topic, but it does not bury the pen and nib into the heart of the copyright opportunity.

Here’s a passage I noted from the cited article:

In contrast with the glib intra-VC debate about avoiding human enslavement by a future superintelligence, discussions about image-generation technology have been driven by users and artists and focus on labor, intellectual property, AI bias, and the ethics of artistic borrowing and reproduction.

Close but not a light saber cutting to the heart of what’s coming.

There is a long and growing list of things people can command into existence with their phones, through contested processes kept hidden from view, at a bargain price: trivia, meals, cars, labor. The new AI companies ask, Why not art?

Wrong question!

My hunch is that the copyright enforcement outfits will gather images, find a way to assign rights, and then sue the users of these images because the users did not know that the images were part of the enforcers’ furniture of a lawsuit.

Fair? Soft fraud? Something else?

The cited article does not consider these questions. Perhaps someone with a bit more savvy and a reasonably calibrated moral and ethical compass should?

Stephen E Arnold, September 20, 2022

YouTube: Podcasts, Vidcasts, Any Old Casts Will Do for Advertising

September 6, 2022

It appears YouTube is eager to jump onto the podcast bandwagon. The Hustle ponders whether “YouTube = Future Podcast Champ?” Maybe, but Google will have to maintain interest; otherwise, another Google Plus type situation may emerge. Writer Juliet Bennett Rylah reports:

A new podcasts homepage is now available to US users, going live sans fanfare in late July. TechCrunch speculates YouTube is waiting for its creator event next month to make a formal announcement. But YouTube also:

- Hired podcast exec Kai Chuk in 2021

- Offered podcasters and networks $50k-$300k to create videos

- Discussed audio ads and new analytics for audio-centric creators in a leaked document

- Partnered with NPR to bring on 20+ of its most popular shows.

Why’s it matter? While YouTube is often seen as a video-first platform, YouTube Music had 2B+ monthly users and 50m+ paid subs as of September 2021. Though competitors including Spotify, Apple, and Amazon have made big moves in the space, a Cumulus Media analysis found YouTube is America’s most popular podcast platform, capturing 24.2% of listeners compared to Spotify’s 23.8% and Apple’s 16%.

Rylah, fittingly, points us to a podcast for another perspective. On an episode of Marketing Against the Grain, HubSpot’s Kipp Bodnar and Kieran Flanagan assert YouTube subscribers are now the most valuable subscribers on the Internet. They also make a few predictions. For example, the pair believes YouTube’s discovery platform will give its podcasters a leg up. They also suspect the site’s background listening feature is about to become free for everyone, as it currently is in a Canadian pilot program. At the same time, the site may push both podcasts and the brands that support them toward a more visual format. But wouldn’t that just turn them into more video content? What makes a podcast a podcast? Perhaps that is a philosophical question beyond the ken of this humble, text-based content creator.

Cynthia Murrell, September 6, 2022

TikTok: Redefines Regular TV

January 11, 2022

What do most people under the age of 30 want to watch? YouTube? Sure, particularly some folks in Eastern Europe for whom YouTube is a source of “real news” and tips for surviving winter in Siberia. (Tip: Go to Sochi.)

“TikTok Videos Will Be Playing at Restaurants, Gyms, Airports Soon” reports:

TikTok partnered with Atmosphere to bring short-form videos to the background of your next gym session, restaurant meal, or airport visit. Startup Atmosphere streams news and entertainment to commercial locations such as restaurants, airports, hotels, doctors’ waiting rooms, and other venues. That content is sourced from a host of free, ad-supported networks, including YouTube, Red Bull TV, AFV TV, World Poker Tour, The Bob Ross Channel, and, now, TikTok—making its out-of-home video service debut.

The airport venue may not be A Number One with a Bullet today, but it has promise, particularly when paired with those surveillance centric smart TVs from some folks in South Korea and elsewhere.

My thought is that the short form video looks like the future of entertainment. Instead of smash cuts, the new programs will be structured like TikTok videos. The idea will be to create an impression with the individual videos providing the shaped or weaponized content.

Dystopia? Nah, just the normal progression of information when new tools, techniques, capabilities, and methods become available. In the case of TikTok, the addition of a China-linked approach adds spice. Perhaps it is time to think in terms of managing the content streams which are set to displace what Boomers and other old timers find reliable.

That requires understanding, will, and commitment. Those are qualities on display in many seats of government, aren’t they?

Stephen E Arnold, January 11, 2022

DarkCyber for May 4, 2021, Now Available

May 4, 2021

The 9th 2021 DarkCyber video is now available on the Beyond Search Web site. Will the link work? If it doesn’t, the Facebook link can assist you. The original version of this 9th program contained video content from an interesting Dark Web site selling malware and footage from the PR department of the university which developed the kid-friendly Snakebot. Got kids? You will definitely want a Snakebot, but the DarkCyber team thinks that US Navy Seals will be in line to get a duffle of Snakebots too. These are good for surveillance and termination tasks.

Plus, this 9th program of 2021 addresses five other stories, not counting the Snakebot quick bite. These are: [1] Two notable take downs, [2] iPhone access via the Lightning Port, [3] Instant messaging apps may not be secure, [4] VPNs are now themselves targets of malware, and [5] Microsoft security with a gust of SolarWinds.

The complete program is available — believe it or not — on Tess Arnold’s Facebook page. You can view the video with video inserts of surfing a Dark Web site and the kindergarten swimmer friendly Snakebot at this link: https://bit.ly/2PLjOLz. If you want the YouTube approved version without the video inserts, navigate to this link.

DarkCyber is produced by Stephen E Arnold, publisher of Beyond Search. You can access the current video plus supplemental stories on the Beyond Search blog at www.arnoldit.com/wordpress.

We think smart filtering is the cat’s pajamas, particularly for videos intended for law enforcement, intelligence, and cyber security professionals. Smart software crafted in the Googleplex is on the job.

Kenny Toth, May 4, 2021