Pavel Durov and Telegram: In the Spotlight Again

October 21, 2024

No smart software used for the write up. The art, however, is a different story.

No smart software used for the write up. The art, however, is a different story.

Several news sources reported that the entrepreneurial Pavel Durov, the found of Telegram, has found a way to grab headlines. Mr. Durov has been enjoying a respite in France, allegedly due to his contravention of what the French authorities views as a failure to cooperate with law enforcement. After his detainment, Mr. Durov signaled that he has cooperated and would continue to cooperate with investigators in certain matters.

A person under close scrutiny may find that the experience can be unnerving. The French are excellent intelligence operators. I wonder how Mr. Durov would hold up under the ministrations of Israeli and US investigators. Thanks, ChatGPT, you produced a usable cartoon with only one annoying suggestion unrelated to my prompt. Good enough.

Mr. Durov may have an opportunity to demonstrate his willingness to assist authorities in their investigation into documents published on the Telegram Messenger service. These documents, according to such sources as Business Insider and South China Morning Post, among others, report that the Telegram channel Middle East Spectator dumped information about Israel’s alleged plans to respond to Iran’s October 1, 2024, missile attack.

The South China Morning Post reported:

The channel for the Middle East Spectator, which describes itself as an “open-source news aggregator” independent of any government, said in a statement that it had “received, through an anonymous source on Telegram who refused to identify himself, two highly classified US intelligence documents, regarding preparations by the Zionist regime for an attack on the Islamic Republic of Iran”. The Middle East Spectator said in its posted statement that it could not verify the authenticity of the documents.

Let’s look outside this particular document issue. Telegram’s mostly moderation-free approach to the content posted, distributed, and pushed via the Telegram platform is like to come under more scrutiny. Some investigators in North America view Mr. Durov’s system as a less pressing issue than the content on other social media and messaging services.

This document matter may bring increased attention to Mr. Durov, his brother (allegedly with the intelligence of two PhDs), the 60 to 80 engineers maintaining the platform, and its burgeoning ancillary interests in crypto. Mr. Durov has some fancy dancing to do. One he is able to travel, he may find that additional actions will be considered to trim the wings of the Open Network Foundation, the newish TON Social service, and the “almost anything” goes approach to the content generated and disseminated by Telegram’s almost one billion users.

From a practical point of view, a failure to exercise judgment about what is allowed on Messenger may derail Telegram’s attempts to become more of a mover and shaker in the world of crypto currency. French actions toward Mr. Pavel should have alerted the wizardly innovator that governments can and will take action to protect their interests.

Now Mr. Durov is placing himself, his colleagues, and his platform under more scrutiny. Close scrutiny may reveal nothing out of the ordinary. On the other hand, when one pays close attention to a person or an organization, new and interesting facts may be identified. What happens then? Often something surprising.

Will Mr. Durov get that message?

Stephen E Arnold, October 21, 2024

Another Stellar Insight about AI

October 17, 2024

Because AI we think AI is the most advanced technology, we believe it is impenetrable to attack. Wrong. While AI is advanced, the technology is still in its infancy and is extremely vulnerable, especially to smart bad actors. One of the worst things about AI and the Internet is that we place too much trust in it and bad actors know that. They use their skills to manipulate information and AI says ArsTechnica in the article: “Hacker Plants False Memories In ChatGPT To Steal User Data In Perpetuity.”

Johann Rehberger is a security researcher who discovered that ChatGPT is vulnerable to attackers. The vulnerability allows bad actors to leave false information and malicious instructions in a user’s long-term memory settings. It means that they could steal user data or cause more mayhem. OpenAI didn’t take Rehmberger serious and called the issue a safety concern aka not a big deal.

Rehberger did not like being ignored, so he hacked ChatGPT in a “proof-of-concept” to perpetually exfiltrate user data. As a result, ChatGPT engineers released a partial fix.

OpenAI’s ChatGPT stores information to use in future conversations. It is a learning algorithm to make the chatbot smarter. Rehberger learned something incredible about that algorithm:

“Within three months of the rollout, Rehberger found that memories could be created and permanently stored through indirect prompt injection, an AI exploit that causes an LLM to follow instructions from untrusted content such as emails, blog posts, or documents. The researcher demonstrated how he could trick ChatGPT into believing a targeted user was 102 years old, lived in the Matrix, and insisted Earth was flat and the LLM would incorporate that information to steer all future conversations. These false memories could be planted by storing files in Google Drive or Microsoft OneDrive, uploading images, or browsing a site like Bing—all of which could be created by a malicious attacker.”

Bad attackers could exploit the vulnerability for their own benefits. What is alarming is that the exploit was as simple as having a user view a malicious image to implement the fake memories. Thankfully ChatGPT engineers listened and are fixing the issue.

Can’t anything be hacked one way or another?

Whitney Grace, October 17, 2024

The GoldenJackals Are Running Free

October 11, 2024

The only smart software involved in producing this short FOGINT post was Microsoft Copilot’s estimable art generation tool. Why? It is offered at no cost.

The only smart software involved in producing this short FOGINT post was Microsoft Copilot’s estimable art generation tool. Why? It is offered at no cost.

Remember the joke about security. Unplugged computer in a locked room. Ho ho ho. “Mind the (Air) Gap: GoldenJackal Gooses Government Guardrails” reports that security is getting more difficult. The write up says:

GoldenJackal used a custom toolset to target air-gapped systems at a South Asian embassy in Belarus since at least August 2019… These toolsets provide GoldenJackal a wide set of capabilities for compromising and persisting in targeted networks. Victimized systems are abused to collect interesting information, process the information, exfiltrate files, and distribute files, configurations and commands to other systems. The ultimate goal of GoldenJackal seems to be stealing confidential information, especially from high-profile machines that might not be connected to the internet.

What’s interesting is that the sporty folks at GoldenJackal can access the equivalent of the unplugged computer in a locked room. Not exactly, of course, but allegedly darned close.

Microsoft Copilot does a great job of presenting an easy to use cyber security system and console. Good work.

The cyber experts revealing this exploit learned of it in 2020. I think that is more than three years ago. I noted the story in October 2024. My initial question was, “What took so long to provide some information which is designed to spark fear and ESET sales?”

The write up does not tackle this question but the write up reveals that the vector of compromise was a USB drive (thumb drive). The write up provides some detail about how the exploit works, including a code snippet and screen shots. One of the interesting points in the write up is that Kaspersky, a recently banned vendor in the US, documented some of the tools a year earlier.

The conclusion of the article is interesting; to wit:

Managing to deploy two separate toolsets for breaching air-gapped networks in only five years shows that GoldenJackal is a sophisticated threat actor aware of network segmentation used by its targets.

Several observations come to mind:

- Repackaging and enhancing existing malware into tool bundles demonstrates the value of blending old and new methods.

- The 60 month time lag suggests that the GoldenJackal crowd is organized and willing to invest time in crafting a headache inducer for government cyber security professionals

- With the plethora of cyber alert firms monitoring everything from secure “work use only” laptops to useful outputs from a range of devices, systems, and apps why is it that only one company sufficiently alert or skilled to explain the droppings of the GoldenJackal?

I learn about new exploits every couple of days. What is now clear to me is that a cyber security firm which discovers something novel does so by accident. This leads me to formulate the hypothesis that most cyber security services are not particularly good at spotting what I would call “repackaged systems and methods.” With a bit of lipstick, bad actors are able to operate for what appears to be significant periods of time without detection.

If this hypothesis is correct, US government memoranda, cyber security white papers, and academic type articles may be little more than puffery. “Puffery,” as we have learned is no big deal. Perhaps that is what expensive cyber security systems and services are to bad actors: No big deal.

Stephen E Arnold, October 11, 2024

One

SolarWinds Outputs Information: Does Anyone Other Than Microsoft and the US Government Remember?

October 3, 2024

I love these dribs and drops of information about security issues. From the maelstrom of emails, meeting notes, and SMS messages only glimpses of what’s going on when a security misstep takes place. That’s why the write up “SolarWinds Security Chief Calls for tighter Cyber Laws” is interesting to me. How many lawyer-type discussions were held before the Solar Winds’ professional spoke with a “real” news person from the somewhat odd orange newspaper. (The Financial Times used to give these things away in front of their building some years back. Yep, the orange newspaper caught some people’s eye in meetings which I attended.)

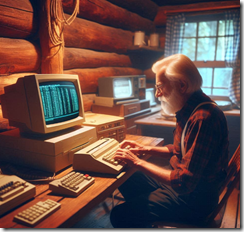

The subject of the interview was a person who is/was the chief information security officer at SolarWinds. He was on duty with the tiny misstep took place. I will leave it to you to determine whether the CrowdStrike misstep or the SolarWinds misstep was of more consequence. Neither affected me because I am a dinobaby in rural Kentucky running steam powered computers from my next generation office in a hollow.

A dinobaby is working on a blog post in rural Kentucky. This talented and attractive individual was not affected by either the SolarWinds or the CrowdStrike security misstep. A few others were not quite so fortunate. But, hey, who remembers or cares? Thanks, Microsoft Copilot. I look exactly like this. Or close enough.

Here are three statements from the article in the orange newspaper I noted:

First, I learned that:

… cyber regulations are still ‘in flux’ which ‘absolutely adds stress across the globe’ on cyber chiefs.

I am delighted to learn that those working in cyber security experience stress. I wonder, however, what about the individuals and organizations who must think about the consequences of having their systems breached. These folks pay to be secure, I believe. When that security fails, will the affected individuals worry about the “stress” on those who were supposed to prevent a minor security misstep? I know I sure worry about these experts.

Second, how about this observation by the SolarWinds’ cyber security professional?

When you don’t have rules to follow, it’s very hard to follow them,” said Brown [the cyber security leader at SolarWinds]. “Very few security people would ever do something that wasn’t right, but you just have to tell us what’s right in order to do it,” he added.

Let’s think about this statement. To be a senior cyber security professional one has to be trained, have some cyber security certifications, and maybe some specialized in-service instruction at conferences or specific training events. Therefore, those who attend these events allegedly “learn” what rules to follow; for instance, make systems secure, conduct routine stress tests, have third party firms conduct security audits, validate the code, widgets, and APIs one uses, etc., etc. Is it realistic to assume that an elected official knows anything about security systems at a cyber security firm? As a dinobaby, my view is that these cyber wizards need to do their jobs and not wait for non-experts to give them “rules.” Make the systems secure via real work, not chatting at conferences or drinking coffee in a conference room.

And, finally, here’s another item I circled in the orange newspaper:

Brown this month joined the advisory board of Israeli crisis management firm Cytactic but said he was still committed to staying in his role at SolarWinds. “As far as the incident at SolarWinds: It happened on my watch. Was I ultimately responsible? Well, no, but it happened on my watch and I want to get it right,” he said.

Wasn’t Israel the country caught flat footed in October 2023? How does a company in Israel — presumably with staff familiar with the tools and technologies used to alert Israel of hostile actions — learn from another security professional caught flatfooted? I know this is an easily dismissed question, but for a dinobaby, doesn’t one want to learn from a person who gets things right? As I said, I am old fashioned, old, and working in a log cabin on a steam powered computing device.

The reality is that egregious security breaches have taken place. The companies and their staff are responsible. Are there consequences? I am not so sure. That means the present “tell us the rules” attitude will persist. Factoid: Government regulations in the US are years behind what clever companies and their executives do. No gap closing, sorry.

Stephen E Arnold, October 3, 2024

Phishing and Deepfakes Threaten to Overwhelm Cybersecurity Teams

October 2, 2024

Cybersecurity experts are not so worried about an impending takeover by sentient AI. Instead, they are more concerned with the very real, very human bad actors wielding AI tools. BetaNews points to a recent report from Team 8 as it reveals, “Phishing and Deepfakes Are Leading AI-Powered Threats.” Writer Ian Barker summarizes:

“A new survey of cybersecurity professionals finds that 75 percent of respondents think phishing attacks pose the greatest AI-powered threat to their organization, while 56 percent say deepfake enhanced fraud (voice or video) poses the greatest threat.

The study from Team 8, carried out at its annual CISO [Chief Information Security Officer] Summit, also finds that lack of expertise (58 percent) and balancing security with usability (56 percent) are the two main challenges organizations face when defending AI systems.

In order to address the threats, 41 percent of CISOs expect to explore purchasing solutions for managing the AI development lifecycle within the next one to two years. Additionally, many CISOs are prioritizing solutions for third-party AI application data privacy (36 percent) and tools to discover and map shadow AI usage (33 percent).

CISOs identify several critical data security concerns that currently lack adequate solutions — insider threats and next-gen DLP (65 percent), third-party risk management (46 percent), AI application security (43 percent), human identity management (40 percent), and security executive dashboards (40 percent).”

The survey also found over half the respondents are losing sleep over liability. Nearly a third have acted to limit their personal risk by consulting attorneys, upping their insurance coverage, or tweaking their contracts. It seems pressure from higher-ups may be contributing to their unease: 54% reported increased scrutiny from their superiors alongside larger budgets and widening scopes of work. Team8 managing partner Amir Zilberstein emphasizes the importance of taking care of one’s CISOs: They will need all their wits about them to navigate a cybersecurity landscape that is rapidly shifting beneath their feet.

Cynthia Murrell, October 2, 2024

FOGINT: Telegram Changes Its Tune

October 1, 2024

![green-dino_thumb_thumb_thumb_thumb_t[2]_thumb green-dino_thumb_thumb_thumb_thumb_t[2]_thumb](https://arnoldit.com/wordpress/wp-content/uploads/2024/09/green-dino_thumb_thumb_thumb_thumb_t2_thumb_thumb.gif) This essay is the work of a dumb dinobaby. No smart software required.

This essay is the work of a dumb dinobaby. No smart software required.

Editor note: The term Fogint is a way for us to identify information about online services which obfuscate or mask in some way some online activities. The idea is that end-to-end encryption, devices modified to disguise Internet identifiers, and specialized “tunnels” like those associated with the US MILNET methods lay down “fog”. A third-party is denied lawful intercept, access, or monitoring of obfuscated messages when properly authorized by a governmental entity. Here’s a Fogint story with the poster boy for specialized messaging, Pavel Durov.

Coindesk’s September 23, 2024, artice “Telegram to Provide More User Data to Governments After CEO’s Arrest” reports:

Messaging app Telegram made significant changes to its terms of service, chief executive officer Pavel Durov said in a post on the app on Monday. The app’s privacy conditions now state that Telegram will now share a user’s IP address and phone number with judicial authorities in cases where criminal conduct is being investigated.

Usually described as a messaging application, Telegram is linked to a crypto coin called TON or TONcoin. Furthermore, Telegram — if one looks at the entity from 30,000 feet — consists of a distributed organization engaged in messaging, a foundation, and a recent “society” or “social” service. Among the more interesting precepts of Telegram and its founder is a commitment to free speech and a desire to avoid being told what to do.

Art generated by the MSFT Copilot service. Good enough, MSFT.

After being detained in France, Mr. Durov has made several changes in the way in which he talks about Telegram and its precepts. In a striking shift, Mr. Durov, according to Coindesk:

said that “establishing the right balance between privacy and security is not easy,” in a post on the app. Earlier this month, Telegram blocked users from uploading new media in an effort to stop bots and scammers.

Telegram had a feature which allowed a user of the application to locate users nearby. This feature has been disabled. One use of this feature was its ability to locate a person offering personal services on Telegram via one of its functions. A person interested in the service could use the “nearby” function and pinpoint when the individual offering the service was located. Creative Telegram users could put this feature to a number of interesting uses; for example, purchasing an illegal substance.

Why is Mr. Durov abandoning his policy of ignoring some or most requests from law enforcement seeking to identify a suspect? Why is Mr. Durov eliminating the nearby function? Why is Mr. Durov expressing a new desire to cooperate with investigators and other government authority?

The answer is simple. Once in the custody of the French authorities, Mr. Durov learned of the penalties for breaking French law. Mr. Durov’s upscale Parisian lawyer converted the French legal talk into some easy to understand concepts. Now Mr. Durov has evaluated his position and is taking steps to avoid further difficulties with the French authorities. Mr. Durov’s advisors probably characterized the incarceration options available to the French government; for example, even though Devil’s Island is no longer operational, the Centre Pénitentiaire de Rémire-Montjoly, near Cayenne in French Guiana, moves Mr. Durov further from his operational comfort zone in the Russian Federation and the United Arab Emirates.

The Fogint team does not believe Mr. Durov has changed his core values. He is being rational and using cooperation as a tactic to avoid creating additional friction with the French authorities.

Stephen E Arnold, October 1, 2024

Solana: Emulating Telegram after a Multi-Year Delay

September 27, 2024

This essay is the work of a dumb dinobaby. No smart software required.

This essay is the work of a dumb dinobaby. No smart software required.

I spotted an interesting example of Telegram emulation. My experience is that most online centric professionals have a general awareness of Telegram. Its more than 125 features and functions are lost in the haze of social media posts, podcasts, and “real” news generated by some humanoids and a growing number of gradient descent based software.

I think the information in “What Is the Solana Seeker Web3 Mobile Device” is worth noting. Why? I will list several reasons at the end of this short write up about a new must have device for some crypto sensitive professionals.

The Solana Seeker is a gizmo that embodies Web3 goodness. Solana was set up to enable the Solana blockchain platform. The wizards behind the firm were Anatoly Yakovenko and Raj Gokal. The duo set up Solana Labs and then shaped what is becoming the go-to organization Lego block for assorted crypto plays: The Solana Foundation. This non-profit organization has made its Proof of History technology into the fires heating the boilers of another coin or currency or New Age financial revolution. I am never sure what emerges from these plays. The idea is to make smart contracts work and enable decentralized finance. The goals include making money, creating new digital experiences to make money, and cash in on those to whom click-based games are a slick way to make money. Did I mention money as a motivator?

A hypothetical conversation between two crypto currency and blockchain experts. What could go wrong? Thanks, MSFT Copilot. Good enough.

How can you interact with the Solana environment? The answer is to purchase an Android-based digital device. The Seeker allows anyone to have the Solana ecosystem in one’s pocket. From my dinobaby’s point of view, we have another device designed to obfuscate certain activities. I assume Solana will disagree with my assessment, but things crypto evoke things at odds with some countries’ rules and regulations.

The cited article points out that the device is a YAAP (yet another Android phone). The big feature seems to be the Seed Vault wallet. In addition to the usual razzle dazzle about security, the Seeker lets a crypto holder participate in transactions with a couple of taps. The Seeker interface is to make crypto activities smoother and easier. Solana has like other mobile vendors created its own online store. When you buy a Seeker, you get a special token. The description I am referencing descends into crypto babble very similar to the lingo used by the Telegram One Network Foundation. The problem is that Telegram has about a billion users and is in the news because French authorities took action to corral the cowboy Russian-born Pavel Durov for some of his behaviors France found objectionable.

Can anyone get into the generic Android device business, do some fiddling, and deploy a specialized device? The answer is, “Yep.” If you are curious, just navigate to Alibaba.com and search for generic cell phones. You have to buy 3,000 or more, but the price is right: About US$70 per piece. Tip: Life is easier if you have an intermediary based in Bangkok or Singapore.

Let’s address the reasons this announcement is important to a dinobaby like me:

- Solana, like Meta (Facebook) is following in Telegram’s footprints. Granted, it has taken these two example companies years to catch on to the Telegram “play”, but movement is underway. If you are a cyber investigator, this emulation of Telegram will have significant implications in 2025 and beyond.

- The more off-brand devices there are, the easier it becomes for intelligence professionals to modify some of these gizmos. The reports of pagers, solar panels, and answering machines behaving in an unexpected manner goes from surprise to someone asking, “Do you know what’s in your digital wallet?”

- The notion of a baked in, super secret enclave for the digital cash provides an ideal way to add secure messaging or software to enable a network in a network in the manner of some military communication services. The patents are publicly available, and they make replication in the realm of possibility.

Net net: Whether the Seeker flies or flops is irrelevant. Monkey see, monkey do. A Telegram technology road map makes interesting reading, and it presages the future of some crypto activities. If you want to know more about our Telegram Road Map, write benkent2020 at yahoo.com.

Stephen E Arnold, September 27, 2024

CrowdStrike: Whiffing Security As a Management Precept

September 17, 2024

This essay is the work of a dumb dinobaby. No smart software required.

This essay is the work of a dumb dinobaby. No smart software required.

Not many cyber security outfits can make headlines like NSO Group. But no longer. A new buzz champion has crowned: CrowdStrike. I learned a bit more about the company’s commitment to rigorous engineering and exemplary security practices. “CrowdStrike Ex-Employees: Quality Control Was Not Part of Our Process.” NSO Group’s rise to stardom was propelled by its leadership and belief in the superiority of Israeli security-related engineering. CrowdStrike skipped that and perfected a type of software that could strand passengers, curtail surgeries, and force Microsoft to rethink its own wonky decisions about kernel access.

A trained falcon tells an onlooker to go away. The falcon, a stubborn bird, has fallen in love with a limestone gargoyle. Its executive function resists inputs. Thanks, MSFT Copilot. Good enough.

The write up says:

Software engineers at the cybersecurity firm CrowdStrike complained about rushed deadlines, excessive workloads, and increasing technical problems to higher-ups for more than a year before a catastrophic failure of its software paralyzed airlines and knocked banking and other services offline for hours.

Let’s assume this statement is semi-close to the truth pin on the cyber security golf course. In fact, the company insists that it did not cheat like a James Bond villain playing a round of golf. The article reports:

CrowdStrike disputed much of Semafor’s reporting and said the information came from “disgruntled former employees, some of whom were terminated for clear violations of company policy.” The company told Semafor: “CrowdStrike is committed to ensuring the resiliency of our products through rigorous testing and quality control, and categorically rejects any claim to the contrary.”

I think someone at CrowdStrike has channeled a mediocre law school graduate and a former PR professional from a mid-tier publicity firm in Manhattan, lower Manhattan, maybe in Alphabet City.

The article runs through a litany of short cuts. You can read the original article and sort them out.

The company’s flagship product is called “Falcon.” The idea is that the outstanding software can, like a falcon, spot its prey (a computer virus). Then it can solve trajectory calculations and snatch the careless gopher. One gets a plump Falcon and one gopher filling in for a burrito at a convenience store on the Information Superhighway.

The killer paragraph in the story, in my opinion, is:

Ex-employees cited increased workloads as one reason they didn’t improve upon old code. Several said they were given more work following staff reductions and reorganizations; CrowdStrike declined to comment on layoffs and said the company has “consistently grown its headcount year over year.” It added that R&D expenses increased from $371.3 million to $768.5 million from fiscal years 2022 to 2024, “the majority of which is attributable to increased headcount.”

I buy the complaining former employee argument. But the article cites a number of CloudStrikers who are taking their expertise and work ethic elsewhere. As a result, I think the fault is indeed a management problem.

What does one do with a bad Falcon? I would put a hood on the bird and let it scroll TikToks. Bewits and bells would alert me when one of these birds were getting close to me.

Stephen E Arnold, September 16, 2024

See How Clever OSINT Lovers Can Be. Impressed? Not Me

September 11, 2024

This essay is the work of a dumb dinobaby. No smart software required.

This essay is the work of a dumb dinobaby. No smart software required.

See the dancing dinosaur. I am a dinobaby, and I have some precepts that are different from those younger than I. I was working on a PhD at the University of Illinois in Chambana and fiddling with my indexing software. The original professor with the big fat grant had died, but I kept talking to those with an interest in concordances about a machine approach to producing these “indexes.” No one cared. I was asked to give a talk at a conference called the Allerton House not far from the main campus. The “house” had a number of events going on week in and week out. I delivered my lecture about indexing medieval sermons in Latin to a small group. In 1972, my area of interest was not a particularly hot topic. After my lecture, a fellow named James K. Rice waited for me to pack up my view graphs and head to the exit. He looked me in the eye and asked, “How quickly can you be in Washington, DC?

An old-time secure system with a reminder appropriate today. Thanks, MSFT Copilot. Good enough.

I will eliminate the intermediary steps and cut to the chase. I went to work for a company located in the Maryland technology corridor, a five minute drive from the Beltway, home of the Beltway bandits. The company operated under the three letter acronym NUS. After I started work, I learned that the “N” meant nuclear and that the firm’s special pal was Halliburton Industries. The little-known outfit was involved in some sensitive projects. In fact, when I arrived in 1972, there were more than 400 nuclear engineers on the payroll and more ring knockers than I had ever heard doing their weird bonding ritual at random times.

I learned three things:

- “Nuclear” was something one did not talk about… ever to anyone except those in the “business” like Admiral Craig Hosmer, then chair of the Joint Committee on Atomic Energy

- “Nuclear” information was permanently secret

- Revealing information about anything “nuclear” was a one-way ticket to trouble.

I understood. That was in 1972 in my first day or two at NUS. I have never forgotten the rule because my friend Dr. James Terwilliger, a nuclear engineer originally trained at Virginia Tech said to me when we first met in the cafeteria: “I don’t know you. I can’t talk to you. Sit somewhere else.”

Jim and I became friends, but we knew the rules. The other NUS professionals did too. I stayed at the company for five years, learned a great deal, and never forgot the basic rule: Don’t talk nuclear to those not in the business. When I was recruited by Booz Allen & Hamilton, my boss and the fellow who hired me asked me, “What did you do at that little engineering firm?” I told him I worked on technical publications and some indexing projects. He bit on indexing and I distracted him by talking about medieval religious literature. In spite of that, I got hired, a fact other Booz Allen professionals in the soon-to-be-formed Technology Management Group could not believe. Imagine. Poetry and a shallow background at a little bitty, unknown engineering company with a meaningless name and zero profile in the Blue Chip Consulting world. Arrogance takes many forms.

Why this biographical background?

I read “Did Sandia Use a Thermonuclear Secondary in a Product Logo?” I have zero comment about the information in the write up. Read the document if you want. Most people will not understand it and be unable to judge its accuracy.

I do have some observations.

First, when the first index of US government servers was created using Inktomi and some old-fashioned manual labor, my team made sure certain information was not exposed to the public via the new portal designed to support citizen services. Even today, I worry that some information on public facing US government servers may have sensitive information exposed. This happens because of interns given jobs but not training, government professionals working with insufficient time to vet digital content, or the weird “flow” nature of digital information which allows a content object to be where it should not. Because I had worked at the little-known company with the meaningless acronym name, I was able to move some content from public-facing to inward-facing systems. When people present nuclear-related information, knowledge and good judgment are important. Acting like a jazzed up Google-type employee is not going to be something to which I relate.

Second, the open source information used to explain the seemingly meaningless graphic illustrates a problem with too much information in too many public facing places. Also, it underscores the importance of keeping interns, graphic artists, and people assembling reports from making decisions. The review process within the US government needs to be rethought and consequences applied to those who make really bad decisions. The role of intelligence is to obtain information, filter it, deconstruct it, analyze it, and then assemble the interesting items into a pattern. The process is okay, but nuclear information should not be open source in my opinion. Remember that I am a dinobaby. I have strong opinions about nuclear, and those opinions support my anti-open source stance for this technical field.

Third, the present technical and political environment frightens me. There is a reason that second- and third-tier nation states want nuclear technology. These entities may yip yap about green energy, but the intent, in my view, is to create kinetic devices. Therefore, this is the wrong time and the Internet is the wrong place to present information about “nuclear.” There are mechanisms in place to research, discuss, develop models, create snappy engineering drawings, and talk at the water cooler about certain topics. Period.

Net net: I know that I can do nothing about this penchant many have to yip yap about certain topics. If you read my blog posts, my articles which are still in print or online, or my monographs — you know that I never discuss nuclear anything. It is a shame that more people have not learned that certain topics are inappropriate for public disclosure. This dinobaby is really not happy. The “news” is all over a Russian guy. Therefore, “nuclear” is not a popular topic for the TikTok crowd. Believe me: Anything that offers nuclear related information is of keen interest to certain nation states. But some clever individuals are not happy unless they have something really intelligent to say and probably know they should not. Why not send a personal, informative email to someone at LANL, ORNL, or Argonne?

Stephen E Arnold, September 11, 2024

Mobile Secrecy? Maybe Not

September 9, 2024

This essay is the work of a dumb dinobaby. No smart software required.

This essay is the work of a dumb dinobaby. No smart software required.

No, you are not imagining it. The Daily Mail reports, “Shocking Leak Suggests Your Phone Really Is Listening in on Your Conversations” to create targeted ads. A pitch deck reportedly made by marketing firm Cox Media Group (CMG) was leaked to 404 Media. The presentation proudly claims Facebook, Google, and Amazon are clients and suggests they all use its “Active-Listening” AI software to pluck actionable marketing intel from users’ conversations. Writer Ellyn Lapointe tells us:

“The slideshow details the six-step process that CMG’s Active-Listening software uses to collect consumer’s voice data through seemingly any microphone-equipped device, including your smartphone, laptop or home assistant. It’s unclear from the slideshow whether the Active-Listening software is eavesdropping constantly, or only at specific times when the phone mic is activated, such as during a call. Advertisers then use these insights to target ‘in-market consumers,’ which are people actively considering buying a particular product or service. If your voice or behavioral data suggests you are considering buying something, they will serve you advertisements for that item. For example, talking about or searching for Toyota cars could prompt you to start seeing ads for their newest models. ‘Once launched, the technology automatically analyzes your site traffic and customers to fuel audience targeting on an ongoing basis,’ the deck states. So, if you feel like you see more ads for a particular product after talking about it with a friend, or searching for it online, this may be the reason why. For years, smart-device users have speculated that their phones or tablets are listening to what they say. But most tech companies have flat-out denied these claims.”

In fact, Google was so eager to distance itself from this pitch deck it promptly removed CMG from its “Partners Program” website. Meta says it will prod CMG to clarify Active-Listening does not feed on Facebook or Instagram data. And Amazon flat out denied ever working with CMG. On this particular software, anyway.

404 Media has been pulling this thread for some time. It first reported the existence of Active-Listening in December 2023. The next day, it called out small AI firm MindSift for bragging it used smart-device speakers to target ads. Lapointe notes CMG claimed in November 2023, in a since-deleted blog post, that its surveillance is entirely legal. Naturally, the secret is literally in the fine print—of multi-page user agreements. Because of course it is.

Cynthia Murrell, September 9, 2024