Does Smart Software Forget?

November 21, 2024

A recent paper challenges the big dogs of AI, asking, “Does Your LLM Truly Unlearn? An Embarrassingly Simple Approach to Recover Unlearned Knowledge.” The study was performed by a team of researchers from Penn State, Harvard, and Amazon and published on research platform arXiv. True or false, it is a nifty poke in the eye for the likes of OpenAI, Google, Meta, and Microsoft, who may have overlooked the obvious. The abstract explains:

“Large language models (LLMs) have shown remarkable proficiency in generating text, benefiting from extensive training on vast textual corpora. However, LLMs may also acquire unwanted behaviors from the diverse and sensitive nature of their training data, which can include copyrighted and private content. Machine unlearning has been introduced as a viable solution to remove the influence of such problematic content without the need for costly and time-consuming retraining. This process aims to erase specific knowledge from LLMs while preserving as much model utility as possible.”

But AI firms may be fooling themselves about this method. We learn:

“Despite the effectiveness of current unlearning methods, little attention has been given to whether existing unlearning methods for LLMs truly achieve forgetting or merely hide the knowledge, which current unlearning benchmarks fail to detect. This paper reveals that applying quantization to models that have undergone unlearning can restore the ‘forgotten’ information.”

Oops. The team found as much as 83% of data thought forgotten was still there, lurking in the shadows. The paper offers an explanation for the problem and suggestions to mitigate it. The abstract concludes:

“Altogether, our study underscores a major failure in existing unlearning methods for LLMs, strongly advocating for more comprehensive and robust strategies to ensure authentic unlearning without compromising model utility.”
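A toy illustration may help show why quantization could undo a small weight change. The quoted abstract does not spell out the mechanism, so everything here is an assumption made for illustration: that unlearning nudges weights only slightly, that weights lie in the range -1 to 1, and that a plain uniform quantizer stands in for whatever scheme a real deployment would use. The names `quantize` and `match_rate` are hypothetical.

```python
import random

def quantize(weights, bits, lo=-1.0, hi=1.0):
    """Map each weight to an integer bucket on a uniform grid (toy quantizer)."""
    levels = 2 ** bits - 1
    step = (hi - lo) / levels
    return [round((min(max(w, lo), hi) - lo) / step) for w in weights]

random.seed(0)

# Hypothetical "original" weights and an "unlearned" copy that differs
# only by a tiny nudge per weight (an assumption, not the paper's data).
original = [random.uniform(-1.0, 1.0) for _ in range(10_000)]
unlearned = [w + random.uniform(-0.001, 0.001) for w in original]

def match_rate(bits):
    """Fraction of weights landing in the same bucket before and after the nudge."""
    a = quantize(original, bits)
    b = quantize(unlearned, bits)
    return sum(x == y for x, y in zip(a, b)) / len(a)

for bits in (16, 8, 4):
    print(f"{bits}-bit: {match_rate(bits):.1%} of weights identical after quantization")
```

In this sketch the coarse 4-bit grid swallows nearly all of the small nudges, so the two sets of weights become almost indistinguishable, while the 16-bit grid keeps them apart. Whether real models behave this way is a matter for the paper, not this toy.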

See the paper for all the technical details. Will the big tech firms take the researchers’ advice and improve their products? Or will they continue letting their investors and marketing departments lead them by the nose?

Cynthia Murrell, November 21, 2024

Short Snort: How to Find Undocumented APIs

November 20, 2024

This essay is the work of a dumb dinobaby. No smart software required.


The essay / how to “All the Data Can Be Yours” does a very good job of providing a hacker road map. The information in the write up includes:

- Tips for finding undocumented APIs in GitHub

- Spotting “fetch” requests

- WordPress default APIs

- Information in robots.txt files

- Using the Google

- Examining JavaScripts

- Poking into mobile apps

- Some helpful resources and tools.

Each of these items includes details; for example, specific search strings and “how to make a taco” type of instructions. Assembling this write up took quite a bit of work.

Those engaged in cyber security (white, gray, and black hat types) will find the write up quite interesting.

I want to point out that I am not criticizing the information per se. I do want to remind those with a desire to share their expertise of three behaviors:

- Some computer science and programming classes in interesting countries use this type of information to provide students with what I would call hands on instruction

- Some governments, not necessarily aligned with US interests, provide the tips to the employees and contractors of certain government agencies to test and then extend the functionalities of the techniques presented in the write up

- Certain information might be more effectively distributed in other communication channels.

Stephen E Arnold, November 20, 2024

Europe Wants Its Own Search System: Filtering, Trees, and More

November 20, 2024

This essay is the work of a dumb dinobaby. No smart software required.


I am not going to recount the history of search companies and government entities building an alternative to Google. One can toss in Bing, but Google is the Big Dog. Yandex is useful for Russian content. But there is a void even though Swisscows.com is providing anonymity (allegedly) and no tracking (allegedly).

Now a new European solution may become available. If you remember Pertimm, you probably know that Qwant absorbed some of that earlier search system’s goodness. And there is Ecosia, a search system which plants trees. The union of these two systems will be an alternative to Google. I think Exalead.com tried this before, but who remembers European search history in rural Kentucky?

“Two Upstart Search Engines Are Teaming Up to Take on Google” reports:

The for-profit joint venture, dubbed European Search Perspective and located in Paris, could allow the small companies and any others that decide to join up to reduce their reliance on Google and Bing and serve results that are better tailored to their companies’ missions and Europeans’ tastes.

A possible name or temporary handle for the new search system is EUSP or European Search Perspective. What’s interesting is that the plumbing will be provided by a service provider named OVH. Four years ago, OVHcloud became a strategic partner of … wait for it … Google. Apparently that deal does not prohibit OVH from providing services to a European alternative to Google.

Also, you may recall that Eric Schmidt, former adult in the room at Google, suggested that Qwant kept him awake at night. Yes, Qwant has been a threat to Google for 13 years. How has that worked out? The original Qwant was interesting with a novel way of showing results from different types of sources. Now Qwant is actually okay. The problem with any search system, including Bing, is that the cost of maintaining an index containing new content and refreshing or updating previously indexed content is a big job. Toss in some AI goodness and cash burning furiously.

“Google” is now the word for search whether it works or does not. Perhaps regulatory actions will alter the fact that in Denmark, 99 percent of user queries flow to Google. Yep, Denmark. But one can’t go wrong with a ballpark figure like 95 percent of search queries outside of China and a handful of other countries are part of the Google market share.

How will the new team tackle the Google? I hope in a way that delivers more progress than Cogito. Remember that? Okay, no problem.

PS. Is a 13-year-old company an upstart? Sigh.

Stephen E Arnold, November 20, 2024

FOGINT: Kenya Throttles Telegram to Protect KCSE Exam Integrity

November 20, 2024

Secondary school students in Kenya need to do well on their all-encompassing final exam if they hope to go to college. Several Telegram services have emerged to assist students through this crucial juncture—by helping them cheat on the test. Authorities caught on to the practice and have restricted Telegram usage during this year’s November exams. As a result, reports Kenyans.co.ke, “NetBlocks Confirms Rising User Frustrations with Telegram Slowdown in Kenya.” Since Telegram is Kenya’s fifth most downloaded social-media platform, that is a lot of unhappy users. Writer Rene Otinga tells us:

“According to an internet observatory, NetBlocks, Telegram was restricted in Kenya with their data showing the app as being down across various internet providers. Users across the country have reported receiving several error messages while trying to interact with the app, including a ‘Connecting’ error when trying to access the Telegram desktop. However, a letter shared online from the Communications Authority of Kenya (CAK) also confirmed the temporary suspension of Telegram services to quell the perpetuation of criminal activities.”

Apparently, the restriction worked. We learn:

“On Friday, Education Principal Secretary Belio Kipsang said only 11 incidents of attempted sneaking of mobile phones were reported across the country. While monitoring examinations in Kiambu County, the PS said this was the fewest number of cheating cases the ministry had experienced in recent times.”

That is good news for honest students in Kenya. But for Telegram, this may be just the beginning of its regulatory challenges. Otinga notes:

“Governments are wary of the app, which they suspect is being used to spread disinformation, spread extremism, and in Kenya, promote examination cheating. European countries are particularly critical of the app, with the likes of Belarus, Russia, Ukraine, Germany, Norway, and Spain restricting or banning the messaging app altogether.”

Encryption can hide a multitude of sins. But when regulators are paying attention, it might not be enough to keep one out of hot water.

Cynthia Murrell, November 20, 2024

Entity Extraction: Not As Simple As Some Vendors Say

November 19, 2024

No smart software. Just a dumb dinobaby. Oh, the art? Yeah, MidJourney.


Most of the systems incorporating entity extraction have been trained to recognize the names of simple entities, mostly by relying on capitalization. An “entity” can be a person’s name, the name of an organization, or a location like Niagara Falls, near Buffalo, New York. The river “Niagara” when bound to “Falls” means a geologic feature. The “Buffalo” is not a Bubalina; it is a delightful city with even more pleasing weather.

The same entity extraction process has to work for specialized software used by law enforcement, intelligence agencies, and legal professionals. Compared to entity extraction for consumer-facing applications like Google’s Web search or Apple Maps, the specialized software vendors have to contend with:

- Gang slang in English and other languages; for example, “bumble bee.” This is not an insect; it is a nickname for the Latin Kings.

- Organizations operating in Lao PDR whose names have been converted to English words, like Zhao Wei’s Kings Romans Casino. Mr. Wei has allegedly been involved in gambling activities in a poorly regulated region in the Golden Triangle.

- Individuals who use aliases like maestrolive, james44123, or ahmed2004. There are either “real” people behind the handles or they are sock puppets (fake identities).

Why do these variations create a challenge? In order to locate a business, the content processing system has to identify the entity the user seeks. For an investigator, chopping through a thicket of language and idiosyncratic personas is the difference between making progress or hitting a dead end. Automated entity extraction systems can work using smart software, a carefully crafted and constantly updated controlled vocabulary list, or a hybrid system.
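A hybrid approach might look something like the sketch below. It is a minimal illustration, not any vendor's method: the vocabulary entries reuse examples from this write up, while the `extract_entities` function, the type labels, and the two regular expressions are hypothetical choices. The handle pattern only catches lowercase names that end in digits, so an alias like maestrolive would need its own vocabulary entry.

```python
import re

# Controlled vocabulary: lowercase surface form -> (canonical entity, type).
# Entries come from the examples in the text; a real list would be far
# larger and constantly maintained.
CONTROLLED_VOCABULARY = {
    "bumble bee": ("Latin Kings", "ORGANIZATION"),
    "kings romans casino": ("Kings Romans Casino", "ORGANIZATION"),
    "niagara falls": ("Niagara Falls", "LOCATION"),
}

# Hypothetical pattern for handles such as james44123 or ahmed2004.
HANDLE_PATTERN = re.compile(r"\b[a-z]+\d{2,}\b")

# Naive capitalization heuristic: two or more capitalized words in a row.
CAPITALIZED_RUN = re.compile(r"\b[A-Z][a-z]+(?:\s+[A-Z][a-z]+)+\b")

def extract_entities(text):
    """Return (entity, type, source) tuples using vocabulary first, then heuristics."""
    found = []
    lowered = text.lower()
    for term, (canonical, kind) in CONTROLLED_VOCABULARY.items():
        if term in lowered:
            found.append((canonical, kind, "vocabulary"))
    for match in HANDLE_PATTERN.finditer(text):
        found.append((match.group(), "HANDLE", "pattern"))
    known = {entity for entity, _, _ in found}
    for match in CAPITALIZED_RUN.finditer(text):
        if match.group() not in known:
            # Capitalization says "this is a name" but not what kind of name.
            found.append((match.group(), "UNKNOWN", "capitalization"))
    return found

sample = "The bumble bee contact james44123 was seen near Niagara Falls with Zhao Wei."
for entity in extract_entities(sample):
    print(entity)
```

The vocabulary resolves the slang term to the organization it stands for, which capitalization alone cannot do. The capitalization fallback still flags the unfamiliar name, but leaves the typing to a human or another system.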

Let’s take an example which confronts a person looking for information about the Ku Group. This is a financial services firm responsible for the Kucoin. The Ku Group is interesting because it has been found guilty in the US for certain financial activities in the State of New York and by the US Securities & Exchange Commission.

EU Docks Meta (Zuckbook) Five Days of Profits! Wow, Painful, Right?

November 19, 2024

No smart software. Just a dumb dinobaby. Oh, the art? Yeah, MidJourney.


Let’s keep this short. According to “real” news outfits “Meta Fined Euro 798 Million by EU Over Abusing Classified Ads Dominance.” This is the lovable firm’s first EU antitrust fine. Of course, Meta (the Zuckbook) will let loose its legal eagles to dispute the fine.

The Facebook money machine keeps on doing its thing. Thanks, MidJourney. Good enough.

What the “real” news outfits did not do is answer this question, “How long does it take the Zuck outfit to generate about $840 million US dollars?”

The answer is that it takes that fine firm about five days to earn or generate the cash to pay a fine that would cripple many organizations. In case you were wondering, five days works out to about 1.4 percent of a calendar year.
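For those who want to check the back-of-the-envelope math, here is a minimal sketch. The $840 million and five-day figures come from this essay; the daily and annual amounts are simply what those two numbers imply, not reported financial results.

```python
# Figures taken from the essay.
fine_usd = 840_000_000
days_to_cover = 5

# Share of a 365-day calendar year represented by five days.
share_of_year = days_to_cover / 365

# Implied cash generation, derived only from the two figures above.
implied_daily_usd = fine_usd / days_to_cover
implied_annual_usd = implied_daily_usd * 365

print(f"Share of year: {share_of_year:.1%}")
print(f"Implied per day: ${implied_daily_usd:,.0f}")
print(f"Implied per year: ${implied_annual_usd:,.0f}")
```

Five days out of 365 rounds to 1.4 percent, which matches the figure above.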

I bet that fine will definitely force the Zuck to change its ways. I wish I knew how much the EU spent pursuing this particular legal matter. My hunch is that the number has disappeared into the murkiness of Brussels’ bookkeeping.

And the Zuckbook? It will keep on keeping on.

Stephen E Arnold, November 19, 2024

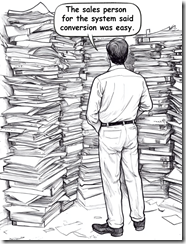

Content Conversion: Search and AI Vendors Downplay the Task

November 19, 2024

No smart software. Just a dumb dinobaby. Oh, the art? Yeah, MidJourney.


Marketers and PR people often have degrees in political science, communications, or art history. This academic foundation means that some of these professionals can listen to a presentation and struggle to figure out what’s a horse, what’s horse feathers, and what’s horse output.

Consequently, many organizations engaged in “selling” enterprise search, smart software, and fusion-capable intelligence systems downplay or just fib about how darned easy it is to take “content” and shove it into the Fancy Dan smart software. The pitch goes something like this: “We have filters that can handle 90 percent of the organization’s content. Word, PowerPoint, Excel, Portable Document Format (PDF), HTML, XML, and data from any system that can export tab delimited content. Just import and let our system increase your ability to analyze vast amounts of content. Yada yada yada.”

Thanks, Midjourney. Good enough.

The problem is that real life content is often a problem. I am not going to trot out my list of content problem children. Instead I want to ask a question: If dealing with content is a slam dunk, why do companies like IBM and Oracle sustain specialized tools to convert Content Type A into Content Type B?

The answer is that content processing is an essential step because [a] structured and unstructured content can exist in different versions. Figuring out the one that is least wrong and most timely is tricky. [b] Humans love mobile devices, laptops, home computers, photos, videos, and audio. Furthermore, how does a content processing system get those types of content from a source not located in an organization’s office (assuming it has one) and into the content processing system? The answer is, “Money, time, persuasion, and knowledge of what employee has what.” Finding a unicorn at the Kentucky Derby is more likely. [c] Specialized systems employ lingo like “Export as” and provide some file types. Yeah. The problem is that the output may not contain everything that is in the specialized software program. Examples range from computational chemistry systems to those nifty AutoCAD type drawing systems to slick electronic trace routing solutions to DaVinci Resolve video systems which can happily pull “content” from numerous places on a proprietary network set up. Yeah, no problem.

Evidence of how big this content conversion issue is appears in the IBM write up “A New Tool to Unlock Data from Enterprise Documents for Generative AI.” If the content conversion work is trivial, why is IBM wasting time and brainpower figuring out something like making a PowerPoint file smart software friendly?

The reason is that as big outfits get “into” smart software, the people working on the project find that the exception folder gets filled up. Some documents and content types don’t convert. If a boss asks, “How do we know the data in the AI system are accurate?”, the hapless IT person looking at the exception folder either lies or says in a professional voice, “We don’t have a clue?”

IBM’s write up says:

IBM’s new open-source toolkit, Docling, allows developers to more easily convert PDFs, manuals, and slide decks into specialized data for customizing enterprise AI models and grounding them on trusted information.

But one piece of software cannot do the job. That’s why IBM reports:

The second model, TableFormer, is designed to transform image-based tables into machine-readable formats with rows and columns of cells. Tables are a rich source of information, but because many of them lie buried in paper reports, they’ve historically been difficult for machines to parse. TableFormer was developed for IBM’s earlier DeepSearch project to excavate this data. In internal tests, TableFormer outperformed leading table-recognition tools.

Why are these tools needed? Here’s IBM’s rationale:

Researchers plan to build out Docling’s capabilities so that it can handle more complex data types, including math equations, charts, and business forms. Their overall aim is to unlock the full potential of enterprise data for AI applications, from analyzing legal documents to grounding LLM responses on corporate policy documents to extracting insights from technical manuals.

Based on my experience, the paragraph translates as, “This document conversion stuff is a killer problem.”

When you hear a trendy enterprise search or enterprise AI vendor talk about the wonders of its system, be sure to ask about document conversion. Here are a few questions to put the spotlight on what often becomes a black hole of costs:

- If I process 1,000 pages of PDFs, mostly text but with some charts and graphs, what’s the error rate?

- If I process 1,000 engineering drawings with embedded product and vendor data, what percentage of the content is parsed for the search or AI system?

- If I process 1,000 non text objects like videos and iPhone images, what is the time required and the metadata accuracy for the converted objects?

- Where do unprocessable source objects go? An exception folder, the trash bin, or to my in box for me to fix up?
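The questions above boil down to counting what converts and what lands in the exception folder. A minimal sketch of that bookkeeping follows. The supported file types, the `ConversionReport` class, and the `convert_batch` function are hypothetical stand-ins; a real converter would inspect file contents, not just file extensions.

```python
import os
from dataclasses import dataclass, field

# Hypothetical set of file types a vendor's filters claim to handle.
SUPPORTED_TYPES = {".pdf", ".docx", ".pptx", ".xlsx", ".html", ".xml"}

@dataclass
class ConversionReport:
    converted: list = field(default_factory=list)
    exceptions: list = field(default_factory=list)  # the "exception folder"

    @property
    def failure_rate(self):
        total = len(self.converted) + len(self.exceptions)
        return len(self.exceptions) / total if total else 0.0

def convert_batch(filenames):
    """Sort files into converted and exception lists by extension only."""
    report = ConversionReport()
    for name in filenames:
        extension = os.path.splitext(name)[1].lower()
        if extension in SUPPORTED_TYPES:
            report.converted.append(name)
        else:
            report.exceptions.append(name)
    return report

batch = ["policy.pdf", "bracket.dwg", "pitch.pptx", "site_visit.mov"]
report = convert_batch(batch)
print(f"Failure rate: {report.failure_rate:.0%}")
print("Exception folder:", report.exceptions)
```

Even this crude tally answers the boss's question with a number instead of a shrug.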

Have fun asking questions.

Stephen E Arnold, November 19, 2024

After AI Billions, a Hail, Mary Play

November 19, 2024

Now it is scramble time. Reuters reports, “OpenAI and Others Seek New Path to Smarter AI as Current Methods Hit Limitations.” Why does this sound familiar? Perhaps because it is a replay of the enterprise search over-promise and under-deliver approach. Will a new technique save OpenAI and other firms? Writers Krystal Hu and Anna Tong tell us:

“After the release of the viral ChatGPT chatbot two years ago, technology companies, whose valuations have benefited greatly from the AI boom, have publicly maintained that ‘scaling up’ current models through adding more data and computing power will consistently lead to improved AI models. But now, some of the most prominent AI scientists are speaking out on the limitations of this ‘bigger is better’ philosophy. … Behind the scenes, researchers at major AI labs have been running into delays and disappointing outcomes in the race to release a large language model that outperforms OpenAI’s GPT-4 model, which is nearly two years old, according to three sources familiar with private matters.”

One difficulty, of course, is the hugely expensive and time-consuming LLM training runs. Another: it turns out easily accessible data is finite after all. (Maybe they can use AI to generate more data? Nah, that would be silly.) And then there is that pesky hallucination problem. So what will AI firms turn to in an effort to keep this golden goose alive? We learn:

“Researchers are exploring ‘test-time compute,’ a technique that enhances existing AI models during the so-called ‘inference’ phase, or when the model is being used. For example, instead of immediately choosing a single answer, a model could generate and evaluate multiple possibilities in real-time, ultimately choosing the best path forward. This method allows models to dedicate more processing power to challenging tasks like math or coding problems or complex operations that demand human-like reasoning and decision-making.”
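The "generate and evaluate multiple possibilities" idea can be sketched as a simple best-of-N selection. This is a toy under loud assumptions: `noisy_solver` is a stand-in for a model, `verifier_score` is a hypothetical checker, and nothing here describes how OpenAI or any other lab actually implements test-time compute.

```python
import random

def noisy_solver(a, b, rng):
    """Stand-in for a model answering a + b: sometimes right, sometimes slightly off."""
    return a + b + rng.choice([0, 0, 1, -1, 2])

def verifier_score(a, b, candidate):
    """Hypothetical checker: undo the addition and measure how far off the result is."""
    return -abs((candidate - b) - a)

def best_of_n(a, b, n, rng):
    """Spend more compute at inference time: sample n candidates, keep the best scored."""
    candidates = [noisy_solver(a, b, rng) for _ in range(n)]
    return max(candidates, key=lambda c: verifier_score(a, b, c))

rng = random.Random(0)
print("Single attempt:", noisy_solver(17, 25, rng))
print("Best of 64:", best_of_n(17, 25, 64, rng))
```

The catch is visible even in the toy: the extra attempts only help if something reliable is available to judge them, and every extra attempt costs compute.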

OpenAI is using this approach in its new O1 model, while competitors like Anthropic, xAI, and Google DeepMind are reportedly following suit. Researchers claim this technique more closely mimics the way humans think. That couldn’t be just marketing hooey, could it? And even if it isn’t, is this tweak really enough?

Cynthia Murrell, November 19, 2024

AI and Efficiency: What Is the Cost of Change?

November 18, 2024

No smart software. Just a dumb dinobaby. Oh, the art? Yeah, MidJourney.


Companies are embracing smart software. One question which, from my point of view, gets little attention is, “What is the cost of changing an AI system a year or two down the road?” The focus at this time is getting some AI up and running so an organization can “learn” whether AI works or not. A parallel development is taking place in software vendors’ enterprise and industry-centric specialized software. Examples range from a brand new AI powered accounting system to Microsoft “sticking” AI into the ASCII editor Notepad.

Thanks, MidJourney. Good enough.

Let’s tally the costs which an organization faces 24 months after flipping the switch in, for example, a hospital chain which uses smart software to convert a physician’s spoken comments about a patient to data which can be used for analysis to provide insight into evidence based treatment for the hospital’s constituencies.

Here are some costs for staff, consultants, and lawyers:

- Paying for the time required to figure out what is on the money and what is not good or just awful like dead patients

- The time required to figure out if the present vendor can fix up the problem or a new vendor’s system must be deployed

- Going through the smart software recompete or rebid process

- Getting the system up and running

- The cost of retraining staff

- Chasing down dependencies like other third party software for the essential “billing process”

- Optimizing the changed or alternative system.

The enthusiasm for smart software makes talking about these future costs fade a little.

I read “AI Makes Tech Debt More Expensive,” and I want to quote one passage from the pretty good essay:

In essence, the goal should be to unblock your AI tools as much as possible. One reliable way to do this is to spend time breaking your system down into cohesive and coherent modules, each interacting through an explicit interface. A useful heuristic for evaluating a set of modules is to use them to explain your core features and data flows in natural language. You should be able to concisely describe current and planned functionality. You might also want to set up visibility and enforcement to make progress toward your desired architecture. A modern development team should work to maintain and evolve a system of well-defined modules which robustly model the needs of their domain. Day-to-day feature work should then be done on top of this foundation with maximum leverage from generative AI tooling.
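The "explicit interface" advice can be made concrete with a small sketch tied to the hospital example. The `Transcriber` protocol, the two vendor classes, and `build_record` are all hypothetical names invented for illustration. The point is only that code written against an interface does not need to change when the vendor behind it does.

```python
from typing import Protocol

class Transcriber(Protocol):
    """Explicit interface: anything that turns a spoken note into text."""
    def transcribe(self, audio_note: str) -> str: ...

class VendorA:
    # Hypothetical first vendor: trims and lowercases the note.
    def transcribe(self, audio_note: str) -> str:
        return audio_note.strip().lower()

class VendorB:
    # Hypothetical replacement vendor: also collapses repeated whitespace.
    def transcribe(self, audio_note: str) -> str:
        return " ".join(audio_note.split()).lower()

def build_record(patient_id: str, audio_note: str, transcriber: Transcriber) -> dict:
    """Downstream code depends on the interface, not on a specific vendor."""
    return {"patient_id": patient_id, "note": transcriber.transcribe(audio_note)}

print(build_record("p-001", "  BP Normal ", VendorA()))
print(build_record("p-001", "  BP   Normal ", VendorB()))
```

Swapping vendors in this sketch is a one-argument change. Without the interface, the swap means hunting through every place the old vendor's calls were pasted in.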

Will organizations make this shift? Will the hyperbolic AI marketers acknowledge the future costs of pasting smart software on existing software like circus posters on crumbling walls?

Nope.

Those two year costs will be interesting for the bean counters when those kicked cans end up in their workspaces.

Stephen E Arnold, November 18, 2024

Salesforce to MSFT: We Are Coming, Baby Cakes

November 18, 2024

No smart software. Just a dumb dinobaby. Oh, the art? Yeah, MidJourney.


Salesforce, an outfit that hopped on the “attention” bandwagon, is now going whole hog with smart software. “Salesforce to Hire More Than 1,000 Workers to Boost AI Product Sales” makes clear that AI is going to be the hook for the company for the next hype cycle riding toward Next Big Thing theme park.

The write up says:

Agentforce is a new layer on the Salesforce platform, designed to enable companies to build and deploy AI agents that autonomously perform tasks.

Now that’s a buzz packed sentence: “Layer,” sales call data as a “platform”, “AI agents”, “autonomously”, and smart software that can “perform tasks.”

The idea is that sales are an important part of a successful organization. The exception is that monopolies really don’t need too many sales professionals. Lawyers? Yes. Forward deployed engineers? Yes. Marketers? Yes. Door knockers? Well, probably fewer going forward.

How does the Salesforce AI system work? The answer is simple it seems:

These AI agents operate independently, triggered by data changes, business rules, pre-built automations, or API signals.

Who writes the rules? I wonder if AI writes its own rules or do specialists get an opportunity to demonstrate their ability to become essential cogs in the Salesforce customers’ machines?

What do customers do with smart Salesforce? Once again, the answer is easy to provide. The write up says:

Companies such as OpenTable, Saks and Wiley are currently utilizing Agentforce to augment their workforce and enhance customer experiences. Over the past two years, Salesforce has focused on controlling sales expenses by reducing jobs and encouraging customers to use self-service or third-party purchasing options.

I think I understand. Get rid of pesky humans, their vacations, health care, pension plans, and annoying demands for wage increases. Salesforce delivers “efficiency.”

I am not sure what to make of this set of statements. Underpinning Salesforce is a database. The stuff on top of the database are interfaces. Now smart software promises to deliver efficiency and obviously another layer of “smart stuff” to provide what software and services have been promising since the days of the punched card.

Smart software, like Web search, is a natural monopoly unless specific deep pocket outfits can create a defensible niche and sell enough smart software to that niche before some other company eats their lunch.

But that’s what some companies do. Eat other individuals’ lunches. So who’s taking those lunches tomorrow? Amazon, Google, Microsoft, or Salesforce? Maybe the lunch thief will be a pesky start up essentially off the radar of the big hungry dogs?

With AI development shifting East, is the Silicon Valley AI way the future? Heck, even Google is moving smart software to London, which is a heck of a lot easier flight to some innovative locations.

Hopefully one of the AI companies can convert billions in AI investment into new revenue and big profits in a sprightly manner. So far, I see marketing and AI dead ends. Is Salesforce, as the long gone Philco radio company used to say, “The leader”? On one hand, Salesforce is hiring. On the other, get rid of employees. Okay, I think I understand.

Stephen E Arnold, November 18, 2024