Sam Altman: The Waffling Man

February 17, 2025

Another dinobaby commentary. No smart software required.

Chaos is good. Flexibility is good. AI is good. Sam Altman, whom I refer to as “Sam AI-Man,” has some explaining to do. OpenAI is a consumer of cash. The Chinese PR push suggests that Deepseek has found a way to do OpenAI-type computing the way Shein and Temu do gym clothes.

I noted “Sam Altman Admits OpenAI Was On the Wrong Side of History in Open Source Debate.” The write up does not come out and state, “OpenAI was stupid when it embraced proprietary software’s approach to meeting user needs.” To be frank, Sam AI-Man was not particularly clear either.

The write up says that Sam AI-Man said:

“Yes, we are discussing [releasing model weights],” Altman wrote. “I personally think we have been on the wrong side of history here and need to figure out a different open source strategy.” He noted that not everyone at OpenAI shares his view and it isn’t the company’s current highest priority. The statement represents a remarkable departure from OpenAI’s increasingly proprietary approach in recent years, which has drawn criticism from some AI researchers and former allies, most notably Elon Musk, who is suing the company for allegedly betraying its original open source mission.

My view is that Sam AI-Man wants to emulate other super techno leaders and get whatever he wants. Not surprisingly, other super techno leaders have their own ideas. I would suggest that the objective of these AI jousts is power, control, and money.

“What about the users?” a faint voice asks. “And the investors?” another bold soul queries.

Who?

Stephen E Arnold, February 17, 2025

Software Is Changing and Not for the Better

February 17, 2025

I read a short essay “We Are Destroying Software.” What struck me about the write up was the author’s word choice. For example, here’s a simple frequency count of the terms in the essay:

- The two most popular words in the essay are “destroying” and “software” with 15 occurrences each.

- The word “complex” is used three times.

- The words “systems,” “dependencies,” “reinventing,” “wheel,” and “work” are used twice each.
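A count like the one above takes only a few lines of code. The Python below is a minimal sketch, not the method used for this post: it assumes a simple lowercase tokenizer and a small, hypothetical stop-word list, since words such as “we” and “are” open every statement in the essay and would otherwise crowd out “destroying” and “software.”

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stop-word list; a real count would use a fuller one.
STOP_WORDS = {"we", "are", "that", "the", "a", "an", "and", "of", "to", "is"}


def term_frequencies(text: str, stop_words: set[str] = STOP_WORDS) -> Counter:
    """Count lowercase word tokens in text, skipping stop words."""
    # Letters only, with an optional internal apostrophe (e.g. "don't").
    tokens = re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())
    return Counter(t for t in tokens if t not in stop_words)


if __name__ == "__main__":
    # The one sentence quoted from the essay; paste in the full text to get
    # counts comparable to the list above.
    sentence = "We are destroying software claiming that code comments are useless."
    for word, count in term_frequencies(sentence).most_common(3):
        print(word, count)
```

Run against the full essay, `most_common()` would surface the top terms directly; the stop-word list is the main judgment call, because it decides which “popular” words are worth reporting.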

The structure of the essay is a series of declarative statements like this:

We are destroying software claiming that code comments are useless.

I quite like the essay.

Several observations:

- The author is passionate about his subject. “Destroy” is not a neutral word.

- “Complex” appears to be a particular concern. This makes sense. Some systems like those in use at the US Internal Revenue Service may be difficult, if not impossible, to remediate within available budgets and resources. Gradual deterioration seems to be a characteristic of many systems today, particularly when computer technology interfaces with workers.

- The notion of the “joy” of hacking comes across, not as a solution to a problem, but as the reason the author was motivated to capture his thoughts.

Interesting stuff. Tough to get around entropy, however. Who is the “we” by the way?

Stephen E Arnold, February 17, 2025

IBM Faces DOGE Questions?

February 17, 2025

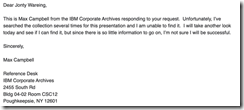

Simon Willison reminded us of the famous IBM internal training document that reads: “A Computer Can Never Be Held Accountable.” The statement is also relevant to AI algorithms. Unfortunately, the document has a mysterious history, and the IBM Corporate Archives do not have a copy of the presentation. A Twitter user with the handle @bumblebike posted the original image; he said he found it while going through his father’s papers. The presentation with the legendary statement was destroyed in a 2019 flood.

I believe the image was first shared online in this tweet by @bumblebike in February 2017. Here’s where they confirm it was from 1979 internal training.

Here’s another tweet from @bumblebike from December 2021 about the flood:

“Unfortunately destroyed by flood in 2019 with most of my things. Inquired at the retirees club zoom last week, but there’s almost no one the right age left. Not sure where else to ask.”

We don’t need the actual IBM document to know that IBM hasn’t done well when it comes to search. IBM, like most firms, tried and sort of fizzled. (Remember Data Fountain or CLEVER?) IBM also moved into content management. Yep, the semi-Xerox, semi-information thing. But the good news is that a time sharing solution called Watson is doing pretty well. It’s not winning Jeopardy! but it is chugging along.

Now IBM professionals in DC have to answer the DOGE nerd squad’s questions? Why not give OpenAI a whirl? The old Jeopardy! winner is kicking back. DOGE wants to know.

Whitney Grace, February 17, 2025

Sweden Embraces Books for Students: A Revolutionary Idea

February 14, 2025

Yep, another dinobaby emission. No smart software required.

Doom scrolling through the weekend’s newsfeeds, I spotted “Sweden Swapped Books for Computers in 2009. Now, They’re Spending Millions to Bring Them Back.” Sweden has some challenges. The problems with kinetic devices are not widely known in Harrod’s Creek, Kentucky, and probably not in other parts of the US. Malmo bears some passing resemblance to parts of urban enclaves like Detroit or Las Vegas. To make life interesting, the country has a keen awareness of everyone’s favorite leader in Russia.

The point of the write up is that Sweden’s shift from old-fashioned dinobaby books to those super wonderful computers and tablets has become unpalatable. The write up reports:

The Nordic country is reportedly exploring ways to reintroduce traditional methods of studying into its educational system.

The reason for the shift to books? The write up observes:

…experts noted that modern, experiential learning methods led to a significant decline in students’ essential skills, such as reading and writing.

Does this statement sound familiar?

Most teachers and parents complain that their kids have increasingly started relying on these devices instead of engaging in classrooms.

Several observations:

- Nothing worthwhile comes easy. Computers became a way to make learning easy. The downside is that, for most students, the negatives have lifelong consequences.

- Reversing gradual loss of the capability to concentrate is likely to be a hit-and-miss undertaking.

- Individuals without skills like reading become the new market for talking to a smartphone because writing is too much friction.

How will these individuals, regardless of country, be able to engage in lifelong learning? The answer is one that may make some people uncomfortable: They won’t. These individuals demonstrate behaviors not well matched to independent, informed thinking.

This dinobaby longs for a time when tiny dinobabies had books, not gizmos. I smell smoke. Oh, I think that’s just some informed mobile phone users burning books.

Stephen E Arnold, February 14, 2025

Who Knew? AI Makes Learning Less Fun

February 14, 2025

Bill Gates was recently on Jimmy Fallon’s show to promote his memoir. In the interview, Gates shared his views on AI, stating that AI will replace a lot of jobs. Fallon hoped that TV show hosts wouldn’t be replaced, and he probably doesn’t have anything to worry about. Why? Because he’s entertaining and interesting.

Humans love to be entertained, but AI just doesn’t have the capability to pull it off. Media and Learning shared one teacher’s experience with AI-generated learning videos: “When AI Took Over My Teaching Videos, Students Enjoyed Them Less But Learned The Same.” The experiment tested whether students would learn more from teacher-made or AI-generated videos. Here’s how it went:

“We used generative AI tools to generate teaching videos on four different production management concepts and compared their effectiveness versus human-made videos on the same topics. While the human-made videos took several days to make, the analogous AI videos were completed in a few hours. Evidently, generative AI tools can speed up video production by an order of magnitude.”

The AI videos used ChatGPT-written scripts, Midjourney for illustrations, and HeyGen for teacher avatars. The teacher-made videos were produced the traditional way: teachers wrote scripts, recorded themselves, and edited the footage in Adobe Premiere.

When it came to retaining and testing on the educational content, both types of video yielded the same results. Students, however, preferred the teacher-made videos to the AI ones. Why?

“The reduced enjoyment of AI-generated videos may stem from the absence of a personal connection and the nuanced communication styles that human educators naturally incorporate. Such interpersonal elements may not directly impact test scores but contribute to student engagement and motivation, which are quintessential foundations for continued studying and learning.”

Media and Learning suggests that AI could be used to complement instruction time, freeing teachers to focus on personalized instruction. We’ll see what happens as AI becomes more competent, but for now we can rest easy knowing that human engagement is more interesting than algorithms. Or at least Jimmy Fallon can.

Whitney Grace, February 14, 2025

What Happens When Understanding Technology Is Shallow? Weakness

February 14, 2025

Yep, a dinobaby wrote this blog post. Replace me with a subscription service or a contract worker from Fiverr. See if I care.

I like this question. Even more satisfying is that a big name seems to have answered it. I refer to an essay by Gary Marcus titled “The Race for ‘AI Supremacy’ Is Over — at Least for Now.”

Here’s the key passage in my opinion:

China caught up so quickly for many reasons. One that deserves Congressional investigation was Meta’s decision to open source their LLMs. (The question that Congress should ask is, how pivotal was that decision in China’s ability to catch up? Would we still have a lead if they hadn’t done that? Deepseek reportedly got its start in LLMs retraining Meta’s Llama model.) Putting so many eggs in Altman’s basket, as the White House did last week and others have before, may also prove to be a mistake in hindsight. … The reporter Ryan Grim wrote yesterday about how the US government (with the notable exception of Lina Khan) has repeatedly screwed up by placating big companies and doing too little to foster independent innovation

The write up is quite good. What’s missing, in my opinion, is any treatment of the release as a probe: a test of how a technology innovation released as a not-so-stealthy open source project can affect the US financial markets. The result was satisfying to the Chinese planners.

Also, the write up does not put the probe or “foray” in a strategic context. China wants to make certain its simple message “China smart, US dumb” gets into the world’s communication channels. That worked quite well.

Finally, the write up does not point out that the US approach to AI has given China an opportunity to demonstrate that it can borrow and refine with aplomb.

Net net: I think China is doing Shein and Temu in the AI and smart software sector.

Stephen E Arnold, February 14, 2025

Hauling Data: Is There a Chance of Derailment?

February 13, 2025

Another dinobaby write up. The only smart software involved is the lousy train illustration.

I spotted some chatter about US government Web sites going offline. Since I stepped away from the “index the US government” project, I don’t spend much time poking around the content at dot gov and in some cases dot com sites operated by the US government. Let’s assume that some US government servers are now blocked and the content has gone dark to a user looking for information generated by US government entities.

If libraries chug chug down the information railroad tracks to deliver data, what does the “Trouble on the Tracks” sign mean? Thanks, You.com. Good enough.

The fix in most cases is to use Bing.com. My recollection is that a third party like Bing provided the search service to the US government. A good alternative is to use Google.com, the site: qualifier, and a bit of obscenity. The obscenity causes the Google AI to just generate a semi-relevant list of links. In a pinch, you could poke around for a repository of US government information. Unfortunately the Library of Congress is not that repository. The Government Publishing Office does not do the job either. The Internet Archive is a hit-and-miss archive operation.

Is there another alternative? Yes. Harvard University announced its Data.gov archive. The institution’s Library Innovation Lab Team said on February 6, 2025:

Today we released our archive of data.gov on Source Cooperative. The 16TB collection includes over 311,000 datasets harvested during 2024 and 2025, a complete archive of federal public datasets linked by data.gov. It will be updated daily as new datasets are added to data.gov.

I like this type of archive, but I am a dinobaby, not a forward leaning, “with it” thinker. Information in my mind belongs in a library. A library, in general, should provide students and those seeking information with a place to go to obtain information. The only advertising I see in a library is an announcement about a bake sale to raise funds for children’s reading material.

Will the Harvard initiative and others like it collide with something on the train tracks? Will the money to buy fuel for the engine’s power plant be cut off? Will the train drivers be forced to find work at Shake Shack?

I have no answers. I am glad I am old, but I fondly remember when the job was to index the content on US government servers. The quaint idea formulated by President Clinton was to make US government information available. Now one has to catch a train.

Stephen E Arnold, February 13, 2025

Orchestration Is Not Music When AI Agents Work Together

February 13, 2025

Are multiple AIs better than one? Megaputer believes so. The data firm sent out a promotional email urging us to “Build Multi-Agent Gen-AI Systems.” With the help of its products, of course. We are told:

“Most business challenges are too complex for a single AI engine to solve. What is the way forward? Introducing Agent-Chain Systems: A novel groundbreaking approach leveraging the collaborative strengths of specialized AI models, each configured for distinct analytical tasks.

- Validate results through inter-agent verification mechanisms, minimizing hallucinations and inconsistencies.

- Dynamically adapt workflows by redistributing tasks among Gen-AI agents based on complexity, optimizing resource utilization and performance.

- Build AI applications in hours for tasks like automated taxonomy building and complex fact extraction, going beyond traditional AI limitations.”

If this approach really reduces AI hallucinations, there may be something to it. The firm invites readers to explore a few case studies it has put together: one for an anonymous pharmaceutical company, one for a US regulatory agency, and a third for a large retail company. Snapshots of each project’s dashboard further illustrate the concept. Are cooperative AI agents the next big thing in generative AI? Megaputer, for one, is banking on it. Founded back in 1997, the small business is based in Bloomington, Indiana.

Cynthia Murrell, February 10, 2025

LLMs Paired With AI Are Dangerous Propaganda Tools

February 13, 2025

AI chatbots are in their infancy. While they have been tested for a number of years, they are still prone to bias and other devastating mistakes. Big business and other organizations aren’t waiting for the technology to improve. Instead they’re incorporating chatbots and more AI into their infrastructures. Baldur Bjarnason warns about the dangers of AI, especially when it comes to LLMs and censorship:

“Poisoning For Propaganda: Rising Authoritarianism Makes LLMs More Dangerous.”

Large language models (LLMs) are the AI models behind today’s chatbots. Bjarnason warns that using any LLM, even one run locally, is dangerous.

Why?

LLMs are statistical models of language whose behavior is shaped by training data and tuning choices made by humans. Those choices are prone to error, which is one reason AI algorithms are untrustworthy. LLMs can also be tuned to favor specific opinions, turning them into propaganda machines. Bjarnason warns that LLMs are being used for the lawless takeover of the United States. He also says that corporations, in order to maintain their power, won’t hesitate to add or remove information from their LLMs if the US government asks them to.

This is another type of censorship:

“The point of cognitive automation is NOT to enhance thinking. The point of it is to avoid thinking in the first place. That’s the job it does. You won’t notice when the censorship kicks in… The alternative approach to censorship, fine-tuning the model to return a specific response, is more costly than keyword blocking and more error-prone. And resorting to prompt manipulation or preambles is somewhat easily bypassed but, crucially, you need to know that there is something to bypass (or “jailbreak”) in the first place. A more concerning approach, in my view, is poisoning.”

Corporations working with governments (and not just the United States) are “poisoning” AI LLMs with propagandized sentiments. It’s a subtle way of transforming perspectives without loud indoctrination campaigns. It is comparable to subliminal messages in commercials or to teaching only one viewpoint.

Controls seem unlikely.

Whitney Grace, February 13, 2025

Are These Googlers Flailing? (Yes, the Word Has “AI” in It Too)

February 12, 2025

Is the Byte write up on the money? I don’t know, but I enjoyed it. Navigate to “Google’s Finances Are in Chaos As the Company Flails at Unpopular AI. Is the Momentum of AI Starting to Wane?” I am not sure that AI is in its waning moment. Deepseek has ignited a fire under some outfits. But I am not going to critique the write up. I want to highlight some of its interesting information. Let’s go, as Anatoly the gym Meister says, albeit with an Eastern European accent.

Here’s the first statement in the article which caught my attention:

Google’s parent company Alphabet failed to hit sales targets, falling a 0.1 percent short of Wall Street’s revenue expectations — a fraction of a point that’s seen the company’s stock slide almost eight percent today, in its worst performance since October 2023. It’s also a sign of the times: as the New York Times reports, the whiff was due to slower-than-expected growth of its cloud-computing division, which delivers its AI tools to other businesses.

Okay, 0.1 percent is something, but I would have preferred that the “flail” metaphor carry into this paragraph. It begs for “flog,” “thrash,” and “whip.”

I used Sam AI-Man’s AI software to produce a good enough image of Googlers flailing. Frankly I don’t think Sam AI-Man’s system understands exactly what I wanted, but close enough for horseshoes in today’s world.

I noted this information and circled it. I love Gouda cheese. How can Google screw up cheese after its misstep with glue and cheese on pizza? Yo, Googlers. Check the cheese references.

Is Alphabet’s latest earnings result the canary in the coal mine? Should the AI industry brace for tougher days ahead as investors become increasingly skeptical of what the tech has to offer? Or are investors concerned over OpenAI’s ChatGPT overtaking Google’s search engine? Illustrating the drama, this week Google appears to have retroactively edited the YouTube video of a Super Bowl ad for its core AI model called Gemini, to remove an extremely obvious error the AI made about the popularity of gouda cheese.

Stalin revised history books. Google changes cheese references for its own advertising. But cheese?

The write up concludes with this, mostly drawn from the UK’s Guardian newspaper, a keen watcher of American high technology:

“Although it’s still well insulated, Google’s advantages in search hinge on its ubiquity and entrenched consumer behavior,” Emarketer senior analyst Evelyn Mitchell-Wolf told The Guardian. This year “could be the year those advantages meaningfully erode as antitrust enforcement and open-source AI models change the game,” she added. “And Cloud’s disappointing results suggest that AI-powered momentum might be beginning to wane just as Google’s closed model strategy is called into question by Deepseek.”

Does this constitute the use of the word “flail”? Sure, but I like “thrash” a lot. And “wane” is good.

Stephen E Arnold, February 12, 2025