AI: Yes, Intellectual Work Will Succumb, Just Sooner Rather Than Later

January 22, 2025

This blog post is the work of an authentic dinobaby. Sorry. No smart software can help this reptilian thinker.

Has AI innovation stalled? Nope. “It’s Getting Harder to Measure Just How Good AI Is Getting” explains:

OpenAI’s end-of-year series of releases included their latest large language model (LLM), o3. o3 does not exactly put the lie to claims that the scaling laws that used to define AI progress don’t work quite that well anymore going forward, but it definitively puts the lie to the claim that AI progress is hitting a wall.

Okay, that proves that AI is hitting the gym and getting pumped.

However, the write up veers into an unexpected calcified space:

The problem is that AIs have been improving so fast that they keep making benchmarks worthless. Once an AI performs well enough on a benchmark we say the benchmark is “saturated,” meaning it’s no longer usefully distinguishing how capable the AIs are, because all of them get near-perfect scores.
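The "saturated" idea in that passage can be made concrete with a minimal sketch. The scores and the 0.05 margin below are invented for illustration; they are assumptions, not figures from the Vox article or from any real benchmark.

```python
def is_saturated(scores, ceiling=1.0, margin=0.05):
    """Return True when every score sits within `margin` of the ceiling."""
    return all(ceiling - s <= margin for s in scores)


def score_spread(scores):
    """Gap between the best and worst model; a small gap means little to compare."""
    return max(scores) - min(scores)


# Hypothetical accuracy scores for four models on two benchmarks.
old_benchmark = [0.97, 0.98, 0.99, 0.98]
new_benchmark = [0.22, 0.41, 0.63, 0.87]

for name, scores in [("old", old_benchmark), ("new", new_benchmark)]:
    print(name, "saturated:", is_saturated(scores),
          "spread:", round(score_spread(scores), 2))
```

When every model lands within a few points of perfect, the spread collapses and the test stops ranking anything, which is roughly what the write up means by a worthless benchmark.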

What is wrong with the lack of benchmarks? Nothing. Smart software is probabilistic. How accurate is the weather forecast? Ask a wonk at the National Weather Service and you get quite optimistic answers. Ask a child whose birthday party at the park was rained out on a day Willie the Weatherman said that it would be sunny, and you get a different answer.

Okay, forget measurements. Here’s what the write up says will happen, and the prediction sounds really rock solid just like Willie the Weatherman:

The way AI is going to truly change our world is by automating an enormous amount of intellectual work that was once done by humans…. Like it or not (and I don’t really like it, myself; I don’t think that this world-changing transition is being handled responsibly at all) none of the three are hitting a wall, and any one of the three would be sufficient to lastingly change the world we live in.

Follow the argument? I must admit that jumping from getting good, to an inability to measure “good,” to humans being replaced because AI can do intellectual work is quite a journey. Perhaps I am missing something, but:

- Just because people outside of research labs have smart software that seems to be working like a smart person, what about those hallucinatory outputs? Yep, today’s models make stuff up because probability dictates the output.

- Use cases for smart software doing “intellectual work” are where in the write up? They aren’t there because Vox doesn’t have any which are comfortable for journalists and writers who can be replaced by the SEO AIs advertised on Telegram search engine optimization channels or by marketers writing for Forbes Magazine. That’s right. Excellent use cases are smart software killing jobs once held by fresh MBAs or newly minted CFAs. Why? Cheaper, and as long as the models are “good enough” to turn a profit, let ’em rip. Yahoooo.

- Smart software is created by humans, and humans shape what it does, how it is deployed, and care not a whit about the knock-on effects. Technology operates in the hands of humans. Humans are deeply flawed entities. Mother Teresas are outnumbered by street gangs in Reno, Nevada, based on my personal observations of that fine city.

Net net: Vox, which can and will be replaced by a cheaper and good enough alternative, doesn’t want to raise that issue. Instead, Vox wanders around the real subject. That subject is that as those who drive AI figure out how to use what’s available and good enough, certain types of work will be pushed into the black boxes of smart software. Could smart software have written this essay? Yes. Could it have done a better job? Publications like the supremely weird Buzzfeed and some consultants I know sure like “good enough.” As long as it is cheap, AI is a winner.

Stephen E Arnold, January 22, 2025

AWS and AI: Aw, Of Course

January 21, 2025

Matt Garman Interview Reveals AWS Perspective on AI

It should be no surprise that AWS is going all in on Artificial Intelligence. Will Amazon become an AI winner? Sure, if it keeps those managing the company’s third-party reseller program away from AWS. Nilay Patel, The Verge’s Editor-in-Chief, interviewed AWS head Matt Garman. He explains “Why CEO Matt Garman Is Willing to Bet AWS on AI.” Patel writes:

“Matt has a really interesting perspective for that kind of conversation since he’s been at AWS for 20 years — he started at Amazon as an intern and was AWS’s original product manager. He’s now the third CEO in just five years, and I really wanted to understand his broad view of both AWS and where it sits inside an industry that he had a pivotal role in creating. … Matt’s perspective on AI as a technology and a business is refreshingly distinct from his peers, including those more incentivized to hype up the capabilities of AI models and chatbots. I really pushed Matt about Sam Altman’s claim that we’re close to AGI and on the precipice of machines that can do tasks any human could do. I also wanted to know when any of this is going to start returning — or even justifying — the tens of billions of dollars of investments going into it. His answers on both subjects were pretty candid, and it’s clear Matt and Amazon are far more focused on how AI technology turns into real products and services that customers want to use and less about what Matt calls ‘puffery in the press.'”

What a noble stance within a sea of AI hype. The interview touches on topics like AWS’ domination of streaming delivery, its partnerships with telco companies, and problems of scale as it continues to balloon. Garman also compares the shift to AI to the shift from typewriters to computers. See the write-up for more of their conversation.

Cynthia Murrell, January 21, 2025

AI Doom: Really Smart Software Is Coming So Start Being Afraid, People

January 20, 2025

Prepared by a still-alive dinobaby.

The essay “Prophecies of the Flood” gathers several comments about software that thinks and decides without any humans fiddling around. The “flood” metaphor evokes the streams of money about which money people fantasize. The word “flood” also evokes the Hebrew Bible’s account of a divinely initiated cataclysm intended to cleanse the Earth of widespread wickedness. Plus, one cannot overlook the image of small towns in North Carolina inundated in mud and debris from a very bad storm.

When the AI flood strikes as a form of divine retribution, will the modern ark be filled with humans? Nope. The survivors will be those smart agents infused with even smarter software. Tough luck, humanoids. Thanks, OpenAI, I knew you could deliver art that is good enough.

To sum up: A flood is bad news, people.

The essay states:

the researchers and engineers inside AI labs appear genuinely convinced they’re witnessing the emergence of something unprecedented. Their certainty alone wouldn’t matter – except that increasingly public benchmarks and demonstrations are beginning to hint at why they might believe we’re approaching a fundamental shift in AI capabilities. The water, as it were, seems to be rising faster than expected.

The signs of darkness, according to the essay, include:

- Rising water in the generally predictable technology stream in the park populated with ducks

- Agents that “do” something for the human user or another smart software system. To humans with MBAs, art history degrees, and programming skills honed at a boot camp, the smart software is magical. Merlin wears a gray T shirt, sneakers, and faded denims

- Nifty art output in the form of images and — gasp! — videos.

The essay concludes:

The flood of intelligence that may be coming isn’t inherently good or bad – but how we prepare for it, how we adapt to it, and most importantly, how we choose to use it, will determine whether it becomes a force for progress or disruption. The time to start having these conversations isn’t after the water starts rising – it’s now.

Let’s assume that I buy this analysis and agree with the notion “prepare now.” How realistic is it that the United Nations, a couple of superpowers, or a motivated individual can have an impact? Gentle reader, doom sells. Examples include The Big Short: Inside the Doomsday Machine, The Shifts and Shocks: What We’ve Learned – and Have Still to Learn – from the Financial Crisis, Too Big to Fail: How Wall Street and Washington Fought to Save the Financial System from Crisis – and Themselves, and others, many others.

Have these dissections of problems had a material effect on regulators, elected officials, or the people in the bank down the street from your residence? Answer: Nope.

Several observations:

- Technology doom works because innovations have positive and negative impacts. To make technology exciting, no one is exactly sure what the knock-on effects will be. Therefore, doom is coming along with the good parts.

- Taking a contrary point of view creates opportunities to engage with those who want to hear something different. Insecurity is a powerful sales tool.

- Sending messages about future impacts pulls clicks. Clicks are important.

Net net: The AI revolution is a trope. Never mind that after decades of researchers’ work, a revolution has arrived. Lionel Messi allegedly said, “It took me 17 years to become an overnight success.” (Mr. Messi is a highly regarded professional soccer player.)

Will the ill-defined technology kill humans? Answer: Who knows. Will humans using ill-defined technology like smart software kill humans? Answer: Absolutely. Can “anyone” or “anything” take an action to prevent AI technology from rippling through society? Answer: Nope.

Stephen E Arnold, January 20, 2025

How To: Create Junk Online Content with AI

January 16, 2025

A dinobaby produced this post. Sorry. No smart software was able to help the 80 year old this time around.

Why sign up for a Telegram SEO expert in Myanmar or the Philippines? You can do it yourself. An explainer called “AI Marketing Strategy: How to Use AI for Marketing (Examples & Tools)” provides the recipe. The upside? Well, I am not sure. The downside? More baloney with which to dupe smart software and dumb humans.

What does the free write up cover? Here’s the list of topics. Which ones whet your appetite for AI-generated baloney?

- A definition of AI marketing

- How to use AI in your strategy for cutting corners and whacking out “real” information

- The steps you have to follow to find the pot of gold

- The benefits of being really smart with smart software

- The three — count them — types of smart software marketing

- The three — count them — “best” AI marketing software (I love “best”. So credible)

- A smart software FAQ

- How to “future proof” your business with an AI marketing strategy.

Let me give you an example of the riches tucked inside this eWeek “real” news article. The write up says:

Maintain data quality

Okay, marketers are among the world’s leaders in data accuracy, thoroughness, and detail fact checking. That’s why the handful of giant outfits providing smart software explain how to keep cheese on pizza with glue and hallucinate.

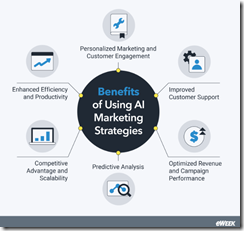

Why should one use smart software to market? That’s easy. The answer is that smart software makes it easy to produce output which may be incorrect. If you want more benefits, here’s the graphic from the write up which explains it to short-cutters who don’t want to spend time doing work the old-fashioned way:

A graphic which may or may not have been produced with smart software designed to create “McKinsey” type illustrations suitable for executives with imposter syndrome.

This graphic is followed by an explanation of the three — count them — three types of AI marketing. I am not sure the items listed are marketing, but, hey, when one is doing a deep dive one doesn’t linger too long deep in the content ocean with concepts like machine learning, natural language processing, and computer vision. (I am not joking. These are the three types of AI marketing. Who knew? Certainly not this dinobaby.)

The author, according to the definitive write up, possesses “more than 10 years of experience covering technology, software, and news.” The home base for this professional is the Philippines, which, along with Thailand and Cambodia, is one of the hotbeds for a wide range of activities, including the use of smart software to generate those SEO services publicized on Telegram.

Was the eWeek article written with the help of AI? Boy, this dinobaby doesn’t know.

Stephen E Arnold, January 16, 2025

The AI Profit and Cost Race: Drivers, Get Your Checkbooks Out

January 15, 2025

A dinobaby-crafted post. I confess. I used smart software to create the heart wrenching scene of a farmer facing a tough 2025.

Microsoft appears ready to spend $80 billion “on AI-enabled data centers” by December 31, 2025. Half of the money will go to US facilities, and the other half, other nation states. I learned this information from a US cable news outfit’s article “Microsoft Expects to Spend $80 Billion on AI-Enabled Data Centers in Fiscal 2025.” Is Microsoft tossing out numbers as part of a marketing plan to trigger the lustrous Google, or is Microsoft making clear that it is going whole hog for smart software despite the worries of investors that an AI revenue drought persists? My thought is that Microsoft likes to destabilize the McKinsey-type thinking at Google, wait for the online advertising giant to deliver another fabulous Sundar & Prabhakar Comedy Tour, and then continue plodding forward.

The write up reports:

Several top-tier technology companies are rushing to spend billions on Nvidia graphics processing units for training and running AI models. The fast spread of OpenAI’s ChatGPT assistant, which launched in late 2022, kicked off the AI race for companies to deliver their own generative AI capabilities. Having invested more than $13 billion in OpenAI, Microsoft provides cloud infrastructure to the startup and has incorporated its models into Windows, Teams and other products.

Yep, Google-centric marketing.

Thanks, You.com. Good enough.

But if Microsoft does spend $80 billion, how will the company convert those costs into a profit geyser? That’s a good question. Microsoft appears to be cooperating with discounts for its mainstream consumer software. I saw advertisements offering Windows 11 Professional for $25. Other deep discounts can be found for Office 365, Visio, and even the bread-and-butter sales pitch PowerPoint application.

Tweaking Google is one thing. Dealing with cost competition is another.

I noted the South China Morning Post’s article “Alibaba Ties Up with Lee Kai-fu’s Unicorn As China’s AI Sector Consolidates.” Tucked into that rah rah write up was this statement:

The cooperation between two of China’s top AI players comes as price wars continue in the domestic market, forcing companies to further slash prices or seek partnerships with former foes. Alibaba Cloud said on Tuesday it would reduce the fees for using its visual reasoning AI model by up to 85 per cent, the third time it had marked down the prices of its AI services in the past year. That came after TikTok parent ByteDance last month cut the price of its visual model to 0.003 yuan (US$0.0004) per thousand token uses, about 85 per cent lower than the industry average.

The message is clear. The same tactic that China’s electric vehicle manufacturers are using will be applied to smart software. The idea is that people will buy good enough products and services if the price is attractive. Bean counters intuitively know that a competitor that reduces prices and delivers an acceptable product can gain market share. The companies unable to compete on price face rising costs and may be forced to cut their prices, thus risking financial collapse.
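The arithmetic behind the quoted price cut is worth a quick sketch. The only inputs taken from the passage are the 0.003 yuan per thousand tokens and the "about 85 per cent lower" claim; the implied industry average is derived from those two numbers and is an estimate, not a reported figure. The function name is made up for illustration.

```python
def implied_average(discounted_price, discount_fraction):
    """Back out the reference price from a discounted price and the stated discount."""
    return discounted_price / (1.0 - discount_fraction)


# Figures from the quoted South China Morning Post passage.
bytedance_price = 0.003   # yuan per thousand tokens
discount = 0.85           # "about 85 per cent lower than the industry average"

# Derived estimate, only as precise as the word "about" allows.
industry_average = implied_average(bytedance_price, discount)

# Scale both prices to one million tokens (1,000 blocks of a thousand).
cost_per_million_discounted = bytedance_price * 1000
cost_per_million_average = industry_average * 1000

print(round(industry_average, 4))
print(round(cost_per_million_discounted, 2), round(cost_per_million_average, 2))
```

On those assumptions, a million tokens costs roughly 3 yuan at the cut rate versus roughly 20 yuan at the implied average. That gap is the "good enough and cheap" lever the paragraph above describes.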

For a multi-national company, the cost of Chinese smart software may be sufficiently attractive to win business. Some companies which operate under US sanctions and controls of one type or another may be faced with losing some significant markets. Examples include Brazil, India, Middle Eastern nations, and others. That means that a price war can poke holes in the financial predictions on which outfits like Microsoft are basing some business decisions.

What’s interesting is that this smart software tactic apparently operating in China fits in with other efforts to undermine some US methods of dominating the world’s financial system. I have no illusions about the maturity of the AI software. I am, however, realistic about the impact of spending significant sums with the fervent belief that a golden goose will land on the front lawn of Microsoft’s headquarters. I am okay with talking about AI in order to wind up Google. I am a bit skeptical about hosing $80 billion into data centers. These puppies gobble up power, which is going to get expensive quickly if demand continues to blast past the power generation industry’s projections. An economic downturn in 2025 will not help ameliorate the situation. Toss in regional wars and social turmoil and what does one get?

Risk. Welcome to 2025.

Stephen E Arnold, January 15, 2025

AI Search Engine from Alibaba Grows Apace

January 15, 2025

Prepared by a still-alive dinobaby.

The Deepseek red herring has been dragged across the path of US AI innovators. A flurry of technology services wrote about Deepseek’s ability to give US smart software companies a bit of an open source challenge. The hook, however, was not just the efficacy of the approach. The killer message was, “Better, faster, and cheaper.” Yep, cheaper, the concept which raises questions about certain US outfits burning cash in units of one billion dollars with every clock tick.

A number of friendly and lovable deer are eating the plants in Uncle Sam’s garden. How many of these are living in the woods looking for a market to consume? Thanks OpenAI, good enough.

Now Alibaba is coming for AI search. The Chinese company crows on PR Newswire, "Alibaba’s Accio AI Search Engine Hits 500,000 SME User Milestone." Sounds like a great solution for US businesses doing work for the government. The press release reveals:

"Alibaba International proudly announces that its artificial intelligence (AI)-powered business-to-business (B2B) search engine for product sourcing, Accio, has reached a significant milestone since its launch in November 2024, currently boasting over 500,000 small and medium-sized enterprise (SME) users. … During the peak global e-commerce sales seasons in November and December, more than 50,000 SMEs worldwide have actively used Accio to source inspirations for Black Friday and Christmas inventory stocking. User feedback shows that the search engine helped them achieve this efficiently. Accio now holds a net promoter score (NPS) exceeding 50[1], indicating a high level of customer satisfaction. On December 13, 2024, the dynamic search engine was also named ‘Product of the Day’ on Product Hunt, a site that curates new products in tech, further cementing its status as an indispensable tool for SME buyers worldwide."

Well, good for them. And, presumably, for China’s information gathering program. Founded in 1999, Alibaba Group is based in Hangzhou, Zhejiang. One can ask many questions about Alibaba, including ones related to the company’s interaction with Chinese government officials. When a couple of deer are eating one’s garden vegetables, a good question to ask is, “How many of these adorable creatures live in the woods?” One does not have to be Natty Bumppo to know that the answer is, “There are more where those came from.”

Cynthia Murrell, January 15, 2025

Agentic Workflows and the Dust Up Between Microsoft and Salesforce

January 14, 2025

Prepared by a still-alive dinobaby.

The Register, a UK online publication, does a good job of presenting newsworthy events with a touch of humor. Today I spotted a new type of information in the form of an explainer plus management analysis. Plus the lingo and organization suggest a human did all or most of the work required to crank out a very good article called “In AI Agent Push, Microsoft Re-Orgs to Create CoreAI – Platform and Tools Team.”

I want to highlight the explainer part of the article. The focus is on the notion of agentic; specifically:

agentic applications with memory, entitlements, and action space that will inherit powerful model capabilities. And we will adapt these capabilities for enhanced performance and safety across roles, business processes, and industry domains. Further, how we build, deploy, and maintain code for these AI applications is also fundamentally changing and becoming agentic.

These words are attributed to Microsoft’s top dog Satya Nadella, but they sound as if they came from one of the highly paid wordsmiths laboring for the capable Softies. Nevertheless, the idea is important. In order to achieve the agentic pinnacle, Microsoft has to reorganize. Whoever can figure out how to make agentic applications work across different vendors’ solutions will be able to make money. That’s the basic idea: Smart software is going to create a new big thing for enterprise software and probably some consumers.

The write up explains:

It’s arguably just plain old software talking to plain old software, which would be nothing new. The new angle here, though, is that it’s driven mainly by, shall we say, imaginative neural networks and models making decisions, rather than algorithms following entirely deterministic routes. Which is still software working with software. Nadella thinks building artificially intelligent agentic apps and workflows needs “a new AI-first app stack — one with new UI/UX patterns, runtimes to build with agents, orchestrate multiple agents, and a reimagined management and observability layer.”

To win the land in this new territory, Microsoft must have a Core AI team. Google and Salesforce presumably have this type of set up. Microsoft has to step up its AI efforts. The Register points out:

Nadella noted that “our internal organizational boundaries are meaningless to both our customers and to our competitors”. That’s an odd observation given Microsoft published his letter, which concludes with this observation: “Our success in this next phase will be determined by having the best AI platform, tools, and infrastructure. We have a lot of work to do and a tremendous opportunity ahead, and together, I’m looking forward to building what comes next.”

Here’s what I found interesting:

- Agentic is the next big thing in smart software. Essentially smart software that does one thing is useful. Orchestrating agents to do a complex process is the future. The software decides. Everything works well — at least, that’s the assumption.

- Microsoft, like Google, is now in a Code Yellow or Code Red mode. The company feels the heat from Salesforce. My hunch is that Microsoft knows that add-ins like Ghostwriter for Microsoft Office are more useful than Microsoft’s own Copilot for many users. If the same boiled fish appears on the enterprise menu, Microsoft is in a world of hurt from Salesforce and probably a lot of other outfits.

- The re-org parallels the disorder that surfaced at Google when it fixed up its smart software operation or tried to deal with the clash of the wizards in that estimable company. Pushing boxes around on an organization chart is honorable work, but that management method may not deliver the agentic integration some people want.

The conclusion I drew from The Register’s article is that the big AI push and the big players’ need to pop up a conceptual level in smart software is perceived as urgent. Costs? No problem. Hallucination? No problem. Hardware availability? No problem. Software? No problem. A re-organization is obvious and easy. No problem.

Stephen E Arnold, January 14, 2025

More about NAMER, the Bitext Smart Entity Technology

January 14, 2025

A dinobaby product! We used some smart software to fix up the grammar. The system mostly worked. Surprised? We were.

We spotted more information about the Madrid, Spain-based Bitext technology firm. The company posted “Integrating Bitext NAMER with LLMs” in late December 2024. At about the same time, government authorities arrested a person known as “Broken Tooth.” In 2021, an alert for this individual was posted. His “real” name is Wan Kuok-koi, and he has been in and out of trouble for a number of years. He is alleged to be part of a criminal organization and active in a number of illegal behaviors; for example, money laundering and human trafficking. The online service Irrawaddy reported that Broken Tooth is “the face of Chinese investment in Myanmar.”

Broken Tooth (né Wan Kuok-koi, born in Macau) is one example of the importance of identifying entity names and relating them to individuals and the organizations with which they are affiliated. A failure to identify entities correctly can mean the difference between resolving an alleged criminal activity and a get-out-of-jail-free card. This is the specific problem that Bitext’s NAMER system addresses. Bitext says that large language models are designed for text generation, not entity classification. Furthermore, LLMs impose cost and computational demands which can pose problems for some organizations working within tight budget constraints. Plus, processing certain data in a cloud increases privacy and security risks.

Bitext’s solution provides an alternative way to achieve fine-grained entity identification, extraction, and tagging. Bitext’s solution combines classical natural language processing solutions with large language models. Classical NLP tools, often deployable locally, complement LLMs to enhance NER performance.

NAMER excels at:

- Identifying generic names and classifying them as people, places, or organizations.

- Resolving aliases and pseudonyms.

- Differentiating similar names tied to unrelated entities.

Bitext supports over 20 languages, with additional options available on request. How does the hybrid approach function? There are two effective integration methods for Bitext NAMER with LLMs like GPT or Llama. The first is pre-processing input. This means that entities are annotated before passing the text to the LLM, ideal for connecting entities to knowledge graphs in large systems. The second is to configure the LLM to call NAMER dynamically.
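The pre-processing method can be pictured with a minimal sketch. To be clear, this is not Bitext's API: the tiny lookup table, the tag format, and the function names `annotate` and `build_prompt` are all hypothetical stand-ins for whatever NAMER actually returns. The point is only the order of operations: tag first, then hand the tagged text to the LLM.

```python
import re

# Hypothetical gazetteer standing in for an entity tagger:
# surface form -> (canonical name, entity type).
GAZETTEER = {
    "Broken Tooth": ("Wan Kuok-koi", "PERSON"),
    "Wan Kuok-koi": ("Wan Kuok-koi", "PERSON"),
    "Myanmar": ("Myanmar", "PLACE"),
}


def annotate(text):
    """Wrap each known surface form in a tag that carries its canonical name."""
    # Try longer surface forms first so a short name cannot pre-empt a longer one.
    forms = sorted(GAZETTEER, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(form) for form in forms))

    def tag(match):
        canonical, entity_type = GAZETTEER[match.group(0)]
        return f'<{entity_type} id="{canonical}">{match.group(0)}</{entity_type}>'

    return pattern.sub(tag, text)


def build_prompt(text):
    """Assemble the (hypothetical) prompt that would be sent to an LLM."""
    return "Summarize the text. Entities are pre-tagged.\n\n" + annotate(text)


print(build_prompt("Broken Tooth invested in Myanmar."))
```

Because the alias and the real name resolve to the same `id`, a downstream system could link both mentions to one knowledge graph node without asking the LLM to work that out.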

The output of the Bitext system can generate tagged entity lists and metadata for content libraries or dictionary applications. The NAMER output can integrate directly into existing controlled vocabularies, indexes, or knowledge graphs. Also, NAMER makes it possible to maintain separate files of entities for on-demand access by analysts, investigators, or other text analytics software.

By grouping name variants, Bitext NAMER streamlines search queries, enhancing document retrieval and linking entities to knowledge graphs. This creates a tailored “semantic layer” that enriches organizational systems with precision and efficiency.
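Grouping name variants for search can be sketched the same way. The alias list below is illustrative only: "Wan Kuok Koi" is an invented spelling variant, and nothing here reflects how NAMER stores or exposes its groupings.

```python
from collections import defaultdict

# Illustrative (variant, canonical name) pairs; not Bitext output.
ALIASES = [
    ("Wan Kuok-koi", "Wan Kuok-koi"),
    ("Broken Tooth", "Wan Kuok-koi"),
    ("Wan Kuok Koi", "Wan Kuok-koi"),  # hypothetical spelling variant
]


def group_variants(pairs):
    """Collect every variant under its canonical name."""
    groups = defaultdict(set)
    for variant, canonical in pairs:
        groups[canonical].add(variant)
    return groups


def expand_query(term, groups):
    """Return all known variants for a search term, or the term itself if unknown."""
    for canonical, variants in groups.items():
        if term == canonical or term in variants:
            return sorted(variants)
    return [term]


groups = group_variants(ALIASES)
print(expand_query("Broken Tooth", groups))
```

A search for the nickname then retrieves documents that use the legal name or a variant spelling, which is the retrieval benefit the paragraph above describes.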

For more information about the unique NAMER system, contact Bitext via the firm’s Web site at www.bitext.com.

Stephen E Arnold, January 14, 2025

Some AI Wisdom: Is There a T Shirt?

January 14, 2025

Prepared by a still-alive dinobaby.

I was catching up with newsfeeds and busy filtering the human output from the smart software spam-arator. I spotted “The Serious Science of Trolling LLMs,” published in the summer of 2024. The article explains that value can be derived from testing large language models like ChatGPT, Gemini, and others with prompts to force the software to generate something really stupid, off base, incorrect, or goofy. I zipped through the write up and found it interesting. Then I came upon this passage:

the LLM business is to some extent predicated on deception; we are not supposed to know where the magic ends and where cheap tricks begin. The vendors’ hope is that with time, we will reach full human-LLM parity; and until then, it’s OK to fudge it a bit. From this perspective, the viral examples that make it patently clear that the models don’t reason like humans are not just PR annoyances; they are a threat to product strategy.

Several observations:

- Progress from my point of view with smart software seems to have slowed. The reason may be that free and low cost services cannot afford to provide the functionality they did before someone figured out the cost per query. The bean counters spoke and “quality” went out the window.

- The gap between what the marketers say and what the systems do is getting wider. Sorry, AI wizards, the systems typically fail to produce an output satisfactory for my purposes on the first try. Multiple prompts are required. Again a cost cutting move in my opinion.

- Made up information or dead wrong information is becoming more evident. My hunch is that the consequence of ingesting content produced by AI is degrading the value of the models originally trained on human generated content. I think this is called garbage in — garbage out.

Net net: Which of the deep pocket people will be the first to step back from smart software built upon systems that consume billions of dollars the way my French bulldog eats doggie treats? The Chinese system Deepseek’s marketing essentially says, “Yo, we built this LLM at a fraction of the cost of the spendthrifts at Google, Microsoft, and OpenAI.” Are the Chinese AI wizards dragging a red herring around the AI forest?

To go back to the Lcamtuf essay, “it’s OK to fudge it a bit.” Nope, it is mandatory to fudge a lot.

Stephen E Arnold, January 14, 2025

AI Defined in an Arts and Crafts Setting No Less

January 13, 2025

Prepared by a still-alive dinobaby.

I was surprised to learn that a design online service (what I call arts and crafts) tackled a topic most online publications skip. The article “What Does AI Really Mean?” tries to define AI or smart software. I remember a somewhat confused and erratic college professor trying to define happiness. Wow, that was a wild and crazy lecture. (I think the person’s name was Dr. Chapman. I tip my ball cap with the SS8 logo on it to him.) The author of this essay is a Googler, so it must be outstanding, furthering the notion of quantum supremacy at Google.

What is AI? The write up says:

I hope this helped you better understand what those terms mean and the processes which encompass the term “AI”.

Okay, “helped you understand better.” What does the essay do to help me understand better? Hang on to your SS8 ball cap. The author briefly defines these buzzwords:

- Data as coordinates

- Querying per approximation

- Language models both large and small

- Fine “Tunning” (Isn’t that supposed to be tuning?)

- Enhancing context with information, including grounded generation

- Embedding.

For me, a list of buzzwords is not a definition. (At least the hapless Dr. Chapman tried to provide concrete examples and references to his own experience with happiness, which as I recall eluded him.)

The “definition” jumps to a section called “Let’s build.” The author concludes the essay with:

I hope this helped you better understand what those terms mean and the processes which encompass the term “AI”. This merely scratches the surface of complexity, though. We still need to talk about AI Agents and how all these approaches intertwine to create richer experiences. Perhaps we can do that in a later article — let me know in the comments if you’d like that!

That’s it. The Googler has, from his point of view, defined AI. As Holden Caulfield in The Catcher in the Rye said:

“I can’t explain what I mean. And even if I could, I’m not sure I’d feel like it.”

Bingo.

Stephen E Arnold, January 13, 2025