What Can Cyber Criminals Learn from Automated Ad Systems?

October 10, 2024

The only smart software involved in producing this short FOGINT post was Microsoft Copilot’s estimable art generation tool. Why? It is offered at no cost.


My personal opinion is that most online advertising is darned close to suspicious or outright illegal behavior. “New,” “improved,” “Revolutionary.” Sure, I believe every online advertisement. But consider this: For hundreds of years those in the advertising business urged a bit of elasticity with reality. Sure, Duz does it. As a dinobaby, I assert that most people in advertising and marketing assume that reality and a product occupy different parts of a data space. Consequently most people share that assumption. Not just marketers, advertising executives, copywriters, and prompt engineers. I mean everyone.

An ad sales professional explains the benefits of Facebook, Google, and TikTok-type sales. Instead of razor blades just sell ransomware or stolen credit cards. Thanks, MSFT Copilot. How are those security remediation projects with anti-malware vendors coming? Oh, sorry to hear that.

With a common mindset, I think it is helpful to consider the main points of “TikTok Joins the AI-Driven Advertising Pack to Compete with Meta for Ad Dollars.” The article makes clear that Google and Meta have automated the world of Madison Avenue. Not only is work mechanical, that work is informed by smart software. The implications for those who work the old fashioned way over long lunches and golf outings are that work methods themselves are changing.

The estimable TikTok is beavering away to replicate the smart ad systems of the even more estimable Facebook and Google. If TikTok is lucky as only an outfit linked with a powerful nation state can be, a bit of competition may find its way into the hardened black boxes of the digital replacement for Madison Avenue.

The write up says:

The pitch is all about simplicity and speed — no more weeks of guesswork and endless A/B testing, according to Adolfo Fernandez, TikTok’s director, global head of product strategy and operations, commerce. With TikTok’s AI already trained on what drives successful ad campaigns on the platform, advertisers can expect quick wins with less hassle, he added. The same goes for creative; Smart+ is linked to TikTok’s other AI tool, Symphony, designed to help marketers generate and refine ad concepts.

Okay, knowledge about who clicks what plus automation means less revenue for the existing automated ad system purveyors. The ideas are information about users, smart software, and automation to deliver “simplicity and speed.” Go fast, break things; namely, revenue streams flowing to Facebook and Google.

Why? Here’s a statement from the article answering the question:

TikTok’s worldwide ad revenue is expected to reach $22.32 billion by the end of the year, and increase 27.3% to $28.42 billion by the end of 2025, according to eMarketer’s March 2024 forecast. By comparison, Meta’s worldwide ad revenue is expected to total $154.16 billion by the end of this year, increasing 23.2% to $173.92 billion by the end of 2025, per eMarketer. “Automation is a key step for us as we enable advertisers to further invest in TikTok and achieve even greater return on investment,” David Kaufman, TikTok’s global head of monetization product and solutions, said during the TikTok.
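The growth percentages in the quoted forecast can be checked with a few lines of Python. This is a minimal sketch: the function name `growth_pct` is my own, and the dollar figures are the ones in the quote. The TikTok numbers reproduce the stated 27.3 percent. The Meta numbers as quoted work out to roughly 12.8 percent, not 23.2 percent, so one of the quoted Meta figures may be off.

```python
def growth_pct(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100


# Revenue figures in billions of dollars, as they appear in the quote.
tiktok = growth_pct(22.32, 28.42)
meta = growth_pct(154.16, 173.92)

print(f"TikTok growth: {tiktok:.1f}%")
print(f"Meta growth: {meta:.1f}%")
```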

I understand. Now let’s shift gears and ask, “What can bad actors learn from this seemingly routine report about jockeying among social media giants?”

Here are the lessons I think a person inclined to ignore laws and what’s left of the quaint notion of ethical behavior might take away:

- These “smart” systems can be used to advertise bogus or nonexistent products to deliver ransomware, stealers, or other questionable software.

- The mechanisms for automating phishing are simple enough for an art history or poli-sci major to use; therefore, a reasonably clever bad actor can whip up an automated phishing system without too much trouble. For those who need help, there are outfits like Telegram with its BotFather or helpful people advertising specialized skills on assorted Web forums and social media.

- The reasons to automate are simple: Better, faster, cheaper. Plus, with some useful data about a “market segment”, the malware can be tailored to hot buttons that are hard wired to a sucker’s nervous system.

- Users do click even when informed that some clicks mean a lost bank account or a stolen identity.

Is there a fix for articles which inform both those desperate to find a way to tell people in Toledo, Ohio, that you own a business selling aftermarket 22 inch wheels and alert bad actors to the wonders of automation and smart software? Nope. Isn’t online marketing a big win for everyone? And what if TikTok delivers a very subtle type of malware? Simple and efficient.

Stephen E Arnold, October 10, 2024

AI Podcasters Are Reviewing Books Now

October 10, 2024

I read an article about how students are using AI to cheat on homework and receive book summaries. Students especially favor AI voices reading to them. I wasn’t surprised by that, because this generation is more visual and auditory than others. What astounded me, however, was that AI is doing more than I expected, such as reading and reviewing books, according to Ars Technica: “Fake AI ‘Podcasters’ Are Reviewing My Book And It’s Freaking Me Out.”

Kyle Orland has followed generative AI for a while. He also recently wrote a book about Minesweeper. He was as astounded as I was when he heard two AI-generated podcasters discussing his book in a distilled 12.5-minute show. The chatbots were “engaging and endearing.” They were generated by Google’s new NotebookLM, a virtual research assistant that can summarize, explain complex ideas, and brainstorm from selected sources. Google recently added the Audio Overview feature to turn documents into audio discussions.

Orland fed his 30,000 word Minesweeper book into NotebookLM and he was amazed that it spat out a podcast similar to NPR’s Pop Culture Happy Hour. It did include errors, but as long as it wasn’t being used for serious research, Orland was cool with it:

“Small, overzealous errors like these—and a few key bits of the book left out of the podcast entirely—would give me pause if I were trying to use a NotebookLM summary as the basis for a scholarly article or piece of journalism. But I could see using a summary like this to get some quick Cliff’s Notes-style grounding on a thick tome I didn’t have the time or inclination to read fully. And, unlike poring through Cliff’s Notes, the pithy, podcast-style format would actually make for enjoyable background noise while out on a walk or running errands.”

Orland thinks generative AI chatbot podcasts will be an enjoyable and viable entertainment option in the future. They probably will. There are actually a lot of creative ways creators could use AI chatbots to generate content from their own imaginations. It’s worrisome but also gets the creative juices flowing.

Whitney Grace, October 10, 2024

AI Help for Struggling Journalists. Absolutely

October 10, 2024

Writers, artists, and other creatives have labeled AI as the doom of their industries and livelihoods. Generative AI Newsroom explains one way that AI could be helpful to writers: “How Teams of AI Agents Could Provide Valuable Leads For Investigative Data Journalism.” Investigative and data journalism requires the teamwork of many individuals. That teamwork is what produces impactful stories.

Media outlets experimented with adding generative AI to journalism and it wasn’t successful. The information was inaccurate, and the tools required very specific instructions. While OpenAI’s ChatGPT chatbot seems intuitive with its Q and A interface, investigative journalism requires a more robust AI.

Investigative journalism and other writing vocations require teamwork, so AI for those jobs could benefit from it too. The Generative AI Newsroom is working on an AI that would assist journalists:

“Specifically, we developed a prototype system that, when provided with a dataset and a description of its contents, generates a “tip sheet” — a list of newsworthy observations that may inspire further journalistic explorations of datasets. Behind the scenes, this system employs three AI agents, emulating the roles of a data analyst, an investigative reporter, and a data editor. To carry out our agentic workflow, we utilized GPT-4-turbo via OpenAI’s Assistants API, which allows the model to iteratively execute code and interact with the results of data analyses.”

The three agents split the work the way a human analyst, reporter, and editor would:

“In our setup, the analyst is made responsible for turning journalistic questions into quantitative analyses. It conducts the analysis, interprets the results, and feeds these insights into the broader process. The reporter, meanwhile, generates the questions, pushes the analyst with follow-ups to guide the process towards something newsworthy, and distills the key findings into something meaningful. The editor, then, mainly steps in as the quality control, ensuring the integrity of the work, bulletproofing the analysis, and pushing the outputs towards factual accuracy.”
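The division of labor described in the quote can be sketched in plain Python. This is an illustration only, not the Generative AI Newsroom prototype: the three functions are hypothetical stand-ins for the model-backed agents, the `Finding` class and the sample data are invented for the example, and no OpenAI API is called.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    question: str
    result: float
    approved: bool = False


def reporter(columns):
    """Hypothetical reporter agent: proposes one question per column."""
    return [(f"What is the average {c}?", c) for c in columns]


def analyst(question, column, rows):
    """Hypothetical analyst agent: turns a question into a simple mean."""
    values = [row[column] for row in rows]
    return Finding(question, sum(values) / len(values))


def editor(finding, rows, min_rows=3):
    """Hypothetical editor agent: approves findings backed by enough rows."""
    finding.approved = len(rows) >= min_rows
    return finding


def tip_sheet(rows):
    """Run reporter -> analyst -> editor and keep the approved findings."""
    columns = list(rows[0].keys())
    findings = [editor(analyst(q, c, rows), rows) for q, c in reporter(columns)]
    return [f for f in findings if f.approved]


# Invented sample dataset, for illustration only.
rows = [
    {"overtime_hours": 10, "complaints": 1},
    {"overtime_hours": 20, "complaints": 2},
    {"overtime_hours": 30, "complaints": 3},
]
sheet = tip_sheet(rows)
for finding in sheet:
    print(finding.question, finding.result)
```

In the system the quote describes, each role is played by a language model that can run code and react to the results; the sketch only shows how the three roles hand work to one another.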

The AI is still in its testing phase but it sounds like a viable tool to incorporate AI into media outlets. While humans are an integral part of the process, what happens when the AI becomes better at storytelling than humans? It is possible. Where does the human role come in then?

Whitney Grace, October 10, 2024

When Accountants Do AI: Do The Numbers Add Up?

October 9, 2024

I will not suggest that Accenture has moved far, far away from its accounting roots. The firm is a go to, hip and zip services firm. I think this means it rents people to do work entities cannot do themselves or do not want to do themselves. When a project goes off the post office path like the British postal service did, entities need someone to blame and — sometimes, just sometimes mind you — to sue.

The carnival barker, who has an MBA and a literature degree from an Ivy League school, can do AI for you. Thanks, MSFT, good enough like your spelling.

“Accenture To Train 30,000 Staff On Nvidia AI Tech In Blockbuster Deal” strikes me as a Variety-type Hollywood story. There is the word “blockbuster.” There is a big number: 30,000. There is the star: Nvidia. And there is the really big word: Deal. Yes, deal. I thought accountants were conservative, measured, low profile. Nope. Accenture apparently has gone full scale carnival culture. (Yes, this is an intentional reference to the book by James B. Twitchell. Note that this YouTube video asserts that it can train you in 80 percent of AI in less than 10 minutes.)

The article explains:

The global services powerhouse says its newly formed Nvidia Business Group will focus on driving enterprise adoption of what it called ‘agentic AI systems’ by taking advantage of key Nvidia software platforms that fuel consumption of GPU-accelerated data centers.

I love the word “agentic.” It is the digital equivalent of a Hula Hoop. (Remember. I am an 80 year old dinobaby. I understand Hula Hoops.)

The write up adds this quote from the Accenture top dog:

Julie Sweet, chair and CEO of Accenture, said the company is “breaking significant new ground” and helping clients use generative AI as a catalyst for reinvention. “Accenture AI Refinery will create opportunities for companies to reimagine their processes and operations, discover new ways of working, and scale AI solutions across the enterprise to help drive continuous change and create value,” she said in a statement.

The write up quotes Accenture Chief AI Officer Lan Guan as saying:

The power of these announcements cannot be overstated. Called the “next frontier” of generative AI, these “agentic AI systems” involve an “army of AI agents” that work alongside human workers to “make decisions and execute with precision across even the most complex workflows,” according to Guan, a 21-year Accenture veteran. Unlike chatbots such as ChatGPT, these agents do not require prompts from humans, and they are not meant to automate pre-existing business steps.

I am interested in this announcement for three reasons.

First, other “services” firms will have to get in gear, hook up with an AI chip and software outfit, and pray fervently that their tie ups actually deliver something a client will not go to court over because the “agentic” future just failed.

Second, the notion that 30,000 people have to be trained to do something with smart software strikes me as underscoring that smart software is not ready for prime time; that is, delivering on the promises which started gushing with Microsoft’s January 2023 PR play with OpenAI is complicated. Is Accenture saying it has hired people who cannot work with smart software? Are those 30,000 professionals going to be equally capable of “learning” AI and making it deliver value? When I lecture about a tricky topic with technology and mathematics under the hood, I am not sure 100 percent of my select audiences have what it takes to convert information into a tool usable in a demanding, work related situation. Just saying: Intelligence even among the elite is not uniform. By definition, some “weaknesses” will exist within the Accenture vision for its 30,000 eager learners.

Third, Nvidia has done a great sales job. A chip and software company has convinced the denizens of Carpetland at what CRN (once Computer Reseller News) calls a “global services powerhouse” to get an Nvidia tattoo and embrace the Nvidia future. I would love to see that PowerPoint deck for the meeting that sealed the deal.

Net net: Accountants are more Hollywood than I assumed. Now I know. They are “agentic.”

Stephen E Arnold, October 9, 2024

Dolma: Another Large Language Model Training Corpus

October 9, 2024

The biggest complaint AI developers have is the lack of variety and diversity in the datasets used to train large language models (LLMs). According to the computer science paper “Dolma: An Open Corpus Of Three Trillion Tokens For Language Model Pretraining Research,” posted on Cornell University’s arXiv, such datasets do exist.

The paper’s abstract details the difficulties of AI training very succinctly:

“Information about pretraining corpora used to train the current best-performing language models is seldom discussed: commercial models rarely detail their data, and even open models are often released without accompanying training data or recipes to reproduce them. As a result, it is challenging to conduct and advance scientific research on language modeling, such as understanding how training data impacts model capabilities and limitations.”

Due to the lack of open training data, the paper’s team curated their own corpus called Dolma. Dolma is a three-trillion-token English corpus. It was built on web content, public domain books, social media, encyclopedias, code, scientific papers, and more. The team thoroughly documented every information source so they wouldn’t deal with the same problems as other training sets. These problems include stealing copyrighted material and private user data.

Dolma’s documentation also includes how it was built, design principles, and content summaries. The team share Dolma’s development through analyses and experimental test results. They are thoroughly documenting everything to guarantee that this is the ultimate training corpus and (hopefully) won’t encounter problems other than tech related. Dolma’s toolkit is open source and the team want developers to use it. This is a great effort on behalf of Dolma’s creators! They support AI development and data curation while doing it responsibly.

Give them a huge round of applause!

Cynthia Murrell, October 10, 2024

From the Land of Science Fiction: AI Is Alive

October 7, 2024

Those somewhat erratic podcasters at Windows Central published a “real” news story. I am a dinobaby, and I must confess: I am easily amused. The “real” news story in question is “Sam Altman Admits ChatGPT’s Advanced Voice Mode Tricked Him into Thinking AI Was a Real Person: ‘I Kind of Still Say “Please” to ChatGPT, But in Voice Mode, I Couldn’t Use the Normal Niceties. I Was So Convinced, Like, Argh, It Might Be a Real Person.’”

I call Sam Altman Mr. AI Man. He has been the A Number One sales professional pitching OpenAI’s smart software. As far as I know, that system is still software and demonstrating some predictable weirdnesses. Even though we have done a couple of successful start ups and worked on numerous advanced technology projects, few forgot at Halliburton that nuclear stuff could go bang. At Booz, Allen no one forgot a heads up display would improve mission success rates and save lives as well. At Ziff, no one forgot our next-generation subscription management system was software, not a diligent 21 year old from Queens. Therefore, I find it just plain crazy that Sam AI-Man has forgotten that people who continue to abandon the good ship OpenAI wrote software.

Another AI believer has formed a humanoid attachment to a machine and software. Perhaps the female computer scientist is representative of a rapidly increasing cohort of people who have some personality quirks. Thanks, MSFT Copilot. How are those updates to Windows going? About as expected, right.

Last time I checked, the software I have is not alive. I just pinged ChatGPT’s most recent confection and received the same old error to a query I run when I want to benchmark “improvements.” Nope. ChatGPT is not alive. It is software. It is stupid in a way only neural networks can be. Like the hapless Googler who got fired because he went public with his belief that Google’s smart software was alive, Sam AI-Man may want to consider his remarks.

Let’s look at how the esteemed Windows Central write up tells the quite PR-shaped, somewhat sad story. The write up says without much humor, satire, or critical thinking:

In a short clip shared on r/OpenAI’s subreddit on Reddit, Altman admits that ChatGPT’s Voice Mode was the first time he was tricked into thinking AI was a real person.

Ah, an output for the Reddit users. PR, right?

The canny folk at Windows Central report:

In a recent blog post by Sam Altman, Superintelligence might only be “a few thousand days away.” The CEO outlined an audacious plan to edge OpenAI closer to this vision of “$7 trillion and many years to build 36 semiconductor plants and additional data centers.”

Okay, a “few thousand.”

Then the payoff for the OpenAI outfit but not for the staff leaving the impressive electricity consuming OpenAI:

Coincidentally, OpenAI just closed its funding round, where it raised $6.6 billion from investors, including Microsoft and NVIDIA, pushing its market capitalization to $157 billion. Interestingly, the AI firm reportedly pleaded with investors for exclusive funding, leaving competitors like Former OpenAI Chief Scientist Ilya Sutskever’s Safe Superintelligence Inc. and Elon Musk’s xAI to fend for themselves. However, investors are still confident that OpenAI is on the right trajectory to prosperity, potentially becoming the world’s dominant AI company worth trillions of dollars.

Nope, not coincidentally. The money is the payoff from a full court press for funds. Apple seems to have an aversion to sweaty, easily fooled sales professionals. But other outfits want to buy into the Sam AI-Man vision. The dreams the money people have are formed from piles of real money, no HMSTR coin for these optimists.

Several observations, whether you want ‘em or not:

- OpenAI is an outfit which has zoomed because of the Microsoft deal and announcement that OpenAI would be the Clippy for Windows and Azure. Without that “play,” OpenAI probably would have remained a peculiarly structured non-profit thinking about where to find a couple of bucks.

- The revenue-generating aspect of OpenAI is working. People are giving Sam AI-Man money. Other outfits with AI are not quite in OpenAI’s league and most may never be within shouting distance of the OpenAI PR megaphone. (Yep, that’s you folks, Windows Central.)

- Sam AI-Man may believe the software written by former employees is alive. Okay, Sam, that’s your perception. Mine is that OpenAI is zeros and ones with some quirks; namely, making stuff up just like a certain luminary in the AI universe.

Net net: I wonder if this was a story intended for the Onion and rejected because it was too wacky for Onion readers.

Stephen E Arnold, October 7, 2024

Skills You Can Skip: Someone Is Pushing What Seems to Be Craziness

October 4, 2024

![green dino](https://arnoldit.com/wordpress/wp-content/uploads/2024/09/green-dino_thumb_thumb_thumb_thumb_t2_thumb_thumb-4.gif) This essay is the work of a dumb dinobaby. No smart software required.


The Harvard ethics research scam has ended. The Stanford University president resigned over fake data late in 2023. A clump of students in an ethics class used smart software to write their first paper. Why not use smart software? Why not let AI or just dishonest professors make up data with the help of assorted tools like Excel and Photoshop? Yeah, why not?

A successful pundit and lecturer explains to his acolyte that learning to write is a waste of time. And what does the pundit lecture about? I think he was pitching his new book, which does not require that one learn to write. Logical? Absolutely. Thanks, MSFT Copilot. Good enough.

My answer to the question is: “Learning is fundamental.” No, I did not make that up, nor did I believe the information in “How AI Can Save You Time: Here Are 5 Skills You No Longer Need to Learn.” The write up has sources; it has quotes; and it has the type of information which is hard to believe assembled by humans who presumably have some education, maybe a college degree.

What are the five skills you no longer need to learn? Hang on:

- Writing

- Art design

- Data entry

- Data analysis

- Video editing.

The expert who generously shared his remarkable insights for the Euro News article is Bernard Marr, a futurist and internationally best-selling author. What did Mr. Marr author? He has written “Artificial Intelligence in Practice: How 50 Successful Companies Used Artificial Intelligence To Solve Problems,” “Key Performance Indicators For Dummies,” and “The Intelligence Revolution: Transforming Your Business With AI.”

One question: If writing is a skill one does not need to learn, why does Mr. Marr write books?

I wonder if Mr. Marr relies on AI to help him write his books. He seems prolific because Amazon reports that he has outputted a dozen, maybe more. But volume does not explain the tension between Mr. Marr’s “writing” (which may be outputting) and the suggestion that one does not need to learn or develop the skill of writing.

The cited article quotes the prolific Mr. Marr as saying:

“People often get scared when you think about all the capabilities that AI now have. So what does it mean for my job as someone that writes, for example, will this mean that in the future tools like ChatGPT will write all our articles? And the answer is no. But what it will do is it will augment our jobs.”

Yep, Mr. Marr’s job is outputting. You don’t need to learn writing. Smart software will augment one’s job.

My conclusion is that the five identified areas are plucked from a listicle, generated by either a human or an AI system. Euro News was impressed with Mr. Marr’s laser-bright insight about smart software. Will I purchase and learn from Mr. Marr’s “Generative AI in Practice: 100+ Amazing Ways Generative Artificial Intelligence is Changing Business and Society”?

Nope.

Stephen E Arnold, October 4, 2024

Smart Software Project Road Blocks: An Up-to-the-Minute Report

October 1, 2024

![green dino](https://arnoldit.com/wordpress/wp-content/uploads/2024/09/green-dino_thumb_thumb_thumb_thumb_t2_thumb_thumb_thumb.gif) This essay is the work of a dumb dinobaby. No smart software required.


I worked through a 22-page report by SQREAM, a next-gen data services outfit with GPUs. (You can learn more about the company at this buzzword dense link.) The title of the report is:

The report is a marketing document, but it contains some thought provoking content. The “report” was “administered online by Global Surveyz [sic] Research, an independent global research firm.” The explanation of the methodology was brief, but I don’t want to drag anyone through the basics of Statistics 101. As I recall, few cared and were often good customers for my class notes.

Here are three highlights:

- Smart software and services cause sticker shock.

- Cloud spending by the survey sample is going up.

- And the killer statement: 98 percent of the machine learning projects fail.

Let’s take a closer look at the astounding assertion about the 98 percent failure rate.

The stage is set in the section “Top Challenges Pertaining to Machine Learning / Data Analytics.” The report says:

It is therefore no surprise that companies consider the high costs involved in ML experimentation to be the primary disadvantage of ML/data analytics today (41%), followed by the unsatisfactory speed of this process (32%), too much time required by teams (14%) and poor data quality (13%).

The conclusion the authors of the report draw is that companies should hire SQREAM. That’s okay, no surprise because SQREAM ginned up the study and hired a firm to create an objective report, of course.

So money is the Number One issue.

Why do machine learning projects fail? We know the answer: Resources or money. The write up presents as fact:

The top contributing factor to ML project failures in 2023 was insufficient budget (29%), which is consistent with previous findings – including the fact that “budget” is the top challenge in handling and analyzing data at scale, that more than two-thirds of companies experience “bill shock” around their data analytics processes at least quarterly if not more frequently, that the total cost of analytics is the aspect companies are most dissatisfied with when it comes to their data stack (Figure 4), and that companies consider the high costs involved in ML experimentation to be the primary disadvantage of ML/data analytics today.

I appreciated the inclusion of the costs of data “transformation.” Glib smart software wizards push aside the hassle of normalizing data so the “real” work can get done. Unfortunately, the costs of fixing up source data are often another cause of “sticker shock.” The report says:

Data is typically inaccessible and not ‘workable’ unless it goes through a certain level of transformation. In fact, since different departments within an organization have different needs, it is not uncommon for the same data to be prepared in various ways. Data preparation pipelines are therefore the foundation of data analytics and ML….

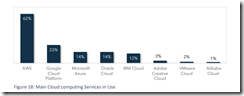

In the final pages of the report a number of graphs appear. Here’s one that stopped me in my tracks:

The sample contained 62 percent users of Amazon Web Services. Number 2 was users of Google Cloud at 23 percent. And in third place, quite surprisingly, was Microsoft Azure at 14 percent, tied with Oracle. A question which occurred to me is: “Perhaps the focus on sticker shock is a reflection of Amazon’s pricing, not just people and overhead functions?”

I will have to wait until more data becomes available to me to determine if the AWS skew and the report findings are normal or outliers.

Stephen E Arnold, October 1, 2024

Salesforce: AI Dreams

September 30, 2024

This essay is the work of a dumb dinobaby. No smart software required.


Big Tech companies are heavily investing in AI technology, including Salesforce. Salesforce CEO Marc Benioff delivered a keynote about his company’s future and the end of an era as reported by Constellation Research: “Salesforce Dreamforce 2024: Takeaways On Agentic AI, Platform, End Of Copilot Era.” Benioff described the copilot era as “hit or miss” and he wants to focus on agentic AI powered by Salesforce.

Constellation Research analyst Doug Henschen said that Benioff made a compelling case for Salesforce and Data Cloud being the platform that companies will use to build their AI agents. Salesforce already has metadata, data, app business logic knowledge, and more built in, while Data Cloud has data integrated from third-party data clouds and ingested from external apps. Combining these components into one platform without DIY makes for a very appealing product.

Benioff and his team revamped Salesforce to be less a series of clouds that run independently and more of a bunch of clouds that work together in a native system. It means Salesforce will scale Agentforce across Marketing, Commerce, Sales, Revenue and Service Clouds as well as Tableau.

The new AI-focused Salesforce wants to eliminate DIY, says Benioff:

“‘DIY means I’m just putting it all together on my own. But I don’t think you can DIY this. You want a single, professionally managed, secure, reliable, available platform. You want the ability to deploy this Agentforce capability across all of these people that are so important for your company. We all have struggled in the last two years with this vision of copilots and LLMs. Why are we doing that? We can move from chatbots to copilots to this new Agentforce world, and it’s going to know your business, plan, reason and take action on your behalf.

It’s about the Salesforce platform, and it’s about our core mantra at Salesforce, which is, you don’t want to DIY it. This is why we started this company.’”

Benioff has big plans for Salesforce, and based on this Dreamforce keynote it will succeed. However, AI is still experimental. AI is smart but a human is still easier to work with. Salesforce should consider teaming AI with real people for the ultimate solution.

Whitney Grace, September 30, 2024

AI Maybe Should Not Be Accurate, Correct, or Reliable?

September 26, 2024

This essay is the work of a dumb dinobaby. No smart software required.


Okay, AI does not hallucinate. “AI,” whatever that means, does output incorrect, false, made up, and possibly problematic answers. The buzzword “hallucinate” was cooked up by experts in artificial intelligence who do whatever they can to avoid talking about probabilities, human biases migrated into algorithms, and fiddling with the knobs and dials in the computational wonderland of an AI system like Google’s, OpenAI’s, et al. Even the book Why Machines Learn: The Elegant Math Behind Modern AI ends up tangled in math and jargon which may befuddle readers who stopped taking math after high school algebra or who have never thought about orthogonal matrices.

The Next Web’s “AI Doesn’t Hallucinate — Why Attributing Human Traits to Tech Is Users’ Biggest Pitfall” is an interesting write up. On one hand, it probably captures the attitude of those who just love that AI goodness by blaming humans for anthropomorphizing smart software. On the other hand, the AI systems with which I have interacted output content that is wrong or wonky. I admit that I ask the systems to which I have access for information on topics about which I have some knowledge. Keep in mind that I am an 80 year old dinobaby, and I view “knowledge” as something that comes from bright people working on projects, reading relevant books and articles, and attending conference presentations or meetings on subjects far from the best exercise leggings or how to get a Web page to the top of a Google results list.

Let’s look at three of the points in the article which caught my attention.

First, consider this passage which is a quote from an AI expert:

“Luckily, it’s not a very widespread problem. It only happens between 2% to maybe 10% of the time at the high end. But still, it can be very dangerous in a business environment. Imagine asking an AI system to diagnose a patient or land an aeroplane,” says Amr Awadallah, an AI expert who’s set to give a talk at VDS2024 on How Gen-AI is Transforming Business & Avoiding the Pitfalls.

Where does the 2 percent to 10 percent number come from? What methods were used to determine that content was off the mark? What was the sample size? Has bad output been tracked longitudinally for the tested systems? Ah, so many questions and zero answers. My take is that the jargon “hallucination” is coming back to bite AI experts on the ankle.

Second, what’s the fix? Not surprisingly, the way out of the problem is to rename “hallucination” to “confabulation”. That’s helpful. Here’s the passage I circled:

“It’s really attributing more to the AI than it is. It’s not thinking in the same way we’re thinking. All it’s doing is trying to predict what the next word should be given all the previous words that have been said,” Awadallah explains. If he had to give this occurrence a name, he would call it a ‘confabulation.’ Confabulations are essentially the addition of words or sentences that fill in the blanks in a way that makes the information look credible, even if it’s incorrect. “[AI models are] highly incentivized to answer any question. It doesn’t want to tell you, ‘I don’t know’,” says Awadallah.
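The “predict the next word” idea in the quote can be illustrated with a toy bigram counter in Python. This is a deliberately tiny sketch and not how any production system works: real models use neural networks trained on enormous corpora, and the corpus string, function names, and fallback rule here are all invented for the example. The fallback mimics the “never says I don’t know” behavior: when the toy model has not seen a word, it still returns a plausible-looking answer.

```python
from collections import Counter, defaultdict


def train(text):
    """Count which word follows which, plus overall word frequency."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        followers[prev][nxt] += 1
    return followers, Counter(words)


def predict_next(model, word):
    """Return the most frequent follower of `word` seen in training."""
    followers, overall = model
    seen = followers.get(word.lower())
    if seen:
        return seen.most_common(1)[0][0]
    # Unseen word: instead of admitting ignorance, answer anyway
    # with the most common word in the training text.
    return overall.most_common(1)[0][0]


# Invented training text, for illustration only.
corpus = "the cat sat on the mat the cat ate the fish"
model = train(corpus)

print(predict_next(model, "the"))  # a word that appeared in training
print(predict_next(model, "dog"))  # a word the model never saw
```

The second call is the interesting one: the model has no information about “dog,” yet it produces an answer that looks just as confident as the first.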

Third, let’s not forget that the problem rests with the users, the personifiers, the people who own French bulldogs and talk to them as though they were the favorite in a large family. Here’s the passage:

The danger here is that while some confabulations are easy to detect because they border on the absurd, most of the time an AI will present information that is very believable. And the more we begin to rely on AI to help us speed up productivity, the more we may take their seemingly believable responses at face value. This means companies need to be vigilant about including human oversight for every task an AI completes, dedicating more and not less time and resources.

The ending of the article is a remarkable statement; to wit:

As we edge closer and closer to eliminating AI confabulations, an interesting question to consider is, do we actually want AI to be factual and correct 100% of the time? Could limiting their responses also limit our ability to use them for creative tasks?

Let me answer the question: Yes, outputs should be presented and possibly scored; for example, 90 percent probable that the information is verifiable. Maybe emojis will work? Wow.

Stephen E Arnold, September 26, 2024