AI in Action: Price Fixing Play

March 18, 2024

This essay is the work of a dumb dinobaby. No smart software required.

If a tree falls in a forest and no one is there to hear it, does it make a sound? The obvious answer is yes, but the philosophers ask how that can be possible if there wasn’t anyone there to witness the event. The same argument can be made that price fixing isn’t illegal if it’s done by an AI algorithm. Smart people know it is a straw man fallacy and so does the Federal Trade Commission: “Price Fixing By Algorithm Is Still Price Fixing.”

The FTC and the Department of Justice agree that if an action is illegal for a human then it is illegal for an algorithm too. The official nomenclature is antitrust compliance. Both departments want to protect consumers against algorithmic collusion, particularly in the housing market. They filed a joint legal brief that stresses the importance of a fair, competitive market. The brief stated that algorithms can’t be used to evade illegal price fixing agreements and it is still unlawful to share price fixing information even if the conspirators retain pricing discretion or cheat on the agreement.

Protecting consumers from unfair pricing practices is extremely important as inflation has soared. Rent has increased by 20% since 2020, especially for lower-income people. Nearly half of renters also pay more than 30% of their income in rent and utilities. The Department of Justice and the FTC also hold other industries accountable for using algorithms illegally:

“The housing industry isn’t alone in using potentially illegal collusive algorithms. The Department of Justice has previously secured a guilty plea related to the use of pricing algorithms to fix prices in online resales, and has an ongoing case against sharing of price-related and other sensitive information among meat processing competitors. Other private cases have been recently brought against hotels and casinos.”

Hopefully the FTC and the Department of Justice retain their power to protect consumers. Inflation will continue to rise and consumers continue to suffer.

Whitney Grace, March 18, 2024

Humans Wanted: Do Not Leave Information Curation to AI

March 15, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Remember RSS feeds? Before social media took over the Internet, they were the way we got updates from sources we followed. It may be time to dust off the RSS, for it is part of blogger Joan Westenberg’s plan to bring a human touch back to the Web. We learn of her suggestions in, “Curation Is the Last Best Hope of Intelligent Discourse.”

Westenberg argues human judgement is essential in a world dominated by AI-generated content of dubious quality and veracity. Generative AI is simply not up to the task. Not now, perhaps not ever. Fortunately, a remedy is already being pursued, and Westenberg implores us all to join in. She writes:

“Across the Fediverse and beyond, respected voices are leveraging platforms like Mastodon and their websites to share personally vetted links, analysis, and creations following the POSSE model – Publish on your Own Site, Syndicate Elsewhere. By passing high-quality, human-centric content through their own lens of discernment before syndicating it to social networks, these curators create islands of sanity amidst oceans of machine-generated content of questionable provenance. Their followers, in turn, further syndicate these nuggets of insight across the social web, providing an alternative to centralised, algorithmically boosted feeds. This distributed, decentralised model follows the architecture of the web itself – networks within networks, sites linking out to others based on trust and perceived authority. It’s a rethinking of information democracy around engaged participation and critical thinking from readers, not just content generation alone from so-called ‘influencers’ boosted by profit-driven behemoths. We are all responsible for carefully stewarding our attention and the content we amplify via shares and recommendations. With more voices comes more noise – but also more opportunity to find signals of truth if we empower discernment. This POSSE model interfaces beautifully with RSS, enabling subscribers to follow websites, blogs and podcasts they trust via open standard feeds completely uncensored by any central platform.”

But is AI all bad? No, Westenberg admits, the technology can be harnessed for good. She points to Anthropic’s Constitutional AI as an example: it was designed to preserve existing texts instead of overwriting them with automated content. It is also possible, she notes, to develop AI systems that assist human curators instead of competing with them. But we suspect we cannot rely on companies that profit from the proliferation of shoddy AI content to supply such systems. Who will?

Cynthia Murrell, March 15, 2024

Is the AskJeeves Approach the Next Big Thing Again?

March 14, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Way back when I worked in Silicon Valley or Plastic Fantastic as one 1980s wag put it, AskJeeves burst upon the Web search scene. The idea was that a user would ask a question and the helpful digital butler would fetch the answer. This worked for questions like “What’s the temperature in San Mateo?” The system did not work for the types of queries with which my little group of full-time equivalents peppered assorted online services.

A young wizard confronts a knowledge problem. Thanks, MSFT Copilot. How’s that security today? Okay, I understand. Good enough.

The mechanism involved software and people. The software processed the query and matched up the answer with the outputs in a knowledge base. The humans wrote rules. If there was no rule and no knowledge, the butler fell on his nose. It was the digital equivalent of nifty marketing applied to a system about as aware as the man servant in Kazuo Ishiguro’s The Remains of the Day.

I thought about AskJeeves as a tangent notion as I worked through “LLMs Are Not Enough… Why Chatbots Need Knowledge Representation.” The essay is an exploration of options intended to reduce the computational cost, power sucking, and blind spots in large language models. Progress is being made and will be made. A good example is this passage from the essay which sparked my thinking about representing knowledge. This is a direct quote:

In theory, there’s a much better way to answer these kinds of questions.

- Use an LLM to extract knowledge about any topics we think a user might be interested in (food, geography, etc.) and store it in a database, knowledge graph, or some other kind of knowledge representation. This is still slow and expensive, but it only needs to be done once rather than every time someone wants to ask a question.

- When someone asks a question, convert it into a database SQL query (or in the case of a knowledge graph, a graph query). This doesn’t necessarily need a big expensive LLM, a smaller one should do fine.

- Run the user’s query against the database to get the results. There are already efficient algorithms for this, no LLM required.

- Optionally, have an LLM present the results to the user in a nice understandable way.
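The four steps in the quoted passage can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions, not the essay author's implementation: the `facts` table, the function names, and the two sample rows are all hypothetical, and simple keyword matching stands in for both the LLM that extracts knowledge and the smaller LLM that turns a question into SQL.

```python
import sqlite3

# Step 1 (done once): knowledge an LLM would have extracted.
# Hard-coded, illustrative rows; a real system would populate this table.
FACTS = [
    ("San Mateo", "state", "California"),
    ("Houston", "state", "Texas"),
]


def build_knowledge_base(facts):
    """Store (subject, attribute, value) triples in an in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE facts (subject TEXT, attribute TEXT, value TEXT)")
    conn.executemany("INSERT INTO facts VALUES (?, ?, ?)", facts)
    conn.commit()
    return conn


def question_to_query(question, conn):
    """Step 2: stand-in for the small LLM. Keyword matching picks a
    subject and an attribute and returns a parameterized SQL query."""
    text = question.lower()
    subjects = [r[0] for r in conn.execute("SELECT DISTINCT subject FROM facts")]
    attributes = [r[0] for r in conn.execute("SELECT DISTINCT attribute FROM facts")]
    for subject in subjects:
        for attribute in attributes:
            if subject.lower() in text and attribute.lower() in text:
                sql = "SELECT value FROM facts WHERE subject = ? AND attribute = ?"
                return sql, (subject, attribute)
    return None  # no rule matched the question


def answer(question, conn):
    """Step 3: run the query. Returns the stored value, or None when
    the question cannot be mapped to anything in the knowledge base."""
    query = question_to_query(question, conn)
    if query is None:
        return None
    sql, params = query
    row = conn.execute(sql, params).fetchone()
    return row[0] if row else None


if __name__ == "__main__":
    kb = build_knowledge_base(FACTS)
    # Step 4 (optional presentation) is reduced to a print statement here.
    print(answer("What state is San Mateo in?", kb))
    print(answer("What is the temperature in San Mateo?", kb))
```

The second question returns nothing because no stored attribute matches it. The lookup itself is cheap; the hard part is having the right knowledge in the table before the question arrives.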

Like AskJeeves, the idea is a good one. Create a system to take a user’s query and match it to information answering the question. The AskJeeves approach embodied what I called rules. As long as one has the rules, the answers can be plugged into a database. A query arrives, looks for the answer, and presents it. Bingo. Happy user with relevant information catapults AskJeeves to the top of a world filled with less elegant solutions.

The question becomes, “Can one represent knowledge in such a way that the information is current, usable, and ‘accurate’ (assuming one can define accurate)?” Knowledge, however, is a slippery fish. Small, well-defined domains chock full of specialist knowledge should be easier to represent. Well, IBM Watson and its adventure in Houston suggest that the method is okay, but it did not work. Larger scale systems like an old-fashioned Web search engine just used “signals” to produce lists which presumably presented answers. “Clever,” correct? (Sorry, that’s an IBM Almaden bit of humor. I apologize for the inside baseball moment.)

What’s interesting is that youthful laborers in the world of information retrieval are finding themselves arm wrestling with some tough but elusive problems. What is knowledge? The answer, “It depends,” does not provide much help. Where does knowledge originate? The answer, “No one knows for sure,” does not advance the ball downfield either.

Grabbing epistemology by the shoulders and shaking it until an answer comes forth is a tough job. What’s interesting is that those working with large language models are finding themselves caught in a room of mirrors intact and broken. Here’s what TheTemples.org has to say about this imaginary idea:

The myth represented in this Hall tells of the divinity that enters the world of forms fragmenting itself, like a mirror, into countless pieces. Each piece keeps its peculiarity of reflecting the absolute, although it cannot contain the whole any longer.

I have no doubt that a start up with venture funding will solve this problem even though a set cannot contain itself. Get coding now.

Stephen E Arnold, March 14, 2024

AI Limits: The Wind Cannot Hear the Shouting. Sorry.

March 14, 2024

This essay is the work of a dumb dinobaby. No smart software required.

One of my teachers had a quote on the classroom wall. It was, I think, from a British novelist. Here’s what I recall:

Decide on what you think is right and stick to it.

I never understood the statement. In school, I was there to learn. How could I decide whether what I was reading was correct? Making a decision about what I thought was stupid because I was uninformed. The notion of “stick” is interesting and also a little crazy. My family was going to move to Brazil, and I knew that sticking to what I did in the Midwest in the 1950s would have to change. For one thing, we had electricity. The town to which we were relocating had electricity a few hours each day. Change was necessary. Even as a young sprout, I knew that trying to prevent something required more than talk, writing a Letter to the Editor, or getting a petition signed.

I thought about this crazy quote as soon as I read “AI Bioweapons? Scientists Agree to Policies to Reduce Risk of Human Disaster.” The fear mongering note of the write up’s title intrigued me. Artificial intelligence is in what I would call morph mode. What this means is that getting a fix on what is new and impactful in the field of artificial intelligence is difficult. An electrical engineering publication reported that experts are not sure if what is going on is good or bad.

Shouting into the wind does not work for farmers nor AI scientists. Thanks, MSFT Copilot. Busy with security again?

The “AI Bioweapons” essay is leaning into the bad side of the AI parade. The point of the write up is that “over 100 scientists” want to “prevent the creation of AI bioweapons.” The article states:

The agreement, crafted following a 2023 University of Washington summit and published on Friday, doesn’t ban or condemn AI use. Rather, it argues that researchers shouldn’t develop dangerous bioweapons using AI. Such an ask might seem like common sense, but the agreement details guiding principles that could help prevent an accidental DNA disaster.

That sounds good, but is it like the quote about “decide on what you think is right and stick to it”? In a dynamic environment, change appears to accelerate. Toss in technology and the potential for big wins (either financial, professional, or political), and the likelihood of slowing down the rate of change is reduced.

To add some zip to the AI stew, much of the technology required to do some AI fiddling around is available as open source software or low-cost applications and APIs.

I think it is interesting that 100 scientists want to prevent something. The hitch in the git-along is that other countries have scientists who have access to AI research, tools, software, and systems. These scientists may feel as though they are reminding people that doom is (maybe?) just around the corner or a ruined building in an abandoned town on Route 66.

Here are a few observations about why individuals rally around a cause, which is widely perceived by some of those in the money game as the next big thing:

- The shouters’ perception of their importance makes it an imperative to speak out about danger

- Getting a group of important, smart people to climb on a bandwagon makes the organizers perceive themselves as doing something important and demonstrating their “get it done” mindset

- Publicity is good. It is very good when a speaking engagement, a grant, or consulting gig produces a little extra fame and money, preferably in a combo.

Net net: The wind does not listen to those shouting into it.

Stephen E Arnold, March 14, 2024

AI Deepfakes: Buckle Up. We Are in for a Wild Drifting Event

March 14, 2024

This essay is the work of a dumb dinobaby. No smart software required.

AI deepfakes are testing the uncanny valley but technology is catching up to make them as good as the real thing. In case you’ve been living under a rock, deepfakes are images, video, and sound clips generated by AI algorithms to mimic real people and places. For example, someone could create a deepfake video of Joe Biden and Donald Trump in a sumo wrestling match. While the idea of the two presidential candidates duking it out on a sumo mat is absurd, technology is that advanced.

Gizmodo reports the frustrating news that “The AI Deepfakes Problem Is Going To Get Unstoppably Worse”. Bad actors are already using deepfakes to wreak havoc on the world. Federal regulators outlawed robocalls and OpenAI and Google released watermarks on AI-generated images. These aren’t doing anything to curb bad actors.

Which is real? Which is fake? Thanks, MSFT Copilot, the objects almost appear identical. Close enough like some security features. Close enough means good enough, right?

New laws and technology need to be adopted and developed to prevent this new age of misinformation. There should be an endless amount of warnings on deepfake videos and soundbites, not to mention service providers should employ them too. It is going to take a horrifying event to make deepfake detection more prevalent:

“Deepfake detection technology also needs to get a lot better and become much more widespread. Currently, deepfake detection is not 100% accurate for anything, according to Copyleaks CEO Alon Yamin. His company has one of the better tools for detecting AI-generated text, but detecting AI speech and video is another challenge altogether. Deepfake detection is lagging generative AI, and it needs to ramp up, fast.”

Wired Magazine missed an opportunity to make clear that the wizards at Google can sell data and advertising, but the sneaker-wearing marvels cannot manage deepfake adult pictures. Heck, Google cannot manage YouTube videos teaching people how to create deepfakes. My goodness, what happens if one uploads ASCII art of a problematic item to Gemini? One of my team tells me that the Sundar & Prabhakar guard rails don’t work too well in some situations.

Not every deepfake will be as clumsy as the one in which the “to be maybe” future queen of England finds herself ensnared. One can ask Taylor Swift, I assume.

Whitney Grace, March 14, 2024

Can Your Job Be Orchestrated? Yes? Okay, It Will Be Smartified

March 13, 2024

This essay is the work of a dumb dinobaby. No smart software required.

My work career over the last 60 years has been filled with luck. I have been in the right place at the right time. I have been in companies which have been acquired, reassigned, and exposed to opportunities which just seemed to appear. Unlike today’s young college graduate, I never thought once about being able to get a “job.” I just bumbled along. In an interview for something called Singularity, the interviewer asked me, “What’s been the key to your success?” I answered, “Luck.” (Please, keep in mind that the interviewer assumed I was a success, but he had no idea that I did not want to be a success. I just wanted to do interesting work.)

Thanks, MSFT Copilot. Will smart software do your server security? Ho ho ho.

Would I be able to get a job today if I were 20 years old? Believe it or not, I told my son in one of our conversations about smart software: “Probably not.” I thought about this comment when I read today (March 13, 2024) the essay “Devin AI Can Write Complete Source Code.” The main idea of the article is that artificial intelligence, properly trained and appropriately resourced, can do what only humans could do in 1966 (when I graduated with a BA degree from a so so university in flyover country). The write up states:

Devin is a Generative AI Coding Assistant developed by Cognition that can write and deploy codes of up to hundreds of lines with just a single prompt. Although there are some similar tools for the same purpose such as Microsoft’s Copilot, Devin is quite the advancement as it not only generates the source code for software or website but it debugs the end-to-end before the final execution.

Let’s assume the write up is mostly accurate. It does not matter. Smart software will be shaped to deliver what I call orchestrated solutions either today, tomorrow or next month. Jobs already nuked by smartification are customer service reps, boilerplate writing jobs (hello, McKinsey), and translation. Some footloose and fancy free gig workers without AI skills may face dilemmas about whether to pursue begging, YouTubing the van life, or doing some spelunking in the Chemical Abstracts database for molecular recipes in a Walmart restroom.

The trajectory of applied AI is reasonably clear to me. Once “programming” gets swept into the Prada bag of AI, what other professions will be smartified? Once again, the likely path is lit by dim but visible Alibaba solar lights for the garden:

- Legal tasks which are repetitive. Even though the cases are different, the work flow is something an average law school graduate can master and learn to loathe

- Forensic accounting. Accountants are essentially Ground Hog Day people, because every tax cycle is the same old same old

- Routine one-day surgeries. Sorry, dermatologists, cataract shops, and kidney stone crunchers. Robots will do the job and not screw up the DRG codes too much.

- Marketers. I know marketing requires creative thinking. Okay, but based on the Super Bowl ads this year, I think some clients will be willing to give smart software a whirl. Too bad about filming a horse galloping along the beach in Half Moon Bay though. Oh, well.

That’s enough of the professionals who will be affected by orchestrated work flows surfing on smartified software.

Why am I bothering to write down what seems painfully obvious to my research team?

I just wanted another reason to say, “I am glad I am old.” What many young college graduates will discover is that, unlike my “luck” over the course of my work career, smartified software will not only kill some types of work. Smart software will also remove the surprise in a serendipitous life journey.

To reiterate my point: I am glad I am old and understand efficiency, smartification, and the value of having been lucky.

Stephen E Arnold, March 13, 2024

AI Bubble Gum Cards

March 13, 2024

This essay is the work of a dumb dinobaby. No smart software required.

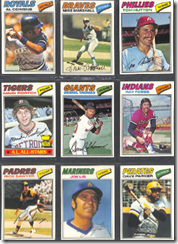

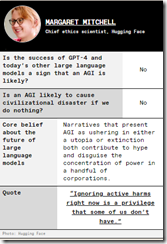

A publication for electrical engineers has created a new mechanism for making AI into a collectible. Navigate to “The AI apocalypse: A Scorecard.” Scroll down to the part of the post which looks like the gems from the 1950s:

The idea is to pick 22 experts and gather their big ideas about AI’s potential to destroy humanity. Here’s one example of an IEEE bubble gum card:

© by the estimable IEEE.

The information on the cards is eclectic. It is clear that some people think smart software will kill me and you. Others are not worried.

My thought is that IEEE should expand upon this concept; for example, here are some bubble gum card ideas:

- Do the NFT play? These might be easier to sell than IEEE memberships and subscriptions to the magazine

- Offer actual, fungible packs of trading cards with throw-back bubble gum

- Create an AI movie about AI experts with opposing ideas doing battle in a video game type world. Zap. You lose, you doubter.

But the old-fashioned approach to selling trading cards to grade school kids won’t work. First, there are very few corner stores near schools in many cities. Second, a special interest group will agitate to block the sale of cards about AI because the inclusion of chewing gum will damage children’s teeth. And, third, kids today want TikToks, at least until the service is banned by a fast-acting group of elected officials.

I think the IEEE will go in a different direction; for example, micro USBs with AI images and source code on them. Or, the IEEE should just advance to the 21st century and start producing short-form AI videos.

The IEEE does have an opportunity. AI collectibles.

Stephen E Arnold, March 13, 2024

AI to AI Program for March 12, 2024, Now Available

March 12, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Erik Arnold, with some assistance from Stephen E Arnold (the father), has produced another installment of “AI to AI: Smart Software for Government Use Cases.” The program presents news and analysis about the use of artificial intelligence (smart software) in government agencies.

The ad-free program features Erik S. Arnold, Managing Director of Govwizely, a Washington, DC consulting and engineering services firm. Arnold has extensive experience working on technology projects for the US Congress, the Capitol Police, the Department of Commerce, and the White House. Stephen E Arnold, an adviser to Govwizely, also participates in the program. The current episode explores five topics in a father-and-son exploration of important, yet rarely discussed subjects. These include the analysis of law enforcement body camera video by smart software, the appointment of an AI information czar by the US Department of Justice, copyright issues faced by UK artificial intelligence projects, the role of the US Marines in the Department of Defense’s smart software projects, and the potential use of artificial intelligence in the US Patent Office.

The video is available on YouTube at https://youtu.be/nsKki5P3PkA. The Apple audio podcast is at this link.

Stephen E Arnold, March 12, 2024

AI Hermeneutics: The Fire Fights of Interpretation Flame

March 12, 2024

This essay is the work of a dumb dinobaby. No smart software required.

My hunch is that not too many of the thumb-typing, TikTok generation know what hermeneutics means. Furthermore, like most of their parents, these future masters of the phone-iverse don’t care. “Let software think for me” would make a nifty T shirt slogan at a technology conference.

This morning (March 12, 2024) I read three quite different write ups. Let me highlight each and then link the content of those documents to the problem of interpretation of religious texts.

Thanks, MSFT Copilot. I am confident your security team is up to this task.

The first write up is a news story called “Elon Musk’s AI to Open Source Grok This Week.” The main point for me is that Mr. Musk will put the label “open source” on his Grok artificial intelligence software. The write up includes an interesting quote; to wit:

Musk further adds that the whole idea of him founding OpenAI was about open sourcing AI. He highlighted his discussion with Larry Page, the former CEO of Google, who was Musk’s friend then. “I sat in his house and talked about AI safety, and Larry did not care about AI safety at all.”

The implication is that Mr. Musk does care about safety. Okay, let’s accept that.

The second story is an ArXiv paper called “Stealing Part of a Production Language Model.” The authors are nine Googlers, two ETH wizards, one University of Washington professor, one OpenAI researcher, and one McGill University smart software luminary. In short, the big outfits are making clear that closed or open, software is rising to the task of revealing some of the inner workings of these “next big things.” The paper states:

We introduce the first model-stealing attack that extracts precise, nontrivial information from black-box production language models like OpenAI’s ChatGPT or Google’s PaLM-2…. For under $20 USD, our attack extracts the entire projection matrix of OpenAI’s ada and babbage language models.

The third item is “How Do Neural Networks Learn? A Mathematical Formula Explains How They Detect Relevant Patterns.” The main idea of this write up is that software can perform an X-ray type analysis of a black box and present some useful data about the inner workings of numerical recipes about which many AI “experts” feign total ignorance.

Several observations:

- Open source software is available to download largely without encumbrances. Good actors and bad actors can use this software and its components to let users put on a happy face or bedevil the world’s cyber security experts. Either way, smart software is out of the bag.

- In the event that someone or some organization has secrets buried in its software, those secrets can be exposed. Once the secret is known, the good actors and the bad actors can surf on that information.

- The notion of an attack surface for smart software now includes the numerical recipes and the model itself. Toss in the notion of data poisoning, and the notion of vulnerability must be recast from a specific attack to a much larger type of exploitation.

Net net: I assume the many committees, NGOs, and government entities discussing AI have considered these points and incorporated these articles into informed policies. In the meantime, the AI parade continues to attract participants. Who has time to fool around with the hermeneutics of smart software?

Stephen E Arnold, March 12, 2024

Thomson Reuters Is Going to Do AI: Run Faster

March 11, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Thomson Reuters, a mostly low profile outfit, is going to do AI. Why’s this interesting to law schools, lawyers, accountants, special librarians, libraries, and others who “pay” for “real” information? There are three reasons:

- Money

- Markets

- Mania.

Thomson Reuters has been a tech talker for decades. The company created skunk works. It hired quirky MIT wizards. It bought businesses with information technology. But underneath the professional publishing clear coat, the firm is the creation of Lord Thomson of Fleet. The firm has a track record of being able to turn a profit on its $7 billion in revenues. But the future, if news reports are accurate, is artificial intelligence or smart software.

The young publishing executive says, “I have got to get ahead of this AI bus before it runs over me.” Thanks, MSFT Copilot. Working on security today?

But wait! What makes Thomson Reuters different from the New York Times or (heaven forbid the question) Rupert Murdoch’s confections? The answer is in my opinion: Thomson Reuters does the trust thing and is a professional publisher. I don’t want to explain that in the world of Lord Thomson of Fleet that publishing is publishing. Nope. Not going there. Thomson Reuters is a custom made billiard cue, not one of those bar pool cheapos.

As appropriate to today’s Thomson Reuters, the news appeared in Thomson’s own news releases first; for example, “Thomson Reuters Profit Beats Estimates Amid AI Push.” Yep, AI drives profits. That’s the “m” in money. Plus, late last year this article found its way to the law firm market (yep, that’s the second “m”): “Morgan Lewis and Thomson Reuters Enter into Partnership to Put Law Firms’ Needs at the Heart of AI Development.”

Now the third “m” or mania. Here’s a representative story, “Thomson Reuters to Invest US$8 billion in a Substantial AI-Focused Spending Initiative.” You can also check out the Financial Times’s report at this link.

Thomson Reuters is a $7 billion corporation. If the $8 billion number is on the money, the venerable news outfit is going to spend the equivalent of one year’s revenue acquiring and investing in smart software. In terms of professional publishing, this chunk of change is roughly the equivalent of Sam AI-Man’s need for trillions of dollars for his smart software business.

Several thoughts struck me as I was reading about the $8 billion investment in smart software:

- In terms of publishing or more narrowly professional publishing, $8 billion will take some time to spend. But time is not on the side of publishing decision making processes. When the check is written for an AI investment, there may be some who ask, “Is this the correct investment? After all, aren’t we professional publishers serving lawyers, accountants, and researchers?”

- The US legal processes are interesting. But the minor challenge of Crown copyright adds a bit of spice to certain investments. The UK government itself is reluctant to push into some AI areas due to concerns that certain information may not be available unless the red tape about copyright has been trimmed, rolled, and put on the shelf. Without being disrespectful, Thomson Reuters could find that some of the $8 billion is headed into its clients’ pockets as legal challenges make their way through courts in Britain, Canada, and the US and probably some frisky EU states.

- The game for AI seems to be breaking into what a former Greek minister calls the techno feudal set up. On one hand, there are giant technology centric companies (a club of which Thomson Reuters is not a member). These are Google- and Microsoft-scale outfits with infrastructure, data, customers, and multiple business models. On the other hand, there are the Product Watch outfits which are using open source and APIs to create “new” and “important” AI businesses, applications, and solutions. In short, there are some barons and a whole grab-bag of lesser folk. Is Thomson Reuters going to be able to run with the barons? Remember, please, the barons are riding stallions. Thomson Reuters-type firms either walk or ride donkeys.

Net net: If Thomson Reuters spends $8 billion on smart software, how many lawyers, accountants, and researchers will be put out of work? The risks are not just bad AI investments. The threat may be to gut the billing power of the paying customers for Thomson Reuters’ content. This will be entertaining to watch.

PS. The third “m”? It is mania, AI mania.

Stephen E Arnold, March 11, 2024
