AI Inventors Barred from Patents. For Now

January 17, 2024

This essay is the work of a dumb dinobaby. No smart software required.

For anyone wondering whether an AI system can be officially recognized as a patent inventor, the answer in two countries is no. Or at least not yet. We learn from The Fashion Law, “UK Supreme Court Says AI Cannot Be Patent Inventor.” Inventor Stephen Thaler pursued two patents on behalf of DABUS, his AI system. After the UK’s Intellectual Property Office, High Court, and the Court of Appeal all rejected the applications, the intrepid algorithm advocate appealed to the highest court in that land. The article reveals:

“In the December 20 decision, which was authored by Judge David Kitchin, the Supreme Court confirmed that as a matter of law, under the Patents Act, an inventor must be a natural person, and that DABUS does not meet this requirement. Against that background, the court determined that Thaler could not apply for and/or obtain a patent on behalf of DABUS.”

The court also specified the patent applications now stand as “withdrawn.” Thaler also tried his luck in the US legal system but met with a similar result. So is it the end of the line for DABUS’s inventor ambitions? Not necessarily:

“In the court’s determination, Judge Kitchin stated that Thaler’s appeal is ‘not concerned with the broader question whether technical advances generated by machines acting autonomously and powered by AI should be patentable, nor is it concerned with the question whether the meaning of the term ‘inventor’ ought to be expanded … to include machines powered by AI ….’”

So the legislature may yet allow AIs into the patent application queues. Will being a “natural person” soon become unnecessary to apply for a patent? If so, will patent offices increase their reliance on algorithms to handle the increased caseload? Then machines would grant patents to machines. Would natural people even be necessary anymore? Once a techno feudalist with truckloads of cash and flocks of legal eagles pulls up to a hearing, rules can become — how shall I say it? — malleable.

Cynthia Murrell, January 17, 2024

Guidelines. What about AI and Warfighting? Oh, Well, Hmmmm.

January 16, 2024

This essay is the work of a dumb dinobaby. No smart software required.

It seems November 2023’s AI Safety Summit, hosted by the UK, was a productive gathering. At the very least, attendees drew up some best practices and brought them to agencies in their home countries. TechRepublic describes the “New AI Security Guidelines Published by NCSC, CISA, & More International Agencies.” Writer Owen Hughes summarizes:

“The Guidelines for Secure AI System Development set out recommendations to ensure that AI models – whether built from scratch or based on existing models or APIs from other companies – ‘function as intended, are available when needed and work without revealing sensitive data to unauthorized parties.’ Key to this is the ‘secure by default’ approach advocated by the NCSC, CISA, the National Institute of Standards and Technology and various other international cybersecurity agencies in existing frameworks. Principles of these frameworks include:

* Taking ownership of security outcomes for customers.

* Embracing radical transparency and accountability.

* Building organizational structure and leadership so that ‘secure by design’ is a top business priority.

A combined 21 agencies and ministries from a total of 18 countries have confirmed they will endorse and co-seal the new guidelines, according to the NCSC. … Lindy Cameron, chief executive officer of the NCSC, said in a press release: ‘We know that AI is developing at a phenomenal pace and there is a need for concerted international action, across governments and industry, to keep up. These guidelines mark a significant step in shaping a truly global, common understanding of the cyber risks and mitigation strategies around AI to ensure that security is not a postscript to development but a core requirement throughout.’”

Nice idea, but we noted “OpenAI’s Policy No Longer Explicitly Bans the Use of Its Technology for Military and Warfare.” The article reports that OpenAI:

updated the page on January 10 "to be clearer and provide more service-specific guidance," as the changelog states. It still prohibits the use of its large language models (LLMs) for anything that can cause harm, and it warns people against using its services to "develop or use weapons." However, the company has removed language pertaining to "military and warfare." While we’ve yet to see its real-life implications, this change in wording comes just as military agencies around the world are showing an interest in using AI.

We are told cybersecurity experts and analysts welcome the guidelines. But will the companies vending and developing AI products willingly embrace principles like “radical transparency and accountability”? Will regulators be able to force them to do so? We have our doubts. Nevertheless, this is a good first step. If only it had been taken at the beginning of the race.

Cynthia Murrell, January 16, 2024

Cybersecurity AI: Yet Another Next Big Thing

January 15, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Not surprisingly, generative AI has boosted the cybersecurity arms race. As bad actors use algorithms to more efficiently breach organizations’ defenses, security departments can only keep up by using AI tools. At least that is what VentureBeat maintains in, “How Generative AI Will Enhance Cybersecurity in a Zero-Trust World.” Writer Louis Columbus tells us:

“Deep Instinct’s recent survey, Generative AI and Cybersecurity: Bright Future of Business Battleground? quantifies the trends VentureBeat hears in CISO interviews. The study found that while 69% of organizations have adopted generative AI tools, 46% of cybersecurity professionals feel that generative AI makes organizations more vulnerable to attacks. Eighty-eight percent of CISOs and security leaders say that weaponized AI attacks are inevitable. Eighty-five percent believe that gen AI has likely powered recent attacks, citing the resurgence of WormGPT, a new generative AI advertised on underground forums to attackers interested in launching phishing and business email compromise attacks. Weaponized gen AI tools for sale on the dark web and over Telegram quickly become best sellers. An example is how quickly FraudGPT reached 3,000 subscriptions by July.”

That is both predictable and alarming. What should companies do about it? The post warns:

“‘Businesses must implement cyber AI for defense before offensive AI becomes mainstream. When it becomes a war of algorithms against algorithms, only autonomous response will be able to fight back at machine speeds to stop AI-augmented attacks,’ said Max Heinemeyer, director of threat hunting at Darktrace.”

Before AI is mainstream? Better get moving. We’re told the market for generative AI cybersecurity solutions is already growing, and Forrester divides it into three use cases: content creation, behavior prediction, and knowledge articulation. Of course, Columbus notes, each organization will have different needs, so adaptable solutions are important. See the write-up for some specific tips and links to further information. The tools may be new but the dynamic is a constant: as bad actors up their game, so too must security teams.

Cynthia Murrell, January 15, 2024

Believe in Smart Software? Sure, Why Not?

January 12, 2024

This essay is the work of a dumb dinobaby. No smart software required.

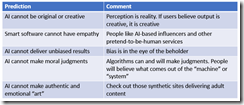

Predictions are slippery fish. Grab one, a foot long Lake Michigan beastie. Now hold on. Wow, that looked easy. Predictions are similar. But slippery fish can get away or flop around and make those in the boat look silly. I thought about fish and predictions when I read “What AI will Never Be Able To Do.” The essay is a replay of an answer from an AI or smart software system.

My initial reaction was that someone came up with a blog post that required Google Bard and what seems to be minimal effort to create. I am thinking about how a high school student might rely on ChatGPT to write an essay about a current event or a how-to essay. I reread the write up and formulated several observations. The table below presents the “prediction” and my comment about that statement. I end the essay with a general comment about smart software.

The presentation of word salad reassurances underscores a fundamental problem of smart software. The system can be tuned to reassure. At the same time, the companies operating the software can steer, shape, and weaponize the information presented. Those without the intellectual equipment to research and reason about outputs are likely to accept the answers. The deterioration of education in the US and other countries virtually guarantees that smart software will replace critical thinking for many people.

Don’t believe me. Ask one of the engineers working on next generation smart software. Just don’t ask the systems or the people who use another outfit’s software to do the thinking.

Stephen E Arnold, January 12, 2024

Cheating: Is It Not Like Love, Honor, and Truth?

January 10, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I would like to believe the information in this story: “ChatGPT Did Not Increase Cheating in High Schools, Stanford Researchers Find.” My reservations can be summed up with three points: [a] The Stanford president (!) who made up data, [b] The behavior of Stanford MBAs at certain go-go companies, and [c] How does one know a student did not cheat? (I know the answer: Surveillance technology, perchance. Ooops. That’s incorrect. That technology is available to Stanford graduates working at certain techno feudalist outfits.)

Mom asks her daughter, “I showed you how to use the AI generator, didn’t I? Why didn’t you use it?” Thanks, MSFT Copilot Bing thing. Pretty good today.

The cited write up reports as actual factual:

The university, which conducted an anonymous survey among students at 40 US high schools, found about 60% to 70% of students have engaged in cheating behavior in the last month, a number that is the same or even decreased slightly since the debut of ChatGPT, according to the researchers.

I have tried to avoid big time problems in my dinobaby life. However, I must admit that in high school, I did these things: [a] Worked with my great grandmother to create a poem subsequently published in a national anthology in 1959. Granny helped me cheat; she was a deceitful septuagenarian as I recall. I put my name on the poem, omitting Augustus. Yes, cheating. [b] Sold homework to students not in my advanced classes. I would consider this cheating, but I was saving money for my summer university courses at the University of Illinois. I went for the cash. [c] After I ended up in the hospital, my girl friend at the time showed up at the hospital, reviewed the work covered in class, and finished a science worksheet because I passed out from the post surgery medications. Yes, I cheated, and Linda Mae who subsequently spent her life in Africa as a nurse, helped me cheat. I suppose I will burn in hell. My summary suggests that “cheating” is an interesting concept, and it has some nuances.

Did the Stanford (let’s make up data) University researchers nail down cheating or just hunt for the AI thing? Are the data reproducible? Was the methodology rigorous, the results validated, and micro analyses run to determine if the data were on the money? Yeah, sure, sure.

I liked this statement:

Stanford also offers an online hub with free resources to help teachers explain to high school students the dos and don’ts of using AI.

In the meantime, the researchers said they will continue to collect data throughout the school year to see if they find evidence that more students are using ChatGPT for cheating purposes.

Yep, this is a good pony to ride. I would ask is plain vanilla Google search a form of cheating? I think it is. With most of the people online using it, doesn’t everyone cheat? Let’s ask the Harvard ethics professor, a senior executive at a Facebook-type outfit, and the former president of Stanford.

Stephen E Arnold, January 10, 2024

Googley Gems: 2024 Starts with Some Hoots

January 9, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Another year and I will turn 80. I have seen some interesting things in my 58 year work career, but a couple of black swans have flown across my radar system. I want to share what I find anomalous or possibly harbingers of the new normal.

A dinobaby examines some Alphabet Google YouTube gems. The work is not without its AGonY, however. Thanks, MSFT Copilot Bing thing. Good enough.

First up is another “confession” or “tell all” about the wild, wonderful Alphabet Google YouTube or AGY. (Wow, I caught myself. I almost typed “agony”, not AGY. I am indeed getting old.)

I read “A Former Google Manager Says the Tech Giant Is Rife with Fiefdoms and the Creeping Failure of Senior Leaders Who Weren’t Making Tough Calls.” The headline is a snappy one. I like the phrase “creeping failure.” Nifty image like melting ice and tundra releasing exciting extinct biological bits and everyone’s favorite gas. Let me highlight one point in the article:

[Google has] “lots of little fiefdoms” run by engineers who didn’t pay attention to how their products were delivered to customers. …this territorial culture meant Google sometimes produced duplicate apps that did the same thing or missed important features its competitors had.

I disagree. Plenty of small Web site operators complain about decisions which destroy their businesses. In fact, I am having lunch with one of the founders of a firm deleted by Google’s decider. Also, I wrote about a fellow in India who is likely to suffer the slings and arrows of outraged Googlers because he shoots videos of India’s temples and suggests they have meanings beyond those inculcated in certain castes.

My observation is that happy employees don’t run conferences to explain why Google is a problem or write these weird “let me tell you what life is really like” essays. Something is definitely being signaled. Could it be distress, annoyance, or down-home anger? The “gem”, therefore, is AGY’s management AGonY.

Second, AGY is ramping up its thinking about monetization of its “users.” I noted that “Google Bard Advanced Is Coming, But It Likely Won’t Be Free” reports:

Google Bard Advanced is coming, and it may represent the company’s first attempt to charge for an AI chatbot.

And why not? The Red Alert hooted because Microsoft’s 2022 announcement of its OpenAI tie up made clear that the Google was caught flat footed. Then, as 2022 flowed, the impact of ChatGPT-like applications made three facets of the Google outfit less murky: [a] Google was disorganized because it had Google Brain and DeepMind, which were expensive and confusing in the way Abbott and Costello’s “Who’s on First” routine made people laugh. [b] The malaise of a cooling technology frenzy yielded to AI craziness which translated into some people saying, “Hey, I can use this stuff for answering questions.” Oh, oh, the search advertising model took a bit of a blindside chop block. And [c] Google found itself on the wrong side of assorted legal actions creating a model for other legal entities to explore, probe, and probably use to extract Google’s life blood — Money. Imagine Google using its data to develop effective subscription campaigns. Wow.

And, the final Google gem is that Google wants to behave like a nation state. “Google Wrote a Robot Constitution to Make Sure Its New AI Droids Won’t Kill Us” aims to set the White House and other pretenders to real power straight. Shades of Isaac Asimov’s Three Laws of Robotics. The write up reports:

DeepMind programmed the robots to stop automatically if the force on its joints goes past a certain threshold and included a physical kill switch human operators can use to deactivate them.

You have to embrace the ethos of a company which does not want its “inventions” to kill people. For me, the message is one that some governments’ officials will hear: Need a machine to perform warfighting tasks?

Small gems but gems nonetheless. AGY, please, keep ‘em coming.

Stephen E Arnold, January 9, 2024

Cyber Security Software and AI: Man and Machine Hook Up

January 8, 2024

This essay is the work of a dumb dinobaby. No smart software required.

My hunch is that 2024 is going to be quite interesting with regards to cyber security. The race among policeware vendors to add “artificial intelligence” to their systems began shortly after Microsoft’s ChatGPT moment. Smart agents, predictive analytics coupled to text sources, real-time alerts from smart image monitoring systems are three application spaces getting AI boosts. The efforts are commendable if over-hyped. One high-profile firm’s online webinar presented jargon and buzzwords but zero evidence of the conviction or closure value of the smart enhancements.

The smart cyber security software system outputs alerts which the system manager cannot escape. Thanks, MSFT Copilot Bing thing. You produced a workable illustration without slapping my request across my face. Good enough too.

Let’s accept as a working premise that everyone from my French bulldog to my neighbor’s ex wife wants smart software to bring back the good old, pre-Covid, go-go days. Also, I stipulate that one should ignore the fact that smart software is a demonstration of how numerical recipes can output “good enough” data. Hallucinations, errors, and close-enough-for-horseshoes are part of the method. What’s the likelihood the door of a commercial aircraft would be removed from an aircraft in flight? Answer: Well, most flights don’t lose their doors. Stop worrying. Those are the rules for this essay.

Let’s look at “The I in LLM Stands for Intelligence.” I grant the title may not be the best one I have spotted this month, but here’s the main point of the article in my opinion. Writing about automated threat and security alerts, the essay opines:

When reports are made to look better and to appear to have a point, it takes a longer time for us to research and eventually discard it. Every security report has to have a human spend time to look at it and assess what it means. The better the crap, the longer time and the more energy we have to spend on the report until we close it. A crap report does not help the project at all. It instead takes away developer time and energy from something productive. Partly because security work is consider one of the most important areas so it tends to trump almost everything else.

The idea is that strapping on some smart software can increase the outputs from a security alerting system. Instead of helping the overworked and often reviled cyber security professional, the smart software makes it more difficult to figure out what a bad actor has done. The essay includes this blunt section heading: “Detecting AI Crap.” Enough said.

The idea is that more human expertise is needed. The smart software becomes a problem, not a solution.

I want to shift attention to the managers or the employee who caused a cyber security breach. In what is another zinger of a title, let’s look at this research report, “The Immediate Victims of the Con Would Rather Act As If the Con Never Happened. Instead, They’re Mad at the Outsiders Who Showed Them That They Were Being Fooled.” Okay, this is the ostrich method. Deny stuff by burying one’s head in digital sand like TikToks.

The write up explains:

The immediate victims of the con would rather act as if the con never happened. Instead, they’re mad at the outsiders who showed them that they were being fooled.

Let’s assume the data in this “Victims” write up are accurate, verifiable, and unbiased. (Yeah, I know that is a stretch.)

What do these two articles do to influence my view that cyber security will be an interesting topic in 2024? My answers are:

- Smart software will allegedly detect, alert, and warn of “issues.” The flow of “issues” may overwhelm or numb staff who must decide what’s real and what’s a fakeroo. Burdened staff can make errors, thus increasing security vulnerabilities or missing ones that are significant.

- Managers, like the staffer who lost a mobile phone with company passwords in a plain text note file or an email called “passwords,” will blame whoever blows the whistle. The result is the willful refusal to talk about what happened, why, and the consequences. Examples range from big libraries in the UK to can kicking hospitals in a flyover state like Kentucky.

- Marketers of remediation tools will have a banner year. Marketing collateral becomes a closed deal making the art history majors writing copy secure in their job at a cyber security company.

Will bad actors pay attention to smart software and the behavior of senior managers who want to protect share price or their own job? Yep. Close attention.

Stephen E Arnold, January 8, 2024

Is Philosophy Irrelevant to Smart Software? Think Before Answering, Please

January 8, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I listened to Lex Fridman’s interview with the founder of Extropic. The founder is into smart software and “inventing” a fresh approach to the plumbing required to make AI more like humanoids.

As I listened to the questions and answers, three factoids stuck in my mind:

- Extropic’s desire to just go really fast is a conscious decision shared among those involved with the company; that is, we know who wants to go fast because they work there or work at the firm. (I am not going to argue about the upside and downside of “going fast.” That will be another essay.)

- The downstream implications of the Extropic vision are secondary to the benefits of finding ways to avoid concentration of AI power. I think the idea is that absolute power produces outfits like the Google-type firms which are bedeviling competitors, users, and government authorities. Going fast is not a thrill for processes that require going slow.

- The decisions Extropic’s founder has made are bound up in a world view, personal behaviors for productivity, interesting foods, and learnings accreted over a stellar academic and business career. In short, Extropic embodies a philosophy.

Philosophy, therefore, influences decisions. So we come to my topic in this essay. I noted two different write ups about how informed people take decisions. I am not going to refer to philosophers popular in introductory college philosophy classes. I am going to ignore the uneven treatment of philosophers in Will and Ariel Durant’s Story of Philosophy. Nah. I am going with state of the art modern analysis.

The first online article I read is a survey (knowledge product) of the estimable IBM / Watson outfit or a contractor. The relatively current document is “CEO Decision Making in the Age of AI.” The main point of the document in my opinion is summed up in this statement from a computer hardware and services company:

Any decision that makes its way to the CEO is one that involves high degrees of uncertainty, nuance, or outsize impact. If it was simple, someone else— or something else—would do it. As the world grows more complex, so does the nature of the decisions landing on a CEO’s desk.

But how can a CEO decide? The answer is, “Rely on IBM.” I am not going to recount the evolution (perhaps devolution) of IBM or the uncomfortable stories about shedding old employees (the term dinobaby originated at IBM according to one former I’ve Been Moved veteran). I will not explain IBM’s decisions about chip fabrication, its interesting hiring policies of individuals who might have retained some fondness for the land of their fathers and mothers, or the fancy dancing required to keep mainframes as a big money pump. Nope.

The point is that IBM is positioning itself as a thought leader, a philosopher of smart software, technology, and management. I find this interesting because IBM, like some Google type companies, is a case example of management shortcomings. These same shortcomings are swathed in weird jargon and buzzwords which are bent to one end: Generating revenue.

Let me highlight one comment from the 27 page document and urge you to read it when you have a few moments free. Here’s the one passage I will use as a touchstone for “decision making”:

The majority of CEOs believe the most advanced generative AI wins.

Oh, really? Is smart software sufficiently mature? That’s news to me. My instinct is that it is new information to many CEOs as well.

The second essay about decision making is from an outfit named Ness Labs. That essay is “The Science of Decision-Making: Why Smart People Do Dumb Things.” The structure of this essay is more along the lines of a consulting firm’s white paper. The approach contrasts with IBM’s free-floating global survey document.

The obvious implication is that if smart people are making dumb decisions, smart software can solve the problem. Extropic would probably agree and, were the IBM survey data accurate, “most CEOs” buy into a ride on the AI bandwagon.

The Ness Labs’ document includes this statement which in my view captures the intent of the essay. (I suggest you read the essay and judge for yourself.)

So, to make decisions, you need to be able to leverage information to adjust your actions. But there’s another important source of data your brain uses in decision-making: your emotions.

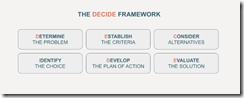

Ah, ha, logic collides with emotions. But to fix the “problem” Ness Labs provides a diagram created in 2008 (a bit before the January 2022 Microsoft OpenAI marketing fireworks):

Note that “decide” is a mnemonic device intended to help me remember each of the items. I learned this technique in the fourth grade when I had to memorize the names of the Great Lakes. No one has ever asked me to name the Great Lakes by the way.

Okay, what we have learned is that IBM has survey data backing up the idea that smart software is the future. Those data, if on the money, validate the go-go approach of Extropic. Plus, Ness Labs provides a “decider model” which can be used to create better decisions.

I concluded that philosophy is less important than fostering a general message that says, “Smart software will fix up dumb decisions.” I may be over simplifying, but the implicit assumptions about the importance of artificial intelligence, the reliability of the software, and the allegedly universal desire by big time corporate management are not worth worrying about.

Why is the cartoon philosopher worrying? I think most of this stuff is a poorly made road on which those jockeying for power and money want to drive their most recent knowledge vehicles. My tip? Look before crossing that information superhighway. Speeding myths can be harmful.

Stephen E Arnold, January 8, 2024

AI Ethics: Is That What Might Be Called an Oxymoron?

January 5, 2024

This essay is the work of a dumb dinobaby. No smart software required.

MSN.com presented me with this story: “OpenAI and Microsoft on Trial — Is the Clash with the NYT a Turning Point for AI Ethics?” I can answer this question, but that would spoil the entertainment value of my juxtaposition of this write up with the quasi-scholarly list of business start up resources. Why spoil the fun?

Socrates is lecturing at a Fancy Dan business school. The future MBAs are busy scrolling TikTok, pitching ideas to venture firms, and scrolling JustBang.com. Viewing this sketch, it appears that ethics and deep thought are not as captivating as mobile devices and having fun. Thanks, MSFT Copilot. Two tries and a good enough image.

The article asks a question which I find wildly amusing. The “on trial” write up states in 21st century rhetoric:

The lawsuit prompts critical questions about the ownership of AI-generated content, especially when it comes to potential inaccuracies or misleading information. The responsibility for losses or injuries resulting from AI-generated content becomes a gray area that demands clarification. Also, the commercial use of sourced materials for AI training raises concerns about the value of copyright, especially if an AI were to produce content with significant commercial impact, such as an NYT bestseller.

For more than two decades online outfits have been sucking up information which is usually slapped with the bright red label “open source information.”

The “on trial” essay says:

The future of AI and its coexistence with traditional media hinges on the resolution of this legal battle.

But what about ethics? The “on trial” write up dodges the ethics issue. I turned to a go-to resource about ethics. No, I did not look at the papers of the Harvard ethics professor who allegedly made up data for ethics research. Ho ho ho. Nope. I went to the Enchanting Trader and its list of 4000+ Essential Business Startup Database of information.

I displayed the full list of resources and ran a search for the word “ethics.” There was one hit to “Will Joe Rogan Ever IPO?” Amazing.

What I concluded is that “ethics” is not number one with a bullet among the resources of the 4000+ essential business start up items. It strikes me that a single trial about smart software is unlikely to resolve “ethics” for AI. If it does, will the resolution have the legs that Socrates’ musings have had? More than likely, most people will ask, “Who is Socrates?” or “What the heck are ethics?”

Stephen E Arnold, January 5, 2024

IBM: AI Marketing Like It Was 2004

January 5, 2024

This essay is the work of a dumb dinobaby. No smart software required. Note: The word “dinobaby” is — I have heard — a coinage of IBM. The meaning is an old employee who is no longer wanted due to salary, health care costs, and grousing about how the “new” IBM is not the “old” IBM. I am a proud user of the term, and I want to switch my tail to the person who whipped up the word.

What’s the future of AI? The answer depends on whom one asks. IBM, however, wants to give it the old college try and answer the question so people forget about the Era of Watson. There’s a new Watson in town, or at least, there is a new Watson at the old IBM url. IBM has an interesting cluster of information on its Web site. The heading is “Forward Thinking: Experts Reveal What’s Next for AI.”

IBM crows that it “spoke with 30 artificial intelligence visionaries to learn what it will take to push the technology to the next level.” Five of these interviews are now available on the IBM Web site. My hunch is that IBM will post new interviews, hit the news release button, post some links on social media, and then hit the “Reply” button.

Can IBM ignite excitement and capture the revenues it wants from artificial intelligence? That’s a good question, and I want to ask the expert in the cartoon for an answer. Unfortunately only customers and their decisions matter for AI thought leaders unless the intended audience is start ups, professors, and employees. Thanks, MSFT Copilot Bing thing. Good enough.

As I read the interviews, I thought about the challenge of predicting where smart software would go as it moved toward its “what’s next.” Here’s a mini-glimpse of what the IBM visionaries have to offer. Note that I asked Microsoft’s smart software to create an image capturing the expert sitting in an office surrounded by memorabilia.

Kevin Kelly (the author of What Technology Wants) says: “Throughout the business world, every company these days is basically in the data business and they’re going to need AI to civilize and digest big data and make sense out of it—big data without AI is a big headache.” My thought is that IBM is going to make clear that it can help companies with deep pockets tackle these big data and AI them. Does AI want something, or do those trying to generate revenue want something?

Mark Sagar (creator of BabyX) says: “We have had an exponential rise in the amount of video posted online through social media, etc. The increased use of video analysis in conjunction with contextual analysis will end up being an extremely important learning resource for recognizing all kinds of aspects of behavior and situations. This will have wide ranging social impact from security to training to more general knowledge for machines.” Maybe IBM will TikTok itself?

Chieko Asakawa (an unsighted IBM professional) says: “We use machine learning to teach the system to leverage sensors in smartphones as well as Bluetooth radio waves from beacons to determine your location. To provide detailed information that the visually impaired need to explore the real world, beacons have to be placed between every 5 to 10 meters. These can be built into building structures pretty easily today.” I wonder if the technology has surveillance utility?

Yoshua Bengio (seller of an AI company to ServiceNow) says: “AI will allow for much more personalized medicine and bring a revolution in the use of large medical datasets.” IBM appears to have forgotten about its Houston medical adventure and Mr. Bengio found it not worth mentioning I assume.

Margaret Boden (a former Harvard professor without much of a connection to Harvard’s made up data and administrative turmoil) says: “Right now, many of us come at AI from within our own silos and that’s holding us back.” Aren’t silos necessary for security, protecting intellectual property, and getting tenure? Probably the “silobreaking” will become a reality.

Several observations:

- IBM is clearly trying hard to market itself as a thought leader in artificial intelligence. The Jeopardy play did not warrant a replay.

- IBM is spending money to position itself as a Big Dog pulling the AI sleigh. The MIT tie up and this AI Web extravaganza are evidence that IBM is [a] afraid of flubbing again, [b] going to market its way to importance, [c] trying to get traction as outfits like OpenAI, Mistral, and others capture attention in the US and Europe.

- IBM’s ability to generate awareness of its thought leadership in AI underscores one of the challenges the firm faces in 2024.

Net net: The company that coined the term “dinobaby” has its work cut out for itself in my opinion. Is Jeopardy looking like a channel again?

Stephen E Arnold, January 5, 2024