AI and the Obvious: Hire Us and Pay Us to Tell You Not to Worry

December 26, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read “Accenture Chief Says Most Companies Not Ready for AI Rollout.” The paywalled write up is an opinion from one of Captain Obvious’ closest advisors. The CEO of Accenture (a general purpose business expertise outfit) reveals some gems about artificial intelligence. Here are three which caught my attention.

#1 — “Sweet said executives were being “prudent” in rolling out the technology, amid concerns over how to protect proprietary information and customer data and questions about the accuracy of outputs from generative AI models.”

The secret to AI consulting success: Cost, fear of failure, and uncertainty or CFU. Thanks, MSFT Copilot. Good enough.

Arnold comment: Yes, caution is good because selling caution consulting generates juicy revenues. Implementing something that crashes and burns is a generally bad idea.

#2 — “Sweet said this corporate prudence should assuage fears that the development of AI is running ahead of human abilities to control it…”

Arnold comment: The threat, in my opinion, comes from a handful of large technology outfits and from the legions of smaller firms working overtime to apply AI to anything that strikes the fancy of the entrepreneurs. These outfits think about sizzle first, consequences maybe later. Much later.

#3 — “‘There are no clients saying to me that they want to spend less on tech,’ she said. ‘Most CEOs today would spend more if they could. The macro is a serious challenge. There are not a lot of green shoots around the world. CEOs are not saying 2024 is going to look great. And so that’s going to continue to be a drag on the pace of spending.’”

Arnold comment: Great opportunity to sell studies, advice, and recommendations when customers are “not saying 2024 is going to look great.” Hey, what’s “not going to look great” mean?

The obvious is — obvious.

Stephen E Arnold, December 26, 2023

AI Is Here to Help Blue Chip Consulting Firms: Consultants, Tighten Your Seat Belts

December 26, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read “Deloitte Is Looking at AI to Help Avoid Mass Layoffs in Future.” The write up explains that blue chip consulting firms (“the giants of the consulting world”) have been allowing many Type A’s to find their future elsewhere. (That’s consulting speak for “You are surplus,” “You are not suited for another team,” or “Hasta la vista.”) The message Deloitte is sending strikes me as, “We are leaders in using AI to improve the efficiency of our business. You (potential customers) can hire us to implement AI strategies and tactics to deliver the same turbo boost to your firm.” Deloitte is not the only “giant” moving to use AI to improve “efficiency.” The big folks and the mid-tier players are too. But let’s look at the Deloitte premise in what I see as a PR piece.

Hey, MSFT Copilot. Good enough. Your colleagues do have experience with blue-chip consulting firms which obviously assisted you.

The news story explains that Deloitte wants to use AI to help figure out who can be billed at startling hourly fees for people whose pegs don’t fit into the available round holes. But the real point of the story is that the “giants” are looking at smart software to boost productivity and margins. How? My answer is that management consulting firms are “experts” in management. Therefore, if smart software can make management better, faster, and cheaper, the “giants” have to use best practices.

And what’s a best practice in the context of the “giants” and the “avoid mass layoffs” angle? My answer is, “Money.”

The big dollar items for the “giants” are people and their associated costs, travel, and administrative tasks. Smart software can replace some people. That’s a no brainer. Dump some of the Type A’s who don’t sell big dollar work, winnow those who are not wedded to the “giant” firm, and move the administrivia to orchestrated processes with smart software watching and deciding 24×7.

Imagine the “giants” repackaging these “learnings” and then selling the information about the how-to and the payoffs to less informed outfits. Once that is firmly in mind, the money for the senior partners who are not on the “hasta la vista” list goes up. The “giants” are not altruistic. The firms are built from the ground up to generate cash, leverage connections, and provide services to CEOs with imposter syndrome and other issues.

My reaction to the story is:

- Yep, marketing. Some will do the Harvard Business Review journey; others will pump out white papers; many will give talks to “preferred” contacts; and others will just imitate what’s working for the “giants”

- Deloitte is redefining what expertise it will require to get hired by a “giant” like the accounting/consulting outfit

- The senior partners involved in this push are planning what to do with their bonuses.

Are the other “giants” on the same path? Yep. Imagine. Smart software enabled “giants” making decisions for the organizations able to pay for advice, insight, and the warm embrace of AI-enabled humanoids. What’s the probability of success? Close enough for horseshoes, and even bigger money for some blue chip professionals. Did Deloitte over hire during the pandemic?

Of course not. The tactic was part of the firm’s plan to put AI to a real world test. Sounds good. I cannot wait until the case studies become available.

Stephen E Arnold, December 26, 2023

A Former Yahooligan and Xoogler Offers Management Advice: Believe It or Not!

November 22, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read a remarkable interview / essay / news story called “Former Yahoo CEO Marissa Mayer Delivers Sharp-Elbowed Rebuke of OpenAI’s Broken Board.” Marissa Mayer was a Googler. She then became the Top Dog at Yahoo. Highlights of her tenure at Yahoo, according to Inc.com, included:

- Fostering a “superstar status” for herself

- Pointing a finger in a chastising way at remote workers

- Trying to obfuscate Yahooligan layoffs

- Making slow job cuts

- Lack of strategic focus (maybe Tumblr, Yahoo’s mobile strategy, the search service, perhaps?)

- Tactical missteps in diversifying Yahoo’s business (the Google disease in my opinion)

- Setting timetables and then ignoring, missing, or changing them

- Weird PR messages

- Using fear (and maybe uncertainty and doubt) as management methods.

The senior executives of a high technology company listen to a self-anointed management guru. One of the bosses allegedly said, “I thought Bain and McKinsey peddled a truckload of baloney. We have the entire factory in front of us.” Thanks, MSFT Copilot. Is Sam the AI-Man on duty?

So what’s this exemplary manager have to say? Let’s go to the original story:

“OpenAI investors (like @Microsoft) need to step up and demand that the governance weaknesses at @OpenAI be fixed,” Mayer wrote Sunday on X, formerly known as Twitter.

Was Microsoft asleep at the switch or simply operating within a Cloud of Unknowing? Fast-talking Satya Nadella was busy trying to make me think he was operating in a normal manner. Had he known something was afoot, is he equipped to deal with burning effigies as a business practice?

Ms. Mayer pointed out:

“The fact that Ilya now regrets just shows how broken and under advised they are/were,” Mayer wrote on social media. “They call them board deliberations because you are supposed to be deliberate.”

Brilliant! Was that deliberative process used to justify the purchase of Tumblr?

The Business Insider write up revealed an interesting nugget:

The Information reported that the former Yahoo CEO’s name had been tossed around by “people close to OpenAI” as a potential addition to the board…

Okay, a Xoogler and a Yahooligan in one package.

Stephen E Arnold, November 22, 2023

The AI Bandwagon: A Hoped for Lawyer Billing Bonanza

November 8, 2023

This essay is the work of a dumb humanoid. No smart software required.

The AI bandwagon is picking up speed. A dark smudge appears in the sky. What is it? An unidentified aerial phenomenon? No, it is a dense cloud of legal eagles. I read “U.S. Regulation of Artificial Intelligence: Presidential Executive Order Paves the Way for Future Action in the Private Sector.”

A legal eagle (also known as a lawyer, or the segment of humanity one of Shakespeare’s characters wanted to drown) is thrilled to read an official version of the US government’s AI statement. Look at what is coming from above. It is money from fees. Thanks, Microsoft Bing, you do understand how the legal profession finds pots of gold.

In this essay, which is free advice and possibly marketing hoo hah, I noted this paragraph:

While the true measure of the Order’s impact has yet to be felt, clearly federal agencies and executive offices are now required to devote rigorous analysis and attention to AI within their own operations, and to embark on focused rulemaking and regulation for businesses in the private sector. For the present, businesses that have or are considering implementation of AI programs should seek the advice of qualified counsel to ensure that AI usage is tailored to business objectives, closely monitored, and sufficiently flexible to change as laws evolve.

Absolutely. I would wager a 25 cent coin that the advice, unlike the free essay, will incur a fee. Some of those legal fees make the pittance I charge look like the cost of a chopped liver sandwich in a Manhattan deli.

Stephen E Arnold, November 8, 2023

What Type of Employee? What about Those Who Work at McKinsey & Co.?

October 5, 2023

Note: This essay is the work of a real and still-alive dinobaby. No smart software involved, just a dumb humanoid.

Yes, I read When McKinsey Comes to Town: The Hidden Influence of the World’s Most Powerful Consulting Firm by Walt Bogdanich and Michael Forsythe. No, I was not motivated to think happy thoughts about the estimable organization. Why? Oh, I suppose the image of the opioid addicts in southern Indiana, Kentucky, and West Virginia rained on the parade.

I did scan a “thought piece” written by McKinsey professionals, probably a PR person, certainly an attorney, and possibly a partner who owned the project. The essay’s title is “McKinsey Just Dropped a Report on the 6 Employee Archetypes. Good News for Some Organizations, Terrible for Others. What Type of Dis-Engaged Employee Is On Your Team?” The title was the tip off that a PR person was involved. My hunch is that the McKinsey professionals want to generate some bookings for employee assessment studies. What better way than converting some proprietary McKinsey information into a white paper and then getting the white paper in front of an editor at an “influence center”? The answer to the question in the title, obviously, is hire McKinsey and the firm will tell you whom to cull.

Inc. converts the white paper into an article and McKinsey defines the six types of employees. From my point of view, this is standard blue chip consulting information production. However, there was one comment which caught my attention:

Approximately 4 percent of employees fall into the “Thriving Stars” category, represent top talent that brings exceptional value to the organization. These individuals maintain high levels of well-being and performance and create a positive impact on their teams. However, they are at risk of burnout due to high workloads.

Now what type of company hires these four percenters? Why, blue chip consulting companies like McKinsey, Bain, BCG, Booz Allen, etc. And what are the contributions these firms’ professionals make to society? Jump back to When McKinsey Comes to Town. One of the highlights of that book is the discussion of the consulting firm’s role in the opioid epidemic.

That’s an achievement of which to be proud. Oh, and the other five types of employees. Don’t bother to apply for a job at the blue chip outfits.

Stephen E Arnold, October 4, 2023

A Pivotal Moment in Management Consulting

October 4, 2023

The practice of selling “management consulting” has undergone a handful of tectonic shifts since Edwin Booz convinced Sears, the “department” store outfit, to hire him. (Yes, I am aware I am cherry picking, but this is a blog post, not a for fee report.)

The first was the ability of a consultant to move around quickly. Trains and Chicago became synonymous with management razzle dazzle. The center of gravity shifted to New York City because consulting thrives where there are big companies. The second was the institutionalization of the MBA as a certification of a 23 year old’s expertise. The third was the “invention” of former consultants for hire. The innovator in this business was Gerson Lehrman Group, but there are many imitators who hire former blue-chip types and resell them without the fee baggage of the McKinsey & Co. type outfits. And now the fourth earthquake is rattling carpetland and the windows in corner offices (even if these offices are in an expensive home in Wyoming.)

A centaur and a cyborg working on a client report. Thanks, MidJourney. Nice hair style on the cyborg.

Now we have the era of smart software or what I prefer to call the era of hyperbole about semi-smart semi-automated systems which output “information.” I noted this write up from the estimable Harvard University. Yes, this is the outfit who appointed an expert in ethics to head up the outfit’s ethics department. The same ethics expert allegedly made up data for peer reviewed publications. Yep, that Harvard University.

“Navigating the Jagged Technological Frontier” is an essay crafted by the D^3 faculty. None of this single author stuff in an institution where fabrication of research is a stand up comic joke. “What’s the most terrifying word for a Harvard ethicist?” Give up? “Ethics.” Ho ho ho.

What are the highlights from this esteemed group of researchers, thinkers, and analysts? I quote:

- For tasks within the AI frontier, ChatGPT-4 significantly increased performance, boosting speed by over 25%, human-rated performance by over 40%, and task completion by over 12%.

- The study introduces the concept of a “jagged technological frontier,” where AI excels in some tasks but falls short in others.

- Two distinct patterns of AI use emerged: “Centaurs,” who divided and delegated tasks between themselves and the AI, and “Cyborgs,” who integrated their workflow with the AI.

Translation: We need fewer MBAs and old timers who are not able to maximize billability with smart or semi smart software. Keep in mind that some consultants view clients with disdain. If these folks were smart, they would not be relying on 20-somethings to bail them out and provide “wisdom.”

This dinobaby is glad he is old.

Stephen E Arnold, October 4, 2023

Gartner Hype Cycle: Some Pointed Criticism from Analytics India

September 5, 2023

Note: This essay is the work of a real and still-alive dinobaby. No smart software involved, just a dumb humanoid.

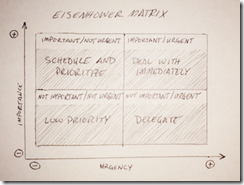

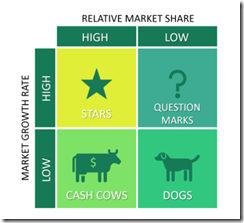

I read the Analytics India article “Gartner’s Hype Cycle is a Waste of Time.” The “hype cycle” is a graph designed to sell consulting services. My personal perception is that Gideon or a close compatriot talked about the Boston Consulting Group’s version of General Eisenhower’s two by two matrix. Here’s an example of

I want to credit Chris Adams’ article about the Eisenhower Matrix. You can find Mr. Adams’ write up at this link. I don’t know what General Eisenhower’s inspiration was, but the BCG adaptation was consulting marketing genius. Here’s an example of the BCG variant:

This illustration comes from Business to You at this link.

Before looking at a Gartner graph goodie, I want to point out that the BCG innovation was to make the icons relate to numbers. BCG pointed to the “dogs” icon and then showed the numbers like market share, product costs, etc. that convinced an executive in love with the status quo to consider rehoming the dogs or just putting a beloved pet down. In the lingo of one blue chip outfit, the dog could find its future elsewhere.

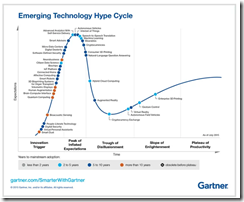

I did a Bing image search for Gartner hype cycle and found a cornucopia of outputs. Here’s one I selected because it looked better than some of the others:

If you want to view a readable version, navigate to this Medium post by Compassionate Technologies of which I have zero knowledge. (But do the words “technology” and “compassion” go together?)

The key point about the Gartner graphs is that they all look alike; that is, the curves don’t change, which is the point I guess. A technology begins at point 0,0 and moves up a hockey stick curve (maybe the increasing hype) and then appears to flatten out. I am confident that the Gartner experts are not gathering technology market and investment data and thinking in terms of linear regression, standard deviation, or a calculator on a mobile phone.

The client says, “Your team’s report strikes me as filled with unsupported assertions. My company cannot accept the analysis. We won’t pay the fee for this type of work.” Oh, oh. Thanks, MidJourney, close to my prompt but close only counts in horse shoes.

The difference is that in the BCG graph numbers are used to explain the “dogs,” “stars,” etc. The Gartner graph is a marketing vehicle. Those who have read my essays over the years know that I view the world with some baked in biases; for example, the BCG graph is great marketing which leads to substantive consulting. This is one characteristic of a blue chip consulting firm. The Gartner graph is subjective or impressionistic, a bit like a Van Gogh night sky. Sure, there are stars, but those puppies don’t look like swirlies to me. Thus, Gartner is to me a mid tier consulting firm. Some consumers of these types of marketing graphs use them to justify certain actions; for instance, selecting a particular type of software. When the software goes off the rails, the data-starved impressionistic chart leaves some hungry for more data. When another project comes along, the firm may seek a blue-chip outfit even if its work is more expensive.

Now back to the Analytics India article cited above.

The author makes a statement with which I agree:

The Gartner Hype Cycle is not science, but Gartner presents it as an established law.

Exactly. This is marketing, not the BCG analytics centric Eisenhower 2×2 matrix.

Here’s another passage from the write up (originally from Michael Mullany):

Many technologies simply fade away with time or die. According to Michael Mullany, an additional 20% of all technologies that were tracked for multiple years on the Hype Cycle became obsolete before reaching any kind of mainstream success. The Gartner Hype Cycle is not science, but Gartner presents it as an established natural law. Expressing similar sentiments, a user on Hacker News wrote, “Why do people think the Gartner Hype Cycle is a law of Physics?” when in fact, the Hype Cycle lacks empirical backing and fails to consider technologies that deviate from its prescribed path.

Yep, marketing.

Do I care? Not any more. When I was doing consulting to buy cheap fuel for my Pinto (the kind that would explode if struck from behind), I did care. The blue chip outfit at which I worked was numbers oriented. That was a good thing.

Stephen E Arnold, September 5, 2023

Blue Chip Consulting Firms: A Malfunctioning System?

August 14, 2023

Note: This essay is the work of a real and still-alive dinobaby. No smart software involved, just a dumb humanoid.

Blue chip consulting companies require engagements from organizations willing to pay for “expertise.” Generative software can provide an answer quickly. Instead of having many MBAs and people with “knowledge” of a technology provide senior partners with filtered information, software can do this work quickly and at lower cost.

A senior consultant looks at a malfunctioning machine. The information he used to recommend the system resulted in the problem which has turned into an unanticipated problem. Instead of a team of young MBAs with engineering degrees, he has access to smart software. Obviously someone will notice this problem. “Now what?” he asks himself.

“Consulting Firms Like Accenture Are Giving Recent Grads $25,000 Stipends to Push Back Their Start Dates” suggests that blue chip consulting firms are changing the approach to bringing new human resources on board. The write up reports:

Work has been slow at many top consulting firms over the past year. New hires straight out of business school are running errands and watching Netflix — to the tune of $175,000 a year — because there’s not enough work to go around. Others are being offered tens of thousands dollars to push their start dates back to next year.

Let’s assume that this report about “not enough work to go around” is accurate. What does this suggest to me, a person who has worked at a blue chip consulting firm and provided services to blue chip consulting firms in my work career?

- The pipeline of work to be done is not filled or overflowing. Without engagements, billing is difficult. Without engagements, scope changes are impossible. Perhaps the cost of blue chip consultants is too high?

- Are clients turning to lower-cost options for traditional management consulting services? Outfits like Gerson Lehrman Group sell access to experts at a lower cost per contact than a blue chip firm. Has the gig economy crimped the sales pipeline?

- Do ChatGPT-type services provide “good enough” information so companies can eliminate the cost and risk of hiring a blue chip consulting firm? (I think the outfits probably should be conservative in their use of ChatGPT-type outputs, but today the “good enough” approach is the norm.)

Net net: Blue chip consulting firms are in the influence game. The delayed “start work” information indicates that changes are taking place in the market which supports these firms. The firms themselves are making changes. The signal summarized by the cited article may be a glitch. On the other hand, perhaps there is a malfunction in the machinery of what has been a smoothly-running machine for more than a century?

Stephen E Arnold, August 14, 2023

MBAs Want to Win By Delivering Value. It Is Like an Abstraction, Right?

August 11, 2023

Note: This essay is the work of a real and still-alive dinobaby. No smart software involved, just a dumb humanoid.

Is it completely necessary to bring technology into every aspect of one’s business? Maybe, maybe not. But apparently some believe such company-wide “digital transformation” is essential for every organization these days. And, of course, there are consulting firms eager to help. One such outfit, Third Stage Consulting Group, has posted some advice in, “How to Measure Digital Transformation Results and Value Creation.” Value for whom? Third Stage, perhaps? Certainly, if one takes writer Eric Kimberling up on his invitation to contact him for a customized strategy session.

Kimberling asserts that, when embarking on a digital transformation, many companies fail to consider how they will keep the project on time, on budget, and in scope while minimizing operational disruption. Even he admits some jump onto the digital-transformation bandwagon without defining what they hope to gain:

“The most significant and crucial measure of success often goes overlooked by many organizations: the long-term business value derived from their digital transformation. Instead of focusing solely on basic reasons and justifications for undergoing the transformation, organizations should delve deeper into understanding and optimizing the long-term business value it can bring. For example, in the current phase of digital transformation, ERP [Enterprise Resource Planning] software vendors are pushing migrations to new Cloud Solutions. While this may be a viable long-term strategy, it should not be the sole justification for the transformation. Organizations need to define and quantify the expected business value and create a benefits realization plan to achieve it. … Considering the significant investments of time, money, and effort involved, organizations should strive to emerge from the transformation with substantial improvements and benefits.”

So companies should consider carefully what, if anything, they stand to gain by going through this process. Maybe some will find the answer is “nothing” or “not much,” saving themselves a lot of hassle and expense. But if one decides it is worth the trouble, rest assured many consultants are eager to guide you through. For a modest fee, of course.

Cynthia Murrell, August 11, 2023

The Future from the Masters of the Obvious

June 26, 2023

The last few years have seen many societal changes that, among other things, affect business operations. Gartner corrals these seismic shifts into six obvious considerations for its article, “6 Macro Factors Reshaping Business this Decade.” Contributor Jordan Turner writes:

“Executives will continue to grapple with a host of challenges during the 2020s, but from the maelstrom that was their first few years, new business opportunities will arise. ‘As we entered the 2020s, economies were already on the edge,’ says Mark Raskino, Distinguished VP Analyst at Gartner. ‘A decade-long boom, generated substantially from inexpensive finance and lower-cost energy, led to structural stresses such as highly leveraged debt, crumbling international alliances and bubble-like asset prices. We were overdue for a reckoning.’ Six macro factors that will reshape business this decade. The pandemic coincided with and catalyzed societal shifts, spurring a strategy reset for many industries. Executive leaders must acknowledge these six changes to reconsider how business will get done.”

Their list includes: the threat of recession, systemic mistrust, poor economic productivity, sustainability, a talent shortage, and emerging technologies. See the write-up for details on each. Not surprisingly, the emerging technologies list includes adaptive AI alongside the metaverse, platform engineering, sustainable technology and superapps. Unfortunately, the Gartner wizards omitted replacing consultants and analysts with smart software. That may be the most cost-effective transition for businesses yet the most detrimental to workers. We wonder why they left it out.

And grapple? Yes, grapple. I wonder if Gartner will have a special presentation and a conference about these. Attendees can grapple. Like Musk and Zuck?

Cynthia Murrell, June 26, 2023