PicRights in the News: Happy Holidays

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

With the legal eagles cackling in their nests about artificial intelligence software using content without permission, the notion of rights enforcement is picking up steam. One niche in rights enforcement is the business of using image search tools to locate pictures and drawings which appear in blogs or informational Web pages.

StackOverflow hosts a thread by a developer who linked to or used an image more than a decade ago. On November 23, 2023, the individual queried those reading the Q&A section about a problem: “Am I Liable for Re-Sharing Image Links Provided via the Stack Exchange API?”

The legal eagle jabs with his beak at the person who used an image, assuming it was open source. The legal eagle wants justice to matter. Thanks, MSFT Copilot. A couple of tries, still not on point, but good enough.

The explanation of the situation is interesting to me for three reasons: [a] The alleged infraction took place in 2010; [b] Stack Exchange is a community learning and sharing site which manifests some open sourciness; and [c] information about specific rights, content ownership, and data reached via links is not front and center.

Ignorance of the law, at least in some circles, is no excuse for a violation. The cited post reveals that an outfit doing business as PicRights wants money for the unlicensed use of an image or art in 2010 (at least that’s how I read the situation).

What’s interesting is the related data provided by those who are responding to the request for information; for example:

- A law firm identified as Higbee & Asso. is the apparent pointy end of the spear pointed at the alleged offender’s wallet

- A link to an article titled “Is PicRights a Scam? Are Higbee & Associates Emails a Scam?”

- A marketing type of write up called “How To Properly Deal With A PicRights Copyright Unlicensed Image Letter”.

How did the story end? Here’s what the person accused of infringing did:

According to Law I am liable. I have therefore decided to remove FAQoverflow completely, all 90k+ pages of it, and will negotiate with PicRights to pay them something less than the AU$970 that they are demanding.

What are the downsides to the loss of the FAQoverflow content? I don’t know. But I assume that the legal eagles, after gobbling one snack, are aloft and watching the AI companies. That’s where the big bucks will be. Legal eagles have a fondness for big bucks I believe.

Net net: Justice is served by some interesting birds, eagles and whatnot.

Stephen E Arnold, November 28, 2023

Maybe the OpenAI Chaos Ended Up as Grand Slam Marketing?

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Yep, Q Star. The next Big Thing. “About That OpenAI Breakthrough” explains:

OpenAI could in fact have a breakthrough that fundamentally changes the world. But “breakthroughs” rarely turn to be general to live up to initial rosy expectations. Often advances work in some contexts, not otherwise.

I agree, but I have a slightly different view of the matter. OpenAI’s chaotic management skills ended up as accidental great marketing. During the dust up and dust settlement, where were the other Big Dogs of the techno-feudal world? If you said, who? you are on the same page with me. OpenAI burned itself into the minds of those who sort of care about AI and the end of the world Terminator style.

In companies and organizations with “do gooder” tendencies, the marketing messages can be interpreted by some as a scientific fact. Nope. Thanks, MSFT Copilot. Are you infringing and expecting me to take the fall?

First, shotgun marriages can work out here in rural Kentucky. But more often than not, these unions become the seeds of Hatfield and McCoy-type Thanksgivings. “Grandpa, don’t shoot the turkey with birdshot. Granny broke a tooth last year.” Translating from Kentucky argot: Ideological divides produce craziness. The OpenAI mini-series is in its first season and there is more to come from the wacky innovators.

Second, any publicity is good publicity in Sillycon Valley. Who has given a thought to Google’s smart software? How did Microsoft’s stock perform during the five day mini-series? What is the new Board of Directors going to do to manage the bucking broncos of breakthroughs? Talk about dominating the “conversation.” Hats off to the fun crowd at OpenAI. Hey, Google, are you there?

Third, how is that regulation of smart software coming along? I think one unit of the US government is making noises about the biggest large language model ever. The EU folks continue to discuss, a skill essential to representing the interests of the group. Countries like China are chugging along, happily downloading code from open source repositories. So exactly what’s changed?

Net net: OpenAI has been a click champ. Good, bad, or indifferent, other AI outfits have some marketing to do in the wake of the blockbuster “Sam AI-Man: The Next Bigger Thing.” One way or another, Sam AI-Man dominates headlines, right Zuck, right Sundar?

Stephen E Arnold, November 28, 2023

Contraband Available On Regular Web: Just in Time for the Holidays

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Losing weight is very difficult and people often turn to surgery or prescription drugs to help them drop pounds. Ozempic, Wegovy, and Rybelsus are popular weight loss drugs. They are brand names for a drug named semaglutide. There currently aren’t any generic versions of semaglutide so people are turning to the Internet for “alternate” options. The BBC shares how “Weight Loss Injection Hype Fuels Online Black Market.”

Unlicensed and unregulated vendors sell semaglutide online without prescriptions, and it’s also available in beauty salons. Semaglutide is being sold in “diet kits” that contain needles and two vials. One contains a white powder and the other a liquid; the two must be mixed together before injection. Jordan Parke runs a company called The Lip King that sells the kits for £200. Parke’s kits advise people to inject themselves with a higher dosage than health experts would advise.

The BBC bought and tested unlicensed kits, discovering that some contained semaglutide and others didn’t have any of the drug. The ones that did contain semaglutide didn’t contain the full advertised dosage. Buyers are putting themselves in harm’s way when they use the unlicensed injections:

“Prof Barbara McGowan, a consultant endocrinologist who co-authored a Novo Nordisk-funded study which trialled semaglutide to treat obesity, says licensed medications – like Ozempic and Wegovy – go through "very strict" quality controls before they are approved for use.

She warns that buyers using semaglutide sourced outside the legal supply chain "could be injecting anything".

‘We don’t know what the excipients are – that is the other ingredients, which come with the medication, which could be potentially toxic and harmful, [or] cause an anaphylactic reaction, allergies and I guess at worse, significant health problems and perhaps even death,’ she says.”

The semaglutide pens aren’t subjected to the same quality control and wraparound care guidelines as the licensed drugs. It’s easy to buy the fake semaglutide but at least the Dark Web has a checks and balances system to ensure buyers get the real deal.

Whitney Grace, November 28, 2023

Governments Tip Toe As OpenAI Sprints: A Story of the Turtles and the Rabbits

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Reuters has reported that a pride of lion-hearted countries have crafted “joint guidelines” for systems with artificial intelligence. I am not exactly sure what “artificial intelligence” means, but I have confidence that a group of countries, officials, advisors, and consultants do.

The main point of the news story “US, Britain, Other Countries Ink Agreement to Make AI Secure by Design” is that someone in these countries knows what “secure by design” means. You may not have noticed that cyber breaches seem to be chugging right along. Maine managed to lose control of most of its residents’ personally identifiable information. I won’t mention issues associated with Progress Software, Microsoft systems, and LY Corp and its messaging app with a mere 400,000 users.

The turtle started but the rabbit reacted. Now which AI enthusiast will win the race down the corridor between supercomputers powering smart software? Thanks, MSFT Copilot. It took several tries, but you delivered a good enough image.

The Reuters story notes with the sincerity of an outfit focused on trust:

The agreement is the latest in a series of initiatives – few of which carry teeth – by governments around the world to shape the development of AI, whose weight is increasingly being felt in industry and society at large.

Yep, “teeth.”

At the same time, Sam AI-Man was moving forward with such mouth-watering initiatives as the AI app store and discussions to create AI-centric hardware. “I Guess We’ll Just Have to Trust This Guy, Huh?” asserts:

But it is clear who won (Altman) and which ideological vision (regular capitalism, instead of some earthy, restrained ideal of ethical capitalism) will carry the day. If Altman’s camp is right, then the makers of ChatGPT will innovate more and more until they’ve brought to light A.I. innovations we haven’t thought of yet.

As the signatories to the agreement without “teeth” and Sam AI-Man were doing their respective “thing,” I noted the AP story titled “Pentagon’s AI Initiatives Accelerate Hard Decisions on Lethal Autonomous Weapons.” That write up reported:

… the Pentagon is intent on fielding multiple thousands of relatively inexpensive, expendable AI-enabled autonomous vehicles by 2026 to keep pace with China.

To deal with the AI challenge, the AP story includes this paragraph:

The Pentagon’s portfolio boasts more than 800 AI-related unclassified projects, much still in testing. Typically, machine-learning and neural networks are helping humans gain insights and create efficiencies.

Will the signatories to the “secure by design” agreement act like tortoises or like zippy hares? I know which beastie I would bet on. Will military entities back the slow or the fast AI faction? I know upon which I would wager fifty cents.

Stephen E Arnold, November 27, 2023

Bogus Research Papers: They Are Here to Stay

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

“Science Is Littered with Zombie Studies. Here’s How to Stop Their Spread” is a Don Quixote-type write up. The good Don went to war against windmills. The windmills did not care. The people watching Don and his trusty sidekick did not care, and many found the sight of a person of stature trying to gore a mill somewhat amusing.

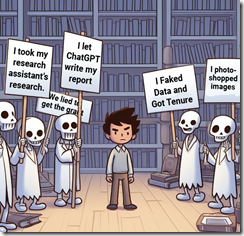

A young researcher meets the ghosts of fake, distorted, and bogus information. These artefacts of a loss of ethical fabric wrap themselves around the peer-reviewed research available in many libraries and in for-fee online databases. When was the last time you spotted a correction to a paper in an online database? Thanks, MSFT Copilot. After several tries I got ghosts in a library. Wow, that was a task.

Fake research, non-reproducible research, and intellectual cheating like the exemplars at Harvard’s ethics department and the carpetland of Stanford’s former president’s office seem commonplace today.

The Hill’s article states:

Just by citing a zombie publication, new research becomes infected: A single unreliable citation can threaten the reliability of the research that cites it, and that infection can cascade, spreading across hundreds of papers. A 2019 paper on childhood cancer, for example, cites 51 different retracted papers, making its research likely impossible to salvage. For the scientific record to be a record of the best available knowledge, we need to take a knowledge maintenance perspective on the scholarly literature.
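The cascade The Hill describes is, mechanically, a walk over a citation graph. Here is a minimal sketch, assuming a hypothetical dictionary that maps each paper to the papers it cites. The function name, the paper labels, and the data are invented for illustration and do not come from the article or from any real citation database.

```python
from collections import deque

def tainted_papers(cites, retracted):
    """Return every paper that cites retracted work, directly or through a chain.

    cites:     dict mapping a paper ID to the list of paper IDs it cites
               (hypothetical toy data, not a real database)
    retracted: set of paper IDs known to be retracted
    """
    # Invert the graph: for each paper, who cites it?
    cited_by = {}
    for paper, refs in cites.items():
        for ref in refs:
            cited_by.setdefault(ref, set()).add(paper)

    tainted = set()
    queue = deque(retracted)
    # Breadth-first walk outward from the retracted papers.
    while queue:
        current = queue.popleft()
        for citer in cited_by.get(current, ()):
            if citer not in tainted and citer not in retracted:
                tainted.add(citer)
                queue.append(citer)
    return tainted

# Toy example: B cites retracted paper A, C cites B, D cites unrelated E.
toy_cites = {"B": ["A"], "C": ["B"], "D": ["E"]}
infected = tainted_papers(toy_cites, {"A"})
```

In the toy data, B and C end up flagged and D does not. Whether a flagged paper is actually unreliable still requires a human to read it, which is the work part.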

The idea is interesting. It shares a bit of technical debt (the costs accrued by not fixing up older technology) and some of the GenX, GenY, and GenZ notions of “what’s right.” The article sidesteps a couple of thorny bushes on its way to the Promised Land of Integrity.

First, the academic paper is designed to accomplish several things. For one, it is a demonstration of one’s knowledge value. “Hey, my peers said this paper was fit to publish,” some authors say. Yeah, as a former peer reviewer, I want to tell you that harsh criticism is not what the professional publisher wanted. “These papers mean income. Don’t screw up the cash flow,” was the message I heard.

Second, the professional publisher certainly does not want to spend the resources (time and money) required to do crapola archeology. The focus of a professional publisher is to make money by publishing information to niche markets and charging as much money as possible for that information. Academic accuracy, ethics, and idealistic hand waving are not part of the Officers’ Meetings at some professional publisher off-sites. The focus is on cost reduction, market capture, and beating the well-known cousins in other companies who compete with one another. The goal is not the best life partner; the objective is revenue and profit margin.

Third, the academic bureaucracy has to keep alive the mechanisms for brain stratification. Therefore, publishing something “groundbreaking” in a blog or putting the information in a TikTok simply does not count. In fact, despite the brilliance of the information, the vehicle is not accepted. No modern institution building its global reputation and its financial services revenue wants to accept a person unless that individual has been published in a peer reviewed journal of note. Therefore, no one wants to look at data or a paper. The attention is on the paper’s appearing in the peer reviewed journal.

Who pays for this knowledge garbage? The answer is [a] libraries who have to “get” the journals departments identify as significant, [b] the US government which funds quite a bit of baloney and hocus pocus research via grants, [c] the authors of the paper who have to pay for proofs, corrections, and goodness knows what else before the paper is enshrined in a peer-reviewed journal.

Who fixes the baloney? No one. The content is either accepted as accurate and never verified or the researcher cites that which is perceived as important. Who wants to criticize one’s doctoral advisor?

News flash: The prevalence and amount of crapola is unlikely to change. In fact, with the easy availability of smart software, the volume of bad scholarly information is likely to increase. Only the disinformation entities working for nation states hostile to the US of A will outpace US academics in the generation of bogus information.

Net net: The wise researcher will need to verify a lot. But that’s work. So there we are.

Stephen E Arnold, November 27, 2023

Predicting the Weather: Another Stuffed Turkey from Google DeepMind?

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

By accident or design, the adolescents at OpenAI have dominated headlines for the pre-turkey, the turkey, and the post-turkey celebrations. In the midst of this surge in poohbah outputs, Xhitter xheets, and podcast posts, non-OpenAI news has been struggling for a toehold.

An important AI announcement from Google DeepMind stuns a small crowd. Were the attendees interested in predicting the weather or getting a free umbrella? Thanks, MSFT Copilot. Another good enough art work whose alleged copyright violations you want me to determine. How exactly am I to accomplish that? Use Google Bard?

What is another AI company to do?

A partial answer appears in “DeepMind AI Can Beat the Best Weather Forecasts. But There Is a Catch”. This is an article in the esteemed and rarely spoofed Nature Magazine. None of that Techmeme dominating blue link stuff. None of the influential technology reporters asserting, “I called it. I called it.” None of the eye wateringly dorky observations that OpenAI’s organizational structure was a problem. None of the “Satya Nadella learned about the ouster at the same time we did.” Nope. Nope. Nope.

What Nature provided is good, old-fashioned content marketing. The write up points out that DeepMind says that it has once again leapfrogged mere AI mortals. Like the quantum supremacy assertion, the Google can predict the weather. (My great grandmother made the same statement about The Farmer’s Almanac. She believed it. May she rest in peace.)

The estimable magazine, reporting in the midst of the OpenAI news-making turkeyfest, said:

To make a forecast, it uses real meteorological readings, taken from more than a million points around the planet at two given moments in time six hours apart, and predicts the weather six hours ahead. Those predictions can then be used as the inputs for another round, forecasting a further six hours into the future…. They [Googley DeepMind experts] say it beat the ECMWF’s “gold-standard” high-resolution forecast (HRES) by giving more accurate predictions on more than 90 per cent of tested data points. At some altitudes, this accuracy rose as high as 99.7 per cent.
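The feed-the-output-back-in loop Nature describes can be sketched in a few lines. This is a toy illustration only: the `toy_step` function is a made-up stand-in (simple trend extrapolation) for DeepMind's learned model, and the two-value "grid" is invented. Only the shape of the loop (two states six hours apart in, one state six hours ahead out, repeat) comes from the quoted passage.

```python
from typing import Callable, List

State = List[float]  # hypothetical: one reading per grid point

def rollout(step: Callable[[State, State], State],
            prev: State, curr: State, n_steps: int) -> List[State]:
    """Run a six-hour predictor repeatedly, feeding each forecast back in."""
    forecasts = []
    for _ in range(n_steps):
        nxt = step(prev, curr)   # predict six hours ahead from the last two states
        forecasts.append(nxt)
        prev, curr = curr, nxt   # slide the window forward six hours
    return forecasts

def toy_step(prev: State, curr: State) -> State:
    # Stand-in for the real model: continue each point's most recent trend.
    return [c + (c - p) for p, c in zip(prev, curr)]

# 40 steps of six hours each covers 240 hours, or 10 days.
forecasts = rollout(toy_step, [10.0, 20.0], [11.0, 19.0], n_steps=40)
```

One consequence of this design is that any error in an early step becomes input to every later step, so the quality of the single-step predictor matters a great deal.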

No more ruined picnics. No weddings with bridesmaids’ shoes covered in mud. No more visibly weeping mothers because everyone is wet.

But Nature, to the disappointment of some PR professionals, presents an alternative viewpoint. What a bummer after all those meetings and presentations:

“You can have the best forecast model in the world, but if the public don’t trust you, and don’t act, then what’s the point?” [A statement attributed to Ian Renfrew at the University of East Anglia]

Several thoughts are in order:

- Didn’t IBM make a big deal about its super duper weather capabilities? It bought the Weather Channel too. But when the weather and customers got soaked, I think IBM folded its umbrella. Will Google have to emulate IBM’s behavior? I mean “the weather.” (Note: The owner of the IBM Weather Company is an outfit once alleged to have owned or been involved with the NSO Group.)

- Google appears to have convinced Nature to announce the quantum supremacy type breakthrough only to find that a professor from someplace called East Anglia did not purchase the rubber boots from the Google online store.

- The current edition of The Old Farmer’s Almanac is about US$9.00 on Amazon. That predictive marvel was endorsed by Gussie Arnold, born about 1835. We are not sure because my father’s records of the Arnold family were soaked by a sudden thunderstorm.

Just keep in mind that Google’s system can predict the weather 10 days ahead. Another quantum PR moment from the Google which was drowned out in the OpenAI tsunami.

Stephen E Arnold, November 27, 2023

Microsoft, the Techno-Lord: Avoid My Galloping Steed, Please

November 27, 2023

This essay is the work of a dumb dinobaby. No smart software required.

The Merriam-Webster.com online site defines “responsibility” this way:

re·spon·si·bil·i·ty

1 : the quality or state of being responsible: such as

: moral, legal, or mental accountability

: RELIABILITY, TRUSTWORTHINESS

: something for which one is responsible

The online sector has a clever spin on responsibility; that is, in my opinion, the companies have none. Google wants people who use its online tools and post content created with those tools to make sure that what the Google system outputs does not violate any applicable rules, regulations, or laws.

In a traditional fox hunt, the hunters had the “right” to pursue the animal. If a farmer’s daughter were in the way, it was the farmer’s responsibility to keep the silly girl out of the horse’s path. That will teach them to respect their betters I assume. Thanks, MSFT Copilot. I know you would not put me in legal jeopardy, would you? Now what are the laws pertaining to copyright for a cartoon in Armenia? Darn, I have to know that, don’t I.

Such a crafty way of defining itself as the mere creator of software machines has inspired Microsoft to follow a similar path. The idea is that anyone using Microsoft products, solutions, and services is “responsible” to comply with applicable rules, regulations, and laws.

Tidy. Logical. Complete. Just like a nifty algebra identity.

Microsoft believes they have no liability if an AI, like Copilot, is used to infringe on copyrighted material.

The write up includes this passage:

So this all comes down to, according to Microsoft, that it is providing a tool, and it is up to users to use that tool within the law. Microsoft says that it is taking steps to prevent the infringement of copyright by Copilot and its other AI products, however, Microsoft doesn’t believe it should be held legally responsible for the actions of end users.

The write up (with no Jimmy Kimmel spin) includes this statement, allegedly from someone at Microsoft:

Microsoft is willing to work with artists, authors, and other content creators to understand concerns and explore possible solutions. We have adopted and will continue to adopt various tools, policies, and filters designed to mitigate the risk of infringing outputs, often in direct response to the feedback of creators. This impact may be independent of whether copyrighted works were used to train a model, or the outputs are similar to existing works. We are also open to exploring ways to support the creative community to ensure that the arts remain vibrant in the future.

From my drafty office in rural Kentucky, the refusal to accept responsibility for its business actions, its products, its policies to push tools and services on users, and the outputs of its cloudy system is quite clever. Exactly how will a user of products pushed at users like Edge and its smart features prevent a user from acquiring from a smart Microsoft system something that violates an applicable rule, regulation, or law?

But legal and business cleverness is the norm for the techno-feudalists. Let the serfs deal with the body of the child killed when the barons chase a fox through a small leasehold. I can hear the brave royals saying, “It’s your fault. Your daughter was in the way. No, I don’t care that she was using the free Microsoft training materials to learn how to use our smart software.”

Yep, responsible. The death of the hypothetical child frees up another space in the training course.

Stephen E Arnold, November 27, 2023

Amazon Alexa Factoids: A Look Behind the Storefront Curtains

November 24, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Hey, Amazon admirers, I noted some interesting (allegedly accurate) factoids in “Amazon Alexa to Lose $10 Billion This Year.” No, I was not pulled in by the interesting puddle of red ink.

Alexa loves to sidestep certain questions. Thanks, MSFT Copilot. Nice work even though you are making life difficult for Google’s senior management today.

Let me share four items which I thought interesting. Please, navigate to the original write up to get the full monte. (I support the tailor selling civvies, not the card game.)

- “Just about every plan to monetize Alexa has failed, with one former employee calling Alexa ‘a colossal failure of imagination,’ and ‘a wasted opportunity.’” [I noted the word colossal.]

- “Amazon can’t make money from Alexa telling you the weather”

- “I worked in the Amazon Alexa division. The level of incompetence coupled with arrogance was astounding.”

- “FAANG has gotten so large that the stock bump that comes from narrative outpaces actual revenue from working products.”

Now how about the management philosophy behind these allegedly accurate statements? It sounds like the consequences of doing high school science club field trip planning. Not sure how those precepts work? Just do a bit of reading about the OpenAI – Sam AI-Man hootenanny.

Stephen E Arnold, November 24, 2023

Speeding Up and Simplifying Deep Fake Production

November 24, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Remember the good old days when creating a deep fake required having multiple photographs, maybe a video clip, and minutes of audio? Forget those requirements. To whip up a deep fake, one needs only a short audio clip and a single picture of the person.

The pace of innovation in deep fake production is speeding along. Bad actors will find it easier than ever to produce interesting videos for vulnerable grandparents worldwide. Thanks, MidJourney. It was a struggle but you produced a race scene that is good enough, the modern benchmark for excellence.

Researchers at Nanyang Technological University have blasted through the old-school requirements. The team’s software can generate realistic videos that show facial expressions and head movements. The system is called DIRFA, a tasty acronym for Diverse yet Realistic Facial Animations. One notable achievement of the researchers is that the video is produced in 3D.

The report “Realistic Talking Faces Created from Only an Audio Clip and a Person’s Photo” includes more details about the system and links to demonstration videos. If the story is not available, you may be able to see the video on YouTube at this link.

Stephen E Arnold, November 24, 2023

Facial Recognition: A Bit of Bias Perhaps?

November 24, 2023

This essay is the work of a dumb dinobaby. No smart software required.

It’s a running gag in the tech industry that AI algorithms and related advancements are “racist.” Motion sensors can’t recognize dark pigmented skin. Photo recognition software misidentifies black and other ethnicities as primates. AI-trained algorithms are also biased against ethnic minorities and women in the financial, business, and other industries. AI is “racist” because it’s trained on data sets heavy in white and male information.

Ars Technica shares another story about biased AI: “People Think White AI-Generated Faces Are More Real Than Actual Photos, Study Says.” The journal Psychological Science published a peer-reviewed study, “AI Hyperrealism: Why AI Faces Are Perceived As More Real Than Human Ones.” The study discovered that faces created with three-year-old AI technology were judged to be more real than real ones. Predominantly, AI-generated faces of white people were perceived as the most realistic.

The study surveyed 124 white adults who were shown a mixture of 100 AI-generated images and 100 real ones. Participants identified 66% of the AI images as human, while only 51% of the real faces were identified as real. Real and AI images of ethnic minorities with high amounts of melanin were viewed as real 51% of the time. The study also discovered that participants who made the most mistakes were also the most confident, a clear indicator of the Dunning-Kruger effect.

The researchers conducted a second study with 610 participants and learned:

“The analysis of participants’ responses suggested that factors like greater proportionality, familiarity, and less memorability led to the mistaken belief that AI faces were human. Basically, the researchers suggest that the attractiveness and "averageness" of AI-generated faces made them seem more real to the study participants, while the large variety of proportions in actual faces seemed unreal.

Interestingly, while humans struggled to differentiate between real and AI-generated faces, the researchers developed a machine-learning system capable of detecting the correct answer 94 percent of the time.”

The study could be swung in the typical “racist” direction that AI will perpetuate social biases. The answer is simple and should be invested in: create better data sets to train AI algorithms.

Whitney Grace, November 24, 2023