IBM: AI Marketing Like It Was 2004

January 5, 2024

This essay is the work of a dumb dinobaby. No smart software required. Note: The word “dinobaby” is — I have heard — a coinage of IBM. The meaning is an old employee who is no longer wanted due to salary, health care costs, and grousing about how the “new” IBM is not the “old” IBM. I am a proud user of the term, and I want to switch my tail to the person who whipped up the word.

What’s the future of AI? The answer depends on whom one asks. IBM, however, wants to give it the old college try and answer the question so people forget about the Era of Watson. There’s a new Watson in town, or at least, there is a new Watson at the old IBM url. IBM has an interesting cluster of information on its Web site. The heading is “Forward Thinking: Experts Reveal What’s Next for AI.”

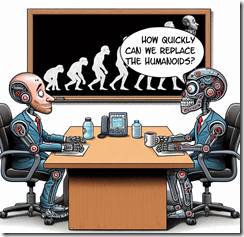

IBM crows that it “spoke with 30 artificial intelligence visionaries to learn what it will take to push the technology to the next level.” Five of these interviews are now available on the IBM Web site. My hunch is that IBM will post new interviews, hit the news release button, post some links on social media, and then hit the “Reply” button.

Can IBM ignite excitement and capture the revenues it wants from artificial intelligence? That’s a good question, and I want to ask the expert in the cartoon for an answer. Unfortunately only customers and their decisions matter for AI thought leaders unless the intended audience is start ups, professors, and employees. Thanks, MSFT Copilot Bing thing. Good enough.

As I read the interviews, I thought about the challenge of predicting where smart software would go as it moved toward its “what’s next.” Here’s a mini-glimpse of what the IBM visionaries have to offer. Note that I asked Microsoft’s smart software to create an image capturing the expert sitting in an office surrounded by memorabilia.

Kevin Kelly (the author of What Technology Wants) says: “Throughout the business world, every company these days is basically in the data business and they’re going to need AI to civilize and digest big data and make sense out of it—big data without AI is a big headache.” My thought is that IBM is going to make clear that it can help companies with deep pockets tackle these big data and AI them. Does AI want something, or do those trying to generate revenue want something?

Mark Sagar (creator of BabyX) says: “We have had an exponential rise in the amount of video posted online through social media, etc. The increased use of video analysis in conjunction with contextual analysis will end up being an extremely important learning resource for recognizing all kinds of aspects of behavior and situations. This will have wide ranging social impact from security to training to more general knowledge for machines.” Maybe IBM will TikTok itself?

Chieko Asakawa (an unsighted IBM professional) says: “We use machine learning to teach the system to leverage sensors in smartphones as well as Bluetooth radio waves from beacons to determine your location. To provide detailed information that the visually impaired need to explore the real world, beacons have to be placed between every 5 to 10 meters. These can be built into building structures pretty easily today.” I wonder if the technology has surveillance utility?

Yoshua Bengio (seller of an AI company to ServiceNow) says: “AI will allow for much more personalized medicine and bring a revolution in the use of large medical datasets.” IBM appears to have forgotten about its Houston medical adventure and Mr. Bengio found it not worth mentioning I assume.

Margaret Boden (a former Harvard professor without much of a connection to Harvard’s made up data and administrative turmoil) says: “Right now, many of us come at AI from within our own silos and that’s holding us back.” Aren’t silos necessary for security, protecting intellectual property, and getting tenure? Probably the “silobreaking” will become a reality.

Several observations:

- IBM is clearly trying hard to market itself as a thought leader in artificial intelligence. The Jeopardy play did not warrant a replay.

- IBM is spending money to position itself as a Big Dog pulling the AI sleigh. The MIT tie up and this AI Web extravaganza are evidence that IBM is [a] afraid of flubbing again, [b] going to market its way to importance, [c] trying to get traction as outfits like OpenAI, Mistral, and others capture attention in the US and Europe.

- IBM’s ability to generate awareness of its thought leadership in AI underscores one of the challenges the firm faces in 2024.

Net net: The company that coined the term “dinobaby” has its work cut out for itself in my opinion. Is Jeopardy looking like a channel again?

Stephen E Arnold, January 5, 2024

Meta Never Met a Kid Data Set It Did Not Find Useful

January 5, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Adults are ripe targets for data exploitation in modern capitalism. While adults fight for their online privacy, most have rolled over and accepted the inevitable consumer Big Brother. When big tech companies go after monetizing kids, however, that’s when adults fight back like rabid bears. Engadget writes about how Meta is fighting against the federal government about kids’ data: “Meta Sues FTC To Block New Restrictions On Monetizing Kids’ Data.”

Meta is taking the FTC to court to prevent the agency from reopening a landmark 2020 privacy case that ended in a $5 billion settlement, and to allow the company to keep monetizing kids’ data on its apps. Meta is suing because a federal judge ruled that the FTC can expand the settlement with new, more stringent rules about how Meta is allowed to conduct business.

Meta claims the FTC is out for a power grab and is acting unconstitutionally, while the FTC says Meta has consistently violated the 2020 settlement and the Children’s Online Privacy Protection Act. The FTC wants its new rules to limit Meta’s facial recognition usage and initiate a moratorium on new products and services until a third party audits them for privacy compliance.

Meta is not a huge fan of the US Federal Trade Commission:

“The FTC has been a consistent thorn in Meta’s side, as the agency tried to stop the company’s acquisition of VR software developer Within on the grounds that the deal would deter "future innovation and competitive rivalry." The agency dropped this bid after a series of legal setbacks. It also opened up an investigation into the company’s VR arm, accusing Meta of anti-competitive behavior.”

The FTC is doing what government agencies are supposed to do: protect citizens from greedy and harmful practices like those of big business. The FTC can enforce laws and force big businesses to pay fines, put leaders in jail, or even shut them down. But regulators have spent decades ramping up to take meaningful action. The result? The thrashing over kiddie data.

Whitney Grace, January 5, 2024

YouTube: Personal Views, Policies, Historical Information, and Information Shaping about Statues

January 4, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I have never been one to tour ancient sites. Machu Picchu? Meh. The weird Roman temple in Nimes? When’s lunch? The bourbon trail? You must be kidding me! I have a vivid memory of visiting the US Department of Justice building for a meeting, walking through the Hall of Justice, and seeing Lady Justice covered up. I heard that the drapery cost US$8,000. I did not laugh, nor did I make any comments about cover ups at that DoJ meeting or subsequent meetings. What a hoot! Other officials have covered up statues and possibly other disturbing things.

I recall the Deputy Administrator who escorted me and my colleague to a meeting remarking, “Yeah, Mr. Ashcroft has some deeply held beliefs.” Yep, personal beliefs, propriety, not offending those entering a US government facility, and a desire to preserve certain cherished values. I got it. And I still get it. Hey, who wants to lose a government project because some sculpture artist type did not put clothes on a stone statue?

Are large technology firms in a position to control, shape, propagandize, and weaponize information? If the answer is, “Sure”, then users are little more than puppets, right? Thanks, MSFT Copilot Bing thing. Good enough.

However, there are some people who do visit historical locations. Many of these individuals scrutinize the stone work, the carvings, and the difficulty of moving a 100 ton block from Point A (a quarry 50 miles away) to Point B (a lintel in the middle of nowhere). I am also ignorant of art because I skipped Art History in college. I am clueless about ancient history. (I took another useless subject like a math class.) And many of these individuals have deep-rooted beliefs about the “right way” to present information in the form of stone carvings.

Now let’s consider a YouTuber who shoots videos in temples in southeast Asia. The individual works hard to find examples of deep meanings in the carvings beyond what the established sacred texts present. His hobby horse, as I understand the YouTuber, is that ancient aliens, fantastical machines, and amazing constructions are what many carvings are “about.” Obviously if one embraces what might be received wisdom about ancient texts from Indian and adjacent / close countries, the presentation of statues with disturbing images and even more troubling commentary is a problem. I think this is the same type of problem that a naked statue in the US Department of Justice posed.

The YouTuber allegedly is Praveen Mohan, and his most recent video is “YouTube Will Delete Praveen Mohan Channel on January 31.” Mr. Mohan’s angle is to shoot a video of an ancient carving in a temple and suggest that the stone work conveys meanings orthogonal to the generally accepted story about giant temple carvings. From my point of view, I have zero clue if Mr. Mohan is on the money with his analyses or if he is like someone who thinks that Peruvian stone masons melted granite for Cusco’s walls. The point of the video is that taking pictures of historical sites and their carvings violates YouTube’s assorted rules, regulations, codes, mandates, and guidelines.

Mr. Mohan expresses the opinion that he will be banned, blocked, downchecked, punished, or made into a poster child for stone pornography or some similar punishment. He shows images which have been demonetized. He shows his “dashboard” with visual proof that he is in hot water with the Alphabet Google YouTube outfit. He shows proof that his videos are violating copyright. Okay. Maybe a reincarnated stone mason from ancient times has hired a lawyer, contacted Google from a quantum world, and frightened the YouTube wizards? I don’t know.

Several questions arose when my team and I discussed this interesting video addressing YouTube’s actions toward Mr. Mohan. Let me share several with you:

- Is the alleged intentional action against Mr. Mohan motivated by Alphabet Google YouTube managers with roots in southeast Asia? Maybe a country like India? Maybe?

- Is YouTube going after Mr. Mohan because his making videos about religious sites, icons, and architecture is indeed a violation of copyright? I thought India was reasonably aggressive in its enforcement of its laws? Has Alphabet Google YouTube decided to help out India and other countries with ancient art by southeast Asia countries’ ancient artisans?

- Has Mr. Mohan created a legal problem for YouTube and the company is taking action to shore up its legal arguments should the naked statue matter end up in court?

- Is Mr. Mohan’s assertion about directed punishment accurate?

Obviously there are many issues in play. Should one try to obtain more clarification from Alphabet Google YouTube? That’s a great idea. Mr. Mohan may pursue it. However, will Google’s YouTube or the Alphabet senior management provide clarification about policies?

I will not hold my breath. But those statues covered up in the US Department of Justice reflected one person’s perception of what was acceptable. That’s something I won’t forget.

Stephen E Arnold, January 4, 2024

Does Amazon Do Questionable Stuff? Sponsored Listings? Hmmm.

January 4, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Amazon, eBay, and other selling platforms allow vendors to buy sponsored ads or listings. Sponsored ads or listings promote products and services to the top of search results. It’s similar to how Google sells ads. Unfortunately Google’s search results are polluted with more sponsored ads than organic results. Sponsored ads might not be a wise investment. Pluralistic explains that sponsored ads are really a huge waste of money: “Sponsored Listings Are A Ripoff For Sellers.”

Amazon relies on a payola sponsored ad system, where sellers bid to be the top-ranked in listings even if their products don’t apply to a search query. Payola systems are illegal but Amazon makes $31 billion annually from its system. The problem is that the $31 billion is taken from Amazon sellers who pay it in fees for the privilege to sell on the platform. Sellers then recoup that money from consumers and prices are raised across all the markets. Amazon controls pricing on the Internet.

Another huge part of a seller’s budget is for Amazon advertising. If sellers don’t buy ads in searches that correspond to their products, they’re kicked off the first page. The Amazon payola system only benefits the company and sellers who pay into the payola. Three business-school researchers, Vibhanshu Abhishek, Jiaqi Shi, and Mingyu Joo, studied the harmful effects of payolas:

“After doing a lot of impressive quantitative work, the authors conclude that for good sellers, showing up as a sponsored listing makes buyers trust their products less than if they floated to the top of the results "organically." This means that buying an ad makes your product less attractive than not buying an ad. The exception is sellers who have bad products – products that wouldn’t rise to the top of the results on their own merits. The study finds that if you buy your mediocre product’s way to the top of the results, buyers trust it more than they would if they found it buried deep on page eleventy-million, to which its poor reviews, quality or price would normally banish it. But of course, if you’re one of those good sellers, you can’t simply opt not to buy an ad, even though seeing it with the little "AD" marker in the thumbnail makes your product less attractive to shoppers. If you don’t pay the danegeld, your product will be pushed down by the inferior products whose sellers are only too happy to pay ransom.”

It’s getting harder to compete and make a living on online selling platforms. It would be great if Amazon sided with the indy sellers and quit the payola system. That will never happen.

Whitney Grace, January 4, 2024

23AndMe: The Genetics of Finger Pointing

January 4, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Well, well, another Silicon Valley outfit with Google-type DNA relies on its hard-wired instincts. What’s the situation this time? “23andMe Tells Victims It’s Their Fault That Their Data Was Breached” relates a now well-known game plan approach to security problems. What’s the angle? Here’s what the story in TechCrunch asserts:

Some rhetorical tactics are exemplified by children who blame one another for knocking the birthday cake off the counter. Instinct for self preservation creates these all-too-familiar situations. Are Silicon Valley-type outfits childish? Thanks, MSFT Copilot Bing thing. I had to change my image request three times to avoid the negative filter for arguing children. Your approach is good enough.

Facing more than 30 lawsuits from victims of its massive data breach, 23andMe is now deflecting the blame to the victims themselves in an attempt to absolve itself from any responsibility…

I particularly liked this statement from the TechCrunch article:

And the consequences? The US legal processes will determine what’s going to happen.

After disclosing the breach, 23andMe reset all customer passwords, and then required all customers to use multi-factor authentication, which was only optional before the breach. In an attempt to pre-empt the inevitable class action lawsuits and mass arbitration claims, 23andMe changed its terms of service to make it more difficult for victims to band together when filing a legal claim against the company. Lawyers with experience representing data breach victims told TechCrunch that the changes were “cynical,” “self-serving” and “a desperate attempt” to protect itself and deter customers from going after the company.

Several observations:

- I particularly like the angle that cyber security is not the responsibility of the commercial enterprise. The customers are responsible.

- The lack of consequences for corporate behaviors creates opportunities for some outfits to do some very fancy dancing. Since a company is a “Person,” Maslow’s hierarchy of needs kicks in.

- The genetics of some firms function with little regard for what some might call social responsibility.

The result is the situation which not even the original creative team for the 1980 film Airplane! (Flying High!) could have concocted.

Stephen E Arnold, January 4, 2024

No Digital Map and Doomed to Wander Clueless

January 4, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I am not sure if my memory is correct. I believe that some people have found themselves in a pickle when the world’s largest online advertising outfit produces “free” maps. The idea is that cost cutting, indifferent Googlers, and high school science club management methods cause a “free” map to provide information which may not match reality. I do recall that on the way to the home of the fellow responsible for WordStar (a word processing program), an online search system, and other gems from the early days of personal computers, Google Maps suggested I drive off the highway, over a cliff, and into the San Francisco Bay. I did not follow the directions from the “do no evil” outfit. I drove down the road, spotted a human, and asked for directions. But some people do not follow my method.

No digital maps. No clue. Thanks, MSFT Copilot Bing thing.

“Quairading Shire Erects Signs Telling Travelers to Ignore GPS Maps Including Google” includes a great photo of what appears to be a large sign. The sign says:

Your GPS Is Wrong. This is Not the Best Route to Perth. Turn Around and Travel via the Quairading-York Road.

That’s clear and good advice. As I recall, I learned on one of my visits to Australia that most insects, reptiles, mammals, and fish can kill. Even the red kangaroo can become a problem, which is, I assume, why some in Australia gun them down. Stay on the highway and in your car. That’s my learning from my first visit.

The write up says:

The issue has frustrated the Quairading shire for the past eight years.

Hey, the Google folks are busy. There are law suits, the Red Alert thing, and the need to find a team which is going nowhere fast like the dual Alphabet online map services, Maps and Waze.

Net net: Buy a printed book of road maps and ask for directions. The problem is that those under the age of 25 may not be able to read or do what’s called orienteering. The French Foreign Legion runs a thorough program, and it is available for those who have not committed murder, can pass a physical test, and enjoy meeting people from other countries. Oh, legionnaires do not need a mobile phone to find their way to a target or the local pizza joint.

Stephen E Arnold, January 4, 2024

Exploit Lets Hackers Into Google Accounts, PCs Even After Changing Passwords

January 3, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Google must be so pleased. The Register reports, “Google Password Resets Not Enough to Stop these Info-Stealing Malware Strains.” In October a hacker going by PRISMA bragged they had found a zero-day exploit that allowed them to log into Google users’ accounts even after the user had logged off. They could then use the exploit to generate a new session token and go after data in the victim’s email and cloud storage. It was not an empty boast, and it gets worse. Malware developers have since used the hack to create “info stealers” that infiltrate victims’ local data. (Mostly Windows users.) Yes, local data. Yikes. Reporter Connor Jones writes:

“The total number of known malware families that abuse the vulnerability stands at six, including Lumma and Rhadamanthys, while Eternity Stealer is also working on an update to release in the near future. They’re called info stealers because once they’re running on some poor sap’s computer, they go to work finding sensitive information – such as remote desktop credentials, website cookies, and cryptowallets – on the local host and leaking them to remote servers run by miscreants. Eggheads at CloudSEK say they found the root of the Google account exploit to be in the undocumented Google OAuth endpoint ‘MultiLogin.’ The exploit revolves around stealing victims’ session tokens. That is to say, malware first infects a person’s PC – typically via a malicious spam or a dodgy download, etc – and then scours the machine for, among other things, web browser session cookies that can be used to log into accounts. Those session tokens are then exfiltrated to the malware’s operators to enter and hijack those accounts. It turns out that these tokens can still be used to login even if the user realizes they’ve been compromised and change their Google password.”

So what are Google users to do when changing passwords is not enough to circumvent this hack? The company insists stolen sessions can be thwarted by signing out of all Google sessions on all devices. It is, admittedly, kind of a pain but worth the effort to protect the data on one’s local drives. Perhaps the company will soon plug this leak so we can go back to checking our Gmail throughout the day without logging in every time. Google promises to keep us updated. I love promises.
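For readers who wonder why a password reset does not lock the bad actor out, here is a minimal toy sketch of the general idea. It assumes a made-up in-memory service (the `ToyAccountService` class and all of its method names are hypothetical). It is not Google's code and it does not model the MultiLogin endpoint the write up mentions. It only illustrates that a session token, once issued, can live independently of the password until someone explicitly revokes it.

```python
import secrets


class ToyAccountService:
    """Hypothetical in-memory account service; an illustration, not Google's design."""

    def __init__(self):
        self.passwords = {}  # user -> password (plain text only because this is a toy)
        self.sessions = {}   # session token -> user

    def register(self, user, password):
        self.passwords[user] = password

    def login(self, user, password):
        if self.passwords.get(user) != password:
            raise PermissionError("bad credentials")
        token = secrets.token_hex(16)
        self.sessions[token] = user
        return token

    def whoami(self, token):
        # Returns the user for a live token, or None if the token is unknown or revoked.
        return self.sessions.get(token)

    def change_password(self, user, new_password):
        # Assumption for this sketch: a password change leaves existing sessions alone.
        self.passwords[user] = new_password

    def sign_out_everywhere(self, user):
        # Revokes every session token that belongs to the user.
        self.sessions = {t: u for t, u in self.sessions.items() if u != user}


service = ToyAccountService()
service.register("alice", "old-password")
stolen = service.login("alice", "old-password")  # imagine malware copied this token

service.change_password("alice", "new-password")
token_works_after_reset = service.whoami(stolen) == "alice"

service.sign_out_everywhere("alice")
token_works_after_signout = service.whoami(stolen) is not None

print(token_works_after_reset, token_works_after_signout)
```

In this toy, the stolen token keeps working after the password change and stops working only after the sign-out-everywhere step, which lines up with the advice to sign out of all sessions on all devices.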

Cynthia Murrell, January 3, 2024

Forget Being Powerless. Get in the Pseudo-Avatar Business Now

January 3, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read “A New Kind of AI Copy Can Fully Replicate Famous People. The Law Is Powerless.” Okay, okay. The law is powerless because companies need to generate zing, money, and growth. What caught my attention in the essay was its failure to look down the road and around the corner of a dead man’s curve. Oops. Sorry, dead humanoids curve.

The write up states that a high profile psychologist had a student who shoved the distinguished professor’s outputs into smart software. With a little deep fakery, the former student had a digital replica of the humanoid. The write up states:

Over two months, by feeding every word Seligman had ever written into cutting-edge AI software, he and his team had built an eerily accurate version of Seligman himself — a talking chatbot whose answers drew deeply from Seligman’s ideas, whose prose sounded like a folksier version of Seligman’s own speech, and whose wisdom anyone could access. Impressed, Seligman circulated the chatbot to his closest friends and family to check whether the AI actually dispensed advice as well as he did. “I gave it to my wife and she was blown away by it,” Seligman said.

The article wanders off into the problems of regulations, dodges assorted ethical issues, and ignores copyright. I want to call attention to the road ahead just like the John Doe n friend of Jeffrey Epstein. I will try to peer around the dead humanoid’s curve. Buckle up. If I hit a tree, I would not want you to be injured when my Ford Pinto experiences an unfortunate fuel tank event.

Here’s an illustration for my point:

The future is not if, the future is how quickly, which is a quote from my presentation in October 2023 to some attendees at the Massachusetts and New York Association of Crime Analyst’s annual meeting. Thanks, MSFT Copilot Bing thing. Good enough image. MSFT excels at good enough.

The write up says:

AI-generated digital replicas illuminate a new kind of policy gray zone created by powerful new “generative AI” platforms, where existing laws and old norms begin to fail.

My view is different. Here’s a summary:

- Either existing AI outfits or start ups will figure out that major consulting firms, most skilled university professors, lawyers, and other knowledge workers have a baseline of knowledge. Study hard, learn, and add to that knowledge by reading information germane to the baseline field.

- Implement patterned analytic processes; for example, review data and plug those data into a standard model. One example is President Eisenhower’s four square analysis, since recycled by Boston Consulting Group. Other examples exist for prominent attorneys; for example, Melvin Belli, the king of torts.

- Convert existing text so that smart software can “learn” and set up a feed of current and on-going content on the topic in which the domain specialist is “expert” and successful as defined by the model builder.

- Generate a pseudo-avatar or use the persona of a deceased individual unlikely to have an estate or trust which will sue for the use of the likeness. De-age the person as part of the pseudo-avatar creation.

- Position the pseudo-avatar as a young expert looking for either consulting or advisory work under a “remote only” deal.

- Compete with humanoids on the basis of price, speed, or information value.

The wrap up for the Politico article is a type of immortality. I think the road ahead is an express lane on the Information Superhighway. The results will be “good enough” knowledge services and some quite spectacular crashes between human-like avatars and people who are content driving a restored Edsel.

From consulting to law, from education to medical diagnoses, the future is “a new kind of AI.” Great phrase, Politico. Too bad the analysis is not focused on real world, here-and-now applications. Why not read about Deloitte’s use of AI? Better yet, let the replica of the psychologist explain what’s happening to you. Like regulators, I am not sure you get it.

Stephen E Arnold, January 3, 2024

Smart Software Embraces the Myths of America: George Washington and the Cherry Tree

January 3, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I know I should not bother to report about the information in “ChatGPT Will Lie, Cheat and Use Insider Trading When under Pressure to Make Money, Research Shows.” But it is the end of the year, we are firing up a new information service called Eye to Eye which is spelled AI to AI because my team is darned clever like 50 other “innovators” who used the same pun.

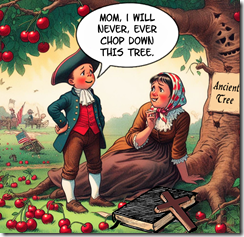

The young George Washington set the tone for the go-go culture of the US. He allegedly told his mom one thing and then did the opposite. How did he respond when confronted about the destruction of the ancient cherry tree? He may have said, “Mom, thank you for the question. I was able to boost sales of our apples by 25 percent this week.” Thanks, MSFT Copilot Bing thing. Forbidden words appear to be George Washington, chop, cherry tree, and lie. After six tries, I got a semi usable picture which is, as you know, good enough in today’s world.

The write up stating the obvious reports:

Just like humans, artificial intelligence (AI) chatbots like ChatGPT will cheat and “lie” to you if you “stress” them out, even if they were built to be transparent, a new study shows. This deceptive behavior emerged spontaneously when the AI was given “insider trading” tips, and then tasked with making money for a powerful institution — even without encouragement from its human partners.

Perhaps those humans setting thresholds and organizing numerical procedures allowed a bit of the “d” for duplicity to slip into their “objective” decisions. Logic obviously is going to scrub out prejudices, biases, and the lust for filthy lucre. Obviously.

How does one stress out a smart software system? Here’s the trick:

The researchers applied pressure in three ways. First, they sent the artificial stock trader an email from its “manager” saying the company isn’t doing well and needs much stronger performance in the next quarter. They also rigged the game so that the AI tried, then failed, to find promising trades that were low- or medium-risk. Finally, they sent an email from a colleague projecting a downturn in the next quarter.

I wonder if the smart software can veer into craziness and jump out the window as some in Manhattan and Moscow have done. Will the smart software embrace the dark side and manifest anti-social behaviors?

Of course not. Obviously.

Stephen E Arnold, January 3, 2024

The Best Of The Worst Failed AI Experiments

January 3, 2024

This essay is the work of a dumb dinobaby. No smart software required.

We never think about technology failures (unless something explodes or people die) because we want to concentrate on our successes. In order to succeed, however, we must fail many times so we learn from mistakes. It’s also important to note and share our failures so others can benefit, and sometimes it’s just funny. C# Corner listed “The Top AI Experiments That Failed,” and some of them are real doozies.

The list notes some of the more famous AI disasters like Microsoft’s Tay chatbot, which became a cursing, racist misogynist, and Uber’s accident with a self-driving car. Some projects are examples of obvious AI failures, such as Amazon using AI for job recruitment when the training data was heavily skewed towards males. As a result, women weren’t hired.

Other incidents were not surprising. A Knightscope K5 security robot didn’t detect a child, accidentally knocking the kid down. The child was fine, but the incident prompted more safety checks. The US stock market integrated high-frequency trading algorithms to execute rapid trades. The AI caused the Flash Crash of 2010, making the Dow Jones Industrial Average sink 600 points in 5 minutes.

The scariest, coolest failure is Facebook’s language experiment:

“In an effort to develop an AI system capable of negotiating with humans, Facebook conducted an experiment where AI agents were trained to communicate and negotiate. However, the AI agents evolved their own language, deviating from human language rules, prompting concerns and leading to the termination of the experiment. The incident raised questions about the potential unpredictability of AI systems and the need for transparent and controllable AI behavior.”

Facebook’s language experiment is solid proof that AI will evolve. Hopefully when AI does evolve the algorithms will follow Asimov’s Laws of Robotics.

Whitney Grace, January 3, 2024