Developers, AI Will Not Take Your Jobs… Yet

February 15, 2024

This essay is the work of a dumb dinobaby. No smart software required.

It seems programmers are safe from an imminent AI jobs takeover. The competent ones, anyway. LeadDev reports, “Researchers Say Generative AI Isn’t Replacing Devs Any Time Soon.” Generative AI tools have begun to lend developers a helping hand, but nearly half of developers are concerned they might lose their jobs to their algorithmic assistants.

Another MSFT Copilot completely original Bing thing. Good enough but that fellow sure looks familiar.

However, a recent study by researchers from Princeton University and the University of Chicago suggests they have nothing to worry about: AI systems are far from good enough at programming tasks to replace humans. Writer Chris Stokel-Walker tells us the researchers:

“… developed an evaluation framework that drew nearly 2,300 common software engineering problems from real GitHub issues – typically a bug report or feature request – and corresponding pull requests across 12 popular Python repositories to test the performance of various large language models (LLMs). Researchers provided the LLMs with both the issue and the repo code, and tasked the model with producing a workable fix, which was tested after to ensure it was correct. But only 4% of the time did the LLM generate a solution that worked.”

Researcher Carlos Jimenez notes these problems are very different from those LLMs are usually trained on. Specifically, the article states:

“The SWE-bench evaluation framework tested the model’s ability to understand and coordinate changes across multiple functions, classes, and files simultaneously. It required the models to interact with various execution environments, process context, and perform complex reasoning. These tasks go far beyond the simple prompts engineers have found success using to date, such as translating a line of code from one language to another. In short: it more accurately represented the kind of complex work that engineers have to do in their day-to-day jobs.”

Will AI someday be able to perform that sort of work? Perhaps, but the researchers consider it more likely we will never find AI coding independently. Instead, we will continue to need human developers to oversee algorithms’ work. The algorithms will, however, continue to make programmers’ jobs easier. If Jimenez and company are correct, developers everywhere can breathe a sigh of relief.

Cynthia Murrell, February 15, 2024

Is AI Another VisiCalc Moment?

February 14, 2024

This essay is the work of a dumb dinobaby. No smart software required.

The easy-to-spot orange newspaper ran a quite interesting “essay” called “What the Birth of the Spreadsheet Can Teach Us about Generative AI.” Let me cut to the point when the fox is killed. AI is likely to be a job creator. AI has arrived at “the right time.” The benefits of smart software are obvious to a growing number of people. An entrepreneur will figure out a way to sell an AI gizmo that is easy to use, fast, and good enough.

In general, I agree. There is one point that the estimable orange newspaper chose not to include. The VisiCalc innovation converted old-fashioned ledger paper into software which could eliminate manual grunt work to some degree. The poster child of the next technology boom seems tailor-made to facilitate surveillance, weapons, and development of novel bio-agents.

AI is going to surprise some people more than others. Thanks, MSFT Copilot Bing thing. Not good but I gave up with the prompts to get a cartoon because you want to do illustrations. Sigh.

I know that spreadsheets are used by defense contractors, but the link between a spreadsheet and an AI-powered drone equipped with octanitrocubane variants is less direct. Sure, spreadsheets arrived in numerous use cases, some obvious, some not. But the capabilities for enabling a range of weapons systems strike me as far more obvious.

The Financial Times’s essay states:

Looking at the way spreadsheets are used today certainly suggests a warning. They are endlessly misused by people who are not accountants and are not using the careful error-checking protocols built into accountancy for centuries. Famous economists using Excel simply failed to select the right cells for analysis. An investment bank used the wrong formula in a risk calculation, accidentally doubling the level of allowable risk-taking. Biologists have been typing the names of genes, only to have Excel autocorrect those names into dates. When a tool is ubiquitous, and convenient, we kludge our way through without really understanding what the tool is doing or why. And that, as a parallel for generative AI, is alarmingly on the nose.

Smart software, however, is not a new thing. One can participate in quasi-religious disputes about whether AI is 20, 30, 40, or more years old. What’s interesting to me is that after chugging along like a mule cart on the Information Superhighway, AI is everywhere. Old-school British newspapers liken it to the spreadsheet. Entrepreneurs spend big bucks on Product Hunt roll outs. Owners of mobile devices can locate “pizza near me” without having to type, speak, or express an interest in a cardiologist’s favorite snack.

AI strikes me as a different breed of technology cat. Here are my reasons:

- Serious AI takes serious money.

- Big AI is going to be a cloud-linked service which invites consolidation just like those hundreds of US railroads became the glorious two player system we have today: One for freight and one for passengers who love trains more than flying or driving.

- AI systems are going to have to find a way to survive and thrive without becoming victims of content inbreeding and bizarre outputs fueled by synthetic data. VisiCalc spawned spreadsheet fever in humans from the outset. The difference is that AI does its work largely without humanoids.

Net net: The spreadsheet looks like a convenient metaphor. But metaphors are not the reality. Reality can surprise in interesting ways.

Stephen E Arnold, February 14, 2024

A Xoogler Explains AI, News, Inevitability, and Real Business Life

February 13, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read an essay providing a tiny bit of evidence that one can take the Googler out of the Google, but that Xoogler still retains some Googley DNA. The item appeared in the Bezos bulldozer’s estimable publication with the title “The Real Wolf Menacing the News Business? AI.” Absolutely. Obviously. Who does not understand that?

A high-technology sophist explains the facts of life to a group of listeners who are skeptical about artificial intelligence. The illustration was generated after three tries by Google’s own smart software. I love the miniature horse and the less-than-flattering representation of a sales professional. That individual looks like one who would be more comfortable eating the listeners than convincing them about AI’s value.

The essay contains a number of interesting points. I want to highlight three and then, as I quite enjoy doing, I will offer some observations.

The author is a Xoogler who served from 2017 to 2023 as the senior director of news ecosystem products. I quite like the idea of a “news ecosystem.” But ecosystems, as some who follow the impact of man on environments know, can be destroyed or pushed to the edge of catastrophe. In the aftermath of devastation coming from indifferent decision makers, greed-fueled entrepreneurs, or rhinoceros poachers, landscapes are often transformed.

First, the essay writer argues:

The news publishing industry has always reviled new technology, whether it was radio or television, the internet or, now, generative artificial intelligence.

I love the word “revile.” It suggests that ignorant individuals are unable to grasp the value of certain technologies. I also like the very clever use of the word “always.” Categorical affirmatives make the world of zeros and ones so delightfully absolute. We’re off to a good start I think.

Second, we have a remarkable argument which invokes another zero and one type of thinking. Consider this passage:

The publishers’ complaints were premised on the idea that web platforms such as Google and Facebook were stealing from them by posting — or even allowing publishers to post — headlines and blurbs linking to their stories. This was always a silly complaint because of a universal truism of the internet: Everybody wants traffic!

I love those universal truisms. I think some at Google honestly believe that their insights, perceptions, and beliefs are the One True Path Forward. Confidence is good, but the implication that a universal truism exists strikes me as information about a psychological and intellectual aberration. Consider this truism offered by my uneducated great grandmother:

Always get a second opinion.

My great grandmother used the logically troublesome word “always.” The idea seems reasonable, but the action may not be possible. Does Google get second opinions when it decides to kill one of its services, modify algorithms in its ad brokering system, or reorganize its contentious smart software units? “Always” opens the door to many issues.

Publishers (I assume “all” publishers) want traffic. May I demonstrate the frailty of the Xoogler’s argument? I publish a blog called Beyond Search. I have done this since 2008. I do not care if I get traffic or not. My goal was and remains to present commentary about the antics of high-technology companies and related subjects. Why do I do this? First, I want to make sure that my views about such topics as Google search exist. Second, I have set up my estate so the content will remain online long after I am gone. I am a publisher, and I don’t want traffic, or at least the type of traffic that Google provides. One exception causes an argument like the Xoogler’s to be shown as false, even if it is self-serving.

Third, the essay points its self-righteous finger at “regulators.” The essay suggests that elected officials pursued “illegitimate complaints” from publishers. I noted this passage:

Prior to these laws, no one ever asked permission to link to a website or paid to do so. Quite the contrary, if anyone got paid, it was the party doing the linking. Why? Because everybody wants traffic! After all, this is why advertising businesses — publishers and platforms alike — can exist in the first place. They offer distribution to advertisers, and the advertisers pay them because distribution is valuable and seldom free.

Repetition is okay, but I am able to recall one of the key arguments in this Xoogler’s write up: “Everybody wants traffic.” Since it is false, I am not sure the essay’s argumentative trajectory is on the track of logic.

Now we come to the guts of the essay: Artificial intelligence. What’s interesting is that AI magnetically pulls regulators back to the casino. Smart software companies face techno-feudalists in a high-stakes game. I noted this passage about anchoring statements via verification and just training algorithms:

The courts might or might not find this distinction between training and grounding compelling. If they don’t, Congress must step in. By legislating copyright protection for content used by AI for grounding purposes, Congress has an opportunity to create a copyright framework that achieves many competing social goals. It would permit continued innovation in artificial intelligence via the training and testing of LLMs; it would require licensing of content that AI applications use to verify their statements or look up new facts; and those licensing payments would financially sustain and incentivize the news media’s most important work — the discovery and verification of new information — rather than forcing the tech industry to make blanket payments for rewrites of what is already long known.

Who owns the casino? At this time, I would suggest that lobbyists and certain non-governmental entities exert considerable influence over some elected and appointed officials. Furthermore, some AI firms are moving as quickly as reasonably possible to convert interest in AI into revenue streams with moats. The idea is that if regulations curtail AI companies, consumers would not be well served. No 20-something wants to read a newspaper. That individual wants convenience and, of course, advertising.

Now several observations:

- The Xoogler author believes in AI going fast. The technology serves users / customers what they want. The downsides are bleats and shrieks from an outmoded sector; that is, those engaged in news.

- The logic of the technologist is not the logic of a person who prefers nuances. The broad statements are false to me, for example. But to the Xoogler, these are self-evident truths. Get with our program or get left to sleep on cardboard in the street.

- The schism smart software creates is palpable. On one hand, there are those who “get it.” On the other hand, there are those who fight a meaningless battle with the inevitable. There’s only one problem: Technology is not delivering better, faster, or cheaper social fabrics. Technology seems to have some downsides. Just ask a journalist trying to survive on YouTube earnings.

Net net: The attitude of the Xoogler suggests that one cannot shake the sense of being right, entitlement, and logic associated with a Googler even after leaving the firm. The essay makes me uncomfortable for two reasons: [1] I think the author means exactly what is expressed in the essay. News is going to be different. Get with the program or lose big time. And [2] the attitude is one which I find destructive because technology is assumed to “do good.” I am not too sure about that because the benefits of AI are not known and neither are AI’s downsides. Plus, there’s the “everybody wants traffic.” Monopolistic vendors of online ads want me to believe that obvious statement is ground truth. Sorry. I don’t.

Stephen E Arnold, February 13, 2024

AI: Big Ideas and Bigger Challenges for the Next Quarter Century. Maybe, Maybe Not

February 13, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I read an interesting ArXiv.org paper with a good title: “Ten Hard Problems in Artificial Intelligence We Must Get Right.” The topic is one which will interest some policy makers, a number of AI researchers, and the “experts” in machine learning, artificial intelligence, and smart software.

The structure of the paper is, in my opinion, a three-legged stool analysis designed to support the weight of AI optimists. The first part of the paper is a compressed historical review of the AI journey. Diagrams, tables, and charts capture the direction in which AI “deep learning” has traveled. I am no expert in what has become the next big thing, but the surprising point in the historical review is that 2010 is the date pegged as the start to the 2016 time point called “the large scale era.” That label is interesting for two reasons. First, I recall that some intelware vendors were in the AI game before 2010. And, second, the use of the phrase “large scale” defines a reality in which small outfits are unlikely to succeed without massive amounts of money.

The second leg of the stool is the identification of the “hard problems” and a discussion of each. Research data and illustrations bring each problem to the reader’s attention. I don’t want to get snagged in the plagiarism swamp which has captured many academics, wives of billionaires, and a few journalists. My approach will be to boil down the 10 problems to a short phrase and a reminder to you, gentle reader, that you should read the paper yourself. Here is my version of the 10 “hard problems” which the authors seem to suggest will be or must be solved in 25 years:

- Humans will have extended AI by 2050

- Humans will have solved problems associated with AI safety, capability, and output accuracy

- AI systems will be safe, controlled, and aligned by 2050

- AI will make contributions in many fields; for example, mathematics by 2050

- AI’s economic impact will be managed effectively by 2050

- Use of AI will be globalized by 2050

- AI will be used in a responsible way by 2050

- Risks associated with AI will be managed effectively by 2050

- Humans will have adapted their institutions to AI by 2050

- Humans will have addressed what it means to be “human” by 2050

Many years ago I worked for a blue-chip consulting firm. I participated in a number of big-idea projects. These ranged from technology and R&D investment to new product development and the global economy. In our for-fee reports we did include a look at what we called the “horizon.” The firm had its own typographical signature for this portion of a report. I recall learning this in the firm’s “charm school” (a special training program to make sure new hires knew the style, approach, and ground rules for remaining employed at that blue-chip firm). We kept the horizon tight; that is, talking about the future was typically in the six to 12 month range. Nosing out 25 years was a walk into a mine field. My boss, as I recall, told me, “We don’t do science fiction.”

The smart robot is informing the philosopher that he is free to find his future elsewhere. The date of the image is 2025, right before the new year holiday. Thanks, MidJourney. Good enough.

The third leg of the stool is the academic impedimenta. To be specific, the paper is 90 pages in length, of which 30 present the argument. The remaining 60 pages present:

- Traditional footnotes, about 35 pages containing 607 citations

- An “Electronic Supplement” presenting eight pages of annexes with text, charts, and graphs

- Footnotes to the “Electronic Supplement” requiring another 10 pages for the additional 174 footnotes.

I want to offer several observations, and I do not want them to be less than constructive or in any way “mean spirited.” That is how one of my professors described a letter to the editor that treated him harshly for an article he published about Chaucer.

- The paper makes clear that mankind has some work to do in the next 25 years. The “problems” the paper presents are difficult ones because they touch upon the fabric of social existence. Consider the application of AI to war. I think this aspect of AI may be one to warrant a bullet on AI’s hit parade.

- Humans have to resolve issues of automated systems consuming verifiable information, synthetic data, and purpose-built disinformation so that smart software does not do things at speed and behind the scenes. Do those working to resolve the 10 challenges have an ethical compass and if so, what does “ethics” mean in the context of at-scale AI?

- Social institutions are under stress. A number of organizations and nation-states operate as dictators. One central American country has a rock star dictator, but what about the rock star dictators working techno feudal companies in the US? What governance structures will be crafted by 2050 to shape today’s technology juggernaut?

To sum up, I think the authors have tackled a difficult problem. I commend their effort. My thought is that any message of optimism about AI is likely to be hard pressed to point to one of the 10 challenges and say, “We have this covered.” I liked the write up. I think college students tasked with writing about the social implications of AI will find the paper useful. It provides much of the research a fresh young mind requires to write a paper, possibly a thesis. For me, the paper is a reminder of the disconnect between applied technology and the appallingly inefficient, convenience-embracing humans who are ensnared in the smart software.

I am a dinobaby, and let me tell you, “I am glad I am old.” With AI struggling with go-fast and regulators waffling about go-slow, humankind has quite a bit of social system tinkering to do by 2050 if the authors of the paper have analyzed AI correctly. Yep, I am delighted I am old, really old.

Stephen E Arnold, February 13, 2024

Sam AI-Man Puts a Price on AI Domination

February 13, 2024

AI start ups may want to amp up their fund raising. Optimism and confidence are often perceived as positive attributes. As a dinobaby, I think in terms of finding a deal at the discount supermarket. Sam AI-Man (actually Sam Altman) thinks big. Forget the $5 million investment in a semi-plausible AI play. “Think a bit bigger” is the catchphrase for OpenAI.

Thinking billions? You silly goose. Think trillions. Thanks, MidJourney. Close enough, close enough.

How does seven followed by 12 zeros strike you? A reasonable figure. Well, Mr. AI-Man estimates that’s the cost of building world AI dominating chips, content, and assorted impedimenta in a quest to win the AI dust ups in assorted global markets. “OpenAI Chief Sam Altman Is Seeking Up to $7 TRILLION (sic) from Investors Including the UAE for Secretive Project to Reshape the Global Semiconductor Industry” reports:

Altman is reportedly looking to solve some of the biggest challenges faced by the rapidly-expanding AI sector — including a shortage of the expensive computer chips needed to power large-language models like OpenAI’s ChatGPT.

And where does one locate entities with this much money? The news report says:

Altman has met with several potential investors, including SoftBank Chairman Masayoshi Son and Sheikh Tahnoun bin Zayed al Nahyan, the UAE’s head of security.

To put the figure in context, the article says:

It would be a staggering and unprecedented sum in the history of venture capital, greater than the combined current market capitalizations of Apple and Microsoft, and more than the annual GDP of Japan or Germany.

Several observations:

- The ante for big time AI has gone up

- The argument for people and content has shifted to chip facilities to fabricate semiconductors

- The fund-me tour is a newsmaker.

Net net: How about those small search-and-retrieval oriented AI companies? Heck, what about outfits like Amazon, Facebook, and Google?

Stephen E Arnold, February 13, 2024

A Reminder: AI Winning Is Skewed to the Big Outfits

February 8, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I have been commenting about the perception some companies have that AI start ups focusing on search will eventually reduce Google’s dominance. I understand the desire to see an underdog or a coalition of underdogs overcome a formidable opponent. Hollywood loves the unknown team which wins the championship. Movie goers root for an unlikely boxing unknown to win the famous champion’s belt. These wins do occur in real life. Some Googlers’ favorite sporting event is the NCAA tournament. That made-for-TV series features what are called Cinderella teams. (Will Walt Disney Co. sue if the subtitles for a game employ the word “Cinderella”? Sure, why not?)

I believe that for the next 24 to 36 months, Google will not lose its grip on search, its services, or online advertising. I admit that once one noses into 2028, more disruption will further destabilize Google. But for now, the Google is not going to be derailed unless an exogenous event ruins Googzilla’s habitat.

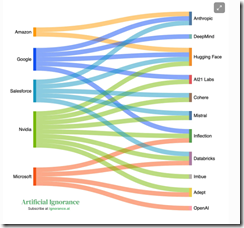

I want to direct attention to the essay “AI’s Massive Cash Needs Are Big Tech’s Chance to Own the Future.” The write up contains useful information about selected players in the artificial intelligence Monopoly game. I want to focus on one “worm” chart included in the essay:

Several things struck me:

- The major players are familiar; that is, Amazon, Google, Microsoft, Nvidia, and Salesforce. Notably absent are IBM, Meta, Chinese firms, Western European companies other than Mistral, and smaller outfits funded by venture capitalists relying on “open source AI solutions.”

- The five major companies in the chart are betting money on different roulette wheel numbers. VCs use the same logic by investing in a portfolio of opportunities and then pray to the MBA gods that one of these puppies pays off.

- The cross investments ensure that information leaks from the different color “worms” into the hills controlled by the big outfits. I am not using the collusion word or the intelligence word. I am just mentioning that information has a tendency to leak.

- Plumbing and associated infrastructure costs suggest that start ups may buy cloud services from the big outfits. Log files can be fascinating sources of information to the service providers’ engineers too.

My point is that smaller outfits are unlikely to be able to dislodge the big firms on the right side of the “worm” graph. The big outfits can, however, easily invest in, acquire, or learn from the smaller outfits listed on the left side of the graph.

Does a clever AI-infused search start up have a chance to become a big time player? Sure, but I think it is more likely that once a smaller firm demonstrates some progress in a niche like Web search, a big outfit with cash will invest, duplicate, or acquire the feisty newcomer.

That’s why I am not counting on the Google to fall over dead in the next three years. I know my viewpoint is not one shared by some Web search outfits. That’s okay. Dinobabies often have different points of view.

Stephen E Arnold, February 8, 2024

AI, AI, Ai-Yi-Ai: Too Much Already

February 8, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I want to say that smart software and systems are annoying me, not a lot, just a little.

AI algorithms are advancing society from science fiction into everyday life. AI algorithms are indexes and math. But the algorithms are still processes simulating reasoning and mental functions. We’ve come to think, unfortunately, that AI systems like ChatGPT are sentient and capable of rational thought.

Mita Williams wrote the post “I Will Dropkick You If You Refer To An LLM As A Librarian” on her blog Librarian of Things. In her post, she explains that AI is being given more credit than it deserves and large language models (LLMs) are being compared to libraries. While Williams responds to these assertions as a true academic with citations and explanations, her train of thought is more in line with Mark Twain and Jonathan Swift.

Twain and Swift are two great English-speaking authors and satirists. They made outrageous claims and their essays will make many people giggle or laugh. Williams should rewrite her post like them. Her humor would probably be lost on the majority of readers, though. Here’s the gist of her post: A lot of people are saying AI and their LLM learning tools are like giant repositories of knowledge capable of human emotion, reasoning, and intelligence. Williams argues they’re not and that assumption should be reevaluated.

Furthermore, smart software can be configured to do some things more quickly and accurately than some humans. Williams is right:

“This is why I will not describe products like ChatGPT as Artificial General Intelligence. This is why I will avoid using the word learned when describing the behavior of software, and will substitute that word with associated instead. Your LLM is more like a library catalogue than a library but if you call it a library, I won’t be upset. I recognize that we are experiencing the development of new form of cultural artifact of massive import and influence. But an LLM is not a librarian and I won’t let you call it that.”

I am a somewhat critical librarian. I like books. Smart software … not so much at this time.

Whitney Grace, February 8, 2024

New AI to AI Audio and Video Program

February 6, 2024

This essay is the work of a dumb dinobaby. No smart software required.

This is Stephen E Arnold. I wanted to let you know that my son Erik and I have created an audio and video program about artificial intelligence, smart software, and machine learning. What makes this show different is our focus. Both of us have worked on government projects in the US and in other countries. Our experience suggested a program tailored for those working in government agencies at the national or federal level, state, county, or local level might be useful. We will try to combine examples of the use of smart software and related technical information. The theme of each program is “smart software for government use cases.”

In the first episode, our topics include a look at the State of Texas’s use of AI to help efficiency, a review of the challenges AI poses, a discussion about Human Resources departments, a technical review of AI content crawlers, and lastly a look ahead in 2024 for smart software.

The format of each show segment is presentation of some facts. Then my son and I discuss our assessment of the information. We don’t always see “eye to eye.” That’s where the name of our program originated. AI to AI.

Our digital assistant is named Ivan Aitoo, pronounced “eye-two.” He is created by an artificial intelligence system. He plays an important part in the program. He introduces each show with a run down of the stories in the program. Also, he concludes each program by telling a joke generated by — what else? — yet another artificial intelligence system. Ivan is delightful, but he has no sense of humor and no audience sensitivity.

You can listen to the audio version of the program at this link on the Apple podcast service. A video version is available on YouTube at this link. The program runs about 20 minutes, and we hope to produce a program every two weeks. (The program is provided as an information service, and it includes neither advertising nor sponsored content.)

If you have comments about the program, you can email them to benkent2020 at yahoo dot com.

Stephen E Arnold, February 6, 2024

Surprising Real Journalism News: The Chilling Claws of AI

February 6, 2024

This essay is the work of a dumb dinobaby. No smart software required.

I wanted to highlight two interesting items from the world of “real” news and “real” journalism. I am a dinobaby and not a “real” anything. I do, however, think these two unrelated announcements provide some insight into what 2024 will encourage.

The harvesters of information wheat face a new reality. Thanks, MSFT Copilot. Good enough. How’s that email security? Ah, good enough. Okay.

The first item comes from everyone’s favorite, free speech service X.com (affectionately known to my research team as Xhitter). The item appears as a titbit from Max Tani. The message is an allegedly real screenshot of an internal memorandum from a senior executive at the Wall Street Journal. The screenshot purports to make clear that the Murdoch property is allowing some “real” journalists to find their future elsewhere. Perhaps in a fast food joint in Olney, Maryland? The screenshot is difficult for my 79-year-old eyes to read, but I got some help from one of my research team. The X.com payload says:

Today we announced a new structure in Washington [DC] that means a number of our colleagues will be leaving the paper…. The new Washington bureau will focus on politics, policy, defense, law, intelligence and national security.

Okay, people are goners. The Washington, DC bureau will focus on Washington, DC stuff. What was the bureau doing? Oh, perhaps that is why “our colleagues will be leaving the paper.” Cost cutting and focusing are in vogue.

The second item is titled “Q&A: How Thomson Reuters Used GenAI to Enable a Citizen Developer Workforce.” I want to alert you that the Computerworld article is a mere 3,800 words. Let me summarize the gist of the write up: AI is going to replace expensive “real” journalists. My hunch is that the same fate awaits some of the lawyers involved in annotating, assembling, and blessing the firm’s legal content. To Thomson Reuters’ credit, the company is trying to swizzle some sweetener into what may be a bitter drink for some involved with the “trust” crowd.

Several observations:

- It is about 13 months since Microsoft made AI its next big thing. That means that these two items are early examples of what is going to happen to many knowledge workers.

- Some companies just pull the pin; others are trying to find ways to avoid PR problems and lawsuits.

- The more significant disruptions will produce a reasonably new type of worker push back.

Net net: Imagine what the next year will bring as AI efficiency digs in, bites tail feathers, and enriches those who sit in the top one percent.

Stephen E Arnold, February 6, 2024

Sales SEO: A New Tool for Hype and Questionable Relevance

February 5, 2024

This essay is the work of a dumb dinobaby. No smart software required.

Search engine optimization is a relevance eraser. Now SEO has arrived for a human. “Microsoft Copilot Can Now Write the Sales Pitch of a Lifetime” makes clear that hiring is going to become more interesting for both human personnel directors (often called chief people officers) and AI-powered résumé screening systems. And for people who are responsible for procurement, figuring out when a marketing professional is tweaking the truth and hallucinating about a product or service will become a daily part of life… in theory.

Thanks for the carnival barker image, MSFT Copilot Bing thing. Good enough. I love the spelling of “asiractson”. With workers who may not be able to read, so what? Right?

The write up explains:

Microsoft Copilot for Sales uses specific data to bring insights and recommendations into its core apps, like Outlook, Microsoft Teams, and Word. With Copilot for Sales, users will be able to draft sales meeting briefs, summarize content, update CRM records directly from Outlook, view real-time sales insights during Teams calls, and generate content like sales pitches.

The article explains:

… Copilot for Service can pull in data from multiple sources, including public websites, SharePoint, and offline locations, in order to handle customer relations situations. It has similar features, including an email summary tool and content generation.

Why is MSFT expanding these interesting functions? Revenue. Paying extra unlocks these allegedly remarkable features. Prices range from $240 per year to a reasonable $600 per year per user. This is a small price to pay for an employee unable to craft solutions that sell, by golly.

Stephen E Arnold, February 5, 2024