23andMe: Those Users and Their Passwords!

December 5, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Silicon Valley and health are a match fabricated in heaven. Not long ago, I learned about the estimable management of Theranos. Now I find out that “23andMe confirms hackers stole ancestry data on 6.9 million users.” If one follows the logic of some Silicon Valley outfits, the data loss is the fault of the users.

“We have the capability to provide the health data and bioinformation from our secure facility. We have designed our approach to emulate the protocols implemented by Jack Benny and his vault in his home in Beverly Hills,” says the enthusiastic marketing professional from a Silicon Valley success story. Thanks, MSFT Copilot. Not exactly Jack Benny, Ed, and the foghorn, but I have learned to live with “good enough.”

According to the peripatetic Lorenzo Franceschi-Bicchierai:

In disclosing the incident in October, 23andMe said the data breach was caused by customers reusing passwords, which allowed hackers to brute-force the victims’ accounts by using publicly known passwords released in other companies’ data breaches.

Users!

What’s more interesting is that 23andMe provided estimates of the number of customers (users) whose data somehow magically flowed from the firm into the hands of bad actors. In fact, the numbers, when added up, totaled almost seven million users, not the original estimate of 14,000 23andMe customers.

I find the leak estimate inflation interesting for three reasons:

- Smart people in Silicon Valley appear to struggle with simple concepts like adding and subtracting numbers. This gap in one’s education becomes notable when the discrepancy is off by millions. I think “close enough for horse shoes” is a concept which is wearing out my patience. The difference between 14,000 and almost seven million is not horse shoe scoring.

- The concept of “security” continues to suffer some setbacks. “Security,” one may ask?

- The intentional dribbling of information reflects another facet of what I call high school science club management methods. The logic in the case of 23andMe in my opinion is, “Maybe no one will notice?”

Net net: Time for some regulation, perhaps? Oh, right, it’s the users’ responsibility.

Stephen E Arnold, December 5, 2023

AI Adolescence Ascendance: AI-iiiiii!

December 1, 2023

This essay is the work of a dumb dinobaby. No smart software required.

The monkey business of smart software has revealed its inner core. The cute high school essays and the comments about how to do search engine optimization are based on the fundamental elements of money, power, and what I call ego-tanium. When these fundamental elements go critical, exciting things happen. I know this assertion is correct because I read “The AI Doomers Have Lost This Battle”, an essay which appears in the weird orange newspaper The Financial Times.

The British bastion of practical financial information says:

It would be easy to say that this chaos showed that both OpenAI’s board and its curious subdivided non-profit and for-profit structure were not fit for purpose. One could also suggest that the external board members did not have the appropriate background or experience to oversee a $90bn company that has been setting the agenda for a hugely important technology breakthrough.

In my lingo, the orange newspaper is pointing out that a high school science club management style is like a burning electric vehicle. Once ignited, the message is, “Stand back, folks. Let it burn.”

“Isn’t this great?” asks the driver. The passenger, a former Doomsayer, replies, “AIiiiiiiiiii.” Thanks MidJourney, another good enough illustration which I am supposed to be able to determine contains copyrighted material. Exactly how? may I ask. Oh, you don’t know.

The FT picks up a big-picture idea; that is, smart software can become a problem for humanity. That’s interesting because the book “Weapons of Math Destruction” did a good job of explaining why algorithms can go off the rails. But the FT’s essay embraces the idea of software as the Terminator with the enthusiasm of the crazy old-time guy who shouted “Eureka.”

I note this passage:

Unfortunately for the “doomers”, the events of the last week have sped everything up. One of the now resigned board members was quoted as saying that shutting down OpenAI would be consistent with the mission (better safe than sorry). But the hundreds of companies that were building on OpenAI’s application programming interfaces are scrambling for alternatives, both from its commercial competitors and from the growing wave of open-source projects that aren’t controlled by anyone. AI will now move faster and be more dispersed and less controlled. Failed coups often accelerate the thing that they were trying to prevent.

Okay, the yip yap about slowing down smart software is officially wrong. I am not sure about the government committees and their white papers about artificial intelligence. Perhaps the documents can be printed out and used to heat the camp sites of knowledge workers who find themselves out of work.

I find it amusing that some of the governments worried about smart software are involved in autonomous weapons. The idea that a drone with access to a facial recognition component can pick out a target and then explode over the person’s head is an interesting one.

Is there a connection between the high school antics of OpenAI, the hand-wringing about smart software, and the diffusion of decider systems? Yes, and the relationship is one of those hockey stick curves so loved by MBAs from prestigious US universities. (Non reproducibility and a fondness for Jeffrey Epstein-type donors is normative behavior.)

Those who want to cash in on the next Big Thing are officially in the 2023 equivalent of the California gold rush. Unlike the FT, I had no doubt about the ascendance of the go-fast approach to technological innovation. Technologies, even lousy ones, are like gerbils. Start with two or three and pretty soon there are lots of gerbils.

Will the AI gerbils and the progeny be good or bad? Because they are based on the essential elements of life (money, power, and ego-tanium), the outlook is … exciting. I am glad I am a dinobaby. Too bad about the Doomers, who are regrouping to try and build a shield around the most powerful elements now emitting excited particles. The glint in the eyes of Microsoft executives and some venture firms is the trace of high-energy AI emissions in the innovators’ aqueous humor.

Stephen E Arnold, December 1, 2023

Google Maps: Rapid Progress on Un-Usability

November 30, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read a Xhitter.com post about Google Maps. Those who have either heard me talk about the “new” Google Maps or who have read some of my blog posts on the subject know my view. The current Google Maps is useless for my needs. Last year, as one of my team were driving to a Federal secure facility, I bought an overpriced paper map at one of the truck stops. Why? I had no idea how to interact with the map in a meaningful way. My recollection was that I could coax Google Maps and Waze to be semi-helpful. Now the Google Maps’s developers have become tangled in a very large thorn bush. The team discusses how large the thorn bush is, how sharp the thorns are, and how such a large thorn bush could thrive in the Googley hot house.

This dinobaby expresses some consternation at [a] not knowing where to look, [b] how to show the route, and [c] not cause a motor vehicle accident. Thanks, MSFT Copilot. Good enough I think.

The result is enhancements to Google Maps which are the digital equivalent of skin cancer. The disgusting result is a vehicle for advertising and engagement that no one can use without head scratching moments. Am I alone in my complaint? Nope, the aforementioned Xhitter.com post aligns quite well with my perception. The author is a person who once designed a more usable version of Google Maps.

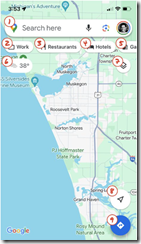

Her Xhitter.com post highlights the digital skin cancer the team of Googley wizards has concocted. Here’s a screen capture of her annotated, life-threatening disfigurement:

She writes:

The map should be sacred real estate. Only things that are highly useful to many people should obscure it. There should be a very limited number of features that can cover the map view. And there are multiple ways to add new features without overlaying them directly on the map.

Sounds good. But Xooglers and other outsiders are not likely to get much traction from the Map team. Everyone is working hard at landing in the hot AI area or some other discipline which will deliver a bonus and a promotion. Maps? Nope.

The former Google Maps designer points out:

In 2007, I was 1 of 2 designers on Google Maps. At that time, Maps had already become a cluttered mess. We were wedging new features into any space we could find in the UI. The user experience was suffering and the product was growing increasingly complicated. We had to rethink the app to be simple and scale for the future.

Yep, Google Maps, a case study for brilliant people who have lost the atlas to reality. And “sacred” at Google? Ad revenue, not making dear old grandma safer when she drives. (Tesla, Cruise, where are those smart, self-driving cars? Ah, I forgot. They are with Waymo, keeping their profile low.)

Stephen E Arnold, November 30, 2023

Amazon Customer Service: Let Many Flowers Bloom and Die on the Vine

November 29, 2023

This essay is the work of a dumb dinobaby. No smart software required.

Amazon has been outputting artificial intelligence “assertions” at a furious pace. What’s clear is that Amazon is “into” the volume and variety business in my opinion. The logic of offering multiple “works in progress” and getting them to work reasonably well is going to have three characteristics: The first is that deploying and operating different smart software systems is going to be expensive. The second is that tuning and maintaining high levels of accuracy in the outputs will be expensive. The third is that supporting the users, partners, customers, and integrators is going to be expensive. If we use a bit of freshman in high school algebra, the common factor is expensive. Amazon’s remarkable assertion that no one wants to bet a business on just one model strikes me as a bit out of step with the world in which bean counters scuttle and scurry in green eyeshades and sleeve protectors. (See. I am a dinobaby. Sleeve protectors. I bet none of the OpenAI type outfits have accountants who use these fashion accessories!)

Let’s focus on just one facet of the expensive burdens I touched upon above: customer service. Navigate to the remarkable and stunningly uncritical write up called “How to Reach Amazon Customer Service: A Complete Guide.” The write up is an earthworm list of the “options” Amazon provides. As Amazon was announcing its new new big big things, I was trying to figure out why an order for an $18 product was rejected. The item in question was one part of a multipart order. The other, more costly items were approved and billed to my Amazon credit card.

Thanks MSFT Copilot. You do a nice broken bulldozer or at least a good enough one.

But the dog treats?

I systematically worked through the Amazon customer service options. As a Prime customer, I assumed one of them would work. Here’s my report card:

- Amazon’s automated help. A loop. See Help pages which suggested I navigate to the customer service page. Cute. A first year comp sci student’s programming error. A loop right out of the box. Nifty.

- The customer service page. Well, that page sent me to Help and Help sent me to the automation loop. Cool. Zero for two.

- Access through the Amazon app. Nope. I don’t install “apps” on my computing devices unless I have zero choice. (Yes, I am thinking about Apple and Google.) Too bad Amazon, I reject your app the way I reject QR codes used by restaurants. (Do these hash slingers know that QR codes are a fave of some bad actors?)

- Live chat with Amazon customer service was not live. It was a bot. The suggestion? Get back in the loop. Maybe the chat staff was at the Amazon AI announcement or just severely overstaffed or simply did not care. Another loser.

- Request a call from Amazon customer service. Yeah, I got to that after I called Amazon customer service. Another loser.

I repeated the “call Amazon customer service” twice and I finally worked through the automated system and got a person who barely spoke English. I explained the problem. One product rejected because my Amazon credit card was rejected. I learned that this particular customer service expert did not understand how that could have happened. Yeah, great work.

How did I resolve the rejected credit card? I called the Chase Bank customer service number. I told a person my card was manipulated and I suspected fraud. I was escalated to someone who understood the word “fraud.” After about five minutes of “Will you please hold,” the Chase person told me, “The problem is at Amazon, not your card and not Chase.”

What was the fix? Chase said, “Cancel the order.” I did and went to another vendor.

Now what does that experience suggest about Amazon’s ability (willingness) to provide effective, efficient customer support to users of its purported multiple large language models, AI systems, and assorted marketing baloney output during Amazon’s “we are into AI” week?

My answer? The Bezos bulldozer has an engine belching black smoke, making a lot of noise because the muffler has a hole in it, and the thumpity thump of the engine reveals that something is out of tune.

Yeah, AI and customer support. Just one of the “expensive” things Amazon may not be able to deliver. The troubling thing is that Amazon’s AI may have been powering the multiple customer support systems. Yikes.

Stephen E Arnold, November 29, 2023

Is YouTube Marching Toward Its Waterloo?

November 28, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I have limited knowledge of the craft of warfare. I do have a hazy recollection that Napoleon found himself at the wrong end of a pointy stick at the Battle of Waterloo. I do recall that Napoleon lost the battle and experienced the domino effect which knocked him down a notch or two. He ended up on the island of Saint Helena in the south Atlantic Ocean with Africa a short 1,200 miles to the east. But Nappy had no mobile phone, no yacht purchased with laundered money, and no Internet. Losing has its downsides. Bummer. No empire.

I thought about Napoleon when I read “YouTube’s Ad Blocker Crackdown Heats Up.” The question I posed to myself was, “Is the YouTube push for subscription revenue and unfettered YouTube user data collection a road to Google’s Battle of Waterloo?”

Thanks, MSFT Copilot. You have a knack for capturing the essence of a loser. I love good enough illustrations too.

The cited article from Channel News reports:

YouTube is taking a new approach to its crackdown on ad-blockers by delaying the start of videos for users attempting to avoid ads. There were also complaints by various X (formerly Twitter) users who said that YouTube would not even let a video play until the ad blocker was disabled or the user purchased a YouTube Premium subscription. Instead of an ad, some sources using Firefox and Edge browsers have reported waiting around five seconds before the video launches the content. According to users, the Chrome browser, which the streaming giant shares an owner with, remains unaffected.

If the information is accurate, Google is taking steps to damage what the firm has called the “user experience.” The idea is that users who want to watch “free” videos have a choice:

- Put up with delays, pop ups, and mindless appeals to pay Google to show videos from people who may or may not be compensated by the Google

- Just fork over a credit card and let Google collect about $150 per year until the rates go up. (The cable TV and mobile phone billing model is alive and well in the Google ecosystem.)

- Experiment with advertisement blocking technology and accept the risk of being banned from Google services

- Learn to love TikTok, Instagram, DailyMotion, and Bitchute, among other options available to a penny-conscious consumer of user-produced content

- Quit YouTube and new-form video. Buy a book.

What happened to Napoleon before the really great decision to fight Wellington in a lovely part of Belgium? Waterloo is about nine miles south of the wonderful, diverse city of Brussels. Napoleon did not have a drone to send images of the rolling farmland, where the “enemies” were located, or the availability of something behind which to hide. Despite Nappy’s fine experience in his march to Russia, he muddled forward. Despite allegedly having said, “The right information is nine-tenths of every battle,” the Emperor entered battle, suffered 40,000 casualties, and ended up in what is today a bit of a tourist hot spot. In 1816, it was somewhat less enticing. Ordering troops to charge uphill against a septuagenarian’s forces was arguably as stupid as walking to Russia as snowflakes began to fall.

How does this Waterloo relate to the YouTube fight now underway? I see several parallels:

- Google’s senior managers, informed with the management lore of 25 years of unfettered operation, know that users can be knocked along a path of the firm’s choice. Think sheep. But sheep can be disorderly. One must watch sheep.

- The need to stem the rupturing of cash required to operate a massive “free” video service is another one of those Code Yellow and Code Red events for the company. With search known to be under threat from Sam AI-Man and the specters of “findability” AI apps, the loss of traffic could be catastrophic. Despite Google’s financial fancy dancing, costs are a bit of a challenge: New hardware costs money, options like making one’s own chips cost money, allegedly smart people cost money, marketing costs money, legal fees cost money, and maintaining the once-free SEO ad sales force costs money. Got the message? Expenses are a problem for the Google in my opinion.

- The threat of either TikTok or Instagram going long form remains. If these two outfits don’t make a move on YouTube, there will be some innovator who will. The price of “move fast and break things” means that the Google can be broken by an AI surfer. My team’s analysis suggests it is more brittle today than at any previous point in its history. The legal dust up with Yahoo about the Overture / GoTo issue was trivial compared to the cost control challenge and the AI threat. That’s a one-two for the Google management wizards to solve. Making sense of the Critique of Pure Reason is a much easier task in my view.

The cited article includes a statement which is likely to make some YouTube users uncomfortable. Here’s the statement:

Like other streaming giants, YouTube is raising its rates with the Premium price going up to $13.99 in the U.S., but users may have to shell out the money, and even if they do, they may not be completely free of ads.

What does this mean? My interpretation is that [a] even if a user pays, that user may see ads; that is, paying does not eliminate ads in perpetuity; and [b] the fee is not permanent; that is, Google can increase it at any time.

Several observations:

- Google faces high-cost issues from different points of the business compass: Legal in the US and EU, commercial from known competitors like TikTok and Instagram, and psychological from innovators who find a way to use smart software to deliver a more compelling video experience for today’s users. These costs are not measured solely in financial terms. There is also the mental stress of wondering what will percolate from the seething mass of AI entrepreneurs. Nappy did not sleep too well after Waterloo. Too much Beef Wellington, perhaps?

- Google’s management methods have proven appropriate for generating revenue from an ad model in which Google controls the billing touch points. When those management techniques are applied to non-controllable functions, they fail. The hallmark of the management misstep is the handling of Dr. Timnit Gebru, a squeaky wheel in the Google AI content marketing machine. There is nothing quite like stifling a dissenting voice, the squawk of a parrot, and a don’t-let-the-door-hit-you-when-you-leave moment.

- The post-Covid, continuous warfare, and unsteady economic environment is causing the social fabric to fray and in some cases tear. This means that users may become contentious and become receptive to a spontaneous flash mob action toward Google and YouTube. Handling user revolt at scale is not something in which Google has demonstrated a core competence.

Net net: I will get my microwave popcorn and watch this real-time Google Boogaloo unfold. Will a recipe become famous? How about Grilled Google en Croute?

Stephen E Arnold, November 28, 2023

A Former Yahooligan and Xoogler Offers Management Advice: Believe It or Not!

November 22, 2023

This essay is the work of a dumb dinobaby. No smart software required.

I read a remarkable interview / essay / news story called “Former Yahoo CEO Marissa Mayer Delivers Sharp-Elbowed Rebuke of OpenAI’s Broken Board.” Marissa Mayer was a Googler. She then became the Top Dog at Yahoo. Highlights of her tenure at Yahoo, according to Inc.com, included:

- Fostering a “superstar status” for herself

- Pointing a finger in a chastising way at remote workers

- Trying to obfuscate Yahooligan layoffs

- Making slow job cuts

- Lack of strategic focus (maybe Tumblr, Yahoo’s mobile strategy, the search service, perhaps?)

- Tactical missteps in diversifying Yahoo’s business (the Google disease in my opinion)

- Setting timetables and then ignoring, missing, or changing them

- Weird PR messages

- Using fear (and maybe uncertainty and doubt) as management methods.

The senior executives of a high technology company listen to a self-anointed management guru. One of the bosses allegedly said, “I thought Bain and McKinsey peddled a truckload of baloney. We have the entire factory in front of us.” Thanks, MSFT Copilot. Is Sam the AI-Man on duty?

So what’s this exemplary manager have to say? Let’s go to the original story:

“OpenAI investors (like @Microsoft) need to step up and demand that the governance weaknesses at @OpenAI be fixed,” Mayer wrote Sunday on X, formerly known as Twitter.

Was Microsoft asleep at the switch or simply operating within a Cloud of Unknowing? Fast-talking Satya Nadella was busy trying to make me think he was operating in a normal manner. Had he known something was afoot, is he equipped to deal with burning effigies as a business practice?

Ms. Mayer pointed out:

“The fact that Ilya now regrets just shows how broken and under advised they are/were,” Mayer wrote on social media. “They call them board deliberations because you are supposed to be deliberate.”

Brilliant! Was that deliberative process used to justify the purchase of Tumblr?

The Business Insider write up revealed an interesting nugget:

The Information reported that the former Yahoo CEO’s name had been tossed around by “people close to OpenAI” as a potential addition to the board…

Okay, a Xoogler and a Yahooligan in one package.

Stephen E Arnold, November 22, 2023

Turmoil in AI Land: Uncertainty R Us

November 21, 2023

This essay is the work of a dumb dinobaby. No smart software required.

It is now Tuesday, November 21, 2023. I learned this morning on the “Pivot” podcast that one of the co-hosts is the “best technology reporter.” I read a number of opinions about the high school science club approach to managing a multi-billion dollar outfit allegedly valued at lots of money last Friday, November 17, 2023, and today valued at much less money. I read some of the numerous “real news” stories on Hacker News, Techmeme, and Xitter, and learned:

- Gee, it was a mistake

- Sam AI-Man is working at Microsoft

- Sam AI-Man is not working at Microsoft

- Microsoft is ecstatic that opportunities are available

- Ilya Sutskever will become a blue-chip consultant specializing in Board-level governance

- OpenAI is open because it is business as usual in Sillycon Valley.

The AI ringmaster has issued an instruction or prompt to the smart software. The smart software does not obey. What’s happening is that not only are inputs not converted to the desired actions, the entire circus audience is not sure which is more entertaining, the software or the manager. Thanks, Microsoft Copilot. I gave up and used one of the good enough images.

“Firing Sam Altman Hasn’t Worked Out for OpenAI’s Board” reports:

Whether Altman ultimately stays at Microsoft or comes back to OpenAI, he’ll be more powerful than he was last week. And if he wants to rapidly develop and commercialize powerful AI models, nobody will be in a position to stop him. Remarkably, one of the 500 employees who signed Monday’s OpenAI employee letter is Ilya Sutskever, who has had a profound change of heart since he voted to oust Altman on Friday.

Okay, maybe Ilya Sutskever will not become a blue chip consultant. That’s okay, just mercurial.

Several observations:

- Smart software causes bright people to behave in sophomoric ways. I have argued for many years that many of the techno-feudalistic outfits are more like high school science clubs than run-of-the-mill high school sophomores. Intelligence coupled with a poorly developed judgment module causes some spectacular management actions.

- Poor Google must be uncomfortable as it struggles on the tenterhooks which have snagged its corporate body. Is Microsoft going to be the Big Dog in smart software? Is Sam AI-Man going to do something new to make life for Googzilla more uncomfortable than it already is? Is Google now faced with a crisis about which its flocks of legal eagles, its massive content marketing machine, and its tools for shaping content cannot do much to seize the narrative?

- Developers who have embraced the idea of OpenAI as the best partner in the world have to consider that their efforts may be for naught. Where do these wizards turn? To Microsoft and the Softie ethos? To the Zuck and his approach? To Google and its reputation for terminating services like snipers? To the French outfit with offices near some very good restaurants? (That doesn’t sound half bad, does it?)

I am not sure if Act I has ended or if the entire play has ended. After a short intermission, there will be more of something.

Stephen E Arnold, November 21, 2023

OpenAI: What about Uncertainty and Google DeepMind?

November 20, 2023

This essay is the work of a dumb dinobaby. No smart software required.

A large number of write ups about Microsoft and its response to the OpenAI management move populate my inbox this morning (Monday, November 20, 2023).

To give you a sense of the number of poohbahs, mavens, and “real” journalists covering Microsoft’s hiring of Sam (AI-Man) Altman, I offer this screen shot of Techmeme.com taken at 11:00 am US Eastern time:

A single screenshot cannot do justice to the digital bloviating on this subject as well as related matters.

I did a quick scan because I simply don’t have the time at age 79 to read every item in this single headline service. Therefore, I admit that others may have thought about the impact of the Steve Jobs-like termination, the revolt of some AI wizards, and Microsoft’s creating a new “company” and hiring Sam AI-Man and a pride of his cohorts in the span of 72 hours (give or take time for biobreaks).

In this short essay, I want to hypothesize about how the news has been received by that merry band of online advertising professionals.

To begin, I want to suggest that the turmoil about who is on first at OpenAI sent a low voltage signal through the collective body of the Google. Frisson resulted. Uncertainty and opportunity appeared together like the beloved Scylla and Charybdis, the old pals of Ulysses. The Google found its right and left Brainiac hemispheres considering that OpenAI would experience a grave setback, thus clearing a path for Googzilla alone. Then one of the Brainiac hemispheres reconsidered and perceived a grave threat from the split. In short, the Google tipped into its zone of uncertainty.

A group of online advertising experts meet to consider the news that Microsoft has hired Sam Altman. The group looks unhappy. Uncertainty is an unpleasant factor in some business decisions. Thanks Microsoft Copilot, you captured the spirit of how some Silicon Valley wizards are reacting to the OpenAI turmoil because Microsoft used the OpenAI termination of Sam Altman as a way to gain the upper hand in the cloud and enterprise app AI sector.

Then the matter appeared to shift back to the pre-termination announcement. The co-founder of OpenAI gained more information about the number of OpenAI employees who were planning to quit or, even worse, start posting on Instagram, WhatsApp, and TikTok (X.com is no longer considered the go-to place by the in crowd).

The most interesting development was not that Sam AI-Man would return to the welcoming arms of OpenAI. No, Sam AI-Man and another senior executive were going to hook up with the geniuses of Redmond. A new company would be formed with Sam AI-Man in charge.

As these actions unfolded, the Googlers sank under a heavy cloud of uncertainty. What if the Softies could use Google’s own open source methods, integrate rumored Microsoft-developed AI capabilities, and make good on Sam AI-Man’s vision of an AI application store?

The Googlers found themselves reading every “real news” item about the trajectory of Sam AI-Man and Microsoft’s new AI unit. The uncertainty has morphed into another January 2023 Davos moment. Here’s my take as of 2:30 pm US Eastern, November 20, 2023:

- The Google faces a significant threat when it comes to enterprise AI apps. Microsoft has a lock on law firms, the government, and a number of industry sectors. Google has a presence, but when it comes to go-to apps, Microsoft is the Big Dog. More and better AI raises the specter of Microsoft putting an effective laser defense behind its existing enterprise moat.

- Microsoft can push its AI functionality as the Azure difference. Furthermore, whether Google or Amazon for that matter assert their cloud AI is better, Microsoft can argue, “We’re better because we have Sam AI-Man.” That is a compelling argument for government and enterprise customers who cannot imagine work without Excel and PowerPoint. Put more AI in those apps, and existing customers will resist blandishments from other cloud providers.

- Google now faces an interesting problem: Its own open source code could be converted into a death ray, enhanced by Sam AI-Man, and directed at the Google. The irony of Googzilla having its left claw vaporized by its own technology is going to be more painful than Satya Nadella rolling out another Davos “we’re doing AI” announcement.

Net net: The OpenAI machinations are interesting to many companies. To the Google, the OpenAI event and the Microsoft response are like an unsuspecting person getting zapped by Nikola Tesla’s coil. Google’s mastery of high school science club management techniques will now dig into the heart of its DeepMind.

Stephen E Arnold, November 20, 2023

OpenAI: Permanent CEO Needed

November 17, 2023

This essay is the work of a dumb dinobaby. No smart software required.

My rather lame newsreader spit out an “urgent alert” for me. Like the old teletype terminal: Ding, ding, ding, and a bunch of asterisks.

Surprise. Sam AI-Man allegedly has been given the opportunity to find his future elsewhere. Let me translate blue chip consultant speak for you. The “find your future elsewhere” phrase means you have been fired, RIFed, terminated with extreme prejudice, or “there’s the door. Use it now.” The particular connotative spin depends on the person issuing the formal statement.

“Keep in mind that we will call you,” says the senior member of the Board of Directors. The head of the human resources committee says, “Remember. We don’t provide a reference. Why not try the Google AI system?” Thank you, MSFT Copilot. You must have been trained on content about Mr. Ballmer’s departure.

“OpenAI Fires Co-Founder and CEO Sam Altman for Lying to Company Board” states as rock solid basaltic truth:

OpenAI CEO and co-founder Sam Altman was fired for lying to the board of his company.

The good news is that a succession option, of sorts, is in place. Accordingly, OpenAI’s chief technical officer has become the “interim CEO.” I like the “interim.” That’s solid.

For the moment, let’s assume the RIF statement is true. Furthermore, on this rainy Saturday in rural Kentucky, I shall speculate about the reasons for this announcement. Here we go:

- The problem is money, the lack thereof, or the impossibility of controlling the costs of the OpenAI system. Perhaps Sam AI-Man said, “Money is no problem.” The Board did not agree. Money is the problem.

- The lovey dovey relationship with the Microsofties has hit a rough patch. MSFT’s noises have been faint and now may become louder about AI chips, options, and innovations. Will these Microsoft bleats become more shrill as the ageing giant feels pain as it tries to make marketing hyperbole a reality? Let’s ask the Copilot, shall we?

- The Board has realized that the hyperbole has exceeded OpenAI’s technical ability to solve such problems as made up data (hallucinations), the resources to cope with the looming legal storm clouds related to unlicensed use of some content (the Copyright Shield “promise”), fixing up the baked in bias of the system, and / or OpenAI ChatGPT’s vulnerability to nifty prompt engineering to override alleged “guardrails”.

What’s next?

My answer is, “Uncertainty.” Cue the Ray Charles’ hit with the lyric “Hit the road, Jack. Don’t you come back no more, no more, no more, no more.” (I did not steal this song; I found it via Google on the Google YouTube. Honest.) I admit I did hear the tune playing in my head when I read the Guardian story.

Stephen E Arnold, November 17, 2023

How Google Works: Think about Making Sausage in 4K on a Big Screen with Dolby Sound

November 16, 2023

This essay is the work of a dumb, dinobaby humanoid. No smart software required.

I love essays which provide a public glimpse of the way Google operates. An interesting insider description of the machinations of Googzilla’s lair appears in “What I Learned Getting Acquired by Google.” I am going to skip the “wow, the Google is great,” and focus on the juicy bits.

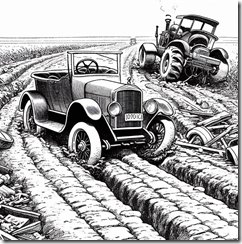

Driving innovation down Google’s Information Highway requires nerves of steel and the patience of Job. A good sense of humor, many brain cells, and a keen desire to make the techno-feudal system dominate are helpful as well. Thanks, Microsoft Bing. It only took four tries to get an illustration of vehicles without parts of each chopped off.

Here are the article’s “revelations.” It is almost like sitting in the Google cafeteria and listening to Tony Bennett croon. Alas, those days are gone, but the “best” parts of Google persist if the write up is on the money.

Let me highlight a handful of comments I found interesting and almost amusing:

- Google, according to the author, is “an ever shifting web of goals and efforts.” I think this means going in many directions at once. Chaos, not logic, drives the sports car down the Information Highway.

- Google has employees who want “to ship great work, but often couldn’t.” Wow, the Googley management method wastes resources and opportunities due to the Googley outfit’s penchant for being Googley. Yeah, Googley because lousy stuff is one output, not excellence. Isn’t this regressive innovation?

- There are lots of managers or what the author calls “top heavy.” But those at the top are well paid, so what’s the incentive to slim down? Answer: No reason.

- Google is like a teen with a credit card and no way to pay the bill. The debt just grows. That’s Google except it is racking up technical debt and process debt. That’s a one-two punch for sure.

- To win at Google, one must know which game to play, what the rules of that particular game are, and then have the Machiavellian qualities to win the darned game. What about caring for the users? What? The users! Get real.

- Google screws up its acquisitions. Of course. Any company Google buys is populated with people not smart enough to work at Google in the first place. “Real” Googlers can fix any acquisition. The technique was perfected years ago with Dodgeball. Hey, remember that?

Please, read the original essay. The illustration shows a very old vehicle trying to work its way down an information highway choked with mud, blocked by farm equipment, and located in an isolated fairy land. Yep, that’s the Google. What happens if the massive flows of money are reduced? Yikes!

Stephen E Arnold, November 16, 2023